Kubernetes Engine

- 1: Overview

- 1.1: Monitoring Metrics

- 1.2: ServiceWatch Metrics

- 2: How-to guides

- 2.1: Managing Nodes

- 2.2: Managing Namespaces

- 2.3: Manage Workloads

- 2.4: Manage services and ingresses

- 2.5: Managing Storage

- 2.6: Configuration Management

- 2.7: Manage Permissions

- 3: Kubernetes Engine Usage Guide

- 3.1: Access Cluster

- 3.2: Authentication and Authorization

- 3.3: Using type LoadBalancer service

- 3.4: Usage Considerations

- 3.5: Version information

- 4: API Reference

- 5: CLI Reference

- 6: Release Note

1 - Overview

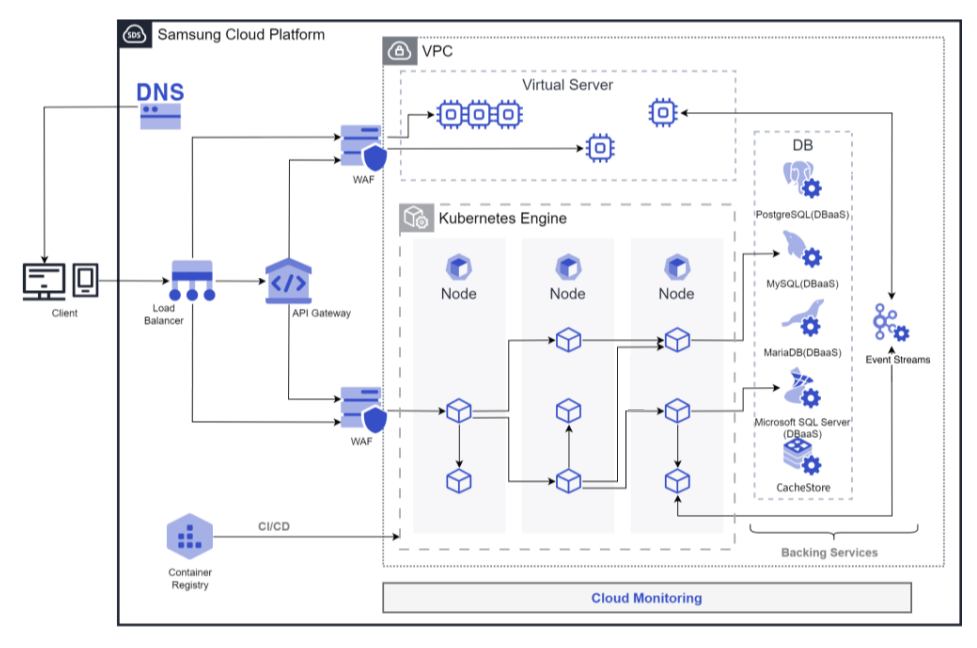

Service Overview

Kubernetes Engine is a service that provides lightweight virtual computing, containers, and a Kubernetes cluster to manage them. Because the service installs, operates, and maintains the Kubernetes Control Plane, users can use a Kubernetes environment without complex preparation.

Features

Standard Kubernetes Environment Setup: You can use a standard Kubernetes environment without additional configuration through the built-in Kubernetes Control Plane. It is compatible with applications in other standard Kubernetes environments, allowing you to use standard Kubernetes applications without modifying code.

Easy Kubernetes Deployment: The service provides secure communication between worker nodes and the managed control plane, and quickly provisions worker nodes so that users can focus on building applications on the provided container environment.

Convenient Kubernetes Management: For enterprise environments, we provide various management features to conveniently use the created Kubernetes clusters, including cluster information lookup and management via a dashboard, namespace management, and workload management functions.

Service Diagram

Provided features

Kubernetes Engine provides the following features.

- Cluster Management: You can create and manage clusters to use the Kubernetes Engine service. After creating a cluster, you can add services needed for operation such as nodes, namespaces, and workloads.

- Node Management: A node is a set of machines that run containerized applications. Every cluster must have at least one worker node to deploy applications. Nodes can be used by defining node pools. Nodes belonging to a node pool must have the same server type, size, and OS image, and creating multiple node pools enables flexible deployment strategies.

- Namespace Management: A namespace is a logical partition within a Kubernetes cluster and is used to specify access permissions or resource usage limits per namespace.

- Workload Management: A workload is an application running on Kubernetes Engine. After creating a namespace, you can add or delete workloads. Workloads are created and managed per item such as Deployment, Pod, StatefulSet, DaemonSet, Job, and CronJob.

- Service and Ingress Management: A service is an abstraction that exposes applications running in a set of pods as a network service, and an ingress is used to expose HTTP and HTTPS paths from outside the cluster to inside the cluster. After creating a namespace, you can create or delete services, endpoints, ingresses, and ingress classes.

- Storage Management: You can create and manage the storage to be used when using Kubernetes Engine. Storage is created and managed per PVC, PV, and StorageClass items.

- Configuration Management: When you need to manage values that change inside containers across multiple environments such as Dev/Prod, creating separate images to handle them via environment variables is inconvenient and wasteful. In Kubernetes, you can manage environment variables or configuration settings as variables that can be changed externally and injected when a Pod is created; at that point you can use ConfigMaps and Secrets.

- Permission Management: When multiple users access a Kubernetes cluster, you can assign permissions per specific API or namespace to define the access scope. By applying Kubernetes’ role-based access control (RBAC) feature, you can set permissions for clusters or namespaces. You can create and manage ClusterRoles, ClusterRoleBindings, Roles, and RoleBindings.
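As an illustration of the Configuration Management item above, the following minimal sketch builds a ConfigMap and a Pod that reads it as environment variables. The resource names, namespace, and image are hypothetical; only the standard Kubernetes fields (`apiVersion`, `kind`, `data`, `envFrom`, `configMapRef`) are taken from upstream Kubernetes.

```python
import json


def config_map(name: str, namespace: str, data: dict[str, str]) -> dict:
    """Build a ConfigMap manifest holding environment-specific settings."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name, "namespace": namespace},
        "data": dict(data),
    }


def pod_with_config(name: str, namespace: str, image: str, config_map_name: str) -> dict:
    """Build a Pod manifest that injects every ConfigMap key as an environment variable."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # The same image can run in Dev and Prod; only the ConfigMap differs.
                    "envFrom": [{"configMapRef": {"name": config_map_name}}],
                }
            ]
        },
    }


if __name__ == "__main__":
    # Hypothetical names and values, for illustration only.
    manifests = [
        config_map("app-config", "dev", {"LOG_LEVEL": "debug"}),
        pod_with_config("app", "dev", "example.com/app:1.0", "app-config"),
    ]
    for manifest in manifests:
        print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`, since kubectl accepts JSON manifests as well as YAML.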

Component

control plane

The Control Plane is the component that serves as the master node in the Kubernetes Engine service. The master node is the cluster’s management node, responsible for managing the other nodes in the cluster: it assigns work to the cluster, monitors node status, and handles data communication between nodes. A cluster is the basic creation unit of the Kubernetes Engine service and is used to manage the node pools, objects, controllers, and other resources that belong to it. Users configure the cluster name, control plane, network, and File Storage, and then create node pools within the cluster for use.

The cluster name creation rules are as follows.

- It must start with a letter and can be set using letters, numbers, and the special character (-), within 3 to 30 characters.

- It must not duplicate an already existing cluster name.
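The naming rules above can be checked before submitting a request. The following is a minimal sketch, assuming that "letters" means ASCII English letters and that the list of existing names is supplied by the caller; the function name is hypothetical and not part of any Samsung Cloud Platform API.

```python
import re
from collections.abc import Iterable

# Assumption: a letter first, then letters, digits, or "-", 3 to 30 characters in total.
_CLUSTER_NAME = re.compile(r"[A-Za-z][A-Za-z0-9-]{2,29}")


def is_valid_cluster_name(name: str, existing: Iterable[str] = ()) -> bool:
    """Return True if the name follows the documented rules and is not already in use."""
    return bool(_CLUSTER_NAME.fullmatch(name)) and name not in existing


if __name__ == "__main__":
    for candidate in ("dev-cluster-01", "1cluster", "ab"):
        print(candidate, is_valid_cluster_name(candidate))
```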

worker node

A worker node is a compute node in the cluster that performs tasks. It receives task assignments from the cluster’s master node, executes them, and reports the results back to the master node. All nodes created within a node pool and namespace serve as worker nodes.

The rules for creating a node pool, which is a collection of worker nodes, are as follows.

- A node pool must contain at least one node for the application deployment to be possible.

- A maximum of 100 nodes can be created within a node pool.

- Because the maximum number of nodes is 100, you can distribute up to 100 nodes freely across node pools. For example, 100 node pools can have 1 node each, and 50 node pools can have 2 nodes each.

- It is possible to configure block storage attached to a node pool.

- You can configure the server type, size, and OS image for nodes in a node pool, and they must all be identical.

- Through the Auto-Scaling service, you can configure automatic scaling and shrinking of node pools according to the requirements of the deployed application.
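The node count rules above can be sketched as a small planning check. This sketch assumes the 100-node limit applies to the total across node pools, as the example in the list suggests; the function and pool names are hypothetical.

```python
MAX_NODES = 100  # documented maximum number of nodes


def check_node_pools(pool_sizes: dict[str, int]) -> list[str]:
    """Return a list of rule violations for a planned node pool layout."""
    problems = []
    for pool, count in pool_sizes.items():
        # Each node pool needs at least one node to deploy applications.
        if count < 1:
            problems.append(f"{pool}: needs at least 1 node")
    total = sum(pool_sizes.values())
    # Assumption: the limit is read as a total across all node pools.
    if total > MAX_NODES:
        problems.append(f"total of {total} nodes exceeds {MAX_NODES}")
    return problems


if __name__ == "__main__":
    print(check_node_pools({"general": 50, "gpu": 50}))
    print(check_node_pools({"general": 60, "gpu": 41}))
```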

Prerequisite Services

This is a list of services that must be pre-configured before creating the service. Please refer to the guide provided for each service for details and prepare in advance.

| Service Category | Service | Detailed description |
|---|---|---|
| Networking | VPC | A service that provides an isolated virtual network in a cloud environment |
| Networking | Security Group | A virtual firewall that controls server traffic |
| Storage | File Storage | Storage that allows multiple clients to share files over the network |

1.1 - Monitoring Metrics

According to Samsung Cloud Platform’s policy, the Cloud Monitoring service is scheduled to be discontinued in September 2026.

Accordingly, after the September 2026 release, resource monitoring of the Samsung Cloud Platform via Cloud Monitoring will no longer be possible.

As the replacement, ServiceWatch, released in October 2025, lets you continue monitoring resources.

ServiceWatch provides more modern and powerful features, replacing Cloud Monitoring to deliver a seamless monitoring environment.

Detailed information about ServiceWatch is available in the ServiceWatch Overview.

Kubernetes Engine monitoring metrics

The table below shows the monitoring metrics of Kubernetes Engine that can be viewed through Cloud Monitoring. For detailed usage of Cloud Monitoring, refer to the Cloud Monitoring guide.

| Performance item | Detailed description | Unit |

|---|---|---|

| Cluster Namespaces [Active] | Number of namespaces in active state | cnt |

| Cluster Namespaces [Total] | Total number of namespaces in the cluster | cnt |

| Cluster Nodes [Ready] | Number of nodes in READY state | cnt |

| Cluster Nodes [Total] | Total number of nodes in the cluster | cnt |

| Cluster Pods [Failed] | Number of failed-state pods in the cluster | cnt |

| Cluster Pods [Pending] | Number of pending pods in the cluster | cnt |

| Cluster Pods [Running] | Number of pods in running state within the cluster | cnt |

| Cluster Pods [Succeeded] | Number of succeeded pods in the cluster | cnt |

| Cluster Pods [Unknown] | Number of pods in unknown state within the cluster | cnt |

| Instance Status | Cluster status | status |

| Namespace Pods [Failed] | Number of failed-state pods in a namespace | cnt |

| Namespace Pods [Pending] | Number of pending pods in a namespace | cnt |

| Namespace Pods [Running] | Number of running pods in a namespace | cnt |

| Namespace Pods [Succeeded] | Number of succeeded-state pods in a namespace | cnt |

| Namespace Pods [Unknown] | Number of pods in unknown state within a namespace | cnt |

| Namespace GPU Clock Frequency | SM clock frequency in the Namespace | MHz |

| Namespace GPU Memory Usage | Memory utilization in the Namespace | % |

| Namespace GPU Usage | GPU utilization in the Namespace | % |

| Node CPU Size [Allocatable] | Node CPU allocatable | cnt |

| Node CPU Size [Capacity] | CPU capacity in the node | cnt |

| Node CPU Usage | CPU usage per node | % |

| Node CPU Usage [Request] | CPU request_ratio within node | % |

| Node CPU Used | CPU utilization within the node | status |

| Node Filesystem Usage | Node FS utilization | % |

| Node Memory Size [Allocatable] | memory allocatable within the node | bytes |

| Node Memory Size [Capacity] | Memory capacity of the node | bytes |

| Node Memory Usage | Node memory utilization | % |

| Node Memory Usage [Request] | memory request_ratio within node | % |

| Node Memory Workingset | memory working set within the node | bytes |

| Node Network In Bytes | Node network rx bytes | bytes |

| Node Network Out Bytes | Node network tx bytes | bytes |

| Node Network Total Bytes | Node network total bytes | bytes |

| Node Pods [Failed] | Number of pods in failed state within the node | cnt |

| Node Pods [Pending] | Number of pending pods in the node | cnt |

| Node Pods [Running] | Number of running pods per node | cnt |

| Node Pods [Succeeded] | Number of succeeded pods in the node | cnt |

| Node Pods [Unknown] | Number of unknown‑state pods in the node | cnt |

| Pod CPU Usage [Limit] | CPU usage_limit_ratio in the pod | % |

| Pod CPU Usage [Request] | CPU request_ratio in the pod | % |

| Pod CPU Usage | CPU usage within the pod | % |

| Pod GPU Clock Frequency | SM clock frequency in the Pod | MHz |

| Pod GPU Memory Usage | Memory utilization within the Pod | % |

| Pod GPU Usage | GPU utilization within the Pod | % |

| Pod Memory Usage [Limit] | memory usage_limit_ratio in pod | % |

| Pod Memory Usage [Request] | memory request_ratio in pod | % |

| Pod Memory Usage | Memory usage within pod | bytes |

| Pod Network In Bytes | network rx bytes in pod | bytes |

| Pod Network Out Bytes | network tx bytes in pod | bytes |

| Pod Network Total Bytes | Network total bytes in pod | bytes |

| Pod Restart Containers | container restart count in pod | cnt |

| Workload Pods [Running] | - | cnt |

1.2 - ServiceWatch Metrics

Kubernetes Engine sends metrics to ServiceWatch. The metrics provided by default monitoring are data collected at a 1‑minute interval.

Basic Metrics

The following are the basic metrics for the Kubernetes Engine namespace.

The metrics whose names are displayed in bold below are the metrics selected as key metrics among the default metrics provided by Kubernetes Engine. Key metrics are used to configure service dashboards that are automatically generated for each service in ServiceWatch.

For each metric, the guide indicates which statistics are meaningful when viewing it; among these, the statistics shown in bold are the primary statistics. In the service dashboard, you can view key metrics using these primary statistics.

| Metric name | Detailed description | Unit | Meaningful statistics |
|---|---|---|---|
| cluster_up | Cluster up | Count | |
| cluster_node_count | Cluster node count | Count | |
| cluster_failed_node_count | Number of failed nodes in the cluster | Count | |
| cluster_namespace_phase_count | Number of namespaces in the cluster by phase | Count | |
| cluster_pod_phase_count | Number of pods in the cluster by phase | Count | |
| node_cpu_allocatable | Node CPU allocatable amount | - | |
| node_cpu_capacity | Node CPU capacity | - | |
| node_cpu_usage | Node CPU usage | - | |
| node_cpu_utilization | Node CPU utilization | - | |
| node_memory_allocatable | Node memory allocatable amount | Bytes | |
| node_memory_capacity | Node memory capacity | Bytes | |
| node_memory_usage | Node memory usage | Bytes | |
| node_memory_utilization | Node memory utilization | - | |
| node_network_rx_bytes | Node network received bytes | Bytes/Second | |
| node_network_tx_bytes | Node network transmitted bytes | Bytes/Second | |
| node_network_total_bytes | Node network total bytes | Bytes/Second | |
| node_number_of_running_pods | Number of pods running on a node | Count | |
| namespace_number_of_running_pods | Number of running pods in a namespace | Count | |
| namespace_deployment_pod_count | Namespace Deployment pod count | Count | |
| namespace_statefulset_pod_count | Namespace StatefulSet pod count | Count | |
| namespace_daemonset_pod_count | Namespace DaemonSet pod count | Count | |
| namespace_job_active_count | Number of active jobs in a namespace | Count | |
| namespace_cronjob_active_count | Number of active cron jobs in a namespace | Count | |
| pod_cpu_usage | Pod CPU usage | - | |
| pod_memory_usage | Pod memory usage | Bytes | |
| pod_network_rx_bytes | Pod network received bytes | Bytes/Second | |
| pod_network_tx_bytes | Pod network transmitted bytes | Bytes/Second | |
| pod_network_total_bytes | Pod network total bytes | Count | |
| container_cpu_usage | Container CPU usage | - | |
| container_cpu_limit | Container CPU limit | - | |
| container_cpu_utilization | Container CPU utilization | - | |
| container_memory_usage | Container memory usage | Bytes | |
| container_memory_limit | Container memory limit | Bytes | |
| container_memory_utilization | Container memory utilization | - | |
| node_gpu_count | Number of node GPUs | Count | |
| gpu_temp | GPU temperature | - | |
| gpu_power_usage | GPU power consumption | - | |
| gpu_util | GPU utilization | Percent | |
| gpu_sm_clock | GPU SM clock | - | |
| gpu_fb_used | GPU FB usage | Megabytes | |
| gpu_tensor_active | GPU Tensor utilization | - | |
| pod_gpu_util | Pod GPU utilization | Percent | |
| pod_gpu_tensor_active | Pod GPU Tensor utilization | - | |

2 - How-to guides

Users can create a service by entering the required information for the Kubernetes Engine and selecting detailed options through the Samsung Cloud Platform Console.

Create Kubernetes Engine

You can create and use the Kubernetes Engine service in the Samsung Cloud Platform Console.

You can create and manage clusters to use the Kubernetes Engine service. After creating the cluster, you can add services needed for operation such as nodes, namespaces, and workloads.

In the network settings of Kubernetes Engine, you can select up to 4 Security Groups.

- If you manually add a Security Group to a node created by Kubernetes Engine on the Virtual Server service page, it may be automatically removed because it is not managed by Kubernetes Engine.

- For nodes, be sure to add and manage the Security Group in the network settings of the Kubernetes Engine service.

Managed Security Group is automatically managed in Kubernetes Engine.

- Do not use it for any user-defined purpose because if you delete a Managed Security Group or add/delete rules, it will automatically be restored.

Create a cluster

You can create and use a Kubernetes Engine cluster service in the Samsung Cloud Platform Console.

To create a Kubernetes Engine cluster, follow these steps.

1. Click the All Services > Container > Kubernetes Engine menu. Go to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Create Cluster button. Navigate to the Create Cluster page.

3. On the Create Cluster page, enter the information needed to create the service, and select detailed options.

   - In the Service Information Input area, enter or select the required information.

   | Category | Required | Detailed description |
   |---|---|---|
   | Cluster name | Required | Cluster name. It must start with an English letter and be entered using English letters, numbers, and the special character (-), within 3 to 30 characters. |
   | Control plane settings > Kubernetes version | Required | Select the Kubernetes version. |
   | Control plane settings > Private endpoint allowed resources | Optional | After selecting Enable, click Add to select the resources allowed to access the private endpoint. Only resources in the same account and the same region can be registered. Regardless of whether Enable is selected, the nodes of the cluster can access the private endpoint. |
   | Control plane settings > Public endpoint | Optional | After selecting Use, enter the allowed IP range for public endpoint access in CIDR format, such as 192.168.99.0/24. Set the access control IP range to allow external access to the Kubernetes API server endpoint. If external access is not required, you can disable it to reduce security threats. |
   | ServiceWatch log collection | Optional | Set whether to enable log collection so that cluster logs can be viewed in ServiceWatch. When enabled, 5 GB of log storage is provided free across all services in the Account, and charges apply based on storage volume beyond 5 GB. If you need to view cluster logs, it is recommended to enable the ServiceWatch log collection feature. |
   | Cloud Monitoring log collection | Optional | Set whether to enable log collection so that cluster logs can be viewed in Cloud Monitoring. When enabled, 1 GB of log storage is provided free across all services in the Account, and data exceeding 1 GB is deleted sequentially. |
   | Network settings | Required | Network connection settings for the node pool. VPC name: select a pre-created VPC. Subnet name: select a standard Subnet to use from the subnets of the selected VPC. Security Group: click the Select button, then choose a Security Group in the Select Security Group popup. Up to 4 Security Groups can be selected. |
   | StorageClass setting | Required | Select the storage volume to use in the cluster. NFS Volume: after clicking the Search button, select the file storage in the File Storage Selection popup. The default file storage supports only the NFS format. |

   Table. Kubernetes Engine service information input items

   - In the Additional Information Input area, enter or select the required information.

   | Category | Required | Detailed description |
   |---|---|---|
   | Tag | Optional | Add tags. Up to 50 tags can be added per resource. After clicking the Add Tag button, enter or select the Key and Value. |

   Table. Kubernetes Engine additional information input fields

4. In the Summary panel, check the detailed information and estimated charges, and click the Create button.

   - Once creation is complete, verify the created resources on the Cluster List page.
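The allowed IP range for the public endpoint is entered in CIDR notation. The following minimal sketch uses Python's standard `ipaddress` module to check a range before entering it and to test whether a client address would fall inside it. It assumes IPv4 ranges; whether the Console itself rejects a range with host bits set is not stated in this guide, so the strict check here is only a precaution.

```python
import ipaddress


def parse_allowed_range(cidr: str) -> ipaddress.IPv4Network:
    """Parse an IPv4 CIDR range; raises ValueError if malformed or if host bits are set."""
    return ipaddress.IPv4Network(cidr, strict=True)


def is_allowed(client_ip: str, allowed_ranges: list[str]) -> bool:
    """Return True if client_ip falls inside at least one allowed range."""
    ip = ipaddress.IPv4Address(client_ip)
    return any(ip in parse_allowed_range(cidr) for cidr in allowed_ranges)


if __name__ == "__main__":
    ranges = ["192.168.99.0/24"]
    print(is_allowed("192.168.99.10", ranges))
    print(is_allowed("192.168.100.1", ranges))
```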

View cluster details

You can view the full list of Kubernetes Engine resources and view or edit their detailed information. The Cluster Details page consists of the Details, Node Pools, Tags, and Job History tabs.

To view detailed cluster information, follow these steps.

1. Click the All Services > Container > Kubernetes Engine menu. Navigate to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Cluster menu. Navigate to the Cluster List page.

3. On the Cluster List page, click the resource (cluster) whose detailed information you want to view. Navigate to the Cluster Details page.

   - The Cluster Details page displays the cluster’s status information and detailed information, and it consists of the Details, Node Pools, Tags, and Job History tabs.

   | Category | Detailed description |
   |---|---|
   | Cluster status | Kubernetes Engine cluster status. Creating: creation in progress. Running: creation complete / operational. Updating: version upgrade in progress. Deleting: deletion in progress. Error: error occurred. |
   | Service cancellation | Button to delete a Kubernetes Engine cluster. To delete a Kubernetes Engine service, you must first delete all node pools added to the cluster. Deleting the service may immediately terminate running services, so consider the impact of service interruption before deleting. |

   Table. Cluster status information and additional features

Detailed Information

On the Cluster List page, you can view detailed information of the selected resource and edit the information if needed.

| Category | Detailed description |
|---|---|
| Service | Service name |
| Resource type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource name | Resource name |
| Resource ID | Unique resource ID in the service |
| Creator | User who created the service |
| Creation date and time | Date and time the service was created |
| Modifier | User who edited the service information |
| Modification date and time | Date and time the service information was modified |
| Cluster name | Cluster name |
| LLM Endpoint | LLM Endpoint information |
| Control plane settings | Check the assigned Kubernetes control plane version and the allowed access scope |
| Network settings | View the VPC, Subnet, and Security Group information configured when creating the Kubernetes Engine cluster |
| StorageClass settings | Click the NFS volume name to view detailed information on the storage details page |

- The version of Kubernetes Engine is expressed as [major].[minor].[patch], and you can upgrade only one minor version at a time.

  - Example: version 1.11.x > 1.13.x (not allowed) / version 1.11.x > 1.12.x (allowed)

- If you are using a Kubernetes version that has reached end of support or is scheduled to reach end of support, a red exclamation mark appears to the right of the version. If this icon is displayed, we recommend upgrading the Kubernetes version.

Node Pool

You can view, add, modify, or delete cluster node pool information. For detailed information on using node pools, refer to Managing Nodes.

| Category | Detailed description |
|---|---|
| Add node pool | Add a node pool to the current cluster |
| Node pool list | Check the list of node pools created in the current cluster |
| More menu | Provides node pool management functionality |

If a red exclamation‑mark icon appears on the node pool version, the node pool’s server OS is not supported in newer Kubernetes versions. The node pool server OS must be upgraded to ensure stable service.

- To upgrade the node pool version, delete the existing node pool and then create a new node pool with a higher server OS version.

Tag

On the Cluster List page, you can view the tag information of the selected resource, and you can add, modify, or delete it.

| Category | Detailed description |
|---|---|
| Tag list | Tag list |

Job History

You can view the operation history of the selected resource on the Cluster List page.

| Category | Detailed description |
|---|---|
| Job history list | Resource change history |

Managing Cluster Resources

To manage cluster resources, we provide cluster version upgrades, kubeconfig downloads, and control‑plane logging modification features.

Even if the user has no create/delete permissions, Kubernetes Engine creates and deletes Security Groups and Virtual Servers for lifecycle management purposes. For these resources, the creator/modifier is recorded as System.

Cluster version upgrade

If there is a version available for upgrade from the cluster’s Kubernetes version, you can perform the upgrade on the Cluster Details page.

- Check the following items before upgrading the cluster.

  - Check that the cluster’s status is Running.

  - Check that the status of all node pools in the cluster is Running or Deleting.

  - Verify that all node pool versions in the cluster match the cluster version.

  - Check that automatic scaling (up/down) and the node auto-recovery feature are disabled for all node pools in the cluster.

- After upgrading the cluster, proceed with the node pool upgrade. The control plane and node pool upgrades of a Kubernetes cluster are performed separately.

- You can upgrade only one minor version at a time.

- Example: version 1.12.x > 1.13.x (possible) / version 1.11.x > 1.13.x (not possible)

- After an upgrade, you cannot perform a downgrade or rollback, so to use a previous version again you must create a new cluster.

- User systems that are using an end‑of‑life Kubernetes version may become vulnerable, so upgrade the control plane and node pool versions directly from the Samsung Cloud Platform Console.

- There are no additional costs associated with the upgrade.

- Please conduct compatibility testing of the upgrade version in advance to ensure stable system operation for users.
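The pre-upgrade checks and the one-minor-version rule above can be expressed as a small preflight routine. This is only a sketch: the `Cluster` and `NodePool` classes are hypothetical stand-ins for information you read from the Console, not an actual API, and patch-level upgrades within the same minor version are not covered.

```python
from dataclasses import dataclass, field


@dataclass
class NodePool:
    name: str
    status: str
    version: str
    auto_scaling: bool = False
    auto_recovery: bool = False


@dataclass
class Cluster:
    name: str
    status: str
    version: str
    node_pools: list[NodePool] = field(default_factory=list)


def minor_of(version: str) -> tuple[int, int]:
    """Return (major, minor) from a [major].[minor].[patch] version string."""
    major, minor = version.split(".")[:2]
    return int(major), int(minor)


def is_single_minor_step(current: str, target: str) -> bool:
    """True only when target is exactly one minor version above current."""
    cur, tgt = minor_of(current), minor_of(target)
    return tgt[0] == cur[0] and tgt[1] == cur[1] + 1


def preflight(cluster: Cluster, target: str) -> list[str]:
    """Return the documented checks that fail; an empty list means ready to upgrade."""
    problems = []
    if cluster.status != "Running":
        problems.append("cluster status is not Running")
    for pool in cluster.node_pools:
        if pool.status not in ("Running", "Deleting"):
            problems.append(f"{pool.name}: status is {pool.status}")
        if pool.version != cluster.version:
            problems.append(f"{pool.name}: version differs from the cluster version")
        if pool.auto_scaling or pool.auto_recovery:
            problems.append(f"{pool.name}: disable auto scaling and auto recovery")
    if not is_single_minor_step(cluster.version, target):
        problems.append(f"{cluster.version} > {target} is not a single minor step")
    return problems
```

For example, `is_single_minor_step("1.12.x", "1.13.x")` holds, while `is_single_minor_step("1.11.x", "1.13.x")` does not, matching the example in the list above.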

Pre-upgrade preparation for cluster version

When you upgrade the cluster version, you do not need to delete and recreate API objects. For migrated APIs, all existing API objects can be read and updated using the new API version. However, because older Kubernetes versions include APIs that have since been deprecated, you may be unable to read or modify existing objects, or create new ones, through those APIs. For system stability, we recommend migrating clients and manifests before upgrading.

Migrate the client and manifest using the following method.

- Download the latest version of the client (e.g., kubectl) and install it on the cluster, then modify the YAML to reference the new API.

- Or use a separate plugin (kubectl convert) to convert automatically. For detailed instructions, refer to the Kubernetes official documentation > Install and configure kubectl on Linux.

Upgrading Cluster and Node Pool Versions

To upgrade the cluster and node pool, follow these steps.

1. Click the All Services > Container > Kubernetes Engine menu. Go to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Cluster menu. Navigate to the Cluster List page.

3. On the Cluster List page, click the resource (cluster) whose version you want to upgrade. Navigate to the Cluster Details page.

4. On the Cluster Details page, click the Edit icon of the Kubernetes version. The Cluster version upgrade popup window opens.

5. Select the Kubernetes version to upgrade to, and click the Confirm button.

   - It may take a few minutes for the cluster upgrade to complete.

   - During the upgrade, the cluster status is shown as Updating, and when the upgrade is complete, it is shown as Running.

6. When the upgrade is complete, select the Node Pool tab. Navigate to the Node Pool page.

7. Click the More button of the node pool item, then click Node Pool Upgrade. The Node Pool Version Upgrade popup window opens.

8. After reviewing the message in the Node Pool Version Upgrade popup window, click the Confirm button.

   - It may take a few minutes for the node pool upgrade to complete.

   - While the upgrade is in progress, the node pool status is shown as Updating, and when the upgrade is complete, it is shown as Running.

Download kubeconfig

You can download the administrator/user kubeconfig settings for the cluster’s public and private endpoints as a yaml document.

To download the cluster’s kubeconfig configuration, follow these steps.

1. Click the All Services > Container > Kubernetes Engine menu. Go to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Cluster menu. Navigate to the Cluster List page.

3. On the Cluster List page, click the resource (cluster) whose kubeconfig you want to download. Navigate to the Cluster Details page.

4. On the Cluster Details page, click the Download admin kubeconfig or Download user kubeconfig button of the desired endpoint.

   - You can download the kubeconfig file in YAML format for each permission.
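Once downloaded, the kubeconfig file can be passed to kubectl through its `--kubeconfig` flag. The following minimal sketch wraps that call from Python. The file path is hypothetical, and running `list_nodes` assumes kubectl is installed and the chosen endpoint is reachable from your machine.

```python
import os
import subprocess


def kubectl_command(kubeconfig_path: str, *args: str) -> list[str]:
    """Build a kubectl invocation that uses the downloaded kubeconfig file."""
    return ["kubectl", "--kubeconfig", os.path.expanduser(kubeconfig_path), *args]


def list_nodes(kubeconfig_path: str) -> list[str]:
    """Run `kubectl get nodes -o name` and return the node names it prints."""
    result = subprocess.run(
        kubectl_command(kubeconfig_path, "get", "nodes", "-o", "name"),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if kubectl exits with an error
    )
    return result.stdout.split()


if __name__ == "__main__":
    # Hypothetical path to the file downloaded from the Cluster Details page.
    print(" ".join(kubectl_command("~/Downloads/kubeconfig.yaml", "get", "nodes")))
```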

Modify resources that allow private endpoint access

You can modify the resource settings that allow private endpoint access to the cluster.

1. Click the All Services > Container > Kubernetes Engine menu. Go to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Cluster menu. Navigate to the Cluster List page.

3. On the Cluster List page, click the resource (cluster) whose private endpoint access control you want to modify. Navigate to the Cluster Details page.

4. On the Cluster Details page, click the Edit icon for Private Endpoint Access Allowed Resources. The Private Endpoint Access Allowed Resource Edit popup window opens.

5. In the Private Endpoint Access Allowed Resource Edit popup, set whether to use Private Endpoint Access Allowed Resources and add the resources to allow, then click the Confirm button.

Modify public endpoint

You can change the public endpoint settings of the cluster.

1. Click the All Services > Container > Kubernetes Engine menu. Go to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Cluster menu. Navigate to the Cluster List page.

3. On the Cluster List page, click the resource (cluster) whose public endpoint access control you want to modify. Navigate to the Cluster Details page.

4. On the Cluster Details page, click the Edit icon of the Public Endpoint. The Public Endpoint Edit popup window opens.

5. In the Public Endpoint Edit popup, configure the usage setting and add the allowed IP address range, then click the Confirm button.

Modify control plane log collection settings

You can change the log collection settings of the cluster’s control plane. Detailed logs of the cluster can be viewed in the ServiceWatch service or the Cloud Monitoring service.

Even if you configure log collection in Cloud Monitoring, you can view the cluster logs.

- However, since the Cloud Monitoring log collection feature is scheduled for discontinuation, we recommend using ServiceWatch log collection.

To change the cluster’s control plane log collection settings, follow the steps below.

1. Click the All Services > Container > Kubernetes Engine menu. Go to the Service Home page of Kubernetes Engine.

2. On the Service Home page, click the Cluster menu. Navigate to the Cluster List page.

3. On the Cluster List page, click the resource (cluster) whose control plane logging you want to modify. Go to the Cluster Details page.

4. On the Cluster Details page, click the Edit icon of ServiceWatch log collection. The ServiceWatch log collection popup window opens.

   - The Cloud Monitoring log collection feature can also be configured in the same way.

5. In the ServiceWatch log collection popup, set whether to use ServiceWatch log collection, then click the Confirm button.

When log collection is enabled, you can view the cluster control plane’s Audit/Event logs in each service. Detailed logs can be viewed on the next page.

Modify Security Group

You can modify the cluster’s Security Group.

In the network settings of Kubernetes Engine, you can select up to 4 Security Groups.

- If you manually add a Security Group to a node created by Kubernetes Engine on the Virtual Server service page, it may be automatically removed because it is not managed by Kubernetes Engine.

- For nodes, be sure to add and manage the Security Group in the network settings of the Kubernetes Engine service.

The Managed Security Group is managed automatically by Kubernetes Engine.

- Do not use the Managed Security Group for any user-defined purpose: if you delete it or add or delete its rules, the changes are automatically reverted.

To modify the cluster’s Security Group, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, click the resource (cluster) whose Security Group you want to modify to go to the Cluster Details page.

- On the Cluster Details page, click the Edit icon of the Security Group. The Security Group Edit popup window opens.

- After selecting or deselecting the Security Group to modify, click the Confirm button.

Terminate Cluster

To terminate the cluster, follow the steps below.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, click the resource (cluster) you want to terminate to go to the Cluster Details page.

- On the Cluster Details page, click Cancel Service.

- After reviewing the content in the Service Termination popup window, click the Confirm button.

2.1 - Managing Nodes

A node is a machine that runs containerized applications. A cluster must have at least one node to deploy an application. Nodes are defined and used in node pools. Nodes belonging to a node pool must have the same server type, size, and OS image; you can establish flexible deployment strategies by creating multiple node pools.

After creating a Kubernetes Engine cluster, add a node pool and modify or delete it as needed.

- It is recommended not to use the OS firewall on Kubernetes Engine nodes that use Calico.

- The firewall settings of Samsung Cloud Platform are set to Inactive by default.

- As shown in the reference link below, it is recommended to set the firewall to a disabled state in environments that use Calico.

- When a node is designated as a Backup service target, it cannot be deleted, so the functions below are unavailable.

- Node pool reduction (including automatic scaling)

- Node pool upgrade

- Automatic node pool recovery

- Delete node pool

Add node pool

A node refers to a machine that runs containerized applications, and at least one node is required to deploy applications in a Kubernetes cluster. After the Kubernetes Engine cluster has been created, add a node pool from the details page.

- In Kubernetes Engine, you can define and use a node pool, which is a set of nodes. Since the nodes in a node pool use the same server type, size, and OS image, users can devise flexible deployment strategies by using multiple node pools.

In the Virtual Server menu, you can create a node pool using the user’s Custom Image. To create a node pool using a Custom Image, follow these steps.

- Create a Virtual Server that includes a Samsung Cloud Platform Kubernetes Engine image.

- Use the Virtual Server’s Create Image feature to proceed with image creation.

- Select the registered Custom Image and create a node pool.

- For more details, see Virtual Server > Create Image.

To add a node pool, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster to which you want to add a node pool to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the Add Node Pool button to go to the Add Cluster Node Pool page.

- On the Add Cluster Node Pool page, enter the information required to create a node pool and select detailed options.

- In the Service Information Input area, enter or select the required information.

| Category | Required | Detailed description |
| --- | --- | --- |
| Node pool name | Required | Node pool name. Must start with a lowercase English letter and use only lowercase English letters, numbers, and the special character (-), within 3 - 20 characters. Cannot end with the special character (-). |
| Node Pool > Server Type | Required | Virtual Server server type for the node. Standard: standard specifications commonly used. High Capacity: large-scale server specifications beyond Standard. GPU: GPU specifications available when securing resources for special requirements such as AI/ML. For detailed information about the server types offered by Virtual Server, refer to Virtual Server Server Type. |
| Node Pool > Server OS | Required | Virtual Server OS image for the node. Standard: RHEL 8.10, Ubuntu 22.04. Custom: custom image for Kubernetes created from the Virtual Server product (RHEL, Ubuntu). |
| Node Pool > Block Storage | Required | Block storage settings used by the node’s Virtual Server. SSD: high-performance general volume. HDD: general volume. SSD/HDD_KMS: additional encrypted volume that uses encryption keys from Samsung Cloud Platform KMS (Key Management System); encryption can be applied only at initial creation and cannot be changed after the service is created; performance degradation occurs when using the SSD_KMS disk type. SSD_Provisioned: enter detailed settings for the selected storage type; enter a value between 5,000 and 20,000 for the Max IOPS field and between 250 and 1,000 for the Max Throughput field; for a Custom Image with SSD_Provisioned, the predetermined values are auto-filled and the fields are disabled. Capacity is entered in Units, with a value between 13 and 125; since 1 Unit equals 8 GB, this creates 104 - 1,000 GB. |
| Node Pool > Server Group | Optional | Applies a Server Group pre-created in the Virtual Server service to the nodes. Click Use to enable Server Group usage, then select a Server Group. Supports Affinity or Anti-Affinity policies; the Partition policy is not supported. Cannot be modified after the node pool is created. The GPU server type cannot be selected. |
| Node pool auto scaling | Required | Automatically adjusts the number of nodes in a node pool. For configuration, refer to Auto-scaling node pools. |
| Number of nodes | Required | Number of nodes to create within the node pool. Enter a value in the range 1 - 100. |
| Automatic node recovery | Required | When an abnormal node is detected in the node pool, automatically deletes it and creates a new one. For configuration, refer to Automatically Restore Node Pool. |
| Keypair | Required | User authentication method used to connect to the node’s Virtual Server. New: create a new Keypair if one is required; refer to Create Keypair for how to create a new Keypair. Default login accounts by OS: Alma Linux: almalinux; RHEL: cloud-user; Rocky Linux: rocky; Ubuntu: ubuntu; Windows: sysadmin. |
| Label | Optional | Selectively schedules workloads onto nodes. Click the Add button to enter the label key and value. Refer to Setting node pool labels for configuration. |
| Taint | Optional | Prevents workloads from being scheduled onto nodes. Click the Add button to enter the taint effect, key, and value. Refer to Configure Node Pool Taint for the configuration method. |
| Advanced Settings | Optional | Settings for detailed areas such as pods and logs for the node. Click Use to choose whether to apply the advanced settings to the node pool you will create. Refer to Configure advanced node pool settings for the configuration method. |
| Connection resource | Optional | Configures File Storage and Object Storage resources for nodes at the node pool level. Click the Add button to select the File Storage and Object Storage resources to attach to the node pool you will create. Refer to Configure linked resources for node pool for the configuration method. |

Table. Input fields for Kubernetes Engine node pool service information

- In the Summary panel, verify the detailed information and estimated charges, then click the Create button.

- When creation is complete, check the created resources on the Cluster Details > Node Pool tab > Node Pool list page.

- When the notification popup opens, click the Confirm button.

Update Node Pool

If needed, modify the number of nodes in the node pool on the Kubernetes Engine details page.

To modify the number of nodes, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster whose node count you want to modify to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the name of the node pool you want to edit to go to the Node Pool Details page.

- On the Node Pool Details page, click the Edit icon to the right of Node Pool Information. The Edit Node Pool popup window opens.

- In the Edit Node Pool popup window, edit the node pool information, then click the Confirm button.

Upgrade Node Pool

If the Kubernetes version of the control plane and the version of the node pool differ, you can upgrade the node pool to synchronize the versions.

After upgrading the cluster, proceed with the node pool upgrade. The control plane and node pool upgrades of a Kubernetes cluster are performed separately.

- When you perform a node pool upgrade, a rolling update is carried out on the nodes belonging to the node pool. During this process, a brief service interruption may occur, which is normal for a rolling update and will automatically recover after a short period.

- The server OS version may vary depending on the Kubernetes version of the node pool.

To upgrade the node pool, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to perform a node pool version upgrade to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click More > Node Pool Upgrade at the far right end of the node pool row. The Node Pool Version Upgrade popup window opens.

- You can upgrade the node pool only when the node’s status is Running.

- After reviewing the information in the Node Pool Version Upgrade popup window, click the Confirm button.

Auto-scaling node pools

Node pool auto-scaling is a feature that automatically adjusts the number of nodes in a node pool by adding new nodes or removing existing nodes based on workload demands. This feature operates on a per-node-pool basis.

- Automatic scaling is based on the resource requests of the pods running on the node pool’s nodes, not on actual resource utilization. The status of pods and nodes is checked periodically, and scaling operations are executed accordingly.
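Because scaling decisions follow pod resource requests rather than measured utilization, the requests declared in a workload manifest are what count. The following is a minimal sketch, assuming a hypothetical pod named `request-demo`; the image and the request values are illustrative only.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: request-demo        # hypothetical name
spec:
  containers:
    - name: app
      image: nginx:1.14.2   # illustrative image
      resources:
        requests:
          cpu: 500m         # counted toward the node's requested CPU
          memory: 256Mi     # counted toward the node's requested memory
```

A container that declares no requests adds nothing to these sums, even if it uses a lot of CPU or memory at run time.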

To set up automatic scaling for a node pool, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to use the node auto-scaling feature to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the name of the node pool you want to modify to go to the Node Pool Details page.

- On the Node Pool Details page, click the Edit icon to the right of Node Pool Information. The Edit Node Pool popup window opens.

- In the Edit Node Pool popup window, set Node Pool Auto Scaling to Enable.

- After entering the minimum and maximum node counts, click the Confirm button.

Node pool auto-scaling settings can also be configured on the cluster node pool creation page.

- Node pool scale-up conditions

- When a pod fails to start in the cluster due to insufficient resources (a Pending pod occurs)

- Node pool reduction criteria (when all are met)

- The sum of resource requests (CPU/Memory) of all pods running on a node is less than 50% of the node’s allocatable resources.

- All pods running on the node can be scheduled on another node (there must be no pods subject to PodDisruptionBudget (PDB) restrictions, etc.)

- When using automatic node pool scaling, to prevent a node from being deleted by node reduction, add the following annotation to the node.

cluster-autoscaler.kubernetes.io/scale-down-disabled: "true"

- Node pool scaling conditions

- Automatic node pool scale-up and scale-down operate only when NotReady nodes constitute 45% or less of the total nodes in the cluster and there are three or fewer such nodes.

- If nodes were attached to the cluster directly rather than through node pools created by the Kubernetes Engine service, using this feature may cause malfunction.
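The scale-down protection annotation above belongs under `metadata.annotations` of the Node object. The following is a minimal sketch of the relevant fragment only, assuming a hypothetical node named `worker-node-1`; it is not a complete Node manifest.

```yaml
apiVersion: v1
kind: Node
metadata:
  name: worker-node-1   # hypothetical node name
  annotations:
    # The value must be the string "true", not a YAML boolean.
    cluster-autoscaler.kubernetes.io/scale-down-disabled: "true"
```

The same annotation can be set on a running node with `kubectl annotate node <node-name> cluster-autoscaler.kubernetes.io/scale-down-disabled=true`.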

Automatically Restore Node Pool

Node auto-recovery is a feature that automatically deletes an abnormal node detected in the cluster and creates a new node to restore the node count in the node pool to a normal state. This feature operates based on the node pool.

Use node auto-recovery with caution: according to the node auto-recovery conditions, it deletes the existing node and creates a new one when communication with the Kubernetes control plane fails because of node (Virtual Server) problems, a stopped state, network issues, and so on.

- A recovered node is created with the settings specified when the node pool was created; custom settings made after node creation are not restored.

If nodes were attached to the cluster directly rather than through node pools created by the Kubernetes Engine service, using this feature may cause malfunction.

To configure the node auto-recovery feature, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to use the node auto-recovery feature to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the name of the node pool you want to edit to go to the Node Pool Details page.

- On the Node Pool Details page, click the Edit icon to the right of Node Pool Information. The Edit Node Pool popup window opens.

- In the Edit Node Pool popup window, set Node Auto Recovery to Enable, then click the Confirm button.

Node auto-recovery settings can also be configured on the cluster node pool creation page.

- A node is an auto-recovery target when:

- The node reports a NotReady status in consecutive checks for a certain time threshold (approximately 10 minutes)

- The node does not report its status at all for a certain time threshold (approximately 10 minutes)

- A node is not an auto-recovery target when:

- The node, when first created, remains in the Creating state instead of reaching the Running state.

- More than five abnormal nodes occur simultaneously in the same node pool.

Setting node pool labels

Node pool labels are a feature for selectively scheduling workloads onto nodes.

- A node pool label is not applied to existing nodes; it is applied only to nodes created after the label is set.

- If you need to apply a label to an existing node, set it directly with kubectl.
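Once nodes carry a label, a workload can target them with `nodeSelector`. The following is a minimal sketch, assuming a hypothetical label `pool: batch` set on the node pool; the pod name and image are placeholders.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: batch-worker          # hypothetical name
spec:
  nodeSelector:
    pool: batch               # must match the label key and value on the nodes
  containers:
    - name: worker
      image: nginx:1.14.2     # placeholder image
```

For a node that existed before the label was added to the node pool, the equivalent label can be set with `kubectl label node <node-name> pool=batch`.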

To set the node pool label, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to set the node pool label to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the name of the node pool you want to edit to go to the Node Pool Details page.

- On the Node Pool Details page, click the Edit icon of the label. The Edit Label popup opens.

- In the Edit Label popup, click the Add button to add as many labels as needed.

- Enter the label information and click the Confirm button.

Configure Node Pool Taint

Node pool taint is a feature that prevents workloads from being scheduled onto nodes.

- If you set taints on all node pools, pods required for normal cluster operation may not be scheduled.

- When applying a node pool taint, it does not affect existing nodes; the taint is applied only to nodes created thereafter.

- If you need to apply a taint to an existing node, the user must configure it directly with kubectl.
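A pod is scheduled onto a tainted node only if it declares a matching toleration. The following is a minimal sketch, assuming a hypothetical taint with key `dedicated`, value `gpu`, and effect `NoSchedule` configured on the node pool; the pod name and image are placeholders.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: gpu-job               # hypothetical name
spec:
  tolerations:
    - key: dedicated          # hypothetical taint key
      operator: Equal
      value: gpu              # hypothetical taint value
      effect: NoSchedule      # must match the effect of the taint
  containers:
    - name: job
      image: nginx:1.14.2     # placeholder image
```

On an existing node, the same taint can be applied with `kubectl taint nodes <node-name> dedicated=gpu:NoSchedule`.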

To configure the node pool taint, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to set a node pool taint to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the name of the node pool you want to modify to go to the Node Pool Details page.

- On the Node Pool Details page, click the Edit icon of the taint. The Edit Taint popup window opens.

- In the Edit Taint popup window, click the Add button to add the required number of taints.

- Enter the taint information and click the Confirm button.

Configure advanced node pool settings

Node pool advanced settings are a feature for applying detailed configurations such as the number of pods per node, PID, logs, and image garbage collection.

Each setting corresponds to the kubelet configuration as follows.

- Maximum pods per node: maxPods

- Image GC high threshold percent: imageGCHighThresholdPercent

- Image GC low threshold percent: imageGCLowThresholdPercent

- Container log maximum size MB: containerLogMaxSize

- Container log maximum file count: containerLogMaxFiles

- Pod PID limit: podPidsLimit

- Allow unsafe Sysctl: allowedUnsafeSysctls
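For orientation, the fields listed above appear in a kubelet configuration file as shown below. This is a sketch only: the values are illustrative and are not the defaults or limits of Kubernetes Engine, which applies these settings for you from the console.

```yaml
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
maxPods: 110                      # maximum pods per node
imageGCHighThresholdPercent: 85   # disk usage (%) above which image GC runs
imageGCLowThresholdPercent: 80    # disk usage (%) that image GC frees down to
containerLogMaxSize: 10Mi         # size at which a container log file is rotated
containerLogMaxFiles: 5           # log files kept per container
podPidsLimit: 4096                # maximum number of PIDs per pod
allowedUnsafeSysctls:             # unsafe sysctls (or patterns) that pods may set
  - "net.core.somaxconn"
```

Note that `containerLogMaxSize` takes a quantity string such as `10Mi`, and `imageGCLowThresholdPercent` must be lower than `imageGCHighThresholdPercent`.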

To configure advanced settings for the node pool, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to configure advanced node pool settings to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click Create Node Pool to go to the Create Node Pool page.

- On the Create Node Pool page, select Use for Advanced Settings.

- After selecting Use, enter the required information for the displayed items.

- After confirming in Summary that the required information has been entered correctly, click the Create button.

Configure linked resources for node pool

Node pool connection resources are a feature for connecting or disconnecting File Storage and Object Storage on a per‑node‑pool basis.

- Node pool connection resources have a quantity limit.

- You can add up to three File Storage and three Object Storage, for a total of six connection resources.

- StorageClass and Provisioner for the connected resource are not provided.

- Do not arbitrarily modify, in the File Storage and Object Storage services, the connection resources that the node pool added automatically. Changes may be reverted or cause unexpected behavior.

To configure node pool connection resources, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster for which you want to configure node pool connection resources to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, then click the name of the node pool you want to edit to go to the Node Pool Details page.

- On the Node Pool Details page, click the Edit icon of the connection resource. The Edit Connected Resource popup opens.

- In the Edit Connected Resource popup, click the Add button. The Add Connected Resource popup opens.

- In the Add Connected Resource popup window, select File Storage and Object Storage.

- After verifying the resources to connect to the node pool, click the Confirm button.

Delete Node Pool

If needed, delete the node pool from the Kubernetes Engine details page.

To delete a node pool, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Cluster menu to go to the Cluster List page.

- On the Cluster List page, select the cluster whose node pool you want to delete to go to the Cluster Details page.

- On the Cluster Details page, select the Node Pool tab, click the More button at the far right of the node pool row, then click Delete Node Pool.

- In the Node Pool Deletion popup window, select the checkbox, enter the name of the node pool to delete, and click the Confirm button.

- You must select the checkbox in the node deletion confirmation message for the Confirm button to become active.

View node details

After creating the cluster, you can view metadata, object information, and other details of the added nodes, and edit resource files using a YAML editor.

To view detailed information about a node, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Node menu to go to the Node List page.

- On the Node List page, click the gear button at the top left, select the cluster whose detailed information you want to view, then click the Confirm button.

- Click the node whose detailed information you want to view to go to the Node Details page.

| Category | Detailed description |
| --- | --- |
| Status Indicator | Displays the current status of the node |
| Detailed Information | Check the node’s Account information, metadata, and object information |
| YAML | Node resources can be edited in the YAML editor. Click the Edit button, modify the resource, then click the Save button to apply the changes. When editing content, click the Diff button to view the changes. |
| Event | Check events that occurred on the node |
| Pod | Check node pod information. A Pod is the smallest compute unit that can be created, managed, and deployed in Kubernetes Engine. |
| Account Information | Check basic information about the Account, such as the Account name, location, and creation time |
| Metadata Information | Check metadata information such as node labels, annotations, and taints |
| Object Information | Displays the object information of the created node, such as internal IP, machine ID, capacity, and resources. If GPU resources exist, check the GPU count in the Capacity > Nvidia.com/GPU column. |

Table. Node detailed information items

2.2 - Managing Namespaces

A namespace is a logical separation unit within a Kubernetes cluster, used to specify access permissions or resource usage limits per namespace.

Create a namespace

To create a namespace, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Namespace menu to go to the Namespace List page.

- On the Namespace List page, click the gear button at the top left, select the cluster where you want to create a namespace, then click Create Object.

- Enter the object information in the Object Creation popup and click the Confirm button.
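Assuming the Object Creation popup accepts a standard Kubernetes manifest, as the Deployment example in this guide suggests, the object information for a namespace could look like the following minimal sketch. The name `team-a` and the label are hypothetical.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: team-a          # hypothetical namespace name
  labels:
    purpose: demo       # illustrative label, not required
```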

Check detailed namespace information

On the namespace detail page, you can view the namespace status and detailed information.

To view detailed namespace information, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Namespace menu to go to the Namespace List page.

- On the Namespace List page, click the gear button at the top left, select the cluster that contains the namespace you want to view, then click Confirm.

- On the Namespace List page, click the namespace whose details you want to view to go to the Namespace Details page.

| Category | Detailed description |
| --- | --- |
| Status indicator | Displays the current state of the namespace |
| Delete Namespace | Delete the namespace. A namespace containing workloads cannot be deleted. To delete a namespace, you must delete all associated workloads. |
| Detailed Information | Check the Account information and metadata of the namespace |
| YAML | Namespaces can be edited in the YAML editor. Click the Edit button, modify the namespace, then click the Done button to apply the changes. When editing content, click the Diff button to view the changes. |
| Event | Check events that occurred within the namespace |
| Pod | Check the pod information in the namespace |
| Account information | Check basic information about the Account, such as name, location, and creation timestamp |
| Metadata Information | Check the metadata information of the namespace |

Table. Namespace detailed information items

Delete namespace

To delete a namespace, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Namespace menu to go to the Namespace List page.

- On the Namespace List page, click the gear button at the top left, select the cluster that contains the namespace you want to delete, then click the Confirm button.

- On the Namespace List page, click the namespace you want to delete to go to the Namespace Details page.

- On the Namespace Details page, click Delete Namespace.

- When the notification confirmation window appears, click the Confirm button.

2.3 - Manage Workloads

A workload is an application running on Kubernetes Engine. You can create a namespace and then add or delete workloads. Workloads are created and managed by type: Deployment, Pod, StatefulSet, DaemonSet, Job, and CronJob.

The Deployment, Pod, StatefulSet, DaemonSet, Job, and CronJob lists default to the cluster (namespace) selected when the service was created. The default cluster (namespace) setting is retained even if you select a different item in the list.

- To select a different cluster (namespace), click the gear button on the right side of the list.

- In the Cluster/Namespace Settings popup window, select the cluster and namespace to change to, and click the Confirm button.

- You can view the services created in the selected cluster/namespace.

Managing Deployments

A Deployment is a resource that provides declarative updates for Pods and ReplicaSets. You can create a deployment under the Workload menu, view its details, or delete it.

Create Deployment

To create a deployment, follow the steps below.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Deployment under the Workload menu to go to the Deployment List page.

- On the Deployment List page, click the gear button at the top left, select the cluster and namespace, then click Create Object.

- Enter the object information in the Object Creation popup and click the Confirm button.

- The following is an example .yaml file that shows the required fields and object spec for creating a Deployment. (application/deployment.yaml)

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 2 # tells deployment to run 2 pods matching the template
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.14.2
        ports:
        - containerPort: 80
```

Code block. Required fields and object Spec for deployment creation.

View deployment details

To view deployment details, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Deployment under the Workload menu to go to the Deployment List page.

- On the Deployment List page, click the gear button at the top left, select the cluster and namespace, then click Confirm.

- On the Deployment List page, click the item whose detailed information you want to view to go to the Deployment Details page.

- If you select Show system objects at the top of the list, all items except the Kubernetes object entries will be displayed.

- Click each tab to view the service information.

| Category | Detailed description |
| --- | --- |
| Delete Deployment | Delete the deployment |
| Detailed Information | View detailed deployment information |
| YAML | The deployment’s resource file can be edited in the YAML editor. Click the Edit button, modify the resource, then click the Done button to apply the changes. When editing content, click the Diff button to view the changes. |
| Event | Check events that occurred within the deployment |
| Pod | Check the pod information of the deployment. A Pod is the smallest compute unit that can be created, managed, and deployed in Kubernetes Engine. |
| Account information | Check basic information about the Account, such as the Account name, location, and creation time |
| Metadata Information | Check the deployment’s metadata information |
| Object Information | Check the deployment’s object information |

Table. Deployment detailed information items

Delete Deployment

To delete the deployment, follow the steps below.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Deployment under the Workload menu to go to the Deployment List page.

- On the Deployment List page, click the gear button at the top left, select the cluster and namespace, then click Confirm.

- On the Deployment List page, click the item you want to delete to go to the Deployment Details page.

- On the Deployment Details page, click Delete Deployment.

- When the notification confirmation window appears, click the Confirm button.

Managing Pods

A Pod is the smallest compute unit in Kubernetes that can be created, managed, and deployed, and represents a group of one or more containers. You can create pods under the Workload menu, view their details, or delete them.

Create Pod

To create a pod, follow the steps below.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Pod under the Workload menu to go to the Pod List page.

- On the Pod List page, click the gear button at the top left, select the cluster and namespace, then click Create Object.

- Enter the object information in the Object Creation popup and click the Confirm button.
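Assuming the Object Creation popup accepts a standard Kubernetes manifest, the object information for a single-container pod could look like the following minimal sketch. The pod name `nginx-pod` is hypothetical; the image and port mirror the Deployment example in this guide.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx-pod         # hypothetical name
  labels:
    app: nginx
spec:
  containers:
    - name: nginx
      image: nginx:1.14.2
      ports:
        - containerPort: 80
```

A pod created this way is not recreated if it is deleted or its node fails; a Deployment is the usual choice when replicas should be maintained.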

Check pod detailed information

To view detailed pod information, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Pod under the Workload menu to go to the Pod List page.

- On the Pod List page, click the gear button at the top left, select the cluster and namespace, then click Confirm.

- On the Pod List page, click the item whose detailed information you want to view to go to the Pod Details page.

- If you select Show system objects at the top of the list, all items except the Kubernetes object entries will be displayed.

- Click each tab to view the service information.

| Category | Detailed description |
| --- | --- |
| Status indicator | Displays the current status of the pod. |
| Delete Pod | Deletes the pod. |
| Detailed Information | View detailed information about the pod. |
| YAML | Edit the pod’s resource file in the YAML editor. Click the Edit button, modify the resource, then click the Done button to apply the changes. While editing, click the Diff button to view the changes. |
| Event | Check events that occurred within the pod. |
| Log | Select a container to view the pod’s container information. |
| Account Information | Check basic information about the Account, such as name, location, and creation time. |
| Metadata Information | Check the pod’s metadata information. |
| Object Information | Check the pod’s object information. |
| Initialization Container Information | Check the pod’s init container information. |
| Container Information | Check the pod’s container information. |

Table. Pod detailed information items
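To illustrate the kind of comparison a diff view offers while editing a resource, here is a minimal sketch using Python's standard `difflib` module. It is not how the console's Diff button is implemented, and the YAML fragments and image tags are made-up examples.

```python
import difflib

# Hypothetical resource fragment before editing.
ORIGINAL = """\
spec:
  containers:
  - name: web
    image: nginx:1.26
""".splitlines(keepends=True)

# The same fragment after changing the image tag.
EDITED = """\
spec:
  containers:
  - name: web
    image: nginx:1.27
""".splitlines(keepends=True)


def yaml_diff(before: list, after: list) -> list:
    """Return unified-diff lines describing how `after` differs from `before`."""
    return list(
        difflib.unified_diff(before, after, fromfile="before", tofile="after")
    )


if __name__ == "__main__":
    print("".join(yaml_diff(ORIGINAL, EDITED)), end="")
```

Lines starting with `-` were removed and lines starting with `+` were added, which makes it easy to confirm that only the intended field changed before applying the edit.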

Delete Pod

To delete a pod, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Pod under the Workload menu to go to the Pod List page.

- On the Pod List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the Pod List page, select the item you want to delete to go to the Pod Details page.

- On the Pod Details page, click Delete Pod.

- When the notification dialog appears, click the Confirm button.

Managing StatefulSets

A StatefulSet is a workload API object used to manage an application’s stateful components. You can create a StatefulSet in the workload, view its details, or delete it.

Creating a StatefulSet

To create a StatefulSet, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click StatefulSet under the Workload menu to go to the StatefulSet List page.

- On the StatefulSet List page, select the cluster and namespace from the gear button at the top left, then click Create Object.

- Enter the object information in the Object Creation Popup and click the Confirm button.
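As a rough illustration of the object information for a StatefulSet, the sketch below builds a minimal `apps/v1` StatefulSet definition in Python. It assumes a headless Service with the same name already exists, and every concrete value (`sample-db`, `postgres:16`, the `data` volume, the `1Gi` size) is a placeholder rather than a recommendation from this guide.

```python
import json


def build_statefulset_manifest(name: str, image: str, replicas: int = 3) -> dict:
    """Return a minimal StatefulSet manifest as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            # Assumption: a headless Service with this name already exists.
            "serviceName": name,
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {"containers": [{"name": name, "image": image}]},
            },
            # Each replica gets its own PersistentVolumeClaim from this template.
            "volumeClaimTemplates": [
                {
                    "metadata": {"name": "data"},
                    "spec": {
                        "accessModes": ["ReadWriteOnce"],
                        "resources": {"requests": {"storage": "1Gi"}},
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_statefulset_manifest("sample-db", "postgres:16"), indent=2))
```

The per-replica volume claim is what distinguishes this definition from a stateless workload: each pod keeps its own storage across restarts.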

Check detailed information of StatefulSet

To view detailed information about a StatefulSet, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click StatefulSet under the Workload menu to go to the StatefulSet List page.

- On the StatefulSet List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the StatefulSet List page, select the item you want to view to go to the StatefulSet Details page.

- If you select Show system objects at the top of the list, all items except the Kubernetes object entries will be displayed.

- Click each tab to view the service information.

| Category | Detailed description |
| --- | --- |
| Delete StatefulSet | Deletes the StatefulSet. |
| Detailed Information | View detailed information about the StatefulSet. |
| YAML | Edit the StatefulSet’s resource file in the YAML editor. Click the Edit button, modify the resource, then click the Done button to apply the changes. While editing, click the Diff button to view the changes. |
| Event | Check events that occurred within the StatefulSet. |
| Pod | Check the pod information of the StatefulSet. |
| Account Information | Check basic information about the Account, such as name, location, and creation time. |
| Metadata Information | Check the StatefulSet’s metadata information. |
| Object Information | Check the StatefulSet’s object information. |

Table. StatefulSet detailed information items

Delete StatefulSet

To delete a StatefulSet, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click StatefulSet under the Workload menu to go to the StatefulSet List page.

- On the StatefulSet List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the StatefulSet List page, select the item you want to delete to go to the StatefulSet Details page.

- On the StatefulSet Details page, click Delete StatefulSet.

- When the notification confirmation window appears, click the Confirm button.

Managing DaemonSets

A DaemonSet is a resource that ensures a copy of a pod runs on every node or on a subset of nodes. You can create a DaemonSet in the workload, view its details, or delete it.

Creating a DaemonSet

To create a DaemonSet, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click DaemonSet under the Workload menu to go to the DaemonSet List page.

- On the DaemonSet List page, select the cluster and namespace from the gear button at the top left, then click Create Object.

- Enter the object information in the Object Creation Popup and click the Confirm button.
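The following Python sketch shows what the object information for a DaemonSet might look like, including the optional `nodeSelector` field that limits the pods to a subset of nodes. The names `node-agent` and `busybox:1.36` and the label `disktype: ssd` are hypothetical values used only for illustration.

```python
import json
from typing import Optional


def build_daemonset_manifest(
    name: str, image: str, node_selector: Optional[dict] = None
) -> dict:
    """Return a minimal DaemonSet manifest as a plain dictionary."""
    labels = {"app": name}
    pod_spec = {"containers": [{"name": name, "image": image}]}
    if node_selector:
        # Run only on nodes that carry these labels (a subset of nodes).
        pod_spec["nodeSelector"] = dict(node_selector)
    return {
        "apiVersion": "apps/v1",
        "kind": "DaemonSet",
        "metadata": {"name": name},
        "spec": {
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": dict(labels)},
            "template": {"metadata": {"labels": dict(labels)}, "spec": pod_spec},
        },
    }


if __name__ == "__main__":
    # Without a node selector, the pod is scheduled on every eligible node.
    print(json.dumps(build_daemonset_manifest("node-agent", "busybox:1.36"), indent=2))
```

Passing `node_selector={"disktype": "ssd"}` would restrict the DaemonSet to nodes with that label, assuming such a label has been applied to the nodes.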

Check DaemonSet detailed information

To view detailed information about a DaemonSet, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click DaemonSet under the Workload menu to go to the DaemonSet List page.

- On the DaemonSet List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the DaemonSet List page, select the item you want to view to go to the DaemonSet Details page.

- If you select Show system objects at the top of the list, all items except the Kubernetes object entries are displayed.

- Click each tab to view the service information.

| Category | Detailed description |
| --- | --- |
| Delete DaemonSet | Deletes the DaemonSet. |
| Detailed Information | View detailed information about the DaemonSet. |
| YAML | Edit the DaemonSet’s resource file in the YAML editor. Click the Edit button, modify the resource, then click the Done button to apply the changes. While editing, click the Diff button to view the changes. |
| Event | Check events that occurred within the DaemonSet. |
| Pod | Check the pod information of the DaemonSet. |
| Account Information | Check basic information about the Account, such as name, location, and creation time. |
| Metadata Information | Check the DaemonSet’s metadata information. |
| Object Information | Check the DaemonSet’s object information. |

Table. DaemonSet detailed information items

Delete DaemonSet

To delete a DaemonSet, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click DaemonSet under the Workload menu to go to the DaemonSet List page.

- On the DaemonSet List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the DaemonSet List page, select the item you want to delete to go to the DaemonSet Details page.

- On the DaemonSet Details page, click Delete DaemonSet.

- When the notification confirmation window appears, click the Confirm button.

Managing Jobs

A Job is a resource that creates one or more Pods and continues to run Pods until the specified number of Pods have completed successfully. You can create a job in the workload, view its details, or delete it.

Create Job

To create a job, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Job under the Workload menu to go to the Job List page.

- On the Job List page, select the cluster and namespace from the gear button at the top left, then click Create Object.

- Enter the object information in the Object Creation Popup and click the Confirm button.
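The sketch below shows, under stated assumptions, how the "specified number of Pods" maps to fields in a Job definition: `completions` is the number of pods that must finish successfully, and `parallelism` is how many may run at once. The job name, image, and command are placeholder values.

```python
import json


def build_job_manifest(
    name: str, image: str, command: list, completions: int = 1, parallelism: int = 1
) -> dict:
    """Return a minimal Job manifest as a plain dictionary."""
    if completions < 1 or parallelism < 1:
        raise ValueError("completions and parallelism must be at least 1")
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name},
        "spec": {
            "completions": completions,  # pods that must succeed
            "parallelism": parallelism,  # pods allowed to run at once
            "template": {
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "command": list(command)}
                    ],
                    # Job pods must use Never or OnFailure, not Always.
                    "restartPolicy": "Never",
                }
            },
        },
    }


if __name__ == "__main__":
    job = build_job_manifest(
        "sample-job", "busybox:1.36", ["sh", "-c", "echo done"], completions=3
    )
    print(json.dumps(job, indent=2))
```

With `completions=3` and `parallelism=1`, the Job runs pods one at a time until three of them have completed successfully.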

Check job details

To view the job details, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Job under the Workload menu to go to the Job List page.

- On the Job List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the Job List page, select the item you want to view to go to the Job Details page.

- If you select Show system objects at the top of the list, all items except the Kubernetes object entries will be displayed.

- Click each tab to view the service information.

| Category | Detailed description |
| --- | --- |
| Delete Job | Deletes the job. |
| Detailed Information | View detailed information about the job. |
| YAML | Edit the job’s resource file in the YAML editor. Click the Edit button, modify the resource, then click the Done button to apply the changes. While editing, click the Diff button to view the changes. |
| Event | Check events that occurred within the job. |
| Pod | Check the pod information of the job. |
| Account Information | Check basic information about the Account, such as name, location, and creation time. |
| Metadata Information | Check the job’s metadata information. |
| Object Information | Check the job’s object information. |

Table. Job detailed information items

Delete job

To delete a job, follow the steps below.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click Job under the Workload menu to go to the Job List page.

- On the Job List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the Job List page, select the item you want to delete to go to the Job Details page.

- On the Job Details page, click Delete Job.

- When the notification dialog appears, click the Confirm button.

Managing Cron Jobs

A cron job is a resource that runs a job periodically according to a schedule written in cron format. Use it for repetitive tasks that run at regular intervals, such as backups and report generation. You can create a cron job in the workload, view its details, or delete it.

Create a cron job

To create a cron job, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click CronJob under the Workload menu to go to the CronJob List page.

- On the CronJob List page, select the cluster and namespace from the gear button at the top left, then click Create Object.

- Enter the object information in the Object Creation Popup and click the Confirm button.
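As a minimal sketch of the object information for a cron job, the Python below builds a `batch/v1` CronJob definition with a five-field cron schedule. The field check is deliberately simplistic (it only counts fields and does not accept macros such as `@daily`), and the name `nightly-backup`, the image, and the command are hypothetical.

```python
import json


def build_cronjob_manifest(name: str, image: str, command: list, schedule: str) -> dict:
    """Return a minimal CronJob manifest as a plain dictionary."""
    # Simplified check: minute, hour, day of month, month, day of week.
    if len(schedule.split()) != 5:
        raise ValueError("schedule must have five space-separated cron fields")
    return {
        "apiVersion": "batch/v1",
        "kind": "CronJob",
        "metadata": {"name": name},
        "spec": {
            "schedule": schedule,
            # Each scheduled run creates a Job from this template.
            "jobTemplate": {
                "spec": {
                    "template": {
                        "spec": {
                            "containers": [
                                {"name": name, "image": image, "command": list(command)}
                            ],
                            "restartPolicy": "OnFailure",
                        }
                    }
                }
            },
        },
    }


if __name__ == "__main__":
    # "0 2 * * *" means every day at 02:00.
    cron = build_cronjob_manifest(
        "nightly-backup", "busybox:1.36", ["sh", "-c", "echo backup"], "0 2 * * *"
    )
    print(json.dumps(cron, indent=2))
```

The schedule is evaluated in the time zone configured for the cluster, so confirm that setting before relying on a specific run time.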

Check detailed cron job information

To view detailed information about the cron job, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu to go to the Service Home page of Kubernetes Engine.

- On the Service Home page, click CronJob under the Workload menu to go to the CronJob List page.

- On the CronJob List page, select the cluster and namespace from the gear button at the top left, then click Confirm.

- On the CronJob List page, select the item you want to view to go to the CronJob Details page.