
GPU Server

1 -

1.1 - Server Type

GPU Server Server Type

GPU Servers are classified by the GPU type they provide, and the GPU used by a GPU Server is determined by the server type selected when the server is created. Select a server type that matches the specifications of the application you plan to run on the GPU Server.

The server types supported by the GPU Server are as follows.

Server type example: GPU-H100-2 g2v12h1

| Category | Example | Detailed description |
|---|---|---|
| Server Type | GPU-H100-2 | Provided server type classification |
| Server specifications | g2 | Provided server type classification and generation |
| Server specifications | v12 | Number of vCores |
| Server specifications | h1 | GPU type and quantity |
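Because the naming scheme above is regular, it can be parsed mechanically, which helps when scripting against a list of server types. The sketch below is an illustration, not a platform API. The function and class names are hypothetical, and the letter-to-GPU mapping (`a` for A100, `h` for H100) is inferred from the g1 and g2 tables in this section.

```python
import re
from dataclasses import dataclass

# GPU letters as they appear in this guide's tables (a -> A100 in g1, h -> H100 in g2).
# This mapping is inferred from the tables, not taken from an official list.
GPU_MODELS = {"a": "A100", "h": "H100"}

SPEC_PATTERN = re.compile(r"g(?P<gen>\d+)v(?P<vcores>\d+)(?P<gpu>[a-z])(?P<count>\d+)")


@dataclass(frozen=True)
class ServerSpec:
    generation: int  # e.g. 2 for "g2"
    vcores: int      # e.g. 12 for "v12"
    gpu_model: str   # e.g. "H100" for "h"
    gpu_count: int   # e.g. 1 for "h1"


def parse_server_spec(spec: str) -> ServerSpec:
    """Split a server specification such as 'g2v12h1' into its parts."""
    match = SPEC_PATTERN.fullmatch(spec)
    if match is None:
        raise ValueError(f"unrecognized server specification: {spec!r}")
    letter = match["gpu"]
    if letter not in GPU_MODELS:
        raise ValueError(f"unknown GPU letter {letter!r} in {spec!r}")
    return ServerSpec(
        generation=int(match["gen"]),
        vcores=int(match["vcores"]),
        gpu_model=GPU_MODELS[letter],
        gpu_count=int(match["count"]),
    )


if __name__ == "__main__":
    print(parse_server_spec("g2v12h1"))
    # ServerSpec(generation=2, vcores=12, gpu_model='H100', gpu_count=1)
```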

g1 server type

The g1 server type is a GPU Server that uses NVIDIA A100 Tensor Core GPU, suitable for high-performance applications.

- Provides up to 8 NVIDIA A100 Tensor Core GPUs

- Equipped with 6,912 CUDA cores and 432 Tensor cores per GPU

- Supports up to 128 vCPUs and 1,920 GB of memory

- Maximum 40 Gbps networking speed

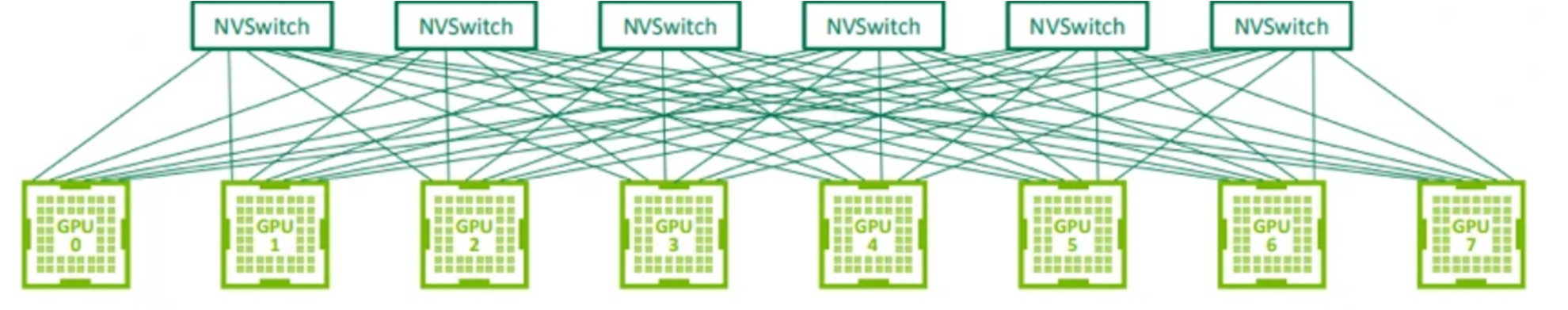

- 600GB/s GPU and NVIDIA NVSwitch P2P communication

| Category | Server Type | GPU | CPU | Memory | GPU Memory | Network Bandwidth |
|---|---|---|---|---|---|---|
| GPU-A100-1 | g1v16a1 | 1 | 16 vCore | 234 GB | 80 GB | Up to 20 Gbps |
| GPU-A100-1 | g1v32a2 | 2 | 32 vCore | 468 GB | 160 GB | Up to 20 Gbps |
| GPU-A100-1 | g1v64a4 | 4 | 64 vCore | 936 GB | 320 GB | Up to 40 Gbps |
| GPU-A100-1 | g1v128a8 | 8 | 128 vCore | 1,872 GB | 640 GB | Up to 40 Gbps |

g2 server type

The g2 server type is a GPU Server that uses NVIDIA H100 Tensor Core GPUs and is suitable for high-performance applications.

- Provides up to 8 NVIDIA H100 Tensor Core GPUs

- Equipped with 16,896 CUDA cores and 528 Tensor cores per GPU

- Supports up to 96 vCPUs and 1,920 GB of memory

- Maximum networking speed of 40 Gbps

- 900 GB/s peer-to-peer (P2P) communication between GPUs via NVIDIA NVSwitch

| Category | Server Type | GPU | CPU | Memory | GPU Memory | Network Bandwidth |
|---|---|---|---|---|---|---|
| GPU-H100-2 | g2v12h1 | 1 | 12 vCore | 234 GB | 80 GB | Up to 20 Gbps |
| GPU-H100-2 | g2v24h2 | 2 | 24 vCore | 468 GB | 160 GB | Up to 20 Gbps |
| GPU-H100-2 | g2v48h4 | 4 | 48 vCore | 936 GB | 320 GB | Up to 40 Gbps |
| GPU-H100-2 | g2v96h8 | 8 | 96 vCore | 1,872 GB | 640 GB | Up to 40 Gbps |
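You can treat the g1 and g2 tables as data when choosing a server type for a workload. The sketch below uses hypothetical helper names and values copied from the tables above. It returns the type with the fewest GPUs that meets a GPU memory and vCore requirement. The selection rule is an assumption made for illustration, and it ignores performance differences between A100 and H100 GPUs.

```python
from typing import NamedTuple, Optional


class ServerType(NamedTuple):
    name: str
    gpus: int
    vcores: int
    memory_gb: int
    gpu_memory_gb: int


# Values copied from the g1 (A100) and g2 (H100) server type tables.
SERVER_TYPES = [
    ServerType("g1v16a1", 1, 16, 234, 80),
    ServerType("g1v32a2", 2, 32, 468, 160),
    ServerType("g1v64a4", 4, 64, 936, 320),
    ServerType("g1v128a8", 8, 128, 1872, 640),
    ServerType("g2v12h1", 1, 12, 234, 80),
    ServerType("g2v24h2", 2, 24, 468, 160),
    ServerType("g2v48h4", 4, 48, 936, 320),
    ServerType("g2v96h8", 8, 96, 1872, 640),
]


def smallest_fit(
    min_gpu_memory_gb: int,
    min_vcores: int = 0,
    family: Optional[str] = None,  # e.g. "g1" for A100 or "g2" for H100
) -> Optional[ServerType]:
    """Return the type with the fewest GPUs (then fewest vCores) that fits, or None."""
    candidates = [
        t for t in SERVER_TYPES
        if t.gpu_memory_gb >= min_gpu_memory_gb
        and t.vcores >= min_vcores
        and (family is None or t.name.startswith(family))
    ]
    return min(candidates, key=lambda t: (t.gpus, t.vcores), default=None)


if __name__ == "__main__":
    print(smallest_fit(150))
    # ServerType(name='g2v24h2', gpus=2, vcores=24, memory_gb=468, gpu_memory_gb=160)
```

For example, `smallest_fit(150)` returns g2v24h2. Both 2-GPU types provide 160 GB of GPU memory, and the tie is broken in favor of fewer vCores. Pass `family="g1"` to restrict the search to A100 types.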

1.2 - Monitoring Metrics

GPU Server Monitoring Metrics

The following table shows the monitoring metrics of the GPU Server that can be checked through Cloud Monitoring.

Basic monitoring metrics are provided even without installing an Agent; see Table. GPU Server Monitoring Metrics (Basic) below. Metrics that become available after installing an Agent are listed in Table. GPU Server Additional Monitoring Metrics (Agent Installation Required) below.

For detailed Cloud Monitoring usage, please refer to the Cloud Monitoring guide.

| Performance Item Name | Description | Unit |
|---|---|---|
| Memory Total [Basic] | Total available memory in bytes | bytes |
| Memory Used [Basic] | Currently used memory in bytes | bytes |
| Memory Swap In [Basic] | Memory swapped in, in bytes | bytes |
| Memory Swap Out [Basic] | Memory swapped out, in bytes | bytes |
| Memory Free [Basic] | Unused memory in bytes | bytes |
| Disk Read Bytes [Basic] | Bytes read | bytes |
| Disk Read Requests [Basic] | Number of read requests | cnt |
| Disk Write Bytes [Basic] | Bytes written | bytes |
| Disk Write Requests [Basic] | Number of write requests | cnt |
| CPU Usage [Basic] | Average system CPU usage over 1 minute | % |
| Instance State [Basic] | Instance state | state |
| Network In Bytes [Basic] | Received bytes | bytes |
| Network In Dropped [Basic] | Dropped received packets | cnt |
| Network In Packets [Basic] | Received packets | cnt |
| Network Out Bytes [Basic] | Sent bytes | bytes |
| Network Out Dropped [Basic] | Dropped sent packets | cnt |
| Network Out Packets [Basic] | Sent packets | cnt |

Table. GPU Server Monitoring Metrics (Basic)

| Performance Item Name | Description | Unit |
|---|---|---|
| GPU Count | Number of GPUs | cnt |
| GPU Memory Usage | GPU memory usage rate | % |
| GPU Memory Used | Used GPU memory | MB |
| GPU Temperature | GPU temperature | ℃ |
| GPU Usage | GPU utilization | % |
| GPU Usage [Avg] | Average GPU usage rate | % |
| GPU Power Cap | Maximum power capacity of the GPU | W |
| GPU Power Usage | Current power usage of the GPU | W |
| GPU Memory Usage [Avg] | Average GPU memory usage rate | % |
| GPU Count in use | Number of GPUs in use by jobs on the node | cnt |
| Execution Status for nvidia-smi | Execution result of the nvidia-smi command | status |
| Core Usage [IO Wait] | CPU time spent in IO wait state | % |
| Core Usage [System] | CPU time spent in system space | % |
| Core Usage [User] | CPU time spent in user space | % |
| CPU Cores | Number of CPU cores on the host | cnt |
| CPU Usage [Active] | CPU time used, excluding idle and IO wait states | % |
| CPU Usage [Idle] | CPU time spent in idle state | % |
| CPU Usage [IO Wait] | CPU time spent in IO wait state | % |
| CPU Usage [System] | CPU time used by the kernel | % |
| CPU Usage [User] | CPU time used by user space | % |
| CPU Usage/Core [Active] | CPU time used per core, excluding idle and IO wait states | % |
| CPU Usage/Core [Idle] | CPU time spent in idle state per core | % |
| CPU Usage/Core [IO Wait] | CPU time spent in IO wait state per core | % |
| CPU Usage/Core [System] | CPU time used by the kernel per core | % |
| CPU Usage/Core [User] | CPU time used by user space per core | % |
| Disk CPU Usage [IO Request] | CPU time spent on IO requests | % |
| Disk Queue Size [Avg] | Average queue length of requests | num |
| Disk Read Bytes | Bytes read from the device per second | bytes |
| Disk Read Bytes [Delta Avg] | Average delta of bytes read from the device | bytes |
| Disk Read Bytes [Delta Max] | Maximum delta of bytes read from the device | bytes |
| Disk Read Bytes [Delta Min] | Minimum delta of bytes read from the device | bytes |
| Disk Read Bytes [Delta Sum] | Sum of deltas of bytes read from the device | bytes |
| Disk Read Bytes [Delta] | Delta of bytes read from the device | bytes |
| Disk Read Bytes [Success] | Total bytes successfully read | bytes |
| Disk Read Requests | Number of read requests to the device per second | cnt |
| Disk Read Requests [Delta Avg] | Average delta of read requests to the device | cnt |
| Disk Read Requests [Delta Max] | Maximum delta of read requests to the device | cnt |
| Disk Read Requests [Delta Min] | Minimum delta of read requests to the device | cnt |
| Disk Read Requests [Delta Sum] | Sum of deltas of read requests to the device | cnt |
| Disk Read Requests [Success Delta] | Delta of successful read requests to the device | cnt |
| Disk Read Requests [Success] | Total successful read requests | cnt |
| Disk Request Size [Avg] | Average size of requests to the device | num |
| Disk Service Time [Avg] | Average service time of requests to the device | ms |
| Disk Wait Time [Avg] | Average wait time of requests to the device | ms |
| Disk Wait Time [Read] | Average read wait time of the device | ms |
| Disk Wait Time [Write] | Average write wait time of the device | ms |
| Disk Write Bytes [Delta Avg] | Average delta of bytes written to the device | bytes |
| Disk Write Bytes [Delta Max] | Maximum delta of bytes written to the device | bytes |
| Disk Write Bytes [Delta Min] | Minimum delta of bytes written to the device | bytes |
| Disk Write Bytes [Delta Sum] | Sum of deltas of bytes written to the device | bytes |
| Disk Write Bytes [Delta] | Delta of bytes written to the device | bytes |
| Disk Write Bytes [Success] | Total bytes successfully written | bytes |
| Disk Write Requests | Number of write requests to the device per second | cnt |
| Disk Write Requests [Delta Avg] | Average delta of write requests to the device | cnt |
| Disk Write Requests [Delta Max] | Maximum delta of write requests to the device | cnt |
| Disk Write Requests [Delta Min] | Minimum delta of write requests to the device | cnt |
| Disk Write Requests [Delta Sum] | Sum of deltas of write requests to the device | cnt |
| Disk Write Requests [Success Delta] | Delta of successful write requests to the device | cnt |
| Disk Write Requests [Success] | Total successful write requests | cnt |
| Disk Writes Bytes | Bytes written to the device per second | bytes |
| Filesystem Hang Check | Filesystem hang check (normal: 1, abnormal: 0) | status |
| Filesystem Nodes | Total number of filesystem nodes | cnt |
| Filesystem Nodes [Free] | Number of available filesystem nodes | cnt |
| Filesystem Size [Available] | Available disk space in bytes | bytes |
| Filesystem Size [Free] | Free disk space in bytes | bytes |
| Filesystem Size [Total] | Total disk space in bytes | bytes |
| Filesystem Usage | Disk space usage rate | % |
| Filesystem Usage [Avg] | Average disk space usage rate | % |
| Filesystem Usage [Inode] | Inode usage rate | % |
| Filesystem Usage [Max] | Maximum disk space usage rate | % |
| Filesystem Usage [Min] | Minimum disk space usage rate | % |
| Filesystem Usage [Total] | Total disk space usage rate | % |
| Filesystem Used | Used disk space in bytes | bytes |
| Filesystem Used [Inode] | Used inode space in bytes | bytes |
| Memory Free | Free memory in bytes | bytes |
| Memory Free [Actual] | Actual available memory in bytes | bytes |
| Memory Free [Swap] | Available swap memory in bytes | bytes |
| Memory Total | Total memory in bytes | bytes |
| Memory Total [Swap] | Total swap memory in bytes | bytes |
| Memory Usage | Memory usage rate | % |
| Memory Usage [Actual] | Actual memory usage rate | % |
| Memory Usage [Cache Swap] | Cache swap usage rate | % |
| Memory Usage [Swap] | Swap memory usage rate | % |
| Memory Used | Used memory in bytes | bytes |
| Memory Used [Actual] | Actual used memory in bytes | bytes |
| Memory Used [Swap] | Used swap memory in bytes | bytes |
| Collisions | Network collisions | cnt |
| Network In Bytes | Received bytes | bytes |
| Network In Bytes [Delta Avg] | Average delta of received bytes | bytes |
| Network In Bytes [Delta Max] | Maximum delta of received bytes | bytes |
| Network In Bytes [Delta Min] | Minimum delta of received bytes | bytes |
| Network In Bytes [Delta Sum] | Sum of deltas of received bytes | bytes |
| Network In Bytes [Delta] | Delta of received bytes | bytes |
| Network In Dropped | Dropped received packets | cnt |
| Network In Errors | Receive errors | cnt |
| Network In Packets | Received packets | cnt |
| Network In Packets [Delta Avg] | Average delta of received packets | cnt |
| Network In Packets [Delta Max] | Maximum delta of received packets | cnt |
| Network In Packets [Delta Min] | Minimum delta of received packets | cnt |
| Network In Packets [Delta Sum] | Sum of deltas of received packets | cnt |
| Network In Packets [Delta] | Delta of received packets | cnt |
| Network Out Bytes | Sent bytes | bytes |
| Network Out Bytes [Delta Avg] | Average delta of sent bytes | bytes |
| Network Out Bytes [Delta Max] | Maximum delta of sent bytes | bytes |
| Network Out Bytes [Delta Min] | Minimum delta of sent bytes | bytes |
| Network Out Bytes [Delta Sum] | Sum of deltas of sent bytes | bytes |
| Network Out Bytes [Delta] | Delta of sent bytes | bytes |
| Network Out Dropped | Dropped sent packets | cnt |
| Network Out Errors | Send errors | cnt |
| Network Out Packets | Sent packets | cnt |
| Network Out Packets [Delta Avg] | Average delta of sent packets | cnt |
| Network Out Packets [Delta Max] | Maximum delta of sent packets | cnt |
| Network Out Packets [Delta Min] | Minimum delta of sent packets | cnt |
| Network Out Packets [Delta Sum] | Sum of deltas of sent packets | cnt |
| Network Out Packets [Delta] | Delta of sent packets | cnt |
| Open Connections [TCP] | Open TCP connections | cnt |
| Open Connections [UDP] | Open UDP connections | cnt |
| Port Usage | Port usage rate | % |
| SYN Sent Sockets | Number of sockets in SYN_SENT state | cnt |
| Kernel PID Max | Maximum PID value | cnt |
| Kernel Thread Max | Maximum number of threads | cnt |
| Process CPU Usage | CPU time used by the process | % |
| Process CPU Usage/Core | CPU time used by the process per core | % |
| Process Memory Usage | Resident set size (RSS) as a percentage of total memory | % |
| Process Memory Used | Memory used by the process | bytes |
| Process PID | Process ID | PID |
| Process PPID | Parent process ID | PID |
| Processes [Dead] | Number of dead processes | cnt |
| Processes [Idle] | Number of idle processes | cnt |
| Processes [Running] | Number of running processes | cnt |
| Processes [Sleeping] | Number of sleeping processes | cnt |
| Processes [Stopped] | Number of stopped processes | cnt |
| Processes [Total] | Total number of processes | cnt |
| Processes [Unknown] | Number of unknown processes | cnt |
| Processes [Zombie] | Number of zombie processes | cnt |
| Running Process Usage | Process usage rate | % |
| Running Processes | Number of running processes | cnt |
| Running Thread Usage | Thread usage rate | % |
| Running Threads | Number of running threads | cnt |
| Context Switches | Context switches per second | cnt |
| Load/Core [1 min] | Load per core over 1 minute | cnt |
| Load/Core [15 min] | Load per core over 15 minutes | cnt |
| Load/Core [5 min] | Load per core over 5 minutes | cnt |
| Multipaths [Active] | Number of active multipath connections | cnt |
| Multipaths [Failed] | Number of failed multipath connections | cnt |
| Multipaths [Faulty] | Number of faulty multipath connections | cnt |
| NTP Offset | Measured offset from the NTP server | num |
| Run Queue Length | Run queue length | num |
| Uptime | System uptime in milliseconds | ms |
| Context Switchies | Context switches per second | cnt |
| Disk Read Bytes [Sec] | Bytes read from the device per second | cnt |
| Disk Read Time [Avg] | Average read time from the device | sec |
| Disk Transfer Time [Avg] | Average disk transfer time | sec |
| Disk Usage | Disk usage rate | % |
| Disk Write Bytes [Sec] | Bytes written to the device per second | cnt |
| Disk Write Time [Avg] | Average write time to the device | sec |
| Pagingfile Usage | Paging file usage rate | % |
| Pool Used [Non Paged] | Non-paged pool usage | bytes |
| Pool Used [Paged] | Paged pool usage | bytes |
| Process [Running] | Number of running processes | cnt |
| Threads [Running] | Number of running threads | cnt |
| Threads [Waiting] | Number of waiting threads | cnt |

Table. GPU Server Additional Monitoring Metrics (Agent Installation Required)
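Many Agent metrics come in [Delta], [Delta Avg], [Delta Max], [Delta Min], and [Delta Sum] variants. This guide does not define how those values are computed. The sketch below assumes a common approach: take the difference between consecutive readings of a cumulative counter, then aggregate the differences over a window. It illustrates that interpretation with hypothetical function names and is not the Agent's actual implementation.

```python
from typing import Dict, List, Sequence, Tuple

Sample = Tuple[float, int]  # (timestamp in seconds, cumulative counter value)


def deltas(samples: Sequence[Sample]) -> List[int]:
    """Differences between consecutive readings of a cumulative counter.

    If a reading is lower than the previous one (for example, the counter
    was reset by a reboot), the new reading itself is used as the delta.
    """
    result = []
    for (_, prev), (_, curr) in zip(samples, samples[1:]):
        result.append(curr - prev if curr >= prev else curr)
    return result


def per_second_rates(samples: Sequence[Sample]) -> List[float]:
    """Each delta divided by the time elapsed between its two readings."""
    elapsed = [t1 - t0 for (t0, _), (t1, _) in zip(samples, samples[1:])]
    return [d / e for d, e in zip(deltas(samples), elapsed)]


def summarize(values: Sequence[float]) -> Dict[str, float]:
    """Window aggregates analogous to the [Delta Avg/Max/Min/Sum] variants."""
    if not values:
        raise ValueError("need at least one value")
    total = sum(values)
    return {"avg": total / len(values), "max": max(values), "min": min(values), "sum": total}


if __name__ == "__main__":
    # Cumulative "bytes read" readings taken 60 seconds apart.
    readings = [(0, 1_000), (60, 4_000), (120, 4_600)]
    print(deltas(readings))             # [3000, 600]
    print(per_second_rates(readings))   # [50.0, 10.0]
    print(summarize(deltas(readings)))  # {'avg': 1800.0, 'max': 3000, 'min': 600, 'sum': 3600}
```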

1.3 - ServiceWatch Metrics

GPU Server sends metrics to ServiceWatch. Basic (default) monitoring provides data collected at 5-minute intervals. When detailed monitoring is enabled, you can view data collected at 1-minute intervals.

- Basic and detailed monitoring of the GPU Server provide the same metrics as the Virtual Server, and these metrics are published under the Virtual Server namespace.

- GPU-related metrics are provided through the ServiceWatch Agent. For how to collect metrics with the ServiceWatch Agent, refer to the ServiceWatch Agent guide.

To enable detailed monitoring of a GPU Server, refer to How-to guides > ServiceWatch Enable Detailed Monitoring.
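Several GPU metrics in this guide correspond to values that NVIDIA's `nvidia-smi` tool can report: GPU Usage, GPU Memory Used, GPU Temperature, GPU Power Usage, GPU Power Cap, and Execution Status for nvidia-smi. The sketch below shows one way to read them on a GPU Server for spot checks. It is not how the ServiceWatch Agent collects metrics, and the mapping of query fields to metric names is an assumption. It relies only on the documented `--query-gpu` and `--format=csv,noheader,nounits` options, which report memory values in MiB.

```python
import subprocess
from typing import Dict, List, Optional

# nvidia-smi query fields and the metric each roughly corresponds to (assumed mapping).
FIELDS = [
    "index",
    "utilization.gpu",  # GPU Usage (%)
    "memory.used",      # GPU Memory Used (MiB)
    "memory.total",
    "temperature.gpu",  # GPU Temperature (degrees C)
    "power.draw",       # GPU Power Usage (W)
    "power.limit",      # GPU Power Cap (W)
]


def _number(cell: str) -> Optional[float]:
    """Parse a CSV cell; placeholders such as '[N/A]' become None."""
    try:
        return float(cell)
    except ValueError:
        return None


def parse_gpu_csv(output: str) -> List[Dict[str, Optional[float]]]:
    """Parse csv,noheader,nounits output into one dict per GPU."""
    rows = []
    for line in output.strip().splitlines():
        cells = [cell.strip() for cell in line.split(",")]
        row = dict(zip(FIELDS, (_number(cell) for cell in cells)))
        used, total = row.get("memory.used"), row.get("memory.total")
        # Derived value comparable to "GPU Memory Usage" (%).
        row["memory.usage_pct"] = 100.0 * used / total if used is not None and total else None
        rows.append(row)
    return rows


def nvidia_smi_ok(timeout_s: float = 10.0) -> bool:
    """Rough analogue of "Execution Status for nvidia-smi": did the command succeed?"""
    try:
        result = subprocess.run(["nvidia-smi"], capture_output=True, timeout=timeout_s)
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0


def query_gpus(timeout_s: float = 10.0) -> List[Dict[str, Optional[float]]]:
    """Run nvidia-smi (must be on PATH) and return one parsed row per GPU."""
    cmd = ["nvidia-smi", "--query-gpu=" + ",".join(FIELDS), "--format=csv,noheader,nounits"]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True, timeout=timeout_s)
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    if nvidia_smi_ok():
        gpus = query_gpus()
        print("GPU Count:", len(gpus))
        for gpu in gpus:
            print(gpu)
    else:
        print("nvidia-smi is not available or failed")
```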

Basic Metrics

The following are the basic metrics for the Virtual Server namespace.

| Performance Item | Detailed Description | Unit | Meaningful Statistics |
|---|---|---|---|
| Instance State | Instance state display | - | - |
| CPU Usage | CPU usage | % | |
| Disk Read Bytes | Bytes read from the block device | Bytes | |
| Disk Read Requests | Number of read requests on the block device | Count | |
| Disk Write Bytes | Bytes written to the block device | Bytes | |
| Disk Write Requests | Number of write requests on the block device | Count | |
| Network In Bytes | Bytes received on the network interface | Bytes | |
| Network In Dropped | Number of received packets dropped on the network interface | Count | |
| Network In Packets | Number of packets received on the network interface | Count | |
| Network Out Bytes | Bytes transmitted from the network interface | Bytes | |
| Network Out Dropped | Number of transmitted packets dropped on the network interface | Count | |
| Network Out Packets | Number of packets transmitted from the network interface | Count | |

2 - How-to guides

You can create a GPU Server through the Samsung Cloud Platform Console by entering the required information and selecting detailed options.

Create GPU Server

You can create and use GPU Server services from the Samsung Cloud Platform Console.

To create a GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. The GPU Server Service Home page opens.

- On the Service Home page, click the Create GPU Server button. The Create GPU Server page opens.

- On the Create GPU Server page, enter the information required to create the service and select detailed options.

- In the Image and version selection area, select the required information.

- Image (Required): Select the provided image type
  - Ubuntu
- Image version (Required): Select the version of the chosen image
  - Provides a list of the available server image versions

Table. GPU Server image and version selection input items

- In the Service Information Input area, enter or select the required information.

- Server count (Required): Number of GPU Servers to create at the same time
  - Only numbers can be entered; enter a value between 1 and 100
- Service Type > Server Type (Required): GPU Server server type
  - Indicates the server specifications by GPU type; select a server that includes 1, 2, 4, or 8 GPUs
  - For detailed information about the server types provided by GPU Server, refer to GPU Server Server Type
- Service Type > Planned Compute (Optional): Status of resources with Planned Compute set
  - In Use: Number of resources with Planned Compute set that are currently in use
  - Configured: Number of resources with Planned Compute set
  - Coverage Preview: Amount applied per resource by Planned Compute
  - Planned Compute Service Application: Go to the Planned Compute service application page
  - For more details, refer to Apply for Planned Compute
- Block Storage (Required): Set the Block Storage used by the GPU Server according to its purpose
  - Basic: Area where the OS is installed and used
    - Capacity is entered in Units (the minimum capacity varies depending on the OS image type)
      - RHEL: values between 3 and 1,536 can be entered
      - Ubuntu: values between 3 and 1,536 can be entered
    - SSD: High-performance general volume
    - HDD: General volume
    - SSD/HDD_KMS: Additional encrypted volume using a Samsung Cloud Platform KMS (Key Management System) encryption key
      - Encryption can only be applied at initial creation (it cannot be changed after creation)
      - Performance degradation may occur when using the SSD_KMS disk type
  - Additional: Used when additional user space is needed outside the OS area
    - After selecting Use, enter the storage type and capacity
    - To add storage, click the + button (up to 25 can be added); to delete it, click the x button
    - Capacity is entered in Units, with values between 1 and 1,536
      - Since 1 Unit equals 8 GB, volumes of 8 to 12,288 GB can be created
    - SSD: High-performance general volume
    - HDD: General volume
    - SSD/HDD_KMS: Additional encrypted volume using a Samsung Cloud Platform KMS (Key Management System) encryption key
      - Encryption can only be applied at initial creation (it cannot be changed after creation)
      - Performance degradation may occur when using the SSD_KMS disk type
  - For detailed information on each Block Storage type, refer to Create Block Storage
  - Delete on termination: If Delete on termination is set to Enabled, the volume is terminated together with the server
    - Volumes with snapshots are not deleted even if Delete on termination is set to Enabled
    - A multi-attach volume is deleted only when the server being terminated is the last server still attached to the volume

Table. GPU Server Service Configuration Items

- In the Required Information Input area, enter or select the required information.

- Server Name (Required): Name that distinguishes the server when the selected server count is 1
  - The hostname is set to the entered server name
  - Use English letters, numbers, spaces, and special characters (-, _) within 63 characters
- Server name Prefix (Required): Prefix that distinguishes each server when the selected server count is 2 or more
  - Names are generated automatically in the format user input (prefix) + '-#'
  - Enter within 59 characters using English letters, numbers, spaces, and special characters (-, _)
- Network Settings > Create New Network Port (Required): Set the network where the GPU Server will be installed
  - Select a pre-created VPC
  - General Subnet: Select a pre-created General Subnet
    - The IP can be assigned automatically or entered by the user; if you choose user input, enter the IP directly
  - NAT: Available only when there is one server and the VPC has an Internet Gateway attached; selecting Use lets you choose a NAT IP
  - NAT IP: Select a NAT IP
    - If there is no NAT IP to select, click the Create New button to create a Public IP
    - Click the Refresh button to view and select the created Public IP
    - Creating a Public IP incurs charges according to the Public IP pricing policy
  - Local Subnet (Optional): Select Use to use a Local Subnet
    - Not required to create the service
    - A pre-created Local Subnet must be selected
    - The IP can be assigned automatically or entered by the user; if you choose user input, enter the IP directly
  - Security Group: Setting required to access the server
    - Select: Choose a pre-created Security Group
    - Create New: If no applicable Security Group exists, create one separately in the Security Group service
    - Up to 5 can be selected
    - If no Security Group is set, all access is blocked by default; a Security Group must be set to allow the required access
- Network Settings > Existing Network Port Specification (Required): Set the network where the GPU Server will be installed
  - Select a pre-created VPC
  - General Subnet: Select a pre-created General Subnet and Port
  - NAT: Available only when there is one server and the VPC has an Internet Gateway attached; selecting Use lets you choose a NAT IP
  - NAT IP: Select a NAT IP
    - If there is no NAT IP to select, click the Create New button to create a Public IP
    - Click the Refresh button to view and select the created Public IP
  - Local Subnet (Optional): Select Use to use a Local Subnet
    - Select a pre-created Local Subnet and Port
- Keypair (Required): User authentication method used when connecting to the server
  - Create New: If a new Keypair is needed, create one
    - For how to create a new Keypair, refer to Create Keypair
  - Default login accounts by OS
    - RHEL: cloud-user
    - Ubuntu: ubuntu

Table. GPU Server required information input items

- In the Additional Information Input area, enter or select the required information.

- Lock (Optional): Set whether to use Lock
  - Using Lock prevents actions such as server termination, start, and stop from being executed, which helps prevent malfunctions caused by mistakes
- Init script (Optional): Script executed when the server starts
  - Depending on the image type, the Init script must be written as a Batch script for Windows, or as a Shell script or cloud-init for Linux
  - Up to 45,000 bytes can be entered
- Tag (Optional): Add tags
  - Up to 50 can be added per resource
  - After clicking the Add Tag button, enter or select the Key and Value

Table. GPU Server Additional Information Input Items

- In the Summary panel, check the detailed information and the estimated billing amount, then click the Complete button.

- When creation is complete, check the created resources on the GPU Server list page.
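The input limits in the creation steps above can be checked before you submit a request, for example in a provisioning script. The sketch below is a hypothetical client-side pre-check. It encodes only the limits stated in this guide:

- server count 1 to 100
- server names up to 63 characters, or prefixes up to 59
- Block Storage Units, with 1 Unit = 8 GB
- up to 25 additional volumes
- up to 5 Security Groups
- Init scripts up to 45,000 bytes
- up to 50 tags

The Console remains the authority. In particular, the OS volume minimum varies by image. The `<prefix>-1`, `<prefix>-2` numbering produced by `server_names` is an assumption about the '-#' format.

```python
import re
from typing import List, Sequence

GB_PER_UNIT = 8  # "1 Unit equals 8 GB"
# English letters, digits, spaces, '-' and '_', as stated for server names and prefixes.
NAME_PATTERN = re.compile(r"[A-Za-z0-9 _-]+")


def units_to_gb(units: int) -> int:
    """Convert Block Storage Units to GB."""
    return units * GB_PER_UNIT


def validate_request(
    server_count: int,
    name: str,
    os_volume_units: int,
    additional_volume_units: Sequence[int] = (),
    security_groups: int = 1,
    init_script: str = "",
    tags: int = 0,
    os_min_units: int = 3,  # RHEL and Ubuntu minimum; other images may differ
) -> List[str]:
    """Return human-readable problems; an empty list means no stated limit was violated."""
    errors = []
    if not 1 <= server_count <= 100:
        errors.append("server count must be between 1 and 100")
    # A single server takes a Server Name; two or more take a Server name Prefix.
    max_len = 63 if server_count == 1 else 59
    if not name or len(name) > max_len or not NAME_PATTERN.fullmatch(name):
        errors.append(f"name must be 1-{max_len} letters, digits, spaces, '-' or '_'")
    if not os_min_units <= os_volume_units <= 1536:
        errors.append(f"Basic volume must be {os_min_units}-1,536 Units")
    if len(additional_volume_units) > 25:
        errors.append("at most 25 additional volumes can be added")
    for units in additional_volume_units:
        if not 1 <= units <= 1536:
            errors.append(f"additional volume of {units} Units is outside 1-1,536")
    if security_groups > 5:
        errors.append("at most 5 Security Groups can be selected")
    # The byte limit is measured on the UTF-8 encoding here (assumption).
    if len(init_script.encode("utf-8")) > 45_000:
        errors.append("Init script exceeds 45,000 bytes")
    if tags > 50:
        errors.append("at most 50 tags per resource")
    return errors


def server_names(prefix: str, count: int) -> List[str]:
    """Hypothetical expansion of the '<prefix>-#' format, numbering from 1."""
    return [f"{prefix}-{i}" for i in range(1, count + 1)]


if __name__ == "__main__":
    print(validate_request(4, "llm-train", 13, [128, 256]))  # []
    print(units_to_gb(1536))                                   # 12288
    print(server_names("llm-train", 2))                        # ['llm-train-1', 'llm-train-2']
```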

Check GPU Server detailed information

In the GPU Server service, you can view the full resource list and each resource's detailed information, and edit it as needed. The GPU Server Details page consists of the Details, Tags, and Job History tabs.

To view detailed information about the GPU Server service, follow the steps below.

- Click the All Services > Compute > GPU Server menu. The GPU Server Service Home page opens.

- On the Service Home page, click the GPU Server menu. The GPU Server List page opens.

- On the GPU Server List page, click the resource whose detailed information you want to view. The GPU Server Details page opens.

- The GPU Server Details page displays status information and additional features, and consists of the Details, Tags, and Job History tabs.

- For details on the additional features, refer to GPU Server management add-on features.

- GPU Server status: Status of the GPU Server created by the user
  - Build: The Build command has been delivered
  - Building: Build in progress
  - Networking: Server creation in progress
  - Scheduling: Server creation in progress
  - Block_Device_Mapping: Block Storage is being connected during server creation
  - Spawning: The server creation process is in progress
  - Active: Available for use
  - Powering_off: A stop has been requested
  - Deleting: Server deletion in progress
  - Reboot_Started: Reboot in progress
  - Error: Error state
  - Migrating: The server is migrating to another host
  - Reboot: The Reboot command has been delivered
  - Rebooting: Restart in progress
  - Rebuild: The Rebuild command has been delivered
  - Rebuilding: A Rebuild has been requested
  - Rebuild_Spawning: The Rebuild process is in progress
  - Resize: The Resize command has been delivered
  - Resizing: Resize in progress
  - Resize_Prep: A server type modification has been requested
  - Resize_Migrating: The server is moving to another host while Resize is in progress
  - Resize_Migrated: The server has finished moving to another host while Resize is in progress
  - Resize_Finish: Resize completed
  - Revert_Resize: Resize or migration of the server failed for some reason; the target server is cleaned up and the original server restarts
  - Shutoff: Powering off has completed
  - Verify_Resize: After Resize_Prep for a server type modification request, the new server type can be confirmed or reverted
  - Resize_Reverting: A server type revert has been requested
  - Resize_Confirming: The server's Resize request is being confirmed
- Server Control: Buttons to change the server status
  - Start: Start a stopped server
  - Stop: Stop a running server
  - Restart: Restart a running server
- Image Generation: Create a user custom image from the current server's image
- Console Log: View the current server's console log
  - You can check the console log output from the current server. For more details, refer to Check Console Log.
- Dump creation: Create a dump of the current server
  - The dump file is created inside the GPU Server
  - For the detailed dump creation method, refer to Create Dump
- Rebuild: All data and settings of the existing server are deleted, and a new server is set up
  - For details, refer to Perform Rebuild.
- Service Cancellation: Button to cancel the service

Table. GPU Server status information and additional features

Detailed Information

On the GPU Server List page, you can view the detailed information of the selected resource and, if necessary, edit it.

| Category | Detailed description |
|---|---|
| Service | Service name |
| Resource Type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource Name | Resource name |
| Resource ID | Unique resource ID in the service |
| Creator | User who created the service |
| Creation time | Time the service was created |
| Editor | User who edited the service information |
| Modification Date | Date the service information was modified |
| Server name | Server name |
| Server Type | vCPU, memory, and GPU information |
| Image Name | OS image and version of the service |
| Lock | Whether Lock is in use |
| Server Group | Name of the server group the server belongs to |
| Keypair name | Server authentication information set by the user |
| Planned Compute | Status of resources with Planned Compute set |
| Network | Network information of the GPU Server |
| Local Subnet | Local Subnet information of the GPU Server |
| Block Storage | Information on the Block Storage attached to the server |

Tags

On the GPU Server List page, you can view the tag information of the selected resource, and you can add, modify, or delete it.

| Category | Detailed description |
|---|---|
| Tag List | Tag list |

Job History

You can view the job history of the selected resource on the GPU Server List page.

| Category | Detailed description |
|---|---|
| Job History List | Resource change history |

GPU Server Operation Control

To control the operation of created GPU Server resources, use the GPU Server List or GPU Server Details page. You can start a stopped server, and stop or restart a running server.

GPU Server Start

You can start a GPU Server that is in the Shutoff state. To start the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. The GPU Server Service Home page opens.

- On the Service Home page, click the GPU Server menu. The GPU Server List page opens.

- On the GPU Server List page, click the Shutoff server you want to start. The GPU Server Details page opens.

- On the GPU Server List page, you can also start an individual resource by using the More button on the right.

- After selecting multiple servers with the checkboxes, you can control them simultaneously with the Start button at the top.

- On the GPU Server Details page, click the Start button at the top to start the server. Check the changed server status in the Status Display item.

- When the GPU Server start is completed, the server status changes from Shutoff to Active.

- For detailed information about the GPU Server status, please refer to Check GPU Server detailed information.

GPU Server Stop

You can stop a GPU Server that is in the Active state. To stop the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. The GPU Server Service Home page opens.

- On the Service Home page, click the GPU Server menu. The GPU Server List page opens.

- On the GPU Server List page, click the Active server you want to stop. The GPU Server Details page opens.

- On the GPU Server List page, you can also stop an individual resource by using the More button on the right.

- After selecting multiple servers with the checkboxes, you can control them simultaneously with the Stop button at the top.

- On the GPU Server Details page, click the Stop button at the top to stop the server. Check the changed server status in the Status Display item.

- When GPU Server shutdown is completed, the server status changes from Active to Shutoff.

- For detailed information about the GPU Server status, please refer to Check GPU Server detailed information.

GPU Server Restart

You can restart a created GPU Server. To restart the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. The GPU Server Service Home page opens.

- On the Service Home page, click the GPU Server menu. The GPU Server List page opens.

- On the GPU Server List page, click the resource you want to restart. The GPU Server Details page opens.

- On the GPU Server List page, you can also restart an individual resource by using the More button on the right.

- After selecting multiple servers with the checkboxes, you can control them simultaneously with the Restart button at the top.

- On the GPU Server Details page, click the Restart button at the top to restart the server. Check the changed server status in the Status Display item.

- During the restart, the server status changes to Rebooting and finally to Active.

- For detailed information about the GPU Server status, please refer to Check GPU Server detailed information.

GPU Server Resource Management

You can control and manage created GPU Server resources on the GPU Server List page or the GPU Server Details page.

Create Image

You can create a user Custom Image from a running GPU Server.

- Click the Create Image button on the GPU Server List page or the GPU Server Details page to create a user Custom Image.

To create an Image of the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, click the resource to create an Image from. You will be taken to the GPU Server Details page.

- On the GPU Server Details page, click the Create Image button. You will be taken to the Create Image page.

- In the Service Information Input area, enter the required information.

| Category | Required | Detailed description |
|---|---|---|
| Image Name | Required | Name of the image to create. Enter up to 200 characters using English letters, numbers, spaces, and the special characters - and _ |

Table. Image Service Information Input Items

- Check the input information and click the Complete button.

- When creation is complete, check the created resources on the All Services > Compute > GPU Server > Image List page.

- The created Image is stored in the Object Storage used as internal storage, so Object Storage usage fees are charged.

- The file system integrity of an image created from a GPU Server in the Active state cannot be guaranteed, so we recommend shutting down the server before creating an image.
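You can check the Image Name rule from the table above before you submit the form. The rule allows 1 to 200 characters: English letters, numbers, spaces, and the special characters - and _.

The following is a minimal POSIX shell sketch under a few assumptions:

- The function name `is_valid_image_name` is hypothetical and is not part of any Samsung Cloud Platform tool.
- It assumes that "English letters" means the ASCII letters A-Z and a-z.

```sh
#!/bin/sh
# Hypothetical helper: checks an Image Name against the documented rule
# (1-200 characters; English letters, digits, space, '-' and '_').
is_valid_image_name() {
  name=$1
  # Length must be between 1 and 200 characters
  [ -n "$name" ] || return 1
  [ "${#name}" -le 200 ] || return 1
  # grep checks line by line, so reject embedded newlines explicitly
  case $name in
    *"
"*) return 1 ;;
  esac
  # LC_ALL=C keeps the A-Z and a-z ranges limited to ASCII letters
  printf '%s\n' "$name" | LC_ALL=C grep -Eq '^[A-Za-z0-9 _-]+$'
}

# Example:
# is_valid_image_name "gpu-image_01 v2" && echo valid
```

The console remains the authority on what it accepts. This check only mirrors the documented rule.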

Enable ServiceWatch Detailed Monitoring

By default, the GPU Server is linked with ServiceWatch basic monitoring and the Virtual Server namespace. You can enable detailed monitoring as needed to identify and address operational issues more quickly. For more information about ServiceWatch, see the ServiceWatch Overview (/userguide/management/service_watch/overview/).

To enable detailed monitoring of ServiceWatch on the GPU Server, follow these steps.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, click the resource for which to enable ServiceWatch detailed monitoring. You will be taken to the GPU Server Details page.

- On the GPU Server Details page, click the Edit button for ServiceWatch detailed monitoring. The ServiceWatch Detailed Monitoring Edit popup opens.

- In the ServiceWatch Detailed Monitoring Edit popup, select Enable, check the guidance text, and click the Confirm button.

- On the GPU Server Details page, check the ServiceWatch detailed monitoring items.

Disable ServiceWatch Detailed Monitoring

To disable ServiceWatch detailed monitoring on the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, click the resource for which to disable ServiceWatch detailed monitoring. You will be taken to the GPU Server Details page.

- On the GPU Server Details page, click the Edit button for ServiceWatch detailed monitoring. The ServiceWatch Detailed Monitoring Edit popup opens.

- In the ServiceWatch Detailed Monitoring Edit popup, deselect Enable, check the guidance text, and click the Confirm button.

- On the GPU Server Details page, check the ServiceWatch detailed monitoring items.

Additional GPU Server Management Features

To manage a GPU Server, you can view its console log, create a dump, and perform a Rebuild. Follow the steps below for each task.

Check Console Log

You can view the current console log of the GPU Server.

To check the console log of the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, click the resource whose console log you want to view. You will be taken to the GPU Server Details page.

- On the GPU Server Details page, click the Console Log button. The Console Log popup window opens.

- Check the console log displayed in the Console Log popup window.

Create Dump

To create a Dump file of the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, click the resource to view its detailed information. You will be taken to the GPU Server Details page.

- On the GPU Server Details page, click the Create Dump button.

- The dump file is created inside the GPU Server.

Perform Rebuild

You can delete all data and settings of the existing GPU Server and rebuild it as a new server.

To perform a Rebuild of the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, click the resource to rebuild. You will be taken to the GPU Server Details page.

- On the GPU Server Details page, click the Rebuild button.

- While the GPU Server is being rebuilt, its status changes to Rebuilding. When the Rebuild is complete, the server returns to the status it had before the Rebuild.

- For detailed information about the GPU Server status, please refer to Check GPU Server detailed information.

Cancel GPU Server

Canceling unused GPU Servers reduces operating costs. However, canceling a GPU Server may immediately stop any service running on it, so fully consider the impact of a service interruption before proceeding with the cancellation.

To cancel the GPU Server, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the GPU Server menu. You will be taken to the GPU Server List page.

- On the GPU Server List page, select the resource to cancel and click the Cancel Service button.

- Whether connected storage is deleted depends on the Delete on termination setting. Refer to Termination Constraints.

- When termination is completed, check on the GPU Server List page whether the resource has been terminated.

Termination Constraints

If a GPU Server termination request cannot be processed, a popup window explains why. Check the following cases.

- If File Storage is connected, disconnect the File Storage first.

- If an LB Pool is connected, disconnect the LB Pool first.

- If Lock is set, change the Lock setting to unused and try again.

Whether attached storage is deleted on termination depends on the Delete on termination setting.

- If Delete on termination is not set, the volume is not deleted even when the GPU Server is terminated.

- If Delete on termination is set, the volume is deleted when the GPU Server is terminated.

- Volumes that have a Snapshot are not deleted even if Delete on termination is set.

- A Multi attach volume is deleted only when the server being terminated is the last server still attached to it.
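The volume-deletion rules above combine three conditions. The following POSIX shell sketch models them as a single decision function so that their interaction is easy to see. It rests on these assumptions:

- `volume_deleted_on_termination` and its argument order are hypothetical. This is not a Samsung Cloud Platform API.
- The attached-server count includes the server being terminated.

```sh
#!/bin/sh
# Hypothetical model of the documented Delete on termination rules.
# Usage: volume_deleted_on_termination DELETE_ON_TERMINATION HAS_SNAPSHOT ATTACHED_SERVERS
#   DELETE_ON_TERMINATION, HAS_SNAPSHOT: true or false
#   ATTACHED_SERVERS: servers attached to the volume, including the one being terminated
volume_deleted_on_termination() {
  dot=$1 snapshot=$2 attached=$3
  if [ "$dot" != true ]; then
    echo no    # Delete on termination not set: the volume is kept
  elif [ "$snapshot" = true ]; then
    echo no    # Volumes with a Snapshot are not deleted
  elif [ "$attached" -gt 1 ]; then
    echo no    # Multi attach: other servers are still attached
  else
    echo yes
  fi
}
```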

2.1 - Image Management

In the Samsung Cloud Platform Console, you can enter the required information for the Image service within the GPU Server service, select detailed options, and create the service.

Image Generation

You can create an image of a running GPU Server. To create an image of a GPU Server, please refer to Create Image.

Check Image Detailed Information

In the Image service, you can view and edit the full resource list and detailed information. The Image Details page consists of the Detailed Information, Tag, and Work History tabs.

To view detailed information of the Image service, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the Image menu. You will be taken to the Image List page.

- On the Image List page, click the resource whose detailed information you want to view. You will be taken to the Image Details page.

- The Image Details page displays status information and additional feature information, and consists of the Detailed Information, Tag, and Work History tabs.

| Category | Detailed description |
|---|---|
| Image Status | Status of the user-created Image: Active (available); Queued (the Image has been uploaded during creation and is waiting to be processed); Importing (the Image has been uploaded during creation and is being processed) |
| Share to another Account | Shares the Image with another Account. The Image's Visibility must be Shared before it can be shared with another Account. |
| Delete Image | Deletes the Image. A deleted Image cannot be recovered. |

Table. GPU Server Image status information and additional features

Detailed Information

On the Image List page, you can view the detailed information of the selected resource and edit it if necessary.

| Category | Detailed description |
|---|---|
| Service | Service name |
| Resource Type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource Name | Image name |
| Resource ID | Image ID |
| Creator | User who created the Image |
| Creation date/time | Date and time the Image was created |
| Editor | User who edited the Image |
| Edit date/time | Date and time the Image was edited |
| Image name | Image name |
| Minimum Disk | Minimum disk capacity of the Image (GB) |
| Minimum RAM | Minimum RAM capacity of the Image (GB) |
| OS type | OS type of the Image |
| OS hash algorithm | OS hash algorithm method |
| Visibility | Access permissions of the Image |
| Protected | Whether deletion of the Image is prohibited |
| Image File URL | URL of the image file uploaded when the Image was created |
| Sharing Status | Status of sharing the Image with other Accounts |

Tag

On the Image list page, you can view the tag information of the selected resource, and you can add, modify, or delete it.

| Category | Detailed description |
|---|---|
| Tag List | Tag list |

Work History

You can view the operation history of the selected resource on the Image list page.

| Category | Detailed description |
|---|---|
| Work History List | Resource change history |

Image Resource Management

This section describes the control and management functions for created Images.

Share to another account

To share the Image with another Account, follow the steps below.

- Log in to the Account that will share the Image and click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the Image menu. You will be taken to the Image List page.

- On the Image List page, click the Image to share. You will be taken to the Image Details page.

- Click the Share to another Account button. You will be taken to the Share image to another Account page.

- The Share to another Account feature shares the Image with another Account. To use it, the Image's Visibility must be Shared.

- On the Share image to another Account page, enter the required information and click the Complete button.

| Category | Required | Detailed description |
|---|---|---|
| Image Name | - | Name of the image to share (cannot be edited) |
| Image ID | - | ID of the image to share (cannot be edited) |
| Shared Account ID | Required | ID of the Account to share with. Enter up to 64 characters using English letters, numbers, and the special character - |

Table. Required input items for sharing images to another Account

- You can check the sharing information in the Sharing Status item on the Image Details page.

- On the initial request, the status is Pending. It changes to Accepted when the receiving Account approves the share.

Receive a Share from Another Account

To receive an Image shared from another Account, follow the steps below.

- Log in to the Account that will receive the share and click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the Image menu. You will be taken to the Image List page.

- On the Image List page, click the Get Image Share button. The Get Image Share popup window opens.

- In the Get Image Share popup window, enter the resource ID of the Image you want to receive and click the Confirm button.

- When image sharing is completed, you can check the shared Image in the Image list.

Delete Image

You can delete unused Images. However, a deleted Image cannot be recovered, so fully consider the impact before proceeding with the deletion.

To delete the Image, follow the steps below.

- Click the All Services > Compute > GPU Server menu. You will be taken to the GPU Server Service Home page.

- On the Service Home page, click the Image menu. You will be taken to the Image List page.

- On the Image List page, select the resource to delete and click the Delete button.

- To delete several Images at once, select their checkboxes on the Image List page and click the Delete button at the top of the resource list.

- When deletion is complete, check on the Image List page whether the resource has been deleted.

2.2 - Using Multi-instance GPU in GPU Server

After creating a GPU Server, you can enable the MIG (Multi-instance GPU) feature on the GPU Server’s VM (Guest OS) and create an instance to use it.

Multi-instance GPU (NVIDIA A100) Overview

The NVIDIA A100, based on the NVIDIA Ampere architecture, supports Multi-instance GPU (MIG). A single GPU can be securely partitioned into up to 7 independent GPU instances, each running CUDA (Compute Unified Device Architecture) applications.

The A100 allocates compute resources in a way optimized for GPU usage while making use of high-bandwidth memory (HBM) and cache. In this way, it provides independent GPU resources to multiple users.

Workloads that do not need the GPU's full compute capacity can run in parallel on separate instances, which maximizes GPU utilization.

Using Multi-instance GPU Feature

To use the Multi-instance GPU feature, you must create a GPU Server service on the Samsung Cloud Platform and then create a VM Instance (GuestOS) with an A100 GPU assigned. After the GPU Server has been created, follow the MIG application and MIG release procedures below.

- The system requirements for using the MIG feature are as follows (refer to NVIDIA - Supported GPUs).

- CUDA Toolkit 11 and NVIDIA driver 450.80.02 or later

- A Linux distribution supported by CUDA Toolkit 11

- When operating a container or Kubernetes service, the additional requirements for using the MIG feature are as follows.

- NVIDIA Container Toolkit (nvidia-docker2) v2.5.0 or later

- NVIDIA K8s Device Plugin v0.7.0 or later

- NVIDIA gpu-feature-discovery v0.2.0 or later

MIG Application and Usage

To activate MIG and create an instance to assign a task, follow these steps.

MIG Activation

Check the GPU status on the VM Instance (GuestOS) before applying MIG.

- Check that MIG mode is Disabled.

```
$ nvidia-smi
Mon Sep 27 08:37:08 2021
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 470.57.02    Driver Version: 470.57.02    CUDA Version: 11.4     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  NVIDIA A100-SXM...  Off  | 00000000:05:00.0 Off |                    0 |
| N/A   32C    P0    59W / 400W |      0MiB / 81251MiB |      0%      Default |
|                               |                      |             Disabled |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+
```

Code block. nvidia-smi command - Check GPU inactive state (1)

```
$ nvidia-smi -L
GPU 0: NVIDIA A100-SXM-80GB (UUID: GPU-c956838f-494a-92b2-6818-56eb28fe25e0)
```

Code block. nvidia-smi command - Check GPU inactive state (2)

In the VM Instance (GuestOS), enable MIG for each GPU and reboot the VM Instance.

```
$ nvidia-smi -i 0 -mig 1
Enabled MIG mode for GPU 00000000:05:00.0
All done.
# reboot
```

Code Block. nvidia-smi Command - MIG Activation
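On a server with several GPUs, you can script the per-GPU check and enable steps. The sketch below relies on these assumptions:

- Your nvidia-smi supports the `mig.mode.current` query field. You can list the available fields with `nvidia-smi --help-query-gpu`.
- The helper name `gpus_without_mig` is hypothetical.

The helper only filters the CSV output. The actual `nvidia-smi -i <index> -mig 1` calls and the reboot remain in your hands.

```sh
#!/bin/sh
# Hypothetical helper: reads lines such as "0, Disabled" produced by
#   nvidia-smi --query-gpu=index,mig.mode.current --format=csv,noheader
# and prints the index of every GPU whose current MIG mode is not Enabled.
gpus_without_mig() {
  awk -F', *' 'NF { sub(/[ \t\r]+$/, "", $2); if ($2 != "Enabled") print $1 }'
}

# Example (run as root on the VM Instance, then reboot):
# for i in $(nvidia-smi --query-gpu=index,mig.mode.current --format=csv,noheader | gpus_without_mig); do
#   nvidia-smi -i "$i" -mig 1
# done
```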

If the following warning appears because a GPU monitoring agent is using the GPU, stop the nvsm and dcgm services before enabling MIG.

Warning: MIG mode is in pending enable state for GPU 00000000:05:00.0: In use by another client. 00000000:05:00.0 is currently being used by one or more other processes (e.g. CUDA application or a monitoring application such as another instance of nvidia-smi).

```
# systemctl stop nvsm
# systemctl stop dcgm
```

- After completing the MIG work, restart the nvsm and dcgm services.
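The agents must be restarted after the MIG work even if a command fails. You can therefore wrap the stop, run, and start sequence in one function. This sketch rests on these assumptions:

- The service names nvsm and dcgm are taken from the steps above and may differ on other images.
- `with_gpu_agents_stopped` is a hypothetical name.
- The `SYSTEMCTL` variable exists only so the sequence can be dry-run, for example with `SYSTEMCTL=echo`.

```sh
#!/bin/sh
# Hypothetical wrapper: stop the GPU monitoring agents, run one command,
# then start the agents again and return the command's exit status.
with_gpu_agents_stopped() {
  ctl=${SYSTEMCTL:-systemctl}   # override with SYSTEMCTL=echo for a dry run
  $ctl stop nvsm
  $ctl stop dcgm
  rc=0
  "$@" || rc=$?
  # Restart the agents even if the wrapped command failed
  $ctl start nvsm
  $ctl start dcgm
  return "$rc"
}

# Example (as root): with_gpu_agents_stopped nvidia-smi -i 0 -mig 1
```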

- Check the GPU status in the VM Instance (GuestOS) after applying MIG.

- MIG mode must be Enabled.

```
$ nvidia-smi
Mon Sep 27 09:44:33 2021
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 470.57.02    Driver Version: 470.57.02    CUDA Version: 11.4     |
|-------------------------------+----------------------+----------------------+
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  NVIDIA A100-SXM...  Off  | 00000000:05:00.0 Off |                   On |
| N/A   32C    P0    59W / 400W |      0MiB / 81251MiB |      0%      Default |
|                               |                      |              Enabled |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| MIG devices:                                                                |
+------------------+----------------------+-----------+-----------------------+
| GPU  GI  CI  MIG |         Memory-Usage |        Vol|         Shared        |
|      ID  ID  Dev |           BAR1-Usage | SM     Unc| CE  ENC  DEC  OFA  JPG|
|                  |                      |        ECC|                       |
|==================+======================+===========+=======================|
|  No MIG devices found                                                       |
+-----------------------------------------------------------------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+
```

Code block. nvidia-smi command - Check GPU activation status (1)

```
$ nvidia-smi -L
GPU 0: NVIDIA A100-SXM-80GB (UUID: GPU-c956838f-494a-92b2-6818-56eb28fe25e0)
```

Code block. nvidia-smi command - Check GPU activation status (2)

GPU Instance Creation

After activating MIG and checking the status, you can create a GPU Instance.

Check the list of MIG GPU instance profiles that can be created.

```
$ nvidia-smi mig -i [GPU ID] -lgip
```

Code block. nvidia-smi command - MIG GPU Instance profile list check

```
$ nvidia-smi mig -i 0 -lgip
+-----------------------------------------------------------------------------+
| GPU instance profiles:                                                      |
| GPU   Name             ID    Instances   Memory     P2P    SM    DEC   ENC  |
|                              Free/Total   GiB              CE    JPEG  OFA  |
|=============================================================================|
|   0  MIG 1g.10gb       19     7/7        9.50       No     14     0     0   |
|                                                             1     0     0   |
+-----------------------------------------------------------------------------+
|   0  MIG 1g.10gb+me    20     1/1        9.50       No     14     0     0   |
|                                                             1     1     1   |
+-----------------------------------------------------------------------------+
|   0  MIG 2g.20gb       14     3/3        19.50      No     28     1     0   |
|                                                             2     0     0   |
+-----------------------------------------------------------------------------+
|   0  MIG 3g.40gb        9     2/2        39.50      No     42     2     0   |
|                                                             3     0     0   |
+-----------------------------------------------------------------------------+
|   0  MIG 4g.40gb        5     1/1        39.50      No     56     2     0   |
|                                                             4     0     0   |
+-----------------------------------------------------------------------------+
|   0  MIG 7g.80gb        0     1/1        79.25      No     98     0     0   |
|                                                             7     1     1   |
+-----------------------------------------------------------------------------+
```

Code Block. MIG GPU Instance Profile List

| Profile Name | Fraction of Memory | Fraction of SMs | Hardware Units | L2 Cache Size | Number of Instances Available |
|---|---|---|---|---|---|
| MIG 1g.10gb | 1/8 | 1/7 | 0 NVDECs / 0 JPEG / 0 OFA | 1/8 | 7 |
| MIG 1g.10gb+me | 1/8 | 1/7 | 1 NVDEC / 1 JPEG / 1 OFA | 1/8 | 1 (a single 1g profile can include media extensions) |
| MIG 2g.20gb | 2/8 | 2/7 | 1 NVDEC / 0 JPEG / 0 OFA | 2/8 | 3 |
| MIG 3g.40gb | 4/8 | 3/7 | 2 NVDECs / 0 JPEG / 0 OFA | 4/8 | 2 |
| MIG 4g.40gb | 4/8 | 4/7 | 2 NVDECs / 0 JPEG / 0 OFA | 4/8 | 1 |
| MIG 7g.80gb | Full | 7/7 | 5 NVDECs / 1 JPEG / 1 OFA | Full | 1 |
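The profile table above gives two quantities for each profile:

- Compute slices: the leading number in the profile name (Ng), out of 7.
- Memory slices: the Fraction of Memory column, out of 8.

Summing them gives a quick first check of whether a planned mix of GPU Instances can fit on one A100 80GB GPU. This is a sketch only. It tests a necessary condition, because NVIDIA's placement rules can still reject a combination that passes. `mig_fits` is a hypothetical name.

```sh
#!/bin/sh
# Hypothetical pre-check for a planned set of MIG GPU Instance profiles on
# one A100 80GB GPU, using the slice counts from the profile table.
# Passing this check is necessary but not sufficient (placement rules apply).
mig_fits() {
  sm=0 mem=0 me=0
  for p in "$@"; do
    case $p in
      1g.10gb)    s=1 m=1 ;;
      1g.10gb+me) s=1 m=1 me=$((me + 1)) ;;
      2g.20gb)    s=2 m=2 ;;
      3g.40gb)    s=3 m=4 ;;
      4g.40gb)    s=4 m=4 ;;
      7g.80gb)    s=7 m=8 ;;
      *) echo "unknown profile: $p" >&2; return 2 ;;
    esac
    sm=$((sm + s))
    mem=$((mem + m))
  done
  # At most 7 compute slices, 8 memory slices, and one media-extension profile
  [ "$sm" -le 7 ] && [ "$mem" -le 8 ] && [ "$me" -le 1 ]
}

# Example: mig_fits 4g.40gb 3g.40gb && echo "may fit"
```

For instance, 4g.40gb plus 3g.40gb uses 7 compute and 8 memory slices and passes. Two 4g.40gb instances would need 8 compute slices and fail.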

- Create the MIG GPU Instance, then check it.

GPU Instance creation

```
$ nvidia-smi mig -i [GPU ID] -cgi [Profile ID]
```

Code Block. nvidia-smi command - GPU Instance creation

```
$ nvidia-smi mig -i 0 -cgi 0
Successfully created GPU instance ID 0 on GPU 0 using profile MIG 7g.80gb (ID 0)
```

Code block. nvidia-smi command - GPU Instance creation example

GPU Instance check

```
$ nvidia-smi mig -i [GPU ID] -lgi
```

Code Block. nvidia-smi Command - GPU Instance Check

```
$ nvidia-smi mig -i 0 -lgi
+--------------------------------------------------------+
| GPU instances:                                         |
| GPU   Name          Profile  Instance   Placement      |
|                       ID       ID       Start:Size     |
|========================================================|
|   0  MIG 7g.80gb       0         0         0:8         |
+--------------------------------------------------------+
```

Code block. nvidia-smi command - GPU Instance check example
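When you script these steps, you can read the ID of a newly created instance from nvidia-smi's confirmation message instead of typing it by hand. The sketch below relies on these assumptions:

- The message format matches the examples in this section ("Successfully created GPU instance ID ..." and "Successfully created compute instance ID ..."). Other driver versions may print a different format.
- `created_instance_id` is a hypothetical helper.

```sh
#!/bin/sh
# Hypothetical helper: prints the instance ID from nvidia-smi confirmation
# lines such as
#   Successfully created GPU instance ID 0 on GPU 0 using profile MIG 7g.80gb (ID 0)
# Works for both GPU instance (-cgi) and compute instance (-cci) messages.
created_instance_id() {
  sed -nE 's/^Successfully created (GPU|compute) instance ID ([0-9]+).*/\2/p'
}

# Example:
# gi=$(nvidia-smi mig -i 0 -cgi 0 | created_instance_id)
# nvidia-smi mig -i 0 -gi "$gi" -lcip
```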

Compute Instance Creation

If you have created a GPU Instance, you can create a Compute Instance.

Check the MIG Compute Instance profiles that can be created.

```
$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -lcip
```

Code Block. nvidia-smi command - MIG Compute Instance profile check

```
$ nvidia-smi mig -i 0 -gi 0 -lcip
+---------------------------------------------------------------------------------+
| Compute instance profiles:                                                      |
| GPU     GPU       Name             Profile  Instances   Exclusive   Shared      |
|         Instance                   ID       Free/Total  SM      DEC   ENC   OFA   |
|         ID                                                    CE    JPEG        |
|=================================================================================|
|   0     0         MIG 1c.7g.80gb   0        7/7         14      5     0     1     |
|                                                               7     1           |
+---------------------------------------------------------------------------------+
|   0     0         MIG 2c.7g.80gb   1        3/3         28      5     0     1     |
|                                                               7     1           |
+---------------------------------------------------------------------------------+
|   0     0         MIG 3c.7g.80gb   2        2/2         42      5     0     1     |
|                                                               7     1           |
+---------------------------------------------------------------------------------+
|   0     0         MIG 4c.7g.80gb   3        1/1         56      5     0     1     |
|                                                               7     1           |
+---------------------------------------------------------------------------------+
|   0     0         MIG 7g.80gb      4*       1/1         98      5     0     1     |
|                                                               7     1           |
+---------------------------------------------------------------------------------+
```

Code block. MIG Compute Instance profile list example

Create and check the MIG Compute Instance.

- MIG Compute Instance creationColor mode

$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -cci [Compute Profile ID]$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -cci [Compute Profile ID]Code Block. nvidia-smi command - MIG Compute Instance creation Color mode$ nvidia-smi mig -i 0 -gi 0 -cci 4 Successfully created compute instance ID 0 on GPU instance ID 0 using profile MIG 7g.80gb(ID 4)$ nvidia-smi mig -i 0 -gi 0 -cci 4 Successfully created compute instance ID 0 on GPU instance ID 0 using profile MIG 7g.80gb(ID 4)Code block. nvidia-smi command - MIG Compute Instance creation example - MIG Compute Instance checkColor mode

```
$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -lci
```

Code block. nvidia-smi command - MIG Compute Instance check

```
$ nvidia-smi mig -i 0 -gi 0 -lci
+-----------------------------------------------------------------+
| Compute instance profiles:                                      |
| GPU     GPU       Name            Profile  Instances  Placement |
|       Instance                      ID        ID     Start:Size |
|          ID                                                     |
|=================================================================|
|  0       0        MIG 7g.80gb        4         0        0:7     |
+-----------------------------------------------------------------+
```

Code block. MIG Compute Instance confirmation example

```
$ nvidia-smi -L
GPU 0: NVIDIA A100-SXM-80GB (UUID: GPU-c956838f-494a-92b2-6818-56eb28fe25e0)
  MIG 7g.80gb Device 0: (UUID: MIG-53e20040-758b-5ecb-948e-c626d03a9a32)
```

Code block. nvidia-smi command - Check GPU status (1)

```
$ nvidia-smi
Mon Sep 27 09:52:17 2021
+-----------------------------------------------------------------------------+
| NVIDIA-SMI 470.57.02    Driver Version: 470.57.02    CUDA Version: 11.4     |
|-------------------------------+----------------------+----------------------|
| GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
| Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
|                               |                      |               MIG M. |
|===============================+======================+======================|
|   0  NVIDIA A100-SXM...  Off  | 00000000:05:00.0 Off |                   On |
| N/A   32C    P0    49W / 400W |      0MiB / 81251MiB |     N/A      Default |
|                               |                      |              Enabled |
+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+
| MIG devices:                                                                |
+-----------------------------------------------------------------------------+
| GPU  GI  CI  MIG |         Memory-Usage |        Vol|         Shared        |
|      ID  ID  Dev |           BAR1-Usage | SM     Unc| CE  ENC  DEC  OFA  JPG|
|                  |                      |        ECC|                       |
|=============================================================================|
|  0    0   0   0  |      0MiB / 81251MiB | 98      0 |  7   0    5    1    1 |
|                  |      1MiB / 13107... |           |                       |
+-----------------------------------------------------------------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|  No running processes found                                                 |
+-----------------------------------------------------------------------------+
```

Code block. nvidia-smi command - Check GPU status (2)
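When a script needs to target a specific MIG device, the MIG UUIDs printed by nvidia-smi -L can be extracted rather than copied by hand. The following is a minimal sketch, assuming the MIG-xxxxxxxx-xxxx-... UUID format shown in the example above. The function name list_mig_uuids is hypothetical, and drivers that print a different MIG UUID format are not handled.

```bash
#!/usr/bin/env bash
# list_mig_uuids (hypothetical helper): read `nvidia-smi -L` output on stdin
# and print one MIG device UUID per line.
# Assumes the "MIG-<hex>-<hex>-..." UUID format shown in this guide.
# GPU UUIDs ("GPU-...") and profile names ("MIG 7g.80gb") are not matched,
# because the pattern requires "MIG-" followed by hex groups.
list_mig_uuids() {
  grep -oE 'MIG-[0-9a-fA-F]+(-[0-9a-fA-F]+)+'
}
```

For example, nvidia-smi -L | list_mig_uuids prints MIG-53e20040-758b-5ecb-948e-c626d03a9a32 for the output above. With recent CUDA versions, such a UUID can be placed in CUDA_VISIBLE_DEVICES to restrict a process to that MIG device.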

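If the Compute Instance creation step is scripted, the ID of the new Compute Instance can be read back from the success message instead of being looked up with a separate check command. This is a minimal sketch, assuming the message wording shown in the creation example above. That wording may differ between driver versions, so the pattern tolerates extra spaces around the ID. The name parse_created_ci_id is hypothetical.

```bash
#!/usr/bin/env bash
# parse_created_ci_id (hypothetical helper): read the output of
#   nvidia-smi mig -i <GPU ID> -gi <GPU Instance ID> -cci <Compute Profile ID>
# on stdin and print the ID of the compute instance that was created.
# Prints nothing if no success message is found (e.g. creation failed).
parse_created_ci_id() {
  sed -n 's/.*Successfully created compute instance ID *\([0-9][0-9]*\) *on GPU instance ID.*/\1/p' | head -n 1
}
```

Usage: ci_id=$(nvidia-smi mig -i 0 -gi 0 -cci 4 | parse_created_ci_id). An empty ci_id means the creation message was not found and the script should stop.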

Using MIG

- Use the MIG Instance to run a job.

- Work execution example

You can run a job on a MIG device as follows. The --gpus option selects the device in the form [GPU ID]:[MIG ID].

```
$ docker run --gpus '"device=[GPU ID]:[MIG ID]"' --rm nvcr.io/nvidia/cuda nvidia-smi
```

Code block. Work execution example

```
$ docker run --gpus '"device=0:0"' --rm -it --network=host --shm-size=1g --ipc=host -v /root/.ssh/:/root/.ssh [Container Image]

================
== TensorFlow ==
================

NVIDIA Release 21.08-tf1 (build 26012104)
TensorFlow Version 1.15.5

Container image Copyright (c) 2021, NVIDIA CORPORATION. All rights reserved.
...

# Python process execution
root@d622a93c9281:/workspace# python /workspace/nvidia-examples/cnn/resnet.py --num_iter 100
...
PY 3.8.10 (default, Jun 2 2021, 10:49:15)
[GCC 9.4.0]
TF 1.15.5
...
```

Code block. Work result
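When jobs are launched repeatedly, the --gpus device string can be built from the GPU ID and MIG ID in one place. This is a minimal sketch, assuming the [GPU ID]:[MIG ID] device syntax shown above and an image that you supply. The function name run_on_mig and the DOCKER override variable are hypothetical. DOCKER is used only so the command can be printed instead of executed.

```bash
#!/usr/bin/env bash
# run_on_mig (hypothetical helper): run a container on one MIG device.
#   usage: run_on_mig GPU_ID MIG_ID IMAGE [COMMAND...]
# Expands to: docker run --gpus '"device=GPU_ID:MIG_ID"' --rm IMAGE [COMMAND...]
# Set DOCKER=echo to print the arguments instead of starting a container.
run_on_mig() {
  if [ "$#" -lt 3 ]; then
    echo "usage: run_on_mig GPU_ID MIG_ID IMAGE [COMMAND...]" >&2
    return 2
  fi
  local gpu_id=$1 mig_id=$2
  shift 2
  # Both IDs must be non-empty, non-negative integers.
  case "$gpu_id" in ''|*[!0-9]*) echo "run_on_mig: GPU_ID must be an integer" >&2; return 2 ;; esac
  case "$mig_id" in ''|*[!0-9]*) echo "run_on_mig: MIG_ID must be an integer" >&2; return 2 ;; esac
  # The inner double quotes are part of the value passed to --gpus,
  # matching the '"device=0:0"' form used in the examples above.
  "${DOCKER:-docker}" run --gpus "\"device=${gpu_id}:${mig_id}\"" --rm "$@"
}
```

For example, run_on_mig 0 0 nvcr.io/nvidia/cuda nvidia-smi is equivalent to the first command above. Validating the IDs up front gives a clearer error than letting docker reject a malformed device string.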


- Check the GPU usage rate (while the job process is running).

- When the job is running, its process is assigned to the MIG device and the usage rate increases. You can check the GPU usage rate as follows.

```
$ nvidia-smi
```

Code block. nvidia-smi command - Check GPU usage

```
+-----------------------------------------------------------------------------+
| MIG devices:                                                                |
+-----------------------------------------------------------------------------+
| GPU  GI  CI  MIG |         Memory-Usage |        Vol|         Shared        |
|      ID  ID  Dev |           BAR1-Usage | SM     Unc| CE  ENC  DEC  OFA  JPG|
|                  |                      |        ECC|                       |
|=============================================================================|
|  0    0   0   0  |  66562MiB / 81251MiB | 98      0 |  7   0    5    1    1 |
|                  |      5MiB / 13107... |           |                       |
+-----------------------------------------------------------------------------+

+-----------------------------------------------------------------------------+
| Processes:                                                                  |
|  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
|        ID   ID                                                   Usage      |
|=============================================================================|
|    0    0    0      17483      C   python                          66559MiB |
+-----------------------------------------------------------------------------+
```

Code block. Example of checking GPU usage
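To check from a script which process is running on which MIG device, the Processes table printed by nvidia-smi can be parsed. This is a rough sketch, assuming the default table layout shown above (which can change between driver versions) and that the last column is the GPU memory usage. The name mig_processes is hypothetical. For long-lived tooling, the structured output of nvidia-smi -q -x (XML) is likely more robust than scraping the table.

```bash
#!/usr/bin/env bash
# mig_processes (hypothetical helper): read `nvidia-smi` output on stdin and
# print one line per process in the "Processes:" table, for example
#   gpu=0 gi=0 ci=0 pid=17483 mem=66559MiB
# Prints nothing when the table says "No running processes found".
mig_processes() {
  awk '
    /Processes:/                            { in_proc = 1; next }  # section start
    in_proc && /^\|=+\|$/                   { in_rows = 1; next }  # header separator
    in_rows && /^\+-+\+$/                   { exit }               # bottom border
    in_rows && /No running processes found/ { exit }
    in_rows {
      line = $0
      gsub(/^\| *| *\|$/, "", line)       # drop the table borders
      n = split(line, f, / +/)
      # Columns: GPU, GI ID, CI ID, PID, Type, Process name..., GPU Memory
      print "gpu=" f[1], "gi=" f[2], "ci=" f[3], "pid=" f[4], "mem=" f[n]
    }
  '
}
```

Usage: nvidia-smi | mig_processes. Taking the memory value from the last field keeps the output correct even when a process name contains spaces.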


MIG Instance deletion and release

To delete the MIG instances and disable MIG, follow the procedure below: delete the Compute Instances first, then the GPU Instances, and finally disable MIG.

Compute Instance deletion

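- Delete the Compute Instance. If -ci is omitted, all Compute Instances in the specified GPU Instance are deleted. If -ci is given, only that Compute Instance is deleted.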

```
$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -dci
$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -ci [Compute Instance ID] -dci
```

Code block. nvidia-smi command - Compute Instance deletion

```
$ nvidia-smi mig -i 0 -gi 0 -lci
+-----------------------------------------------------------------+
| Compute instance profiles:                                      |
| GPU     GPU       Name            Profile  Instances  Placement |
|       Instance                      ID        ID     Start:Size |
|          ID                                                     |
|=================================================================|
|  0       0        MIG 7g.80gb        4         0        0:7     |
+-----------------------------------------------------------------+
```

Code block. MIG Compute Instance check example

```
$ nvidia-smi mig -i 0 -gi 0 -dci
Successfully destroyed compute instance ID 0 from GPU instance ID 0
```

Code block. Compute Instance deletion example

```
$ nvidia-smi mig -i 0 -gi 0 -lci
No compute instances found: Not found
```

Code block. Compute Instance deletion confirmation
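The deletion and the confirmation above can be combined so that a script stops if any Compute Instances remain. This is a minimal sketch, assuming the "No compute instances found" message shown in the confirmation example. The function delete_compute_instances and the NVSMI override variable are hypothetical. NVSMI lets the nvidia-smi command be replaced, for example by a stub during testing.

```bash
#!/usr/bin/env bash
# delete_compute_instances (hypothetical helper):
#   usage: delete_compute_instances GPU_ID GPU_INSTANCE_ID
# Deletes every Compute Instance in the GPU Instance (-dci without -ci),
# then lists the Compute Instances again and fails if any are left.
# NVSMI may name another command (e.g. a stub) to run instead of nvidia-smi.
delete_compute_instances() {
  local gpu_id=$1 gi_id=$2 smi=${NVSMI:-nvidia-smi}
  "$smi" mig -i "$gpu_id" -gi "$gi_id" -dci || return 1
  # After a successful deletion, -lci is expected to report
  # "No compute instances found" (stderr is merged in case it is printed there).
  if "$smi" mig -i "$gpu_id" -gi "$gi_id" -lci 2>&1 | grep -q 'No compute instances found'; then
    return 0
  fi
  echo "Compute Instances still exist on GPU $gpu_id, GPU Instance $gi_id" >&2
  return 1
}
```

Usage: delete_compute_instances 0 0. This repeats the deletion and confirmation examples above as a single step that returns a non-zero status on failure.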

GPU Instance deletion

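- Delete the GPU Instance after the Compute Instances in it have been deleted. If -gi is omitted, all GPU Instances on the specified GPU are deleted.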

```
$ nvidia-smi mig -i [GPU ID] -dgi
$ nvidia-smi mig -i [GPU ID] -gi [GPU Instance ID] -dgi
```

Code block. nvidia-smi command - GPU Instance deletion

```
$ nvidia-smi mig -i 0 -lgi
+-------------------------------------------------------+
| GPU instances:                                        |
| GPU   Name             Profile  Instance   Placement  |
|                          ID       ID       Start:Size |
|=======================================================|
|   0  MIG 7g.80gb          0        0          0:8     |
+-------------------------------------------------------+
```

Code block. nvidia-smi command - GPU Instance check example

```
$ nvidia-smi mig -i 0 -dgi
Successfully destroyed GPU instance ID 0 from GPU 0
```

Code block. nvidia-smi command - GPU Instance deletion example

```
$ nvidia-smi mig -i 0 -lgi
No GPU instances found: Not found
```

Code block. nvidia-smi command - GPU Instance deletion confirmation
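The procedure above deletes Compute Instances before GPU Instances, and a teardown script should keep that order. This is a minimal sketch, assuming that -dci and -dgi with only -i target every instance on the selected GPU. The guide shows this form for -dgi; for -dci it is an assumption. The name mig_teardown and the NVSMI override variable are hypothetical.

```bash
#!/usr/bin/env bash
# mig_teardown (hypothetical helper):
#   usage: mig_teardown GPU_ID
# Deletes all Compute Instances on the GPU, then all GPU Instances,
# mirroring the order of the manual procedure. Assumes that -dci without
# -gi/-ci targets every Compute Instance on the selected GPU.
# NVSMI may name another command (e.g. echo) to run instead of nvidia-smi.
mig_teardown() {
  local gpu_id=$1 smi=${NVSMI:-nvidia-smi}
  case "$gpu_id" in
    ''|*[!0-9]*) echo "usage: mig_teardown GPU_ID" >&2; return 2 ;;
  esac
  # Step 1: Compute Instances are deleted first.
  "$smi" mig -i "$gpu_id" -dci || { echo "failed to delete Compute Instances" >&2; return 1; }
  # Step 2: the now-empty GPU Instances can be deleted.
  "$smi" mig -i "$gpu_id" -dgi || { echo "failed to delete GPU Instances" >&2; return 1; }
}
```

Because the function stops at the first failure, a GPU Instance deletion is never attempted while Compute Instances may still exist. If a GPU has no Compute Instances left, nvidia-smi may report an error for -dci, so a real script may need to tolerate that case.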

MIG deactivation

- Disable MIG, then reboot the server.