Built on the highest level of stability in Korea, Compute provides optimal computing resources conveniently and flexibly according to your purpose of use.


Compute

- 1: Virtual Server

- 1.1: Overview

- 1.1.1: Server Type

- 1.1.2: Monitoring Metrics

- 1.1.3: ServiceWatch Metrics

- 1.2: How-to guides

- 1.2.1: Image

- 1.2.2: Keypair

- 1.2.3: Server Group

- 1.2.4: IP Change

- 1.2.5: Linux NTP Setting

- 1.2.6: Setting up RHEL Repo and WKMS

- 1.2.7: ServiceWatch Agent Installation

- 1.3: API Reference

- 1.4: CLI Reference

- 1.5: Release Note

- 2: Virtual Server Auto-Scaling

- 2.1: Overview

- 2.1.1: Monitoring Metrics

- 2.2: How-to guides

- 2.2.1: Launch Configuration

- 2.2.2: Managing Policies

- 2.2.3: Managing Schedules

- 2.2.4: Managing Notifications

- 2.3: API Reference

- 2.4: CLI Reference

- 2.5: Release Note

- 3: GPU Server

- 3.1: Overview

- 3.1.1: Server Type

- 3.1.2: Monitoring Metrics

- 3.1.3: ServiceWatch Metrics

- 3.2: How-to guides

- 3.2.1: Image Management

- 3.2.2: Using Multi-instance GPU in GPU Server

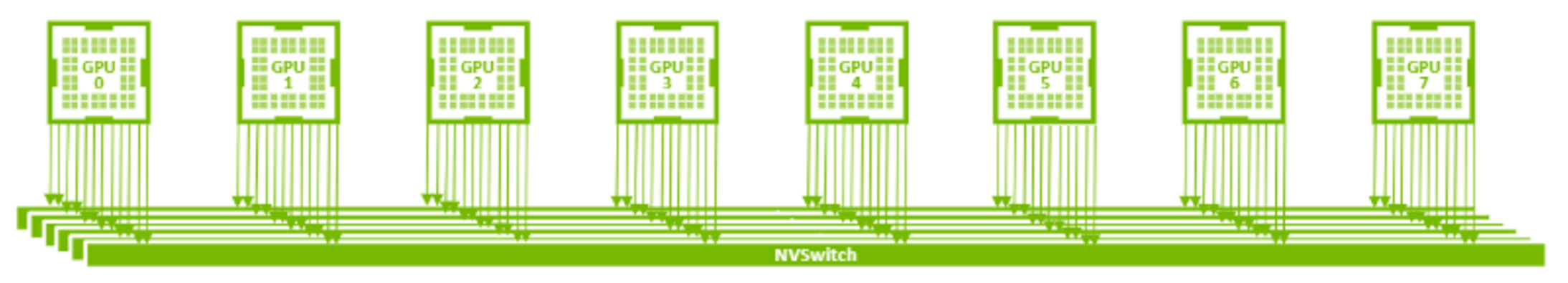

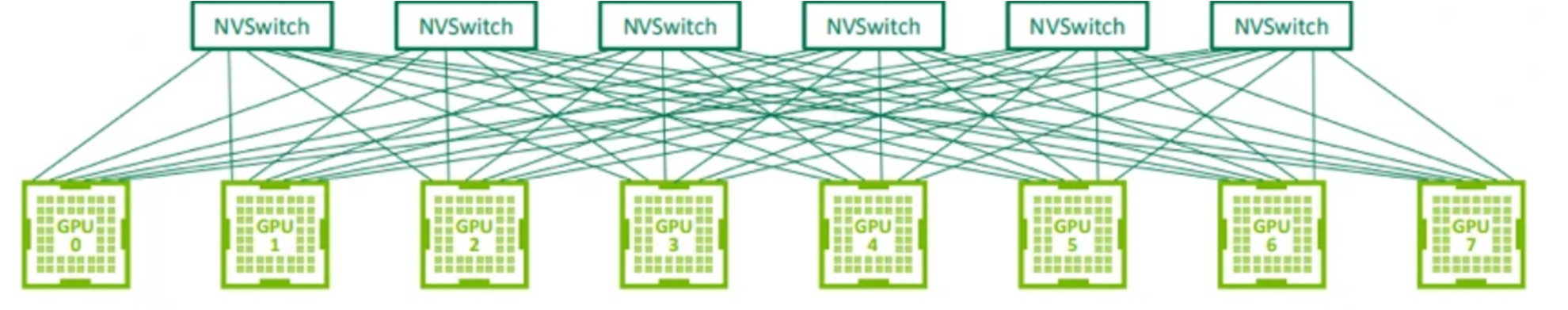

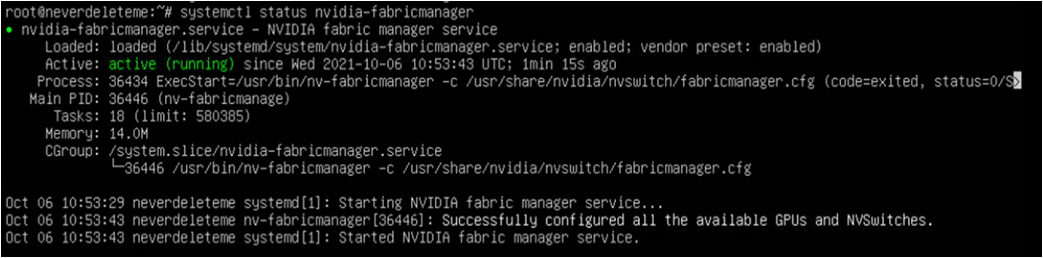

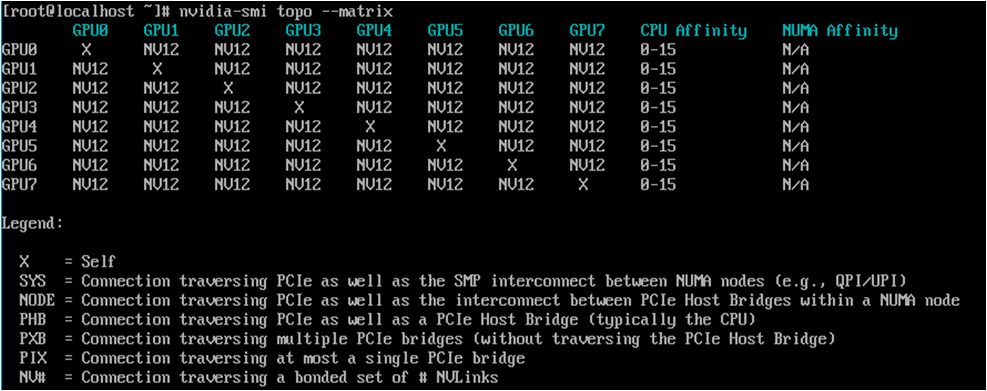

- 3.2.3: Using NVSwitch on GPU Server

- 3.2.4: Keypair Management

- 3.2.5: ServiceWatch Agent Install

- 3.3: API Reference

- 3.4: CLI Reference

- 3.5: Release Note

- 4: Bare Metal Server

- 4.1: Overview

- 4.1.1: Server Type

- 4.1.2: Monitoring Metrics

- 4.2: How-to guides

- 4.2.1: ServiceWatch Agent Install

- 4.3: API Reference

- 4.4: CLI Reference

- 4.5: Release Note

- 5: Multi-node GPU Cluster

- 5.1: Overview

- 5.1.1: Server Type

- 5.1.2: Monitoring Metrics

- 5.2: How-to guides

- 5.2.1: Cluster Fabric Management

- 5.2.2: ServiceWatch Agent Install

- 5.2.3: Multi-node GPU Cluster Service Scope and Inspection Guide

- 5.3: Release Note

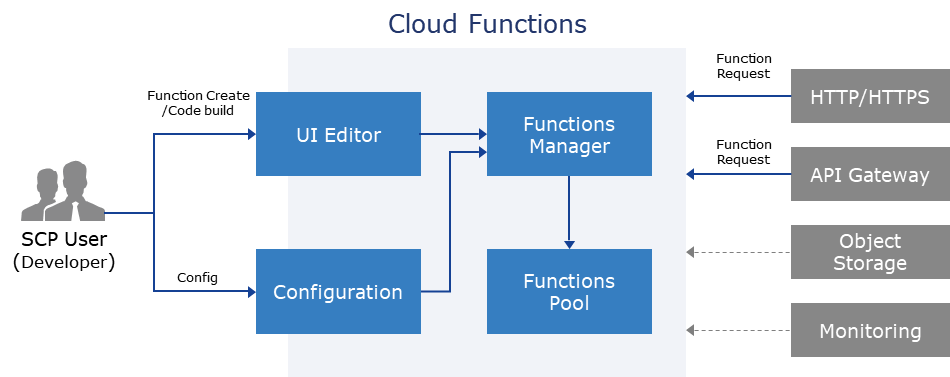

- 6: Cloud Functions

- 6.1: Overview

- 6.2: How-to guides

- 6.2.1: Set Trigger

- 6.2.2: AIOS Connect

- 6.2.3: Blueprint Detailed Guide

- 6.2.4: PrivateLink Service Integration

- 6.3: Release Note

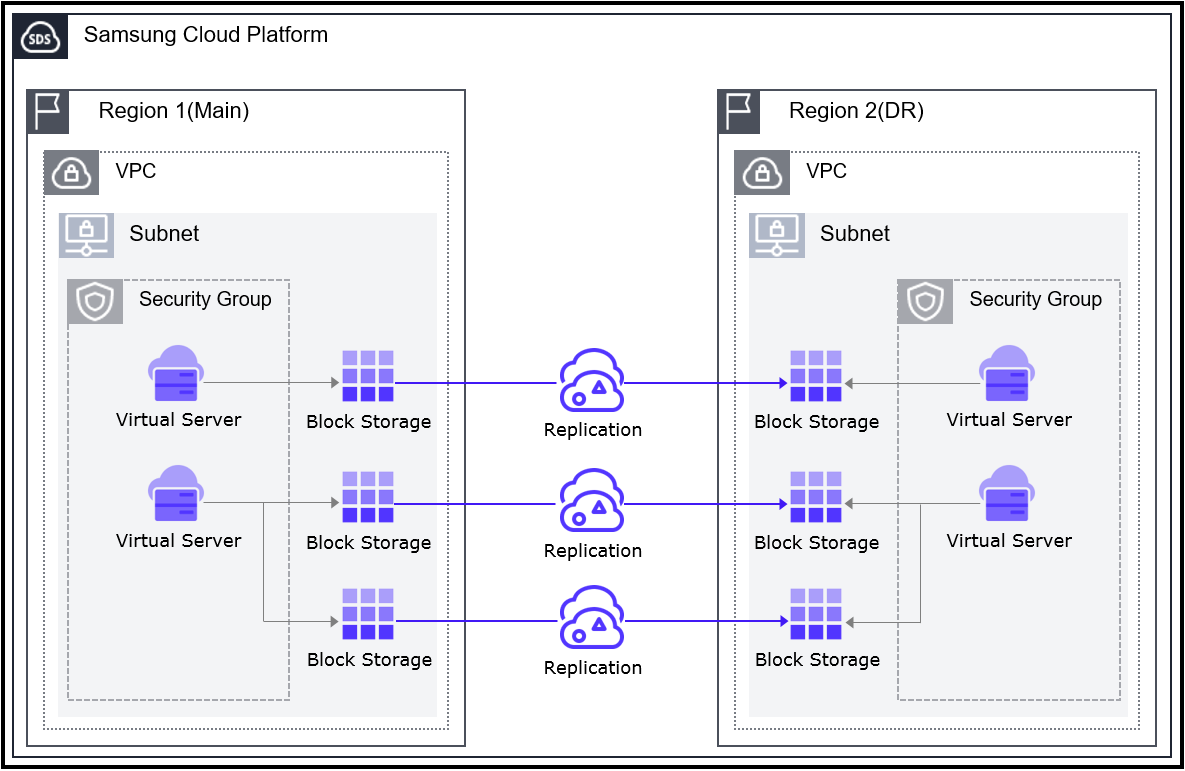

- 7: Virtual Server DR

- 7.1: Overview

- 7.2: Release Note

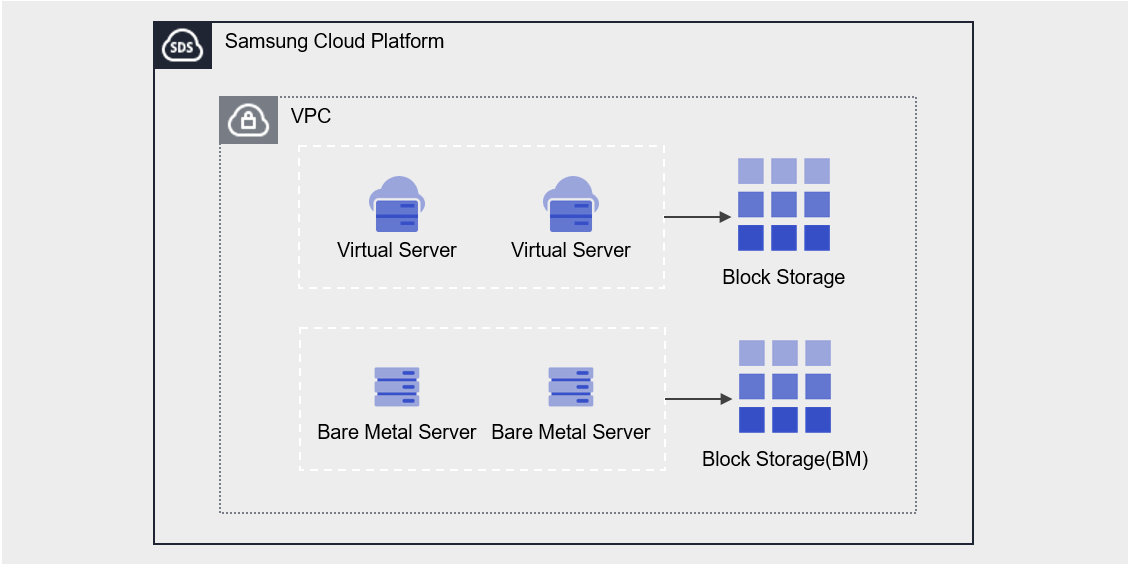

- 8: Block Storage

- 8.1: Overview

- 8.1.1: Monitoring Metrics

- 8.2: How-to guides

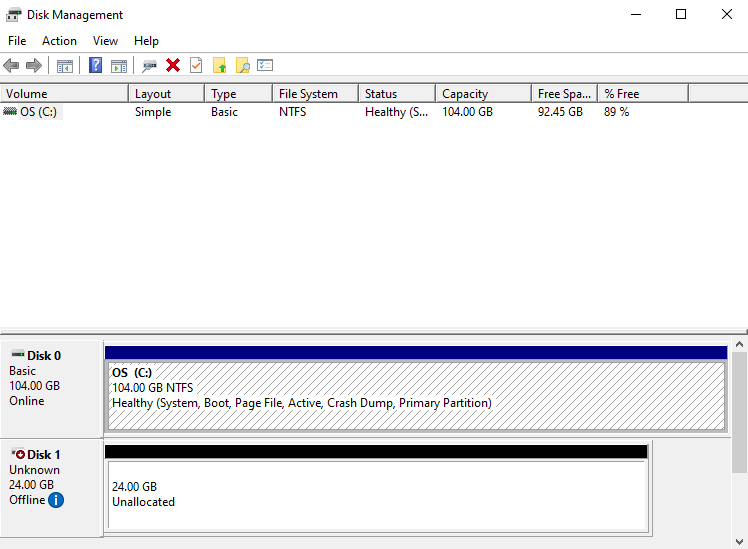

- 8.2.1: Connecting to the Server

- 8.2.2: Using Snapshots

- 8.2.3: Move Volume

- 8.3: API Reference

- 8.4: CLI Reference

- 8.5: Release Note

1 - Virtual Server

1.1 - Overview

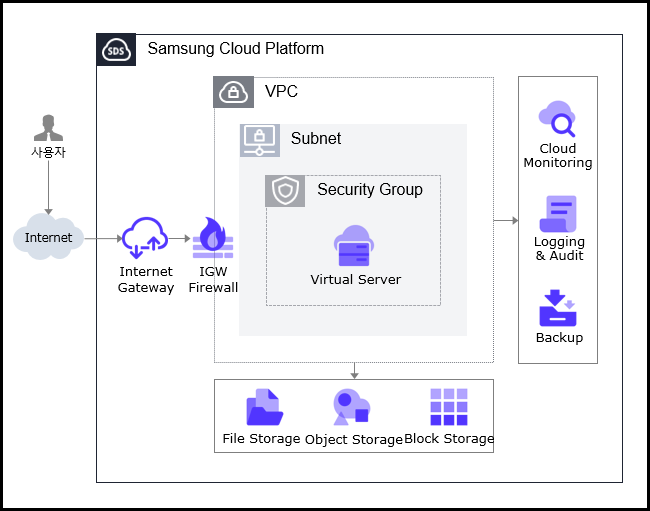

Service Overview

Virtual Server is a virtual server optimized for cloud computing. You can allocate infrastructure resources such as CPU and memory as needed, when needed, without purchasing them individually. In a cloud environment, you can use resources whose performance is optimized for your computing purpose, such as development, testing, or application execution.

Features

Easy and convenient computing environment configuration: Through a web-based console, users can handle everything from Virtual Server provisioning to resource and cost management on a self-service basis. If you need to change the capacity of major resources such as CPU or memory while using Virtual Server, you can scale up or down without operator intervention.

Various server types: Provides virtualized vCore/Memory resources according to predefined server types (1 to 128 vCores).

- General Virtual Server: Provides commonly used Computing Spec (up to 16 vCore, 256 GB)

- Large-capacity Virtual Server: Provided when large-capacity resources beyond the standard Virtual Server Spec are needed

Strong Security Implementation: The Security Group service controls inbound/outbound traffic to and from the external internet or other VPCs (Virtual Private Cloud) to protect the server. Additionally, real-time monitoring enables stable operation of computing resources.

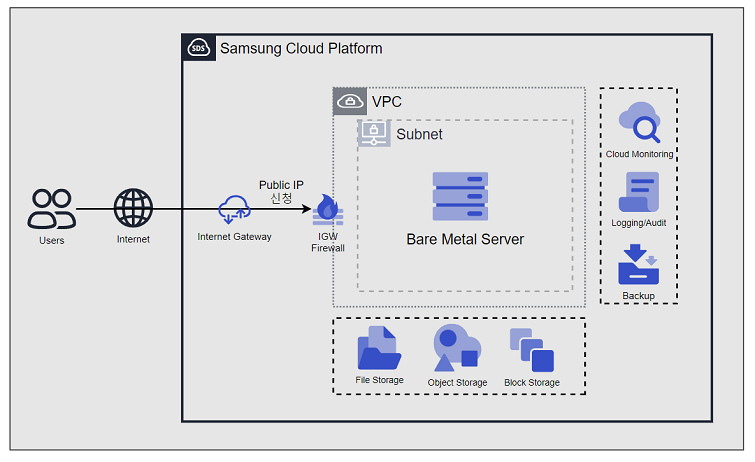

Service Architecture Diagram

Provided Features

Virtual Server provides the following functions.

- Auto Provisioning and Management: Provides functions from Virtual Server provisioning to resource management and cost management through a web-based console. If you need to change the capacity of major resources such as CPU or Memory while using Virtual Server, you can change it immediately using the server type modification feature.

- Standard server type and Image provision: Provides virtualized vCore/Memory resources according to the standard server type, and provides a standard OS Image.

- Storage Connection: Provides additional connected storage besides the OS disk. Block Storage, File Storage, and Object Storage can be added and used.

- Network Connection: You can configure the Virtual Server’s general subnet/IP settings and connect a Public NAT IP. A local subnet connection is provided for inter-server communication. These settings can be modified on the detail page.

- Security Group applied: Through the Security Group service, it controls inbound/outbound traffic communicating with external internet or other VPCs, safely protecting the server.

- Monitoring: You can check monitoring information such as CPU, Memory, Disk that correspond to computing resources through the Cloud Monitoring service.

- Backup and Recovery: You can back up and recover a Virtual Server Image through the Backup service.

- Cost Management: You can create, stop, or terminate servers as needed, and since billing is based on actual usage time, you can check costs according to usage.

- ServiceWatch integration: You can monitor Virtual Server data through the ServiceWatch service.

Components

Virtual Server provides standard server types and standard OS images. Users can select and use them according to the desired service scale.

Image

You can create and manage images. The main features are as follows.

- Image creation: You can create an Image from the configuration of the Virtual Server you are using, and you can create an Image by uploading the user’s Image file to Object Storage.

- Create Shared Image: You can create a Shared Image from an Image whose Visibility is set to Private.

- Share with another Account: You can share the Image with another Account.

- For how to create and use an Image, refer to the How-to guides > Image document.

Keypair

For safer OS access, Virtual Server uses a Keypair instead of ID/password entry. The main features are as follows.

- Keypair creation: Create user credentials to connect to the Virtual Server.

- Get Public Key: You can retrieve the public key by loading a file or entering the public key directly.

- For creating and using Keypair, refer to the How-to guides > Keypair document.

Server Group

Through Server Group settings, you can place Virtual Servers, and the Block Storage added when they are created, close together or distributed across racks and hosts. The main features are as follows.

- Server Group creation: You can set Virtual Servers belonging to the same Server Group to Anti-Affinity (distributed placement), Affinity (proximate placement), Partition (distributed placement of Virtual Server and Block Storage).

- For how to create and use Server Group, refer to the How-to guides > Server Group document.

OS Image Provided Version

The OS Images provided by Virtual Server are as follows.

| OS Image Version | EoS Date |

|---|---|

| Alma Linux 8.10 | 2029-05-31 |

| Alma Linux 9.6 | 2025-11-17 |

| Oracle Linux 8.10 | 2029-07-31 |

| Oracle Linux 9.6 | 2025-11-25 |

| RHEL 8.10 | 2029-05-31 |

| RHEL 9.4 | 2026-04-30 |

| RHEL 9.6 | 2027-05-31 |

| Rocky Linux 8.10 | 2029-05-31 |

| Rocky Linux 9.6 | 2025-11-30 |

| Ubuntu 22.04 | 2027-06-30 |

| Ubuntu 24.04 | 2029-06-30 |

| Windows 2019 | 2029-01-09 |

| Windows 2022 | 2031-10-14 |

- Linux operating systems such as Alma Linux and Rocky Linux provide only even Minor versions, except for the very last release of a Major version. This is a policy to ensure the stability and consistency of the SCP system. We recommend checking the EOS (End of Support) and EOL (End of Life) dates for the operating system, and if necessary, applying new or additional individual packages to maintain a stable environment.
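As a minimal sketch of the EoS check recommended above, the following Python snippet copies the dates from the table and reports which images are already past their EoS date on a given day. The names `EOS_DATES`, `images_past_eos`, and `days_until_eos` are illustrative assumptions, not part of any Samsung Cloud Platform API.

```python
from datetime import date

# EoS dates copied from the "OS Image Provided Version" table above.
EOS_DATES = {
    "Alma Linux 8.10": date(2029, 5, 31),
    "Alma Linux 9.6": date(2025, 11, 17),
    "Oracle Linux 8.10": date(2029, 7, 31),
    "Oracle Linux 9.6": date(2025, 11, 25),
    "RHEL 8.10": date(2029, 5, 31),
    "RHEL 9.4": date(2026, 4, 30),
    "RHEL 9.6": date(2027, 5, 31),
    "Rocky Linux 8.10": date(2029, 5, 31),
    "Rocky Linux 9.6": date(2025, 11, 30),
    "Ubuntu 22.04": date(2027, 6, 30),
    "Ubuntu 24.04": date(2029, 6, 30),
    "Windows 2019": date(2029, 1, 9),
    "Windows 2022": date(2031, 10, 14),
}


def images_past_eos(today: date) -> list[str]:
    """Return the image names whose EoS date is earlier than `today`."""
    return sorted(name for name, eos in EOS_DATES.items() if eos < today)


def days_until_eos(image: str, today: date) -> int:
    """Return the number of days left until EoS (negative if already past)."""
    return (EOS_DATES[image] - today).days


if __name__ == "__main__":
    for name in images_past_eos(date.today()):
        print(f"{name} is past its EoS date")
```

Keeping the dates in one dictionary makes it easy to update the check whenever the table changes.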

Server Type

The server types supported by Virtual Server are as follows. For more details about server types, refer to Virtual Server Server Type.

Standard s1v2m4

| Category | Example | Detailed Description |

|---|---|---|

| Server Type | Standard | Provided server type categories |

| Server Specification | s1 | Provided server type classification and generation |

| Server Specification | v2 | Number of vCores |

| Server Specification | m4 | Memory Capacity |
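The naming scheme above can be decoded mechanically. The following is a minimal Python sketch, assuming every server type name follows the `<family><generation>v<vCores>m<memory GB>` pattern seen in this guide (for example `s1v2m4`); the `ServerType` class and `parse_server_type` function are hypothetical helpers, not part of any official SDK.

```python
import re
from typing import NamedTuple


class ServerType(NamedTuple):
    family: str       # "s" for Standard, "h" for High Capacity
    generation: int   # 1 in "s1", 2 in "s2" or "h2"
    vcores: int       # number after "v"
    memory_gb: int    # number after "m"


# Assumed pattern: letters, generation digits, "v" + vCores, "m" + memory.
_PATTERN = re.compile(r"([a-z]+)(\d+)v(\d+)m(\d+)")


def parse_server_type(name: str) -> ServerType:
    """Split a server type name such as 's1v2m4' into its parts."""
    match = _PATTERN.fullmatch(name)
    if match is None:
        raise ValueError(f"unrecognized server type name: {name!r}")
    family, generation, vcores, memory = match.groups()
    return ServerType(family, int(generation), int(vcores), int(memory))


if __name__ == "__main__":
    print(parse_server_type("s1v2m4"))
```

For example, `parse_server_type("s1v2m4")` yields family `s`, generation 1, 2 vCores, and 4 GB of memory.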

Constraints

- If you create a Virtual Server with Rocky Linux or Oracle Linux, additional settings are required for time synchronization (NTP: Network Time Protocol). If you create it with another image, it is set automatically and no separate configuration is needed.

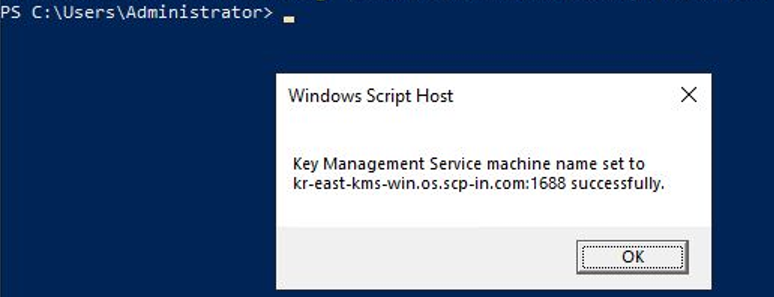

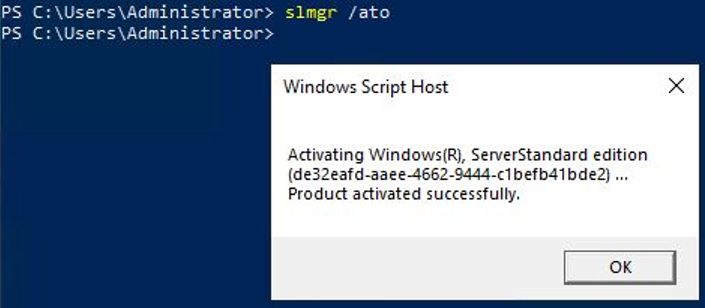

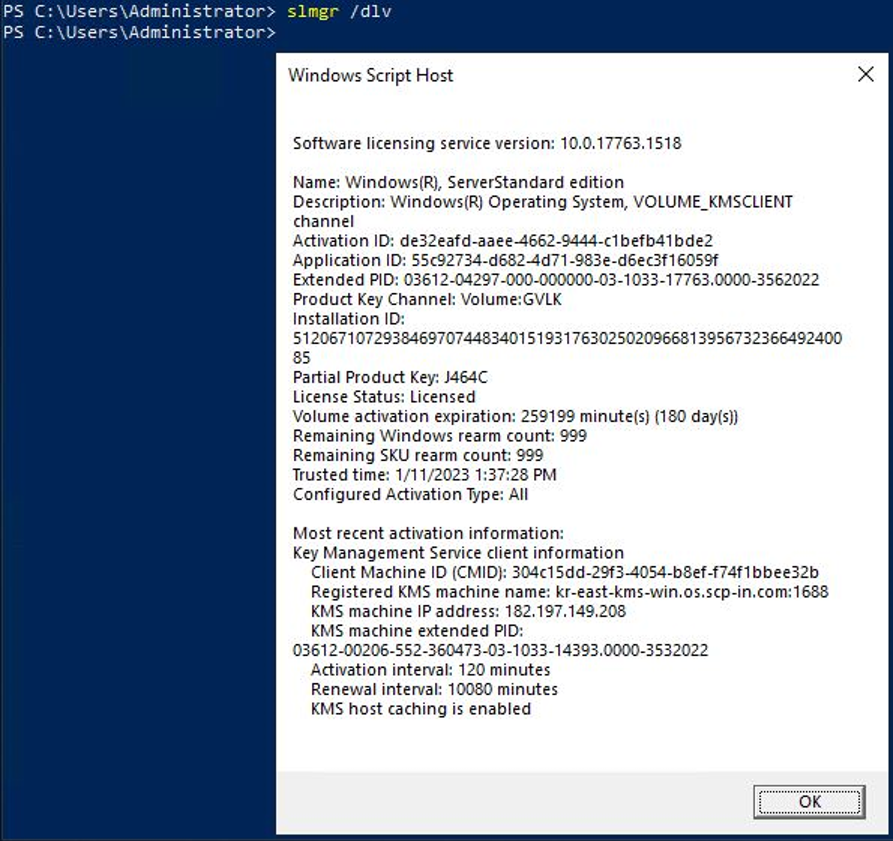

For more details, please refer to Linux NTP Setting.

- If a RHEL or Windows Server instance was created before August 2025, its RHEL Repository or WKMS (Windows Key Management Service) settings need to be modified.

For more details, see Setting up RHEL Repo and WKMS.

Preceding Service

This is a list of services that need to be pre-configured before creating the service. Please refer to the guide provided for each service for details and prepare in advance.

| Service Category | Service | Detailed Description |

|---|---|---|

| Networking | VPC | A service that provides an independent virtual network in a cloud environment |

| Networking | Security Group | Virtual firewall that controls server traffic |

1.1.1 - Server Type

Virtual Server server type

Virtual Server provides server types suited to different purposes. Each server type is a combination of CPU, memory, and network bandwidth. The host server used by the Virtual Server is determined by the server type selected when creating the Virtual Server. Please select a server type according to the specifications of the application you want to run on the Virtual Server.

The server types supported by Virtual Server are as follows.

Standard s1v2m4

| Classification | Example | Detailed Description |

|---|---|---|

| Server Type | Standard | Provided server type distinction |

| Server Specification | s1 | Type of server provided and generation distinction |

| Server Specification | v2 | Number of vCores |

| Server Specification | m4 | Memory Capacity |

s1 Server Type

The s1 server type of Virtual Server is provided with standard specifications (vCPU, Memory) and is suitable for various applications.

- Samsung Cloud Platform v2’s 1st generation: up to 3.3 GHz Intel 3rd generation (Ice Lake) Xeon Gold 6342 Processor

- Supports up to 16 vCPUs and 256 GB of memory

- Up to 12.5 Gbps networking speed

| Division | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| Standard | s1v1m2 | 1 vCore | 2 GB | Up to 10 Gbps |

| Standard | s1v2m4 | 2 vCore | 4 GB | Up to 10 Gbps |

| Standard | s1v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |

| Standard | s1v2m16 | 2 vCore | 16 GB | Up to 10 Gbps |

| Standard | s1v2m24 | 2 vCore | 24 GB | Up to 10 Gbps |

| Standard | s1v2m32 | 2 vCore | 32 GB | Up to 10 Gbps |

| Standard | s1v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |

| Standard | s1v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |

| Standard | s1v4m32 | 4 vCore | 32 GB | Up to 10 Gbps |

| Standard | s1v4m48 | 4 vCore | 48 GB | Up to 10 Gbps |

| Standard | s1v4m64 | 4 vCore | 64 GB | Up to 10 Gbps |

| Standard | s1v6m12 | 6 vCore | 12 GB | Up to 10 Gbps |

| Standard | s1v6m24 | 6 vCore | 24 GB | Up to 10 Gbps |

| Standard | s1v6m48 | 6 vCore | 48 GB | Up to 10 Gbps |

| Standard | s1v6m72 | 6 vCore | 72 GB | Up to 10 Gbps |

| Standard | s1v6m96 | 6 vCore | 96 GB | Up to 10 Gbps |

| Standard | s1v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |

| Standard | s1v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |

| Standard | s1v8m64 | 8 vCore | 64 GB | Up to 10 Gbps |

| Standard | s1v8m96 | 8 vCore | 96 GB | Up to 10 Gbps |

| Standard | s1v8m128 | 8 vCore | 128 GB | Up to 10 Gbps |

| Standard | s1v10m20 | 10 vCore | 20 GB | Up to 10 Gbps |

| Standard | s1v10m40 | 10 vCore | 40 GB | Up to 10 Gbps |

| Standard | s1v10m80 | 10 vCore | 80 GB | Up to 10 Gbps |

| Standard | s1v10m120 | 10 vCore | 120 GB | Up to 10 Gbps |

| Standard | s1v10m160 | 10 vCore | 160 GB | Up to 10 Gbps |

| Standard | s1v12m24 | 12 vCore | 24 GB | Up to 12.5 Gbps |

| Standard | s1v12m48 | 12 vCore | 48 GB | Up to 12.5 Gbps |

| Standard | s1v12m96 | 12 vCore | 96 GB | Up to 12.5 Gbps |

| Standard | s1v12m144 | 12 vCore | 144 GB | Up to 12.5 Gbps |

| Standard | s1v12m192 | 12 vCore | 192 GB | Up to 12.5 Gbps |

| Standard | s1v14m28 | 14 vCore | 28 GB | Up to 12.5 Gbps |

| Standard | s1v14m56 | 14 vCore | 56 GB | Up to 12.5 Gbps |

| Standard | s1v14m112 | 14 vCore | 112 GB | Up to 12.5 Gbps |

| Standard | s1v14m168 | 14 vCore | 168 GB | Up to 12.5 Gbps |

| Standard | s1v14m224 | 14 vCore | 224 GB | Up to 12.5 Gbps |

| Standard | s1v16m32 | 16 vCore | 32 GB | Up to 12.5 Gbps |

| Standard | s1v16m64 | 16 vCore | 64 GB | Up to 12.5 Gbps |

| Standard | s1v16m128 | 16 vCore | 128 GB | Up to 12.5 Gbps |

| Standard | s1v16m192 | 16 vCore | 192 GB | Up to 12.5 Gbps |

| Standard | s1v16m256 | 16 vCore | 256 GB | Up to 12.5 Gbps |

s2 Server Type

Virtual Server s2 server type is provided with standard specifications (vCPU, Memory) and is suitable for various applications.

- Samsung Cloud Platform v2’s 2nd generation: up to 3.2 GHz Intel 4th generation (Sapphire Rapids) Xeon Gold 6448H Processor

- Supports up to 16 vCPUs and 256 GB of memory

- Up to 12.5 Gbps networking speed

| Division | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| Standard | s2v1m2 | 1 vCore | 2 GB | Up to 10 Gbps |

| Standard | s2v2m4 | 2 vCore | 4 GB | Up to 10 Gbps |

| Standard | s2v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |

| Standard | s2v2m16 | 2 vCore | 16 GB | Up to 10 Gbps |

| Standard | s2v2m24 | 2 vCore | 24 GB | Up to 10 Gbps |

| Standard | s2v2m32 | 2 vCore | 32 GB | Up to 10 Gbps |

| Standard | s2v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |

| Standard | s2v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |

| Standard | s2v4m32 | 4 vCore | 32 GB | Up to 10 Gbps |

| Standard | s2v4m48 | 4 vCore | 48 GB | Up to 10 Gbps |

| Standard | s2v4m64 | 4 vCore | 64 GB | Up to 10 Gbps |

| Standard | s2v6m12 | 6 vCore | 12 GB | Up to 10 Gbps |

| Standard | s2v6m24 | 6 vCore | 24 GB | Up to 10 Gbps |

| Standard | s2v6m48 | 6 vCore | 48 GB | Up to 10 Gbps |

| Standard | s2v6m72 | 6 vCore | 72 GB | Up to 10 Gbps |

| Standard | s2v6m96 | 6 vCore | 96 GB | Up to 10 Gbps |

| Standard | s2v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |

| Standard | s2v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |

| Standard | s2v8m64 | 8 vCore | 64 GB | Up to 10 Gbps |

| Standard | s2v8m96 | 8 vCore | 96 GB | Up to 10 Gbps |

| Standard | s2v8m128 | 8 vCore | 128 GB | Up to 10 Gbps |

| Standard | s2v10m20 | 10 vCore | 20 GB | Up to 10 Gbps |

| Standard | s2v10m40 | 10 vCore | 40 GB | Up to 10 Gbps |

| Standard | s2v10m80 | 10 vCore | 80 GB | Up to 10 Gbps |

| Standard | s2v10m120 | 10 vCore | 120 GB | Up to 10 Gbps |

| Standard | s2v10m160 | 10 vCore | 160 GB | Up to 10 Gbps |

| Standard | s2v12m24 | 12 vCore | 24 GB | Up to 12.5 Gbps |

| Standard | s2v12m48 | 12 vCore | 48 GB | Up to 12.5 Gbps |

| Standard | s2v12m96 | 12 vCore | 96 GB | Up to 12.5 Gbps |

| Standard | s2v12m144 | 12 vCore | 144 GB | Up to 12.5 Gbps |

| Standard | s2v12m192 | 12 vCore | 192 GB | Up to 12.5 Gbps |

| Standard | s2v14m28 | 14 vCore | 28 GB | Up to 12.5 Gbps |

| Standard | s2v14m56 | 14 vCore | 56 GB | Up to 12.5 Gbps |

| Standard | s2v14m112 | 14 vCore | 112 GB | Up to 12.5 Gbps |

| Standard | s2v14m168 | 14 vCore | 168 GB | Up to 12.5 Gbps |

| Standard | s2v14m224 | 14 vCore | 224 GB | Up to 12.5 Gbps |

| Standard | s2v16m32 | 16 vCore | 32 GB | Up to 12.5 Gbps |

| Standard | s2v16m64 | 16 vCore | 64 GB | Up to 12.5 Gbps |

| Standard | s2v16m128 | 16 vCore | 128 GB | Up to 12.5 Gbps |

| Standard | s2v16m192 | 16 vCore | 192 GB | Up to 12.5 Gbps |

| Standard | s2v16m256 | 16 vCore | 256 GB | Up to 12.5 Gbps |

h2 Server Type

The h2 server type of Virtual Server is provided with large-capacity server specifications and is suitable for applications for large-scale data processing.

- Samsung Cloud Platform v2’s 2nd generation: up to 3.2 GHz Intel 4th generation (Sapphire Rapids) Xeon Gold 6448H Processor

- Supports up to 128 vCPUs and 1,536 GB of memory

- Up to 25 Gbps networking speed

| Division | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| High Capacity | h2v24m48 | 24 vCore | 48 GB | Up to 25 Gbps |

| High Capacity | h2v24m96 | 24 vCore | 96 GB | Up to 25 Gbps |

| High Capacity | h2v24m192 | 24 vCore | 192 GB | Up to 25 Gbps |

| High Capacity | h2v24m288 | 24 vCore | 288 GB | Up to 25 Gbps |

| High Capacity | h2v32m64 | 32 vCore | 64 GB | Up to 25 Gbps |

| High Capacity | h2v32m128 | 32 vCore | 128 GB | Up to 25 Gbps |

| High Capacity | h2v32m256 | 32 vCore | 256 GB | Up to 25 Gbps |

| High Capacity | h2v32m384 | 32 vCore | 384 GB | Up to 25 Gbps |

| High Capacity | h2v48m96 | 48 vCore | 96 GB | Up to 25 Gbps |

| High Capacity | h2v48m192 | 48 vCore | 192 GB | Up to 25 Gbps |

| High Capacity | h2v48m384 | 48 vCore | 384 GB | Up to 25 Gbps |

| High Capacity | h2v48m576 | 48 vCore | 576 GB | Up to 25 Gbps |

| High Capacity | h2v64m128 | 64 vCore | 128 GB | Up to 25 Gbps |

| High Capacity | h2v64m256 | 64 vCore | 256 GB | Up to 25 Gbps |

| High Capacity | h2v64m512 | 64 vCore | 512 GB | Up to 25 Gbps |

| High Capacity | h2v64m768 | 64 vCore | 768 GB | Up to 25 Gbps |

| High Capacity | h2v72m144 | 72 vCore | 144 GB | Up to 25 Gbps |

| High Capacity | h2v72m288 | 72 vCore | 288 GB | Up to 25 Gbps |

| High Capacity | h2v72m576 | 72 vCore | 576 GB | Up to 25 Gbps |

| High Capacity | h2v72m864 | 72 vCore | 864 GB | Up to 25 Gbps |

| High Capacity | h2v96m192 | 96 vCore | 192 GB | Up to 25 Gbps |

| High Capacity | h2v96m384 | 96 vCore | 384 GB | Up to 25 Gbps |

| High Capacity | h2v96m768 | 96 vCore | 768 GB | Up to 25 Gbps |

| High Capacity | h2v96m1152 | 96 vCore | 1152 GB | Up to 25 Gbps |

| High Capacity | h2v128m256 | 128 vCore | 256 GB | Up to 25 Gbps |

| High Capacity | h2v128m512 | 128 vCore | 512 GB | Up to 25 Gbps |

| High Capacity | h2v128m1024 | 128 vCore | 1024 GB | Up to 25 Gbps |

| High Capacity | h2v128m1536 | 128 vCore | 1536 GB | Up to 25 Gbps |
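The network bandwidth column in the three tables above follows a simple pattern, which the Python sketch below encodes as a lookup. This rule is only inferred from the listed rows (s1/s2 types with up to 10 vCores show up to 10 Gbps, s1/s2 types with 12 to 16 vCores show up to 12.5 Gbps, and all h2 types show up to 25 Gbps); it is an assumption, not a documented formula, and `max_network_gbps` is a hypothetical helper.

```python
def max_network_gbps(family: str, vcores: int) -> float:
    """Return the 'Up to N Gbps' value listed for a server type.

    `family` is the leading letter of the server type name:
    "s" for Standard (s1, s2) and "h" for High Capacity (h2).
    """
    if vcores < 1:
        raise ValueError("vcores must be a positive integer")
    if family == "h":
        # Every h2 row in the table lists up to 25 Gbps.
        return 25.0
    if family == "s":
        # Rows with 1 to 10 vCores list 10 Gbps; 12 to 16 vCores list 12.5 Gbps.
        return 10.0 if vcores <= 10 else 12.5
    raise ValueError(f"unknown server type family: {family!r}")


if __name__ == "__main__":
    print(max_network_gbps("s", 8))    # value listed for s1v8m16 / s2v8m16
    print(max_network_gbps("h", 64))   # value listed for h2v64m128
```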

1.1.2 - Monitoring Metrics

Virtual Server monitoring metrics

The following table shows the monitoring metrics of Virtual Server that can be checked through Cloud Monitoring. For more information on how to use Cloud Monitoring, please refer to the Cloud Monitoring guide.

Basic monitoring metrics are available without installing the Agent; see Table. Virtual Server Monitoring Metrics (Basic). Additional metrics that become available after installing the Agent are listed in Table. Virtual Server Additional Monitoring Metrics (Agent Installation Required).

For Windows OS, memory-related metrics can only be retrieved if the Agent is installed.

| Performance Item | Detailed Description | Unit |

|---|---|---|

| Memory Total [Basic] | Available memory bytes | bytes |

| Memory Used [Basic] | Currently used memory bytes | bytes |

| Memory Swap In [Basic] | Bytes of memory swapped in | bytes |

| Memory Swap Out [Basic] | Bytes of memory swapped out | bytes |

| Memory Free [Basic] | Unused memory bytes | bytes |

| Disk Read Bytes [Basic] | Read bytes | bytes |

| Disk Read Requests [Basic] | Number of Read Requests | cnt |

| Disk Write Bytes [Basic] | Write bytes | bytes |

| Disk Write Requests [Basic] | Number of Write Requests | cnt |

| CPU Usage [Basic] | 1-minute average system CPU usage rate | % |

| Instance State [Basic] | Instance Status | state |

| Network In Bytes [Basic] | Received bytes | bytes |

| Network In Dropped [Basic] | Number of received packets dropped | cnt |

| Network In Packets [Basic] | Received Packet Count | cnt |

| Network Out Bytes [Basic] | Transmission bytes | bytes |

| Network Out Dropped [Basic] | Number of transmitted packets dropped | cnt |

| Network Out Packets [Basic] | Transmission packet count | cnt |

| Performance Item | Detailed Description | Unit |

|---|---|---|

| Core Usage [IO Wait] | The ratio of CPU time spent in waiting state (disk waiting) | % |

| Core Usage [System] | The ratio of CPU time spent in kernel space | % |

| Core Usage [User] | The ratio of CPU time spent in user space | % |

| CPU Cores | The number of CPU cores on the host | cnt |

| CPU Usage [Active] | The percentage of CPU time used, excluding the Idle and IOWait states | % |

| CPU Usage [Idle] | The ratio of CPU time spent in idle state. | % |

| CPU Usage [IO Wait] | the ratio of CPU time spent in a waiting state (disk waiting) | % |

| CPU Usage [System] | The percentage of CPU time used by the kernel | % |

| CPU Usage [User] | The percentage of CPU time used in the user area | % |

| CPU Usage/Core [Active] | The percentage of CPU time used, excluding the Idle and IOWait states | % |

| CPU Usage/Core [Idle] | The ratio of CPU time spent in idle state. | % |

| CPU Usage/Core [IO Wait] | the ratio of CPU time spent in waiting state (disk waiting) | % |

| CPU Usage/Core [System] | The percentage of CPU time used by the kernel | % |

| CPU Usage/Core [User] | The percentage of CPU time used in the user area | % |

| Disk CPU Usage [IO Request] | The ratio of CPU time spent executing input/output requests for the device | % |

| Disk Queue Size [Avg] | The average queue length of requests executed for the device. | num |

| Disk Read Bytes | The number of bytes read from the device per second. | bytes |

| Disk Read Bytes [Delta Avg] | Average of system.diskio.read.bytes_delta for each Disk | bytes |

| Disk Read Bytes [Delta Max] | Individual Disks’ system.diskio.read.bytes_delta maximum | bytes |

| Disk Read Bytes [Delta Min] | Individual Disks’ system.diskio.read.bytes_delta minimum | bytes |

| Disk Read Bytes [Delta Sum] | Individual Disks’ sum of system.diskio.read.bytes_delta | bytes |

| Disk Read Bytes [Delta] | Individual Disk’s system.diskio.read.bytes value delta | bytes |

| Disk Read Bytes [Success] | Total bytes read successfully | bytes |

| Disk Read Requests | Number of read requests for the disk device during 1 second | cnt |

| Disk Read Requests [Delta Avg] | Individual Disks’ average of system.diskio.read.count_delta | cnt |

| Disk Read Requests [Delta Max] | Individual Disks’ system.diskio.read.count_delta maximum | cnt |

| Disk Read Requests [Delta Min] | Individual Disks’ minimum of system.diskio.read.count_delta | cnt |

| Disk Read Requests [Delta Sum] | The sum of system.diskio.read.count_delta of individual Disks | cnt |

| Disk Read Requests [Success Delta] | Individual Disk’s system.diskio.read.count delta | cnt |

| Disk Read Requests [Success] | Successfully completed total read count | cnt |

| Disk Request Size [Avg] | The average size of requests executed for the device (unit: sector) | num |

| Disk Service Time [Avg] | The average service time (in milliseconds) for the input requests executed on the device. | ms |

| Disk Wait Time [Avg] | The average time spent on requests executed for supported devices. | ms |

| Disk Wait Time [Read] | Average disk read wait time | ms |

| Disk Wait Time [Write] | Average disk write wait time | ms |

| Disk Write Bytes [Delta Avg] | Individual Disks’ average of system.diskio.write.bytes_delta | bytes |

| Disk Write Bytes [Delta Max] | Individual Disks’ system.diskio.write.bytes_delta maximum | bytes |

| Disk Write Bytes [Delta Min] | Individual Disks’ system.diskio.write.bytes_delta minimum | bytes |

| Disk Write Bytes [Delta Sum] | Individual Disks’ sum of system.diskio.write.bytes_delta | bytes |

| Disk Write Bytes [Delta] | Individual Disk’s system.diskio.write.bytes value delta | bytes |

| Disk Write Bytes [Success] | Total bytes written successfully | bytes |

| Disk Write Requests | Number of write requests to the disk device for 1 second | cnt |

| Disk Write Requests [Delta Avg] | Individual Disks’ average of system.diskio.write.count_delta | cnt |

| Disk Write Requests [Delta Max] | Individual Disks’ system.diskio.write.count_delta maximum | cnt |

| Disk Write Requests [Delta Min] | Minimum of system.diskio.write.count_delta for each Disk | cnt |

| Disk Write Requests [Delta Sum] | Sum of system.diskio.write.count_delta of individual Disks | cnt |

| Disk Write Requests [Success Delta] | Individual Disk’s system.diskio.write.count Delta | cnt |

| Disk Write Requests [Success] | Total number of writes completed successfully | cnt |

| Disk Writes Bytes | The number of bytes written to the device per second | bytes |

| Filesystem Hang Check | filesystem(local/NFS) hang check(Normal:1, Abnormal:0) | status |

| Filesystem Nodes | The total number of file nodes in the file system. | cnt |

| Filesystem Nodes [Free] | The total number of available file nodes in the file system. | cnt |

| Filesystem Size [Available] | Disk space (bytes) available for use by unprivileged users | bytes |

| Filesystem Size [Free] | Available disk space(bytes) | bytes |

| Filesystem Size [Total] | Total disk space (bytes) | bytes |

| Filesystem Usage | Used disk space percentage | % |

| Filesystem Usage [Avg] | Average of individual filesystem.used.pct | % |

| Filesystem Usage [Inode] | iNode usage rate | % |

| Filesystem Usage [Max] | Individual filesystem used percentage Max | % |

| Filesystem Usage [Min] | Individual minimum of filesystem.used.pct | % |

| Filesystem Usage [Total] | - | % |

| Filesystem Used | Used Disk Space (bytes) | bytes |

| Filesystem Used [Inode] | iNode usage | bytes |

| Memory Free | Total available memory amount (bytes) | bytes |

| Memory Free [Actual] | Actually available Memory(bytes) | bytes |

| Memory Free [Swap] | Available Swap memory | bytes |

| Memory Total | Total Memory | bytes |

| Memory Total [Swap] | Total Swap memory. | bytes |

| Memory Usage | used Memory percentage | % |

| Memory Usage [Actual] | Actual used Memory percentage | % |

| Memory Usage [Cache Swap] | cached swap usage rate | % |

| Memory Usage [Swap] | Used Swap memory percentage | % |

| Memory Used | Used Memory | bytes |

| Memory Used [Actual] | Actually used Memory(bytes) | bytes |

| Memory Used [Swap] | Used Swap memory | bytes |

| Collisions | Network Collision | cnt |

| Network In Bytes | Received byte count | bytes |

| Network In Bytes [Delta Avg] | Individual Networks’ average of system.network.in.bytes_delta | bytes |

| Network In Bytes [Delta Max] | Individual Network’s system.network.in.bytes_delta maximum | bytes |

| Network In Bytes [Delta Min] | Individual Networks’ system.network.in.bytes_delta minimum values | bytes |

| Network In Bytes [Delta Sum] | Individual networks’ sum of system.network.in.bytes_delta | bytes |

| Network In Bytes [Delta] | Received byte count delta | bytes |

| Network In Dropped | Number of packets dropped among incoming packets | cnt |

| Network In Errors | Number of errors during reception | cnt |

| Network In Packets | Received packet count | cnt |

| Network In Packets [Delta Avg] | Individual Networks’ average of system.network.in.packets_delta | cnt |

| Network In Packets [Delta Max] | Individual Network’s system.network.in.packets_delta maximum value | cnt |

| Network In Packets [Delta Min] | Individual Network’s system.network.in.packets_delta minimum value | cnt |

| Network In Packets [Delta Sum] | The sum of system.network.in.packets_delta of individual Networks | cnt |

| Network In Packets [Delta] | Received packet count delta | cnt |

| Network Out Bytes | Sent byte count | bytes |

| Network Out Bytes [Delta Avg] | Individual Networks’ average of system.network.out.bytes_delta | bytes |

| Network Out Bytes [Delta Max] | Individual Networks’ system.network.out.bytes_delta maximum | bytes |

| Network Out Bytes [Delta Min] | Individual Networks’ system.network.out.bytes_delta minimum value | bytes |

| Network Out Bytes [Delta Sum] | Individual Network’s system.network.out.bytes_delta sum | bytes |

| Network Out Bytes [Delta] | Sent byte count delta | bytes |

| Network Out Dropped | number of packets dropped among outgoing packets | cnt |

| Network Out Errors | Number of errors during transmission | cnt |

| Network Out Packets | Transmitted packet count | cnt |

| Network Out Packets [Delta Avg] | Average of system.network.out.packets_delta for individual Networks | cnt |

| Network Out Packets [Delta Max] | Individual Networks’ system.network.out.packets_delta maximum values | cnt |

| Network Out Packets [Delta Min] | Individual Network’s system.network.out.packets_delta minimum value | cnt |

| Network Out Packets [Delta Sum] | The sum of system.network.out.packets_delta of individual Networks | cnt |

| Network Out Packets [Delta] | Sent packet count delta | cnt |

| Open Connections [TCP] | All open TCP connections | cnt |

| Open Connections [UDP] | All open UDP connections | cnt |

| Port Usage | Accessible port usage rate | % |

| SYN Sent Sockets | Number of sockets in SYN_SENT state (when connecting from local to remote) | cnt |

| Kernel PID Max | kernel.pid_max value | count |

| Kernel Thread Max | kernel threads maximum value | count |

| Process CPU Usage | The percentage of CPU time consumed by the process after the last update | % |

| Process CPU Usage/Core | The percentage of CPU time used by the process since the last event | % |

| Process Memory Usage | The percentage of main memory (RAM) occupied by the process | % |

| Process Memory Used | Resident Set size. The amount of memory a process occupies in RAM | bytes |

| Process PID | Process pid | pid |

| Process PPID | Parent process’s pid | pid |

| Processes [Dead] | Number of dead processes | cnt |

| Processes [Idle] | Number of idle processes | cnt |

| Processes [Running] | Number of running processes | count |

| Processes [Sleeping] | Number of sleeping processes | cnt |

| Processes [Stopped] | Number of stopped processes | cnt |

| Processes [Total] | Total number of processes | cnt |

| Processes [Unknown] | Number of processes whose status is unknown or cannot be retrieved | cnt |

| Processes [Zombie] | Number of zombie processes | cnt |

| Running Process Usage | Process Usage Rate | % |

| Running Processes | Number of running processes | count |

| Running Thread Usage | Thread usage rate | % |

| Running Threads | Total number of threads being executed by running processes | cnt |

| Context Switches | Number of context switches (per second) | cnt |

| Load/Core [1 min] | The load for the last 1 minute divided by the number of cores | cnt |

| Load/Core [15 min] | The load for the last 15 minutes divided by the number of cores | cnt |

| Load/Core [5 min] | The load for the last 5 minutes divided by the number of cores | cnt |

| Multipaths [Active] | External storage connection path status = active count | cnt |

| Multipaths [Failed] | External storage connection path status = failed count | cnt |

| Multipaths [Faulty] | External storage connection path status = faulty count | cnt |

| NTP Offset | Measured offset of the last sample (time difference between NTP server and local environment) | num |

| Run Queue Length | Execution Waiting Queue Length | num |

| Uptime | OS operation time(uptime) (milliseconds) | ms |

| Context Switches | Number of CPU context switches (per second) | cnt |

| Disk Read Bytes [Sec] | Number of bytes read from the Windows logical disk in 1 second | cnt |

| Disk Read Time [Avg] | Average data read time (seconds) | sec |

| Disk Transfer Time [Avg] | Average disk wait time (seconds) | sec |

| Disk Write Bytes [Sec] | Number of bytes written to the Windows logical disk in 1 second | cnt |

| Disk Write Time [Avg] | Average data write time (seconds) | sec |

| Pagingfile Usage | Paging file usage rate | % |

| Pool Used [Non Paged] | Nonpaged Pool usage within kernel memory | bytes |

| Pool Used [Paged] | Paged Pool usage within kernel memory | bytes |

| Process [Running] | Number of processes currently running | cnt |

| Threads [Running] | Number of threads currently running | cnt |

| Threads [Waiting] | Number of threads waiting for processor time | cnt |
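The Load/Core metrics listed in this section are the system load average divided by the number of CPU cores. The following is a minimal Python sketch of that calculation, not the monitoring agent's implementation. The function name `load_per_core` is an assumption made for illustration, and `os.getloadavg()` is only available on Unix-like systems.

```python
import os


def load_per_core(load_averages, core_count):
    """Divide each load average by the number of cores."""
    if core_count <= 0:
        raise ValueError("core_count must be positive")
    return tuple(load / core_count for load in load_averages)


if __name__ == "__main__":
    # os.getloadavg() returns the 1-, 5-, and 15-minute load averages (Unix only).
    one, five, fifteen = load_per_core(os.getloadavg(), os.cpu_count() or 1)
    print(f"Load/Core 1 min={one:.2f}, 5 min={five:.2f}, 15 min={fifteen:.2f}")
```

A value near or above 1.0 means that, on average, each core had at least one runnable task during that period.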

1.1.3 - ServiceWatch Metrics

Virtual Server sends metrics to ServiceWatch. The metrics provided by basic monitoring are data collected at 5-minute intervals. If detailed monitoring is enabled, you can view data collected at 1-minute intervals.

For instructions on enabling detailed monitoring for Virtual Server, refer to How-to guides > ServiceWatch Detailed Monitoring Activation.
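To illustrate the difference between the two collection intervals, the sketch below estimates how many data points a metric produces in a given time window. The function and constant names are hypothetical; only the 5-minute and 1-minute intervals come from the text above.

```python
BASIC_INTERVAL_MINUTES = 5     # basic monitoring interval (from the text above)
DETAILED_INTERVAL_MINUTES = 1  # detailed monitoring interval (from the text above)


def expected_datapoints(window_minutes: int, detailed: bool) -> int:
    """Estimate the number of data points collected in a time window."""
    if window_minutes < 0:
        raise ValueError("window_minutes must not be negative")
    interval = DETAILED_INTERVAL_MINUTES if detailed else BASIC_INTERVAL_MINUTES
    return window_minutes // interval


print(expected_datapoints(60, detailed=False))
print(expected_datapoints(60, detailed=True))
```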

Basic Metrics

The following are the basic metrics for the Virtual Server namespace.

| Performance Item | Detailed Description | Unit | Meaningful Statistics |

|---|---|---|---|

| Instance State | Instance status display | - | - |

| CPU Usage | CPU usage | Percent | |

| Disk Read Bytes | Amount of data read from the block device (bytes) | Bytes | |

| Disk Read Requests | Number of read requests on the block device | Count | |

| Disk Write Bytes | Amount of data written to the block device (bytes) | Bytes | |

| Disk Write Requests | Number of write requests on the block device | Count | |

| Network In Bytes | Amount of data received on the network interface (bytes) | Bytes | |

| Network In Dropped | Number of received packets dropped on the network interface | Count | |

| Network In Packets | Number of packets received on the network interface | Count | |

| Network Out Bytes | Amount of data transmitted from the network interface (bytes) | Bytes | |

| Network Out Dropped | Number of transmitted packets dropped on the network interface | Count | |

| Network Out Packets | Number of packets transmitted from the network interface | Count | |

1.2 - How-to guides

The user can enter the required information for a Virtual Server through the Samsung Cloud Platform Console, select detailed options, and create the service.

Create Virtual Server

You can create and use the Virtual Server service from the Samsung Cloud Platform Console.

To create a Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server Creation button on the Service Home page. You will be taken to the Virtual Server Creation page.

- On the Virtual Server Creation page, enter the information required to create the service and select detailed options.

- In the Image and Version Selection area, select the required information.

| Category | Required | Detailed description |

|---|---|---|

| Image | Required | Select the type of Image to provide. Standard: Image provided as standard by Samsung Cloud Platform (Alma Linux, Oracle Linux, RHEL, Rocky Linux, Ubuntu, Windows). Custom: User-created Image. Kubernetes: Image for Kubernetes (RHEL, Ubuntu). Marketplace: Image subscribed from Marketplace. |

| Image version | Required | Select the version of the chosen Image from the list of versions offered for the server Image. |

Table. Virtual Server Image and version selection input items

- In the Service Information Input area, enter or select the required information.

| Category | Required | Detailed description |

|---|---|---|

| Server count | Required | Number of servers to create simultaneously. Only numbers can be entered; enter a value between 1 and 100. |

| Service Type > Server Type | Required | Server type of the Virtual Server. Standard: commonly used standard specifications. High Capacity: large server specifications above Standard. For detailed information about the server types provided by Virtual Server, refer to Virtual Server Server Type. |

| Service Type > Planned Compute | Required | Status of resources with Planned Compute set. In Use: number of resources with Planned Compute set that are in use. Configured: number of resources with Planned Compute set. Coverage Preview: amount applied by Planned Compute per resource. Apply for Planned Compute Service: goes to the Planned Compute service creation page. For details, refer to Apply for Planned Compute. |

| Block Storage | Required | Block Storage settings used by the server according to purpose. The Basic OS, Additional, and Delete on termination settings are listed below this table. |

| Server Group | Optional | Places servers belonging to the same Server Group according to a policy. After selecting Use, select a Server Group. Select Create New to create a Server Group and choose a policy among Anti-Affinity (distributed placement), Affinity (proximate placement), and Partition (distributed placement of Virtual Server and Block Storage). Servers belonging to the same Server Group are placed according to the selected policy using a Best Effort method. |

Table. Virtual Server Service Information Input Items

Block Storage settings:

- Basic OS: Area where the OS is installed and used

  - Enter the capacity in Units; the minimum capacity varies by OS Image type

    - Alma Linux: Enter a value between 2 and 1,536

    - Oracle Linux: Enter a value between 7 and 1,536

    - RHEL: Enter a value between 2 and 1,536

    - Rocky Linux: Enter a value between 2 and 1,536

    - Ubuntu: Enter a value between 2 and 1,536

    - Windows: Enter a value between 4 and 1,536

  - SSD: High-performance general volume

  - HDD: General volume

  - SSD/HDD_KMS: Additional encrypted volume using a Samsung Cloud Platform KMS (Key Management Service) encryption key

    - Encryption can only be applied at initial creation and cannot be changed afterwards

    - Using the SSD_KMS disk type may cause performance degradation

- Additional: Use when additional user space is needed outside the OS area

  - After selecting Use, enter the storage type and capacity

  - Click the + button to add storage and the x button to delete it (up to 25 can be added)

  - Enter the capacity in Units, as a value between 1 and 1,536

    - 1 Unit is 8GB, so a volume of 8 to 12,288GB is created

  - SSD: High-performance general volume

  - HDD: General volume

  - SSD/HDD_KMS: Additional encrypted volume using a Samsung Cloud Platform KMS (Key Management Service) encryption key

    - Encryption can only be applied at initial creation and cannot be changed afterwards

    - Using the SSD_KMS disk type may cause performance degradation

  - SSD_MultiAttach: Volume that can be attached to more than one server

  - For detailed information on each Block Storage type, refer to Create Block Storage

- Delete on termination: When Delete on termination is selected, the volume is terminated together with the server

  - Volumes with snapshots are not deleted even when Delete on termination is enabled

  - Multi-attach volumes are deleted only when the server being deleted is the last remaining server attached to the volume

- If you use the Partition (distributed placement of Virtual Server and Block Storage) policy for the Server Group, Block Storage volumes cannot be additionally allocated after the Virtual Server is created, so create all required Block Storage at the Virtual Server creation stage.
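The Unit-based capacity rules for Block Storage described above can be sketched in Python as follows. This is an illustrative check, not the Console's validation logic: the function and constant names are hypothetical, and applying the 8GB Unit size to the Basic OS volume is an assumption, since the source states it only for additional volumes.

```python
UNIT_GB = 8                  # "1 Unit is 8GB" (stated for additional volumes)
MAX_UNITS = 1536             # upper bound for OS and additional volumes
MAX_ADDITIONAL_VOLUMES = 25  # up to 25 additional volumes can be added

# Minimum Basic OS capacity in Units per OS Image type (from the list above).
OS_MIN_UNITS = {
    "Alma Linux": 2,
    "Oracle Linux": 7,
    "RHEL": 2,
    "Rocky Linux": 2,
    "Ubuntu": 2,
    "Windows": 4,
}


def units_to_gb(units: int) -> int:
    """Convert a capacity in Units to GB."""
    return units * UNIT_GB


def validate_os_volume(os_image: str, units: int) -> int:
    """Return the Basic OS volume size in GB, or raise ValueError."""
    minimum = OS_MIN_UNITS[os_image]
    if not minimum <= units <= MAX_UNITS:
        raise ValueError(f"{os_image}: enter a value between {minimum} and {MAX_UNITS}")
    return units_to_gb(units)


def validate_additional_volumes(units_per_volume):
    """Return the additional volume sizes in GB, or raise ValueError."""
    if len(units_per_volume) > MAX_ADDITIONAL_VOLUMES:
        raise ValueError(f"at most {MAX_ADDITIONAL_VOLUMES} additional volumes")
    for units in units_per_volume:
        if not 1 <= units <= MAX_UNITS:
            raise ValueError(f"enter a value between 1 and {MAX_UNITS}")
    return [units_to_gb(units) for units in units_per_volume]


print(validate_additional_volumes([1, 1536]))
```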

* In the **Required Information Input** area, enter or select the necessary information.

| Category | Required | Detailed description |

|---|---|---|

| Server Name | Required | Enter a name to distinguish the server when the number of selected servers is 1 |

| Network Settings > Create New Network Port | Required | Set the network where the Virtual Server will be installed |

| Network Settings > Existing Network Port Assignment | Required | Set the network where the Virtual Server will be installed |

| Keypair | Required | User authentication method to use when connecting to the server |

| Category | Required | Detailed description |

|---|---|---|

| Lock | Optional | Lock usage setting |

| Init script | Optional | Script executed when the server starts |

| Tag | Optional | Add Tag |

If a server name contains spaces or the special character (_), they are changed to the special character (-) when the OS hostname is set. Keep this in mind when choosing a server name.

- Example: If the server name is ‘server name_01’, the OS hostname is set to ‘server-name-01’.

- If you create a Virtual Server with Rocky Linux or Oracle Linux, additional configuration is required for time synchronization (NTP: Network Time Protocol). For more details, refer to Setting up Linux NTP.

- If a RHEL or Windows Server was created before July 2025, the RHEL Repository and WKMS (Windows Key Management Service) settings need to be modified. For more details, refer to Setting up RHEL Repo and WKMS.
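The server name to OS hostname conversion described above can be sketched as a small Python function. The function name `os_hostname` is hypothetical, and the sketch covers only the two substitutions stated in the text (spaces and underscores become hyphens); any other hostname rules the platform applies are not modeled.

```python
def os_hostname(server_name: str) -> str:
    """Replace spaces and underscores with hyphens, as described above."""
    return server_name.replace(" ", "-").replace("_", "-")


print(os_hostname("server name_01"))  # server-name-01
```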

Check Virtual Server detailed information

In the Virtual Server service, you can view and edit the full resource list and detailed information. The Virtual Server Details page consists of the Detailed Information, Tag, and Work History tabs.

To view detailed information of the Virtual Server service, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. You will be taken to the Virtual Server list page.

- Click the resource to view detailed information on the Virtual Server List page. It will navigate to the Virtual Server Details page.

- The Virtual Server Details page displays status information and additional feature information, and consists of the Detailed Information, Tag, and Work History tabs.

- For detailed information about the additional features, refer to Virtual Server Management Additional Features.

| Category | Detailed description |

|---|---|

| Virtual Server Status | Status of the Virtual Server created by the user. The possible values are listed below this table. |

| Server Control | Buttons to change the server status. Start: Start a stopped server. Stop: Stop a running server. Restart: Restart a running server. |

| Image creation | Create a user Image from the current server’s Image. For the detailed Image creation method, refer to Image creation. |

| Console Log | View the console log output by the current server. For more details, refer to Check Console Log. |

| Dump creation | Create a dump of the current server. The dump file is created inside the Virtual Server. For the detailed dump creation method, refer to Dump creation. |

| Rebuild | Deletes the OS area data and settings of the existing Virtual Server and rebuilds it as a new server. For details, refer to Rebuild Execution. |

| Service termination | Button to cancel the service |

Table. Virtual Server status information and additional functions

Virtual Server Status values:

- Build: State where the Build command has been issued

- Building: Build in progress

- Networking: Server creation in progress

- Scheduling: Server creation in progress

- Block_Device_Mapping: Block Storage being attached during server creation

- Spawning: Server creation in progress

- Active: Available state

- Powering_off: State when a stop request is made

- Deleting: Server deletion in progress

- Reboot_Started: Reboot in progress

- Error: Error state

- Migrating: State where the server is migrating to another host

- Reboot: State where the Reboot command has been issued

- Rebooting: Reboot in progress

- Rebuild: State where the Rebuild command has been issued

- Rebuilding: State when a Rebuild request is made

- Rebuild_Spawning: Rebuild in progress

- Resize: State where the Resize command has been issued

- Resizing: Resize in progress

- Resize_Prep: State when a server type modification request is made

- Resize_Migrating: State where the server is being moved to another host while resizing

- Resize_Migrated: State where the server has completed moving to another host while resizing

- Resize_Finish: Resize completed

- Revert_Resize: Resize or migration of the server failed for some reason. The target server is cleaned up and the original server is restarted

- Shutoff: State when Powering off is completed

- Verify_Resize: After Resize_Prep due to a server type modification request, the server type can be confirmed or reverted

- Resize_Reverting: State when a server type revert request is made

- Resize_Confirming: State where the server’s Resize request is being confirmed

Detailed Information

On the Virtual Server List page, you can view the detailed information of the selected resource and, if necessary, edit it.

| Category | Detailed description |

|---|---|

| Service | Service Name |

| Resource Type | Resource Type |

| SRN | Unique resource ID in Samsung Cloud Platform |

| Resource Name | Resource Name |

| Resource ID | Unique resource ID in the service |

| Creator | User who created the service |

| Creation time | Service creation time |

| Editor | User who edited the service information |

| Modification Date/Time | Date/Time when service information was edited |

| Server Name | Server Name |

| Server Type | Displays vCPU and memory information |

| Image name | Server’s OS Image and version |

| Lock | Displays whether Lock is used |

| Server Group | Name of the server group the server belongs to |

| Keypair name | Server authentication information set by the user |

| Planned Compute | Status of resources with Planned Compute set |

| LLM Endpoint | URL for using LLM |

| ServiceWatch detailed monitoring | Displays whether ServiceWatch detailed monitoring is enabled |

| Network | Network information of Virtual Server |

| Local Subnet | Local Subnet information of Virtual Server |

| Block Storage | Information of Block Storage connected to the server |

Tag

On the Virtual Server List page, you can view the tag information of the selected resource, and add, modify, or delete tags.

| Category | Detailed description |

|---|---|

| Tag List | Tag List |

Work History

On the Virtual Server List page, you can view the work history of the selected resource.

| Category | Detailed description |

|---|---|

| Work History List | Resource Change History |

Virtual Server Operation Control

If you need to control the operation of created Virtual Server resources, you can do so on the Virtual Server List or Virtual Server Details page. You can start, stop, and restart a server.

Virtual Server Start

You can start a stopped (Shutoff) Virtual Server. To start the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, click the resource to start among the stopped (Shutoff) servers, and go to the Virtual Server Details page.

- On the Virtual Server List page, you can also start each resource via the More button on the right.

- After selecting multiple servers with the check boxes, you can start them simultaneously via the Start button at the top.

- Click the Start button at the top of the Virtual Server Details page to start the server. Check the changed server status in the Status Display item.

- When the Virtual Server start is completed, the server status changes from Shutoff to Active.

- For more details about Virtual Server status, refer to Check Virtual Server detailed information.

Virtual Server Stop

You can stop a Virtual Server that is active (Active). To stop the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. You will be taken to the Virtual Server List page.

- On the Virtual Server List page, click the resource to stop among the servers that are running (Active), and go to the Virtual Server Details page.

- On the Virtual Server List page, you can also stop each resource via the More button on the right.

- After selecting multiple servers with the check boxes, you can stop them simultaneously via the Stop button at the top.

- Click the Stop button at the top of the Virtual Server Details page to stop the server. Check the changed server status in the Status Display item.

- When the Virtual Server stop is completed, the server status changes from Active to Shutoff.

- For more details about Virtual Server status, refer to Check Virtual Server detailed information.

Virtual Server Restart

You can restart a created Virtual Server. To restart the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. You will be taken to the Virtual Server list page.

- On the Virtual Server List page, click the resource to restart, and navigate to the Virtual Server Details page.

- You can restart each resource via the right More button on the Virtual Server list page.

- After selecting multiple servers with the check box, you can control multiple servers simultaneously via the Restart button at the top.

- On the Virtual Server Details page, click the Restart button at the top to restart the server. Check the changed server status in the Status Display item.

- During the restart, the server status goes through Rebooting and finally changes to Active.

- For more details about Virtual Server status, refer to Check Virtual Server detailed information.
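The status changes described for the Start, Stop, and Restart operations can be summarized in a small lookup. The sketch below is illustrative only: the names are hypothetical, intermediate statuses such as Powering_off are omitted, and the assumption that Restart applies to a server in the Active status is inferred from "restart a running server" rather than stated explicitly.

```python
# Expected status changes per control action, taken from the steps above.
# Each entry is (required current status, statuses the server passes through).
CONTROL_ACTIONS = {
    "start": ("Shutoff", ["Active"]),
    "stop": ("Active", ["Shutoff"]),
    "restart": ("Active", ["Rebooting", "Active"]),  # starting status is assumed
}


def expected_statuses(action: str, current_status: str) -> list:
    """Return the statuses the server is expected to pass through."""
    required_status, path = CONTROL_ACTIONS[action]
    if current_status != required_status:
        raise ValueError(
            f"{action} expects status {required_status}, got {current_status}"
        )
    return list(path)


print(expected_statuses("restart", "Active"))
```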

Virtual Server Resource Management

If you need server control and management functions for created Virtual Server resources, you can perform these tasks on the Virtual Server List or Virtual Server Details page.

Create Image

You can create a user Image from a running Virtual Server. This section describes how.

- On the Virtual Server List or Virtual Server Details page, click the Create Image button to create a user Image.

- To create an Image by uploading an Image file that you own, refer to Image Detailed Guide: Creating Image.

To create an Image of a Virtual Server, follow the steps below.

Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

On the Virtual Server List page, click the resource to create an Image from. Go to the Virtual Server Details page.

On the Virtual Server Details page, click the Create Image button. You will be taken to the Create Image page.

- In the Service Information Input area, enter the required information.

| Category | Required | Detailed description |

|---|---|---|

| Image name | Required | Name of the Image to create. Enter within 200 characters using English letters, numbers, spaces, and special characters (-, _). |

Table. Image Service Information Input Items

Check the input information and click the Complete button.

- When creation is complete, check the created resources on the All Services > Compute > Virtual Server > Image List page.

- When you create an Image, the generated Image is stored in the Object Storage used as internal storage. Therefore, usage fees for Image storage are charged.

- The file system integrity of an Image created from a Virtual Server in the Active state cannot be guaranteed, so it is recommended to stop the server before creating the Image.

Edit Server Type

You can modify the server type of the Virtual Server.

To modify the server type of a Virtual Server, follow these steps.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, click the resource whose server type you want to modify. Go to the Virtual Server Details page.

- On the Virtual Server Details page, check the server status and click the Edit button for the server type. The Server Type Edit popup opens.

- In the Server Type Edit popup, change the server type and click the Confirm button.

- When you modify the server type, the Virtual Server status changes to one of the Resize-related statuses.

- For more details about Virtual Server status, refer to Check Virtual Server detailed information.

Change IP

For how to change the IP, refer to IP Change.

- Once you change the IP, you can no longer communicate using the previous IP, and you cannot cancel the IP change while it is in progress.

- The server is rebooted to apply the changed IP.

- If the server is used with the Load Balancer service, you must delete the old IP from the LB server group and add the changed IP directly as a member of the LB server group.

- Servers using Public NAT/Private NAT must first disable Public NAT/Private NAT, complete the IP change, and then set it up again.

- Public NAT/Private NAT usage can be changed by clicking the Edit button of Public NAT IP/Private NAT IP on the Virtual Server Details page.

Enable ServiceWatch detailed monitoring

By default, Virtual Server is linked with ServiceWatch basic monitoring. If needed, you can enable detailed monitoring to identify operational issues more quickly and take action. For more information about ServiceWatch, refer to ServiceWatch Overview.

To enable ServiceWatch detailed monitoring on a Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, click the resource for which to enable ServiceWatch detailed monitoring. Go to the Virtual Server Details page.

- Click the Edit button for ServiceWatch detailed monitoring on the Virtual Server Details page. The ServiceWatch Detailed Monitoring Edit popup opens.

- In the ServiceWatch Detailed Monitoring Edit popup, select Enable, check the guidance text, and click the Confirm button.

- On the Virtual Server Details page, check the ServiceWatch detailed monitoring item.

Disable ServiceWatch detailed monitoring

To disable ServiceWatch detailed monitoring on a Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, click the resource for which to disable ServiceWatch detailed monitoring. Go to the Virtual Server Details page.

- Click the Edit button for ServiceWatch detailed monitoring on the Virtual Server Details page. The ServiceWatch Detailed Monitoring Edit popup opens.

- In the ServiceWatch Detailed Monitoring Edit popup, deselect Enable, check the guidance text, and click the Confirm button.

- On the Virtual Server Details page, check the ServiceWatch detailed monitoring item.

Virtual Server Management Additional Features

For server management, Virtual Server lets you view console logs, create dumps, and perform a Rebuild. Follow the steps below for each task.

Check console log

You can view the current console log of the Virtual Server.

To check the console log of the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, click the resource whose console log you want to view. Go to the Virtual Server Details page.

- On the Virtual Server Details page, click the Console Log button. The Console Log popup opens.

- Check the console log output in the Console Log popup.

Create Dump

To create a dump file of the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. You will be taken to the Virtual Server list page.

- Click the resource to view detailed information on the Virtual Server List page. It navigates to the Virtual Server Details page.

- Click the Create Dump button on the Virtual Server Details page.

- The dump file is created inside the Virtual Server.

Perform Rebuild

You can delete the OS area data and settings of the existing Virtual Server and rebuild it as a new server.

To perform a Rebuild of the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, click the resource to rebuild. Go to the Virtual Server Details page.

- On the Virtual Server Details page, click the Rebuild button.

- During the Rebuild, the server status changes to Rebuilding; when the Rebuild is completed, it returns to the status before the Rebuild.

- For more details about Virtual Server status, refer to Check Virtual Server detailed information.

Cancel Virtual Server

If you terminate an unused Virtual Server, you can reduce operating costs. However, terminating a Virtual Server may cause the running service to stop immediately, so you should consider the impact of service interruption thoroughly before proceeding with the termination.

To cancel the Virtual Server, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Virtual Server menu on the Service Home page. Go to the Virtual Server List page.

- On the Virtual Server List page, select the resource to cancel and click the Cancel Service button.

- Whether connected storage is terminated depends on the Delete on termination setting. For details, refer to Termination Constraints.

- When the termination is complete, check on the Virtual Server List page whether the resource has been terminated.

Termination Constraints

If a Virtual Server termination request cannot be processed, a popup window notifies you. Refer to the cases below.

- When File Storage is connected: Please disconnect the File Storage first.

- When the LB server group is connected: First disconnect the LB server group pool connection.

- When Lock is set: After changing the Lock setting to disabled, try again.

- When Backup is connected: Please disconnect the Backup connection first.

- If the Auto-Scaling Group attached to the Virtual Server is not in In Service state: After changing the status of the attached Auto-Scaling Group, try again.
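The termination constraints listed above can be expressed as a simple pre-check. The sketch below is a hypothetical illustration, not a Samsung Cloud Platform API: the `ServerState` fields and the function name are invented for the example, and only the five conditions from the list are modeled.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ServerState:
    """Hypothetical snapshot of the settings that can block termination."""
    file_storage_connected: bool = False
    lb_server_group_connected: bool = False
    lock_enabled: bool = False
    backup_connected: bool = False
    # None means the server is not attached to an Auto-Scaling Group.
    auto_scaling_group_status: Optional[str] = None


def termination_blockers(state: ServerState) -> List[str]:
    """Return the actions required before the server can be terminated."""
    blockers = []
    if state.file_storage_connected:
        blockers.append("Disconnect the File Storage first.")
    if state.lb_server_group_connected:
        blockers.append("Disconnect the LB server group pool connection first.")
    if state.lock_enabled:
        blockers.append("Change the Lock setting to disabled.")
    if state.backup_connected:
        blockers.append("Disconnect the Backup connection first.")
    status = state.auto_scaling_group_status
    if status is not None and status != "In Service":
        blockers.append("Change the status of the attached Auto-Scaling Group.")
    return blockers


print(termination_blockers(ServerState(lock_enabled=True)))
```

An empty list means none of the listed constraints applies.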

Whether attached storage is deleted when the Virtual Server is terminated depends on the Delete on termination setting of each volume.

- If Delete on termination is not set: The volume is not deleted even if the Virtual Server is terminated.

- If Delete on termination is set: The volume is deleted when the Virtual Server is terminated.

- Volumes that have a Snapshot are not deleted even if Delete on termination is set.

- A Multi-attach volume is deleted only when the server being deleted is the last remaining server attached to the volume.
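The volume deletion rules above can be condensed into one decision function. This is a sketch under stated assumptions: the function and parameter names are hypothetical, and `other_attached_servers` counts the servers other than the one being terminated that still have the volume attached (always 0 for a volume that is not Multi-attach).

```python
def volume_deleted_on_termination(
    delete_on_termination: bool,
    has_snapshot: bool,
    other_attached_servers: int = 0,
) -> bool:
    """Decide whether a volume is deleted when its server is terminated."""
    if not delete_on_termination:
        return False  # the volume is kept when the setting is off
    if has_snapshot:
        return False  # volumes with a Snapshot are never deleted
    # A Multi-attach volume is deleted only with its last attached server.
    return other_attached_servers == 0


print(volume_deleted_on_termination(True, has_snapshot=False))
```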

1.2.1 - Image

The user can enter the required information for the Image service within the Virtual Server service and select detailed options through the Samsung Cloud Platform Console to create the service.

Create Image

You can create and use the Image service while using the Virtual Server service on the Samsung Cloud Platform Console.

To create an Image, follow the steps below.

Click the All Services > Compute > Virtual Server menu. Go to the Virtual Server’s Service Home page.

Click the Image menu on the Service Home page. Go to the Image List page.

Click the Image Create button on the Image List page. It navigates to the Image Create page.

- In the Service Information Input area, enter or select the required information.

| Category | Required | Detailed description |

|---|---|---|

| Image name | Required | Name of the Image to create. Enter within 255 characters using English letters, numbers, spaces, and special characters (-, _). |

| Image file > URL | Required | Enter the URL after uploading the Image file to Object Storage. The URL can be copied from the Object Storage Details page. The Bucket of Object Storage where the Image file is uploaded must be in the same zone as the server to be created. The Image file can only have the .qcow2 extension. Upload a secure Image file to minimize security risks. |

| OS type | Required | OS type of the uploaded Image file. Select from Alma Linux, CentOS, Oracle Linux, RHEL, Rocky Linux, Ubuntu. |

| Minimum Disk | Required | Minimum disk size (GB) for the Image to be created. Enter a value between 0 and 12,288GB. |

| Minimum RAM | Required | Minimum RAM capacity (GB) for the Image to be created. Enter a value between 0 and 2,097,151GB. |

| Visibility | Required | Access permissions for the Image. Private: Can be used only within the Account. Shared: Can be shared between Accounts. |

| Protected | Optional | Select whether Image deletion is prohibited. Checking Use prevents accidental deletion of the Image. This setting can be changed after Image creation. |

Table. Image Service Information Input Items

- In the Additional Information Input area, enter or select the required information.

| Category | Required | Detailed description |

|---|---|---|

| Tag | Optional | Add a Tag. Up to 50 can be added per resource. After clicking the Add Tag button, enter or select the Key and Value. |

Table. Image additional information input items

In the Summary panel, check the generated detailed information and estimated billing amount, and click the Complete button.

- When creation is complete, check the created resources on the Image List page.
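The input constraints for creating an Image from an uploaded file can be checked before submitting the form. The following Python sketch is illustrative only and is not the Console's validation logic: the function name, the error messages, and the sample URL are hypothetical, and the extension check is case-sensitive as an assumption.

```python
import re
from typing import List
from urllib.parse import urlparse

# English letters, numbers, spaces, and the special characters - and _ (1 to 255 chars).
NAME_PATTERN = re.compile(r"[A-Za-z0-9 _-]{1,255}")
OS_TYPES = {"Alma Linux", "CentOS", "Oracle Linux", "RHEL", "Rocky Linux", "Ubuntu"}
MAX_MIN_DISK_GB = 12288
MAX_MIN_RAM_GB = 2097151


def validate_image_request(
    name: str, url: str, os_type: str, min_disk_gb: int, min_ram_gb: int
) -> List[str]:
    """Return a list of problems; an empty list means the input looks valid."""
    errors = []
    if not NAME_PATTERN.fullmatch(name):
        errors.append("Image name: up to 255 letters, numbers, spaces, - or _")
    if not urlparse(url).path.endswith(".qcow2"):
        errors.append("Image file: only the .qcow2 extension is allowed")
    if os_type not in OS_TYPES:
        errors.append("OS type: not in the supported list")
    if not 0 <= min_disk_gb <= MAX_MIN_DISK_GB:
        errors.append("Minimum Disk: enter a value between 0 and 12,288GB")
    if not 0 <= min_ram_gb <= MAX_MIN_RAM_GB:
        errors.append("Minimum RAM: enter a value between 0 and 2,097,151GB")
    return errors


# The URL below is a placeholder, not a real Object Storage address.
print(validate_image_request(
    "my image_01", "https://example.invalid/bucket/disk.qcow2", "Ubuntu", 16, 2
))
```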

Check Image detailed information

In the Image service, you can view and edit the full resource list and detailed information. The Image Details page consists of the Detailed Information, Tag, and Work History tabs.

To view detailed information of the Image service, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- Click the Image menu on the Service Home page. Go to the Image List page.

- On the Image List page, click the resource to view detailed information. The Image Details page opens.

- The Image Details page displays status information and additional feature information, and consists of the Detailed Information, Tag, and Work History tabs.

| Category | Detailed description |
|---|---|
| Image status | Status of the Image created by the user. Active: available. Queued: an Image creation request has been made, and the Image is uploaded and waiting for processing. Importing: an Image creation request has been made, and the Image is uploaded and being processed. |
| Create shared Image | Creates an Image to share with another Account. Can be created only when the Image’s Visibility is private and the Image has snapshot information. |
| Share with another Account | Shares the Image with another Account. The Image can be shared only if its Visibility is Shared. Displayed only for Images created by Create shared Image or by uploading a qcow2 file. |
| Image Delete | Button to delete the Image. A deleted Image cannot be recovered. |

Table. Image status information and additional functions


Detailed Information

On the Image List page, you can view the detailed information of the selected resource and edit it if needed.

| Category | Detailed description |
|---|---|
| Service | Service name |
| Resource Type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource Name | Image name |
| Resource ID | Image’s unique resource ID |
| Creator | User who created the Image |
| Creation time | Image creation time |
| Editor | User who modified the Image information |
| Modification Date | Date/Time when Image information was modified |
| Image name | Image name |
| Minimum Disk | Image’s minimum disk capacity (GB) |
| Minimum RAM | Minimum RAM capacity of the Image (GB) |
| OS type | Image’s OS type |
| OS hash algorithm | OS hash algorithm method |
| Visibility | Displays access permissions for the Image |
| Protected | Whether Image deletion is prohibited |
| Image size | Image size |
| Image Type | Classification by Image creation method |
| Image file URL | Image file URL uploaded to Object Storage when creating an Image |
| Sharing Status | Status of sharing the Image with other Accounts |

Tag

On the Image List page, you can view the tag information of the selected resource, and add, modify, or delete tags.

| Category | Detailed description |
|---|---|
| Tag List | Tag list |

Work History

You can view the operation history of the selected resource on the Image list page.

| Category | Detailed description |
|---|---|
| Work History List | Resource change history |

Image Resource Management

This section describes the control and management functions for a created Image.

Create Image for Sharing

Create an Image to share with another Account.

- A shared Image can be created only when the Image’s Visibility is private and the Image has snapshot information.

- A shared Image includes only the single OS disk volume as the imaging target. Connected data volumes are not included in the Image, so if needed, copy the data to a separate volume and use the volume migration function.

To create an image for sharing, follow the steps below.

- Log in to the Account that shares the Image and click the All Services > Compute > Virtual Server menu. Go to the Virtual Server’s Service Home page.

- Click the Image menu on the Service Home page. Navigate to the Image List page.

- On the Image List page, click the Image from which to create a shared Image. You will be taken to the Image Details page.

- Click the Create Shared Image button. A popup window notifying you of the shared Image creation opens.

- After checking the notification content, click the Complete button.

Share Image with another Account

Share an Image with another Account.

- Only Images created by uploading a .qcow2 file, or created with Create Shared Image on the Image Details page, can be shared with other Accounts.

- The Image to be shared must have Visibility set to Shared.
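The two sets of preconditions above (for creating a shared Image and for sharing an Image with another Account) can be summarized as simple checks. The following is only an illustrative sketch: the `Image` class, its field names, and the lowercase visibility strings are hypothetical and are not part of any Samsung Cloud Platform API.

```python
from dataclasses import dataclass


@dataclass
class Image:
    """Hypothetical in-memory model of an Image, for illustration only."""
    name: str
    visibility: str           # "private" or "shared" in this sketch
    has_snapshot: bool        # True if the Image has snapshot information
    from_qcow2_upload: bool   # True if created by uploading a .qcow2 file
    from_create_shared: bool  # True if created with Create Shared Image


def can_create_shared_image(image: Image) -> bool:
    # A shared Image can be created only from a private Image
    # that has snapshot information.
    return image.visibility.lower() == "private" and image.has_snapshot


def can_share_with_account(image: Image) -> bool:
    # Sharing requires Visibility set to Shared, and the Image must come
    # from a .qcow2 upload or from Create Shared Image.
    eligible_origin = image.from_qcow2_upload or image.from_create_shared
    return image.visibility.lower() == "shared" and eligible_origin
```

For example, a private Image with snapshot information passes the first check but not the second, because its Visibility is not Shared.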

To share the Image with another Account, follow these steps.

- Log in to the Account that shares the Image and click the All Services > Compute > Virtual Server menu. Navigate to the Virtual Server’s Service Home page.

- Click the Image menu on the Service Home page. Navigate to the Image List page.

- On the Image List page, click the Image you want to share with another Account. You will be taken to the Image Details page.

- Click the Share to another Account button. A popup window notifying you of Image sharing opens.

- After checking the notification content, click the Confirm button. You will be taken to the Share Image with another Account page.

- On the Share Image with another Account page, enter the Shared Account ID and click the Complete button. A popup window notifying you of Image sharing opens.

| Category | Required | Detailed description |
|---|---|---|
| Image name | - | Name of the Image to share. Input not allowed. |
| Image ID | - | ID of the Image to share. Input not allowed. |
| Shared Account ID | Required | Enter the ID of the other Account to share with. Enter within 64 characters using English letters, numbers, and the special character (-). |

Table. Image sharing items to another Account

- After checking the notification content, click the Confirm button. You can check the result in the Sharing Status of the Image Details page.

- When first requested, the status is Pending. When the receiving Account approves the request, the status changes to Accepted. If the request is denied, it changes to Rejected.
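Before entering a Shared Account ID, it can help to check it against the input rule stated above. The following is a minimal sketch, assuming the rule means 1 to 64 characters drawn from English letters, digits, and the hyphen; the function name is hypothetical, and the Console performs its own validation.

```python
import re

# Assumption: the only special character allowed is the hyphen (-),
# and an empty value is not accepted because the field is required.
ACCOUNT_ID_PATTERN = re.compile(r"[A-Za-z0-9-]{1,64}")


def is_valid_shared_account_id(account_id: str) -> bool:
    """Return True if account_id satisfies the assumed input rule."""
    return ACCOUNT_ID_PATTERN.fullmatch(account_id) is not None
```

A value such as `my-account-01` passes this check, while a value containing an underscore or longer than 64 characters does not.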

Receive shared Image from another Account

To receive an Image shared from another Account, follow the steps below.

- Log in to the Account that receives the shared Image and click the All Services > Compute > Virtual Server menu. Go to the Service Home page of the Virtual Server.

- Click the Image menu on the Service Home page. Navigate to the Image List page.

- On the Image List page, click the More > Image Share Request List button. The Image Share Request List popup window opens.

- In the Image Share Request List popup window, click the Approve or Reject button for the Image to be shared.

| Category | Detailed description |
|---|---|
| Image name | Name of the shared Image |
| OS type | OS type of the shared Image |
| Owner Account ID | Owner Account ID of the shared Image |
| Creation time | Creation time of the shared Image |
| Approve | Approves the shared Image |
| Reject | Rejects the shared Image |

Table. Image sharing request list items

- After checking the notification content, click the Confirm button. You can check the shared Image in the Image List.
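The Approve and Reject actions correspond to the sharing status values described in the sharing procedure: a request starts as Pending and becomes Accepted or Rejected. The sketch below models that transition. The `SharingStatus` enum and `respond` function are hypothetical names, and the rule that an already decided request cannot be changed is an assumption of this sketch, not something the documentation states.

```python
from enum import Enum


class SharingStatus(Enum):
    PENDING = "Pending"
    ACCEPTED = "Accepted"
    REJECTED = "Rejected"


def respond(current: SharingStatus, approve: bool) -> SharingStatus:
    """Return the status after the receiving Account approves or rejects."""
    # Assumption: only a Pending request can be approved or rejected.
    if current is not SharingStatus.PENDING:
        raise ValueError(f"request already decided: {current.value}")
    return SharingStatus.ACCEPTED if approve else SharingStatus.REJECTED
```

Calling `respond(SharingStatus.PENDING, approve=True)` returns `SharingStatus.ACCEPTED`, which matches the status shown to the sharing Account after approval.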

Delete Image

You can delete unused images. However, once an image is deleted it cannot be recovered, so you should fully consider the impact before proceeding with the deletion.

To delete an Image, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Navigate to the Service Home page of Virtual Server.

- Click the Image menu on the Service Home page. Go to the Image List page.

- On the Image List page, select the resource to delete and click the Delete button.

- To delete multiple Images at once, select their check boxes on the Image List page and click the Delete button at the top of the resource list.

- When deletion is complete, check on the Image List page whether the resource has been deleted.

1.2.2 - Keypair

You can create a Keypair for the Virtual Server service by entering the required information and selecting detailed options in the Samsung Cloud Platform Console.

Create Keypair

You can create a Keypair in the Samsung Cloud Platform Console and use it with the Virtual Server service.

To create a key pair, follow these steps.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- On the Service Home page, click the Keypair menu. Go to the Keypair list page.

- On the Keypair list page, click the Create Keypair button. Go to the Create Keypair page.

- In the Enter Service Information area, enter the necessary information.

| Classification | Required | Detailed Description |
|---|---|---|
| Keypair name | Required | Name of the Keypair to be created. Enter within 255 characters using English letters, numbers, spaces, and special characters (-,_). |
| Keypair type | Required | ssh |

Table. Keypair service information input items

- In the Enter Additional Information area, enter or select the required information.

| Classification | Required | Detailed Description |
|---|---|---|
| Tag | Optional | Add a tag. Up to 50 tags can be added per resource. Click the Add Tag button and enter or select the Key and Value. |

Table. Input items for adding Keypair information

Caution

- After creation is complete, the Key can be downloaded only once. It cannot be reissued, so make sure it has been downloaded.

- Store the downloaded Private Key in a safe place.


- Check the input information and click the Complete button.

- Once creation is complete, check the created resource on the Keypair list page.
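Because the Private Key can be downloaded only once and should be stored safely, one common precaution on Linux or macOS is to make the downloaded file readable and writable by its owner only. The following is a minimal sketch of that step; the function name and the example path are hypothetical, and it assumes a POSIX file system.

```python
import os
import stat
from pathlib import Path


def restrict_private_key(path: str) -> int:
    """Set owner-only read/write (0o600) on a key file and return the new mode."""
    key_path = Path(path)
    if not key_path.is_file():
        raise FileNotFoundError(f"private key not found: {key_path}")
    # Remove all group and other permissions; keep read/write for the owner.
    os.chmod(key_path, stat.S_IRUSR | stat.S_IWUSR)
    return stat.S_IMODE(key_path.stat().st_mode)
```

For example, `restrict_private_key("/home/user/.ssh/my-keypair.pem")` (a hypothetical path) would restrict that file to its owner. OpenSSH clients typically refuse to use a private key file whose permissions are too open, so this step also avoids connection errors later.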

Check Keypair Details

In the Keypair service, you can view and modify the full resource list and detailed information. The Keypair Details page consists of the Detailed Information, Tag, and Work History tabs.

To check the Keypair details, follow the steps below.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- On the Service Home page, click the Keypair menu. Go to the Keypair list page.

- On the Keypair list page, click the resource whose detailed information you want to check. Go to the Keypair Details page.

- The Keypair Details page displays status information and additional functions, and consists of the Detailed Information, Tag, and Work History tabs.

Detailed Information

On the Keypair list page, you can check the detailed information of the selected resource and modify the information if necessary.

| Classification | Detailed Description |
|---|---|
| Service | Service name |
| Resource Type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource Name | Keypair name |
| Resource ID | Keypair’s unique resource ID |
| Creator | User who created the Keypair |
| Creation Time | Time when Keypair was created |
| Modifier | User who modified Keypair information |
| Modified Time | Time when Keypair information was modified |
| Keypair name | Keypair name |
| Fingerprint | A unique value to identify the Key |
| User ID | ID of the user who created the Keypair |
| Public Key | Public Key information |

Tag

On the Keypair List page, you can check the tag information of the selected resource, and add, change, or delete it.

| Classification | Detailed Description |
|---|---|
| Tag List | Tag list |

Work History

On the Keypair list page, you can check the operation history of the selected resource.

| Classification | Detailed Description |
|---|---|
| Work history list | Resource change history |

Managing Keypair Resources

Describes the control and management functions of the Keypair.

Import Public Key

To import a public key, follow these steps.

- Click the All Services > Compute > Virtual Server menu. Go to the Service Home page of Virtual Server.

- On the Service Home page, click the Keypair menu. Go to the Keypair list page.

- On the Keypair list page, click the More button at the top and then click the Import Public Key button. Go to the Import Public Key page.

- In the Required Information Input area, enter or select the necessary information.