Overview

Service Overview

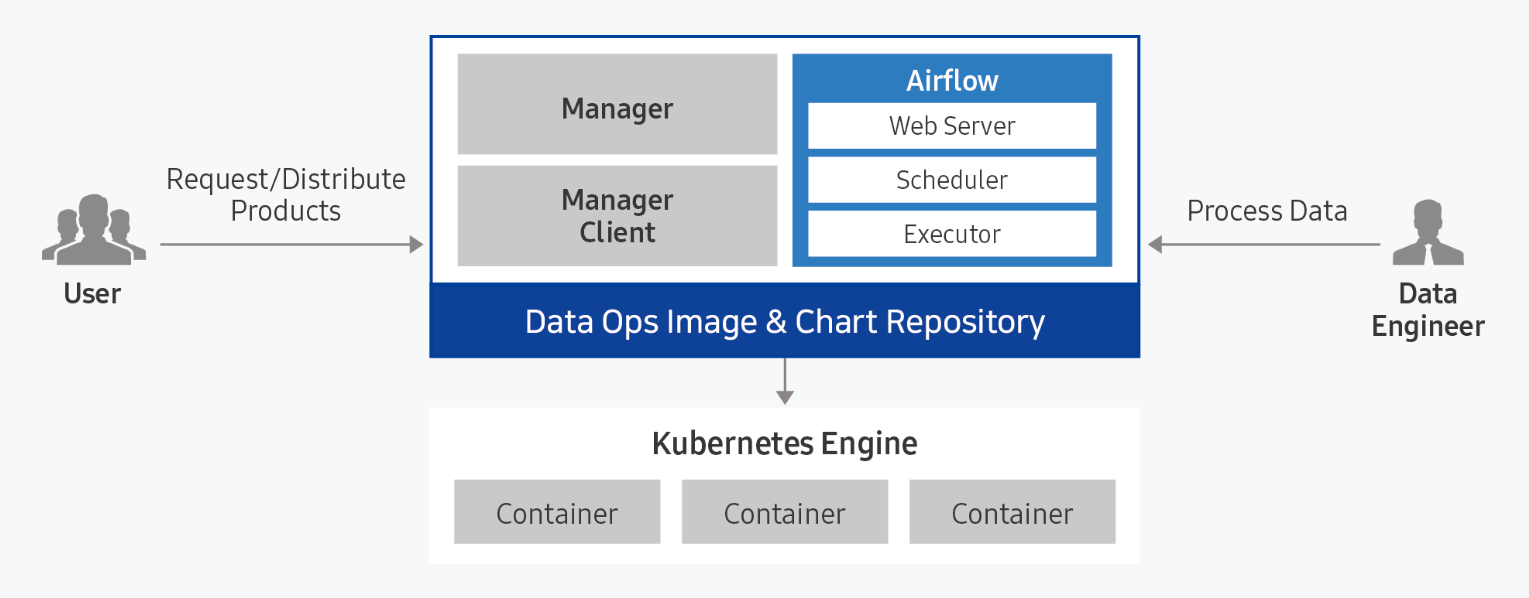

Data Ops is a managed workflow orchestration service based on Apache Airflow. It lets users write workflows for periodic or repetitive data processing tasks and automates their scheduling, so that useful data is delivered to the right place at the right time. Users can also monitor the configuration and progress of their data pipelines.

Provided Features

Data Ops provides the following features.

- Easy installation and management: Data Ops can be installed through the web-based Console in a standard Kubernetes cluster environment. Apache Airflow and its management modules are installed automatically, and the execution status of the web server and scheduler can be monitored from an integrated dashboard.

- Dynamic pipeline composition: Pipelines for data tasks are composed in Python code. Because tasks are generated dynamically as part of data task scheduling, you can freely define the shape of the workflow and its schedule.

- Convenient workflow management: DAG (Directed Acyclic Graph) configurations are visualized and managed through a web-based UI, making it easy to see which steps of the data flow precede others and which run in parallel. Each task's timeout, retry count, priority, and other settings can also be managed easily.

Component

Data Ops consists of Manager and Service modules and provides Apache Airflow as a packaged service.

Data Ops Manager

Data Ops Manager provides management functions that help you use Airflow more efficiently.

- You can upload plugin files, shared files, and Python library files for use in Data Ops Service through Data Ops Manager.

- You can easily provision configuration settings for the Airflow components within the cluster.

- You can manage and easily provision the settings of different services within the Airflow cluster.

Data Ops Service

- Provides a managed workflow orchestration service based on Apache Airflow.

- When creating the Airflow service, you can set the description, required resource size, DAGs GitSync, and Host Alias.

- After the service is created, you can modify the description, resource usage, DAGs GitSync, and Host Alias, and the changes are applied to the service.

Server Spec Type

Before creating a Data Ops service, check the following requirements.

- Recommended installation specifications: CPU 43 cores (KubernetesExecutor) or 25 cores (CeleryExecutor), memory 50 GB, storage 100 GB or more

- An Ingress Controller must be installed before you create the Data Ops service.

- Only one Ingress Controller can be installed in a Kubernetes cluster.

- For details, refer to Ingress Controller installation.
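As a rough pre-flight check, the requirements above can be expressed as a small Python function. The thresholds come from this page; `ClusterCapacity` and `check_prerequisites` are hypothetical names, the figures are assumed to be the totals available to Data Ops, and collecting them from a real cluster is left out.

```python
from dataclasses import dataclass

# Recommended minimums from the list above; the CPU figure depends on the Airflow executor.
RECOMMENDED_CPU_CORES = {"KubernetesExecutor": 43, "CeleryExecutor": 25}
RECOMMENDED_MEMORY_GB = 50
RECOMMENDED_STORAGE_GB = 100


@dataclass
class ClusterCapacity:
    """Hypothetical summary of resources available to Data Ops in the cluster."""
    cpu_cores: float
    memory_gb: float
    storage_gb: float
    ingress_controllers: int


def check_prerequisites(capacity: ClusterCapacity, executor: str) -> list[str]:
    """Return a list of problems; an empty list means the recommendations are met."""
    if executor not in RECOMMENDED_CPU_CORES:
        raise ValueError(f"unknown executor: {executor}")
    problems = []
    need_cpu = RECOMMENDED_CPU_CORES[executor]
    if capacity.cpu_cores < need_cpu:
        problems.append(f"CPU: {capacity.cpu_cores} cores < {need_cpu} recommended for {executor}")
    if capacity.memory_gb < RECOMMENDED_MEMORY_GB:
        problems.append(f"memory: {capacity.memory_gb} GB < {RECOMMENDED_MEMORY_GB} GB")
    if capacity.storage_gb < RECOMMENDED_STORAGE_GB:
        problems.append(f"storage: {capacity.storage_gb} GB < {RECOMMENDED_STORAGE_GB} GB")
    # An Ingress Controller is required, and only one may exist per cluster.
    if capacity.ingress_controllers != 1:
        problems.append(f"expected exactly 1 Ingress Controller, found {capacity.ingress_controllers}")
    return problems


if __name__ == "__main__":
    cluster = ClusterCapacity(cpu_cores=32, memory_gb=64, storage_gb=200, ingress_controllers=1)
    print(check_prerequisites(cluster, "CeleryExecutor"))  # → []
    print(check_prerequisites(cluster, "KubernetesExecutor"))
```

With 32 cores, the same cluster meets the CeleryExecutor recommendation but falls short of the 43 cores recommended for KubernetesExecutor.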

Regional Provision Status

Data Ops is available in the following environments.

| Region | Availability |
|---|---|
| Korea West (kr-west1) | Provided |
| Korea East (kr-east1) | Not provided |
| Korea South 1 (kr-south1) | Provided |
| Korea Central (kr-central) | Provided |
| Korea South 3 (kr-south3) | Provided |

Preceding Service

The following services must be configured before you create this service. Refer to the guide for each service and prepare them in advance.

| Service Category | Service | Detailed Description |
|---|---|---|
| Storage | File Storage | Storage that allows multiple client servers to share files over a network connection |
| Container | Kubernetes Engine | Kubernetes container orchestration service |
| Container | Container Registry | A service for easily storing, managing, and sharing container images |