Provides an analysis service that can process big data easily and quickly.

This is the multi-page printable view of this section. Click here to print.

Data Analytics

- 1: Event Streams

- 1.1: Overview

- 1.1.1: Server Type

- 1.1.2: Monitoring Metrics

- 1.2: How-to guides

- 1.3: API Reference

- 1.4: CLI Reference

- 1.5: Release Note

- 2: Search Engine

- 2.1: Overview

- 2.1.1: Server Type

- 2.1.2: Monitoring Metrics

- 2.2: How-to guides

- 2.3: API Reference

- 2.4: CLI Reference

- 2.5: Release Note

- 3: Vertica(DBaaS)

- 3.1: Overview

- 3.1.1: Server Type

- 3.1.2: Monitoring Metrics

- 3.2: How-to guides

- 3.2.1: Vertica Backup and Recovery

- 3.3: API Reference

- 3.4: CLI Reference

- 3.5: Release Note

- 4: Data Flow

- 4.1: Overview

- 4.2: How-to guides

- 4.2.1: Data Flow Services

- 4.2.2: Installing Ingress Controller

- 4.3: API Reference

- 4.4: CLI Reference

- 4.5: Release Note

- 5: Data Ops

- 5.1: Overview

- 5.2: How-to guides

- 5.2.1: Data Ops Services

- 5.2.2: Installing Ingress Controller

- 5.3: API Reference

- 5.4: CLI Reference

- 5.5: Release Note

- 6: Quick Query

- 6.1: Overview

- 6.2: How-to guides

- 6.3: API Reference

- 6.4: CLI Reference

- 6.5: Release Note

1 - Event Streams

1.1 - Overview

Service Overview

Event Streams provides fully managed creation and configuration of the open source Apache Kafka for large-scale, massive message data processing. Samsung Cloud Platform automates the creation and configuration of Apache Kafka through a web-based console, and users can configure the main components of Apache Kafka—Broker, Zookeeper, and AKHQ—in a single or clustered form.

Event Streams cluster is composed of multiple Broker nodes, and Brokers can be installed from a minimum of 1 to a maximum of 10, typically installed with 3 or more. Zookeeper can be installed separately to manage the distributed Brokers, and if not installed separately, it is installed together on the Broker node. Additionally, a tool for managing Kafka called AKHQ (Apache Kafka HQ) is provided, allowing users to manage cluster operations through it.

Provided Features

Event Streams provides the following features.

- Auto Provisioning (Auto Provisioning): You can configure and set up an Apache Kafka cluster via the UI.

- Operation Control Management: Provides a function to control the status of running servers. Restart is possible to apply configuration values, along with starting and stopping the cluster.

- Providing AKHQ: By providing AKHQ, a tool that can manage Kafka, users can manage and monitor clusters through it.

- Broker node addition: If expansion is needed to improve cluster performance and stability, you can add nodes with the same specifications as the Broker nodes in use.

- Parameter Management: Performance improvement and security-related configuration parameters can be set and modified.

- Monitoring: CPU, memory, and cluster performance monitoring information can be viewed through the Cloud Monitoring service.

Components

Event Streams provides pre-validated engine versions and various server types in accordance with the open source support policy. Users can select and use them according to the scale of the service they wish to configure.

Engine Version

The engine versions supported by Event Streams are as follows.

Technical support can be used until the supplier’s EoTS (End of Technical Service) date, and the EOS date when new creation is stopped is set to six months before the EoTS date.

According to the supplier’s policy, the EOS and EoTS dates may change, so please refer to the supplier’s license management policy page for details.

- Apache Kafka: https://docs.confluent.io/platform/current/installation/versions-interoperability.html

| Provided Version | EoS Date | EoTS Date |

|---|---|---|

| 3.8.0 | 2026-06 (scheduled) | 2026-12-02 |

Server Type

The server types supported by Event Streams are as follows.

For detailed information about the server types provided by Event Streams, please refer to Event Streams Server Types.

Standard ess1v2m4

| Category | Example | Detailed description |

|---|---|---|

| Server Type | Standard | Provided Server Types

|

| Server Specifications | ess1 | Provided Server Specifications

|

| Server specifications | v2 | Number of vCores

|

| Server Specifications | m4 | Memory Capacity

|

Preceding Service

This is a list of services that must be pre-configured before creating the service. Please refer to the guide provided for each service for details and prepare in advance.

| Service Category | Service | Detailed Description |

|---|---|---|

| Networking | VPC | A service that provides an independent virtual network in a cloud environment |

1.1.1 - Server Type

Event Streams server type

Event Streams provides a server type composed of various combinations such as CPU, Memory, Network Bandwidth, etc. When creating Event Streams, Apache Kafka is installed according to the selected server type suitable for the purpose of use.

The server types supported in Event Streams are as follows.

Standard ess1v2m4

Classification | Example | Detailed Description |

|---|---|---|

| Server Type | Standard | Provided server type distinction

|

| Server Specifications | ess1 | Classification of provided server type and generation

|

| Server Specification | v2 | Number of vCores

|

| Server Specification | m4 | Memory Capacity

|

Please select the server type by checking the node’s minimum specifications as follows.

| Division | vCPU | Memory |

|---|---|---|

| Broker | 2 vCore | 4 GB |

| Zookeeper | 1 vCore | 2 GB |

ess1 server type

The ess1 server type of Event Streams is provided with standard specifications (vCPU, Memory) and is suitable for various database workloads.

- Up to 3.3Ghz Intel 3rd generation (Ice Lake) Xeon Gold 6342 Processor Supports up to 16 vCPUs and 64 GB of memory

- Up to 12.5 Gbps networking speed

| Classification | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| Standard | ess1v1m2 | 1 vCore | 2 GB | Up to 10 Gbps |

| Standard | ess1v2m4 | 2 vCore | 4 GB | Up to 10 Gbps |

| Standard | ess1v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |

| Standard | ess1v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |

| Standard | ess1v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |

| Standard | ess1v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |

| Standard | ess1v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |

| Standard | ess1v16m32 | 16 vCore | 32 GB | Up to 12.5 Gbps |

| Standard | ess1v16m64 | 16 vCore | 64 GB | Up to 12.5 Gbps |

ess2 server type

The ess2 server type of Event Streams is provided with standard specifications (vCPU, Memory) and is suitable for various database workloads.

- Up to 3.2GHz Intel 4th generation (Sapphire Rapids) Xeon Gold 6448H Processor

- Supports up to 16 vCPUs and 64 GB of memory

- Up to 12.5 Gbps networking speed

| Classification | Server Type | CPU vCore | Memory | Network Bandwidth(Gbps) |

|---|---|---|---|---|

| Standard | ess2v1m2 | 1 vCore | 2 GB | Up to 10 Gbps |

| Standard | ess2v2m4 | 2 vCore | 4 GB | Up to 10 Gbps |

| Standard | ess2v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |

| Standard | ess2v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |

| Standard | ess2v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |

| Standard | ess2v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |

| Standard | ess2v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |

| Standard | ess2v16m32 | 16 vCore | 32 GB | Up to 12.5 Gbps |

| Standard | ess2v16m64 | 16 vCore | 64 GB | Up to 12.5 Gbps |

esh2 server type

The esh2 server type of Event Streams is provided with high-capacity server specifications and is suitable for database workloads for large-scale data processing.

- Up to 3.2GHz Intel 4th generation (Sapphire Rapids) Xeon Gold 6448H Processor

- Supports up to 32 vCPUs and 128 GB of memory

- Up to 25Gbps networking speed

| Division | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| High Capacity | esh2v32m64 | 32 vCore | 64 GB | Up to 25 Gbps |

| High Capacity | esh2v32m128 | 32 vCore | 128 GB | Up to 25 Gbps |

1.1.2 - Monitoring Metrics

Event Streams Monitoring Metrics

The following table shows the performance monitoring metrics of Event Streams that can be checked through Cloud Monitoring. For detailed usage of Cloud Monitoring, please refer to the Cloud Monitoring guide.

For server monitoring metrics of Event Streams, please refer to the Virtual Server Monitoring Metrics guide.

| Performance Item | Detailed Description | Unit |

|---|---|---|

| AKHQ State [PID] | AHKQ process PID | PID |

| Connections [Zookeeper Client] | Number of ZooKeeper connections | cnt |

| Disk Used | datadir usage | bytes |

| Failed [Client Fetch Request] | Number of failed client fetch requests | cnt |

| Failed [Produce Request] | Number of failed produce requests | cnt |

| Incomming Messages | Number of messages received by the broker | cnt |

| Instance State [PID] | Kafka process PID | PID |

| Kibana state [PID] | Kibana process PID | PID |

| Leader Elections | Number of leader elections | cnt |

| Leader Elections [Unclean] | Number of unclean leader elections | cnt |

| Log Flushes | Number of log flushes | cnt |

| Network In Bytes | Total bytes received by all topics | bytes |

| Network Out Bytes | Total bytes sent by all topics | bytes |

| Rejected Bytes | Total bytes rejected by all topics | bytes |

| Request Queue Length | Request queue size | cnt |

| Shards | Number of cluster shards | cnt |

| Zookeeper Sessions [Closed] | Number of ZooKeeper sessions closed per second | cnt |

| Zookeeper Sessions [Expired] | Number of ZooKeeper sessions expired per second | cnt |

| Zookeeper State [PID] | Zookeeper process PID | PID |

1.2 - How-to guides

The user can enter the necessary information of Event Streams through the Samsung Cloud Platform Console and create the service by selecting detailed options.

Creating Event Streams

You can create and use the Event Streams service in the Samsung Cloud Platform Console.

Please configure the Subnet type of VPC to General before creating the service.

- If the Subnet type is Local, the creation of the corresponding Database service is not possible.

To create Event Streams, follow these procedures.

Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

On the Service Home page, click the Create Event Streams button. It moves to the Create Event Streams page.

Event Streams Creation page, enter the information required for service creation and select detailed options.

Image and Version Selection area, please select the necessary information.

Classification NecessityDetailed Description Image Version Required Provides a list of Event Streams versions Table. Event Streams service information input itemsEnter Service Information Enter or select the required information in the area.

Classification NecessityDetailed Description Server Name Prefix Required The server name where Apache Kafka will be installed - Starts with lowercase English letters, and uses lowercase letters, numbers, and special characters(

-) to input 3 ~ 13 characters

- A postfix such as 001, 002 is attached based on the server name to create the actual server name

Cluster Name Required Name of the cluster that the servers are configured in - Enter in English, 3-20 characters

- A cluster is a unit that bundles multiple servers

Broker > Broker Node count required Broker Node count Broker > Server Type Required The type of server on which the Broker will be installed - Standard: Standard specification commonly used

- High Capacity: High-capacity server with 24vCore or more

- For more information about the server types provided by Event Streams, see Event Streams server types

Broker > Planned Compute Selection Current status of resources with Planned Compute set - In use: Number of resources with Planned Compute set that are currently in use

- Settings: Number of resources with Planned Compute set

- Coverage preview: Amount applied by resource-based Planned Compute

- Apply for Planned Compute service: Move to the Planned Compute service application page

- For more information, see How to apply for Planned Compute

Broker > Block Storage Required Block Storage type to be used for Broker node - Base OS: Area where the engine is installed

- DATA: Area for storing data files

- Please select the storage type and enter the capacity. (For more information on each Block Storage type, see Creating Block Storage)

- SSD: High-performance general volume

- HDD: General volume

- SSD_KMS/HDD_KMS: Additional encrypted volume using Samsung Cloud Platform KMS (Key Management System) encryption key

- Capacity should be entered in multiples of 8 within the range of 16 to 5,120

- Please select the storage type and enter the capacity. (For more information on each Block Storage type, see Creating Block Storage)

Zookeeper separate installation > use selection Whether to install Zookeeper node separately - Use is selected, Zookeeper nodes are installed separately.

- If Zookeeper nodes are not installed separately, Broker nodes also perform the Zookeeper role.

Zookeeper separate installation > Server type Selection The server type where Zookeeper will be installed - Zookeeper node provides vCPU 1, Memory 2G or vCPU 2, Memory 4G

Zookeeper separate installation > Planned Compute selection Current status of resources with Planned Compute set - In use: Number of resources in use among those with Planned Compute set

- Settings: Number of resources with Planned Compute set

- Coverage preview: Amount applied by resource-based Planned Compute

- Apply for Planned Compute service: Move to the Planned Compute service application page

- For more details, refer to Apply for Planned Compute

Zookeeper separate installation > Block Storage required Block Storage type to be used for Zookeeper node - Basic OS: area where the engine is installed

- DATA: area for storing data files

- After selecting the storage type, enter the capacity. (For more information on each Block Storage type, see Creating Block Storage)

- SSD: high-performance general volume

- HDD: general volume

- SSD_KMS/HDD_KMS: additional encrypted volume using Samsung Cloud Platform KMS (Key Management System) encryption key

- Capacity should be entered in multiples of 8 within the range of 16 to 5,120

- After selecting the storage type, enter the capacity. (For more information on each Block Storage type, see Creating Block Storage)

AKHQ > Use Required Whether AKHQ is installed - Use is selected, AKHQ will be installed.

AKHQ > Server Type Required The type of server where AKHQ will be installed - AKHQ only provides vCPU 2, Memory 4G type

AKHQ > Planned Compute Selection Current status of resources with Planned Compute set - In use: Number of resources with Planned Compute set that are in use

- Settings: Number of resources with Planned Compute set

- Coverage preview: Amount applied by resource-based Planned Compute

- Apply for Planned Compute service: Move to the Planned Compute service application page

- For more information, see Apply for Planned Compute

AKHQ > Block Storage required The type of Block Storage to be used on the server where AKHQ will be installed - Basic OS: The area where the engine is installed

AKHQ > AKHQ account required AKHQ account - Use lowercase English letters to enter 2-20 characters

AKHQ > AKHQ password required AKHQ account password - Enter 8-30 characters, including English, numbers, and special characters (excluding

"’)

AKHQ > AKHQ password confirmation required AKHQ account password confirmation - Re-enter the AKHQ account password identically

AKHQ > AKHQ Port number required AKHQ connection port number - The port number is automatically set to 8080 and cannot be modified

Network > Common Settings Required Network settings for servers created by the service - Select if you want to apply the same settings to all servers being installed

- Select pre-created VPC and Subnet

- IP: Only automatic creation is possible

- Public NAT settings are only possible in server-specific settings.

Network > Server-specific settings Required Network settings for installing servers created by the service - Select to apply different settings for each server being installed

- Select pre-created VPC and Subnet

- IP: Enter the IP for each server

- Public NAT feature is available only when the VPC is connected to the Internet Gateway, checking Use allows selection from the reserved IP in the VPC product’s Public IP. For more information, see Creating Public IP

IP Access Control Select Set service access policy - Set access policy for IP entered on the page, so you don’t need to set Security Group policy separately.

- Enter in IP format (e.g.,

192.168.10.1) or CIDR format (e.g.,192.168.10.0/24,192.168.10.1/32), and click the Add button

- To delete the entered IP, click the x button next to the entered IP

Maintenance period Select Event Streams maintenance period - Use Select the day, start time, and duration

- It is recommended to set the maintenance period for stable management of the service. Patch work will be carried out at the set time and service interruption may occur

- Issues caused by not applying patches (setting to not in use) are not the responsibility of our company

Table. Event Streams Service Configuration Items- Starts with lowercase English letters, and uses lowercase letters, numbers, and special characters(

Database Configuration Required Information Input area, please enter or select the required information.

Classification NecessityDetailed Description Zookeeper SASL account required Zookeeper account - Use lowercase English letters, 2-20 characters

Zookeeper SASL password required Zookeeper account password - Enter 8-30 characters including English, numbers, and special characters (excluding

"’)

Zookeeper SASL password confirmation required Confirm Zookeeper account password - Re-enter the Zookeeper SASL account password identically

Zookeeper Port number required Zookeeper port number 1,024 ~ 65,535can be entered as one of them, and Broker port or2888,3888cannot be used

Broker SASL account required Kafka connection account - Enter within 2-20 characters using lowercase English letters

Broker SASL password required Kafka connection account password - Enter 8-30 characters, including English, numbers, and special characters (excluding

"’)

Broker SASL password confirmation required Kafka connection account password confirmation - Re-enter the Broker SASL account password identically

Broker Port number required Kafka port number 1,024 ~ 65,435can be entered as one of them, and Broker port or2888,3888cannot be used

Parameter Required Event Streams configuration parameters - Inquiry button can be clicked to check detailed information of parameters

- Parameter modification is possible after service creation is completed, and restart is required when modified

Time Zone Selection Standard Time Zone where the service will be used Table. Essential information input items for Event Streams Database configurationEnter Additional Information Enter or select the required information in the area.

Classification NecessityDetailed Description Tag Selection Tag addition - Tag addition button to create and add tags or add existing tags

- Up to 50 tags can be added

- Newly added tags are applied after service creation is completed

Table. Event Streams service additional information input items

In the Summary panel, review the detailed information and estimated charges, and click the Complete button.

- Once creation is complete, check the created resource on the Resource List page.

Event Streams detailed information check

The Event Streams service allows you to view and modify the list of all resources and detailed information. The Event Streams details page consists of Details, Tags, Activity tabs.

To view detailed information about the Event Streams service, follow these steps.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- On the Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- Event Streams list page, click on the resource to check the detailed information. Move to the Event Streams details page.

- Event Streams details page top shows status information and additional function information.

| Division | Detailed Description |

|---|---|

| Cluster Status | Cluster Status

|

| Cluster Control | Buttons that can change the cluster status

|

| Additional features more | Cluster related management buttons

|

| Service Cancellation | Button to cancel the service |

Detailed Information

On the Event Streams list page, you can check the detailed information of the selected resource and modify the information if necessary.

| Classification | Detailed Description |

|---|---|

| Server Information | Server information configured in the corresponding cluster

|

| Service | Service Name |

| Resource Type | Resource Type |

| SRN | Unique resource ID in Samsung Cloud Platform

|

| Resource Name | Resource Name

|

| Resource ID | Unique resource ID in the service |

| Creator | User who created the service |

| Creation Time | Time when the service was created |

| Modifier | Service information modified user |

| Modified Date | Date when service information was modified |

| Image/Version | Installed service image and version information |

| Cluster Name | Server cluster name composed of servers |

| Planned Compute | Planned Compute settings for current resources

|

| Maintenance period | Patch work period setting status

|

| Time Zone | Standard time zone where the service will be used |

| Zookeeper Port number | Zookeeper port number |

| Broker Port number | Kafka port number |

| AKHQ Connection Information | AKHQ Connection Information |

| Network | Installed network information(VPC, Subnet) |

| IP Access Control | Service Access Policy Setting

|

| Zookeeper | Zookeeper node’s server type, default OS, additional disk information

|

| Broker | Broker node’s server type, default OS, additional disk information

|

| AKHQ | AKHQ node’s server type, basic OS information

|

Tag

On the Event Streams list page, you can check the tag information of the selected resource, and add, change, or delete it.

| Classification | Detailed Description |

|---|---|

| Tag list | Tag list

|

Work History

You can check the operation history of the selected resource on the Event Streams list page.

| Classification | Detailed Description |

|---|---|

| Work history list | Resource change history

|

Managing Event Streams Resources

If you need to change the existing setup options for the generated Event Streams resource or manage Parameters, or configure additional Broker Nodes, you can perform the task from the Event Streams details page.

Operating Control

If changes occur to the Event Streams resource while it is running, you can start, stop, or restart it.

To control the operation of Event Streams, follow these procedures.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- On the Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- Event Streams list page, click the resource to control the operation. Move to the Event Streams details page.

- Check the status of Event Streams and complete the change through the control buttons below.

- Start: The server where the Event Streams service is installed and the Event Streams service is running.

- 중지: Event Streams service installed server and Event Streams service will be stopped.

- Restart: Only the Event Streams service will be restarted.

Synchronizing Service Status

You can query the current server status and synchronize it to the Console.

To synchronize the service status of Event Streams, follow these procedures.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- On the Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- On the Event Streams list page, click the resource to query the service status. It moves to the Event Streams details page.

- Service Status Synchronization button should be clicked. It takes a little time to retrieve, and during retrieval, the cluster changes to Synchronizing status.

- Once the inquiry is completed, the status of the server information item is updated, and the cluster is changed to the Running state.

Managing Parameters

It provides parameter inquiry and modification functions.

To view or modify the configuration parameters, follow these steps.

- 모든 서비스 > Data Analytics > Event Streams menu should be clicked. It moves to the Service Home page of Event Streams.

- Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- Event Streams list page, click the resource you want to query and modify parameters. It moves to the Event Streams detail page.

- Click the Parameter Management button. It moves to the Parameter Management page.

- On the Parameter Management page, click the Search button. The Database Search popup window opens.

- To inquire about parameter information, click the Confirm button. It may take some time to retrieve.

- You can modify the Parameter information after performing the inquiry.

- To modify the Parameter information, click the Modify button and enter the modification details in the Custom Value area of the Parameter to be modified.

- If the application type is dynamic, it is reflected immediately, and if it is static, a service restart is required, so the service is stopped.

- When the input is complete, click the Save button.

Changing the Server Type

You can change the configured server type.

To change the server type, follow these steps.

- If the server type is configured as Standard, it cannot be changed to High Capacity. If you want to change to High Capacity, please create a new service.

- Modifying the server type requires a server restart. Please check separately for SW license modifications and SW settings due to specification changes.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- On the Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- On the Event Streams list page, click the resource for which you want to change the server type. It moves to the Event Streams detail page.

- Click the Edit button of the server type you want to change at the bottom of the detailed information. The Server Type Edit popup window opens.

- Server Type Modification In the server type modification popup window, select the server type and click the OK button.

Expanding Storage

The storage added to the data area can be expanded up to a maximum of 5TB based on the initially allocated capacity. Storage can be expanded without interrupting Event Streams, and if configured as a cluster, all nodes are expanded simultaneously.

- If encryption is set for the existing Block Storage, encryption will also be applied to the additional Disk.

- Disk size modification is only possible if it is 16GB or more larger than the current disk size.

To increase the storage capacity, follow the next procedure.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- On the Event Streams list page, click the resource for which you want to change the server type. It moves to the Event Streams details page.

- Click the Edit button of the additional Disk you want to add at the bottom of the detailed information. The Disk Edit popup window opens.

- In the Disk Modification popup window, enter the expansion capacity and click the Confirm button.

Add Broker Node

If Event Streams cluster expansion is needed, nodes can be added with the same specifications as the Broker Node in use. The added nodes are added to the existing cluster without server downtime, and the existing data is automatically distributed.

- You can use up to 10 nodes within the cluster. Please note that additional nodes created will be charged extra.

- The cluster performance may be degraded while adding nodes.

To add a Broker node, follow these steps.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- On the Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- Event Streams Resource Click the resource you want to recover from the list page of Event Streams Resources. It moves to the Event Streams Details page.

- Click the Add Broker Node button. It moves to the Add Broker Node page.

- Required Information Input area, enter the corresponding information, and then click the Complete button.

| Classification | Necessity | Detailed Description |

|---|---|---|

| Server Name | Required | Server name where Broker is installed

|

| Cluster Name | Required | Cluster Name

|

| Additional Node count | Required | Number of Nodes to add

|

| Service Type > Server Type | Required | The type of server where the Broker will be installed

|

| Service Type > Planned Compute | Selection | Current status of resources with Planned Compute set

|

| Service Type > Block Storage | Required | Block Storage settings to be used for Broker nodes

|

| Network | Required | The network where servers are installed

|

Cancel Event Streams

You can cancel unused Event Streams to reduce operating costs. However, when canceling the service, the operating service may be stopped immediately, so you should consider the impact of stopping the service sufficiently before proceeding with the cancellation work.

To cancel Event Streams, follow these procedures.

- Click All services > Data Analytics > Event Streams menu. It moves to the Service Home page of Event Streams.

- Service Home page, click the Event Streams menu. It moves to the Event Streams list page.

- Event Streams list page, select the resource to be canceled and click the Service Cancellation button.

- Once the cancellation is complete, check if the resource has been cancelled on the Event Streams listing page.

1.3 - API Reference

1.4 - CLI Reference

1.5 - Release Note

Event Streams

- It provides Terraform.

- HDD, HDD_KMS disk types are also provided.

- An Event Streams service that easily creates and manages Apache Kafka clusters in a web environment has been released.

2 - Search Engine

2.1 - Overview

Service Overview

Search Engine provides automated creation and configuration of the distributed search and analytics engines Elasticsearch and OpenSearch through a web-based console. Users can select a server type that fits the system configuration to set up a cluster, and it supports the data analysis and visualization tools Kibana and the OpenSearch dashboard.

- Search Engine provides Elasticsearch Enterprise version and OpenSearch version.

- Elasticsearch Enterprise’s software license uses a user-owned license (BYOL, Bring Your Own License), and the software license policy in cloud environments must follow the supplier’s policy.

Search Engine Cluster consists of multiple master nodes and data nodes. Data nodes can be installed from a minimum of 1 up to a maximum of 10, and are usually installed with three or more. If a separate master node is not installed, the data node also performs the role of the master node, and up to 10 can be installed. When a separate master node is installed, data nodes can be up to 50.

Provided Features

Search Engine provides the following functions.

- Auto Provisioning (Auto Provisioning): You can configure and set up Elasticsearch and OpenSearch clusters via the UI.

- Operation Control Management: Provides a function to control the status of running servers. It allows restarting to apply configuration values, in addition to starting and stopping the cluster.

- Backup and Recovery: Backup is possible using the built-in backup function, and recovery is possible to the point in time of the backup file.

- Data Node Addition: If cluster expansion is needed, you can add nodes with the same specifications as the data nodes in use. Up to 10 nodes can be added within the cluster.

- Visualization tool support: Provides data analysis and visualization tools, and supports Elasticsearch Kibana or OpenSearch dashboards.

- Monitoring: CPU, Memory, cluster performance monitoring information can be checked through the Cloud Monitoring service.

Components

Search Engine provides pre-validated engine versions and various server types according to the open source support policy. Users can select and use them according to the scale of the service they want to configure.

Engine Version

Search Engine the supported engine versions are as follows.

Technical support can be used until the supplier’s EoTS (End of Technical Service) date, and the EOS date when new creation is stopped is set to six months before the EoTS date.

According to the supplier’s policy, the EOS and EoTS dates may change, so please refer to the supplier’s license management policy page for details.

Search Engine’s next version is scheduled to be provided after December 18, 2025. The actual service provision schedule may change.

- ElasticSearch Enterprise 8.19.0 version

- OpenSearch 2.19.3 version

- OpenSearch 3.2.0 version

| Provided Version | EoS Date | EoTS Date |

|---|---|---|

| 8.15.0 | TBD | TBD |

- OpenSearch: https://opensearch.org/releases/

| Provided Version | EoS Date | EoTS Date |

|---|---|---|

| 2.17.1 | TBD | TBD |

Server Type

The server types supported by Search Engine are as follows.

For detailed information about the server types provided by Search Engine, please refer to Search Engine Server Type.

Standard se1v2m4

| Category | Example | Detailed Description |

|---|---|---|

| Server Type | Standard | Provided server type

|

| Server specifications | se1 | Provided server specifications

|

| Server specifications | v2 | vCore count

|

| Server specifications | m4 | Memory capacity

|

Preliminary Service

This is a list of services that must be pre-configured before creating the service. Please refer to the guide provided for each service for details and prepare in advance.

| Service Category | Service | Detailed Description |

|---|---|---|

| Networking | VPC | A service that provides an independent virtual network in a cloud environment |

2.1.1 - Server Type

Search Engine server type

Search Engine provides a server type composed of various combinations such as CPU, Memory, Network Bandwidth, etc. When creating a Search Engine, Elastic Search is installed according to the server type selected to match the purpose of use.

The server types supported by the Search Engine are as follows.

Standard ses1v2m4

Classification | Example | Detailed Description |

|---|---|---|

| Server Type | Standard | Provided server type distinction

|

| Server Specification | db1 | Classification of provided server type and generation

|

| Server Specification | v2 | Number of vCores

|

| Server Specification | m4 | Memory Capacity

|

ses1 server type

The ses1 server type of Search Engine is provided with standard specifications (vCPU, Memory) and is suitable for various database workloads.

- Up to 3.3Ghz Intel 3rd generation (Ice Lake) Xeon Gold 6342 Processor

- Supports up to 16 vCPUs and 256 GB of memory

- Up to 12.5 Gbps networking speed

| Classification | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| Standard | ses1v1m2 | 1 vCore | 2 GB | Up to 10 Gbps |

| Standard | ses1v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |

| Standard | ses1v2m16 | 2 vCore | 16 GB | up to 10 Gbps |

| Standard | ses1v2m24 | 2 vCore | 24 GB | Up to 10 Gbps |

| Standard | ses1v2m32 | 2 vCore | 32 GB | Up to 10 Gbps |

| Standard | ses1v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |

| Standard | ses1v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |

| Standard | ses1v4m32 | 4 vCore | 32 GB | Up to 10 Gbps |

| Standard | ses1v4m48 | 4 vCore | 48 GB | Up to 10 Gbps |

| Standard | ses1v4m64 | 4 vCore | 64 GB | up to 10 Gbps |

| Standard | ses1v6m12 | 6 vCore | 12 GB | Up to 10 Gbps |

| Standard | ses1v6m24 | 6 vCore | 24 GB | Up to 10 Gbps |

| Standard | ses1v6m48 | 6 vCore | 48 GB | Up to 10 Gbps |

| Standard | ses1v6m72 | 6 vCore | 72 GB | Up to 10 Gbps |

| Standard | ses1v6m96 | 6 vCore | 96 GB | Up to 10 Gbps |

| Standard | ses1v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |

| Standard | ses1v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |

| Standard | ses1v8m64 | 8 vCore | 64 GB | Up to 10 Gbps |

| Standard | ses1v8m96 | 8 vCore | 96 GB | Up to 10 Gbps |

| Standard | ses1v8m128 | 8 vCore | 128 GB | Up to 10 Gbps |

| Standard | ses1v10m20 | 10 vCore | 20 GB | Up to 10 Gbps |

| Standard | ses1v10m40 | 10 vCore | 40 GB | Up to 10 Gbps |

| Standard | ses1v10m80 | 10 vCore | 80 GB | up to 10 Gbps |

| Standard | ses1v10m120 | 10 vCore | 120 GB | Up to 10 Gbps |

| Standard | ses1v10m160 | 10 vCore | 160 GB | up to 10 Gbps |

| Standard | ses1v12m24 | 12 vCore | 24 GB | Up to 12.5 Gbps |

| Standard | ses1v12m48 | 12 vCore | 48 GB | Up to 12.5 Gbps |

| Standard | ses1v12m96 | 12 vCore | 96 GB | up to 12.5 Gbps |

| Standard | ses1v12m144 | 12 vCore | 144 GB | Up to 12.5 Gbps |

| Standard | ses1v12m192 | 12 vCore | 192 GB | up to 12.5 Gbps |

| Standard | ses1v14m28 | 14 vCore | 28 GB | Up to 12.5 Gbps |

| Standard | ses1v14m56 | 14 vCore | 56 GB | Up to 12.5 Gbps |

| Standard | ses1v14m112 | 14 vCore | 112 GB | Up to 12.5 Gbps |

| Standard | ses1v14m168 | 14 vCore | 168 GB | up to 12.5 Gbps |

| Standard | ses1v14m224 | 14 vCore | 224 GB | Up to 12.5 Gbps |

| Standard | ses1v16m32 | 16 vCore | 32 GB | Up to 12.5 Gbps |

| Standard | ses1v16m64 | 16 vCore | 64 GB | up to 12.5 Gbps |

| Standard | ses1v16m128 | 16 vCore | 128 GB | up to 12.5 Gbps |

| Standard | ses1v16m192 | 16 vCore | 192 GB | up to 12.5 Gbps |

| Standard | ses1v16m256 | 16 vCore | 256 GB | up to 12.5 Gbps |

ses2 server type

The ses1 server type of Search Engine is provided with standard specifications (vCPU, Memory) and is suitable for various database workloads.

- Up to 3.2GHz Intel 4th generation (Sapphire Rapids) Xeon Gold 6448H Processor

- Supports up to 16 vCPUs and 256 GB of memory

- Up to 12.5 Gbps networking speed

| Classification | Server Type | CPU vCore | Memory | Network Bandwidth(Gbps) |

|---|---|---|---|---|

| Standard | ses2v2m4 | 2 vCore | 4 GB | Up to 10 Gbps |

| Standard | ses2v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |

| Standard | ses2v2m16 | 2 vCore | 16 GB | Up to 10 Gbps |

| Standard | ses2v2m24 | 2 vCore | 24 GB | Up to 10 Gbps |

| Standard | ses2v2m32 | 2 vCore | 32 GB | Up to 10 Gbps |

| Standard | ses2v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |

| Standard | ses2v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |

| Standard | ses2v4m32 | 4 vCore | 32 GB | Up to 10 Gbps |

| Standard | ses2v4m48 | 4 vCore | 48 GB | Up to 10 Gbps |

| Standard | ses2v4m64 | 4 vCore | 64 GB | Up to 10 Gbps |

| Standard | ses2v6m12 | 6 vCore | 12 GB | Up to 10 Gbps |

| Standard | ses2v6m24 | 6 vCore | 24 GB | Up to 10 Gbps |

| Standard | ses2v6m48 | 6 vCore | 48 GB | Up to 10 Gbps |

| Standard | ses2v6m72 | 6 vCore | 72 GB | Up to 10 Gbps |

| Standard | ses2v6m96 | 6 vCore | 96 GB | Up to 10 Gbps |

| Standard | ses2v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |

| Standard | ses2v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |

| Standard | ses2v8m64 | 8 vCore | 64 GB | up to 10 Gbps |

| Standard | ses2v8m96 | 8 vCore | 96 GB | Up to 10 Gbps |

| Standard | ses2v8m128 | 8 vCore | 128 GB | Up to 10 Gbps |

| Standard | ses2v10m20 | 10 vCore | 20 GB | Up to 10 Gbps |

| Standard | ses2v10m40 | 10 vCore | 40 GB | Up to 10 Gbps |

| Standard | ses2v10m80 | 10 vCore | 80 GB | Up to 10 Gbps |

| Standard | ses2v10m120 | 10 vCore | 120 GB | Up to 10 Gbps |

| Standard | ses2v10m160 | 10 vCore | 160 GB | Up to 10 Gbps |

| Standard | ses2v12m24 | 12 vCore | 24 GB | Up to 12.5 Gbps |

| Standard | ses2v12m48 | 12 vCore | 48 GB | Up to 12.5 Gbps |

| Standard | ses2v12m96 | 12 vCore | 96 GB | Up to 12.5 Gbps |

| Standard | ses2v12m144 | 12 vCore | 144 GB | Up to 12.5 Gbps |

| Standard | ses2v12m192 | 12 vCore | 192 GB | Up to 12.5 Gbps |

| Standard | ses2v14m28 | 14 vCore | 28 GB | Up to 12.5 Gbps |

| Standard | ses2v14m56 | 14 vCore | 56 GB | Up to 12.5 Gbps |

| Standard | ses2v14m112 | 14 vCore | 112 GB | Up to 12.5 Gbps |

| Standard | ses2v14m168 | 14 vCore | 168 GB | Up to 12.5 Gbps |

| Standard | ses2v14m224 | 14 vCore | 224 GB | up to 12.5 Gbps |

| Standard | ses2v16m32 | 16 vCore | 32 GB | Up to 12.5 Gbps |

| Standard | ses2v16m64 | 16 vCore | 64 GB | up to 12.5 Gbps |

| Standard | ses2v16m128 | 16 vCore | 128 GB | Up to 12.5 Gbps |

| Standard | ses2v16m192 | 16 vCore | 192 GB | Up to 12.5 Gbps |

| Standard | ses2v16m256 | 16 vCore | 256 GB | up to 12.5 Gbps |

SEH2 server type

The seh2 server type of Search Engine is provided with large-capacity server specifications and is suitable for database workloads for large-scale data processing.

- Up to 3.2GHz Intel 4th generation (Sapphire Rapids) Xeon Gold 6448H Processor

- Supports up to 72 vCPUs and 288 GB of memory

- Up to 25Gbps networking speed

| Classification | Server Type | vCPU | Memory | Network Bandwidth |

|---|---|---|---|---|

| High Capacity | seh2v24m48 | 24 vCore | 48 GB | Up to 25 Gbps |

| High Capacity | seh2v24m96 | 24 vCore | 96 GB | Up to 25 Gbps |

| High Capacity | seh2v24m192 | 24 vCore | 192 GB | Up to 25 Gbps |

| High Capacity | seh2v24m288 | 24 vCore | 288 GB | Up to 25 Gbps |

| High Capacity | seh2v32m64 | 32 vCore | 64 GB | Up to 25 Gbps |

| High Capacity | seh2v32m128 | 32 vCore | 128 GB | Up to 25 Gbps |

| High Capacity | seh2v32m256 | 32 vCore | 256 GB | Up to 25 Gbps |

| High Capacity | seh2v48m96 | 48 vCore | 96 GB | Up to 25 Gbps |

| High Capacity | seh2v48m192 | 48 vCore | 192 GB | Up to 25 Gbps |

| High Capacity | seh2v64m128 | 64 vCore | 128 GB | Up to 25 Gbps |

| High Capacity | seh2v64m256 | 64 vCore | 256 GB | Up to 25 Gbps |

| High Capacity | seh2v72m144 | 72 vCore | 144 GB | Up to 25 Gbps |

| High Capacity | seh2v72m288 | 72 vCore | 288 GB | Up to 25 Gbps |

2.1.2 - Monitoring Metrics

Search Engine Monitoring Metrics

The following table shows the performance monitoring metrics of Event Streams that can be checked through Cloud Monitoring. For detailed Cloud Monitoring usage, please refer to the Cloud Monitoring guide.

For server monitoring metrics of the Search Engine, please refer to the Virtual Server Monitoring Metrics guide.

| Performance Item | Detailed Description | Unit |

|---|---|---|

| Disk Usage | datadir usage | MB |

| Documents [Deleted] | total number of deleted documents | cnt |

| Documents [Existing] | total number of existing documents | cnt |

| Filesystem Bytes [Available] | available filesystem | bytes |

| Filesystem Bytes [Free] | free filesystem | bytes |

| Filesystem Bytes [Total] | total filesystem | bytes |

| Instance Status [PID] | Elasticsearch process PID | PID |

| JVM Heap Used [Init] | JVM heap used init (bytes) | bytes |

| JVM Heap Used [MAX] | JVM heap used max (bytes) | bytes |

| JVM Non Heap Used [Init] | JVM non-heap used init (bytes) | bytes |

| JVM Non Heap Used [MAX] | JVM non-heap used max (bytes) | bytes |

| Kibana Connections | Kibana connections | cnt |

| Kibana Memory Heap Allocated [Limit] | maximum allocated Node.js process heap size (bytes) | bytes |

| Kibana Memory Heap Allocated [Total] | total allocated Node.js process heap size (bytes) | bytes |

| Kibana Memory Heap Used | used Node.js process heap size (bytes) | bytes |

| Kibana Process Uptime | Kibana process uptime | ms |

| Kibana Requests [Disconnected] | request count metric | cnt |

| Kibana Requests [Total] | request count metric | cnt |

| Kibana Response Time [Avg] | response time metric | ms |

| Kibana Response Time [MAX] | response time metric | ms |

| Kibana Status [PID] | Kibana process PID | PID |

| License Expiry Date [ms] | license expiry date [milliseconds] | ms |

| License Status | license status | status |

| License Type | license type | type |

| Queue Time | queue time | ms |

| Segments | total number of segments | cnt |

| Segments Bytes | total segment size (bytes) | bytes |

| Shards | cluster shard count | cnt |

| Store Bytes | total store size (bytes) | bytes |

2.2 - How-to guides

The user can enter the necessary information of the Search Engine through the Samsung Cloud Platform Console and create the service by selecting detailed options.

Creating Search Engine

You can create and use the Search Engine service in the Samsung Cloud Platform Console.

Please configure the Subnet type of VPC to General before creating the service.

- If the Subnet type is Local, the creation of the corresponding Database service is not possible.

To create a Search Engine, follow the next procedure.

All Services > Database > Search Engine menu, click. It moves to the Service Home page of Search Engine.

On the Service Home page, click the Create Search Engine button. It moves to the Create Search Engine page.

Search Engine Creation page where you enter the information needed to create a service and select detailed options.

Image and Version Selection area, please select the necessary information.

Classification NecessityDetailed Description Image Required Select the type of image provided - Elasticsearch Enterprise, OpenSearch

Image Version Required Select the version of the selected image - Provide a list of versions of the server image provided

Fig. Search Engine image and version selection itemsEnter Service Information area, please enter or select the necessary information.

Classification NecessityDetailed Description Server Name Prefix Required The name of the server where Elasticsearch will be installed - Starts with a lowercase letter, using lowercase letters, numbers, and special characters (

-) to input 3 ~ 13 characters

- A postfix such as 001, 002 is attached based on the server name to create the actual server name

Cluster Name Required Name of the cluster that the servers are configured in - Enter in English, 3-20 characters

- The cluster is a unit that bundles multiple servers

MasterNode separate installation > use essential Whether to install the Master node separately - Use is selected, the Master node is installed separately.

- If the Master node is not installed separately, the data node also performs the master role.

MasterNode separate installation > MasterNode count required Number of Master nodes - The master node is installed with a fixed 3 units for failover recovery.

MasterNode separate installation > Server type Required Master node server type - Standard: Standard specification commonly used

- High Capacity: High-capacity server with 24vCore or more

- For more information on the server type provided by Search Engine, refer to Search Engine server type

MasterNode separate installation > Planned Compute selection Current status of resources with Planned Compute set - In use: Number of resources in use among those with Planned Compute set

- Settings: Number of resources with Planned Compute set

- Coverage preview: Amount applied by resource-based Planned Compute

- Apply for Planned Compute service: Move to the Planned Compute service application page

- For more information, see Apply for Planned Compute

MasterNode separate installation > Block Storage required Master node block storage type - Basic OS: area where the engine is installed

- DATA: data file storage area

- After selecting the storage type, enter the capacity. (For more information on each block storage type, see Creating Block Storage)

- SSD: high-performance general volume

- HDD: general volume

- SSD_KMS/HDD_KMS: additional encrypted volume using KMS (Key Management System) encryption key

- Capacity can be entered in multiples of 8 in the range of 16 to 5,120

- After selecting the storage type, enter the capacity. (For more information on each block storage type, see Creating Block Storage)

- Add Disk: data storage area

- After selecting Use, enter the Capacity of the storage

- To add storage, click the + button, and to delete, click the x button. Up to 9 can be added.

- Capacity can be entered in multiples of 8 in the range of 16 to 5,120, and up to 9 can be created

Number of Nodes Required Number of Data Nodes - In cases where the Master node is installed separately, 2 or more nodes are required, and in other cases, 1 or more nodes must be selected.

Service Type > Server Type Required Data Node Server Type - Standard: generally used standard specification

- High Capacity: high-capacity server with 24vCore or more

Service Type > Planned Compute Selection Current status of resources with Planned Compute set - In Use: Number of resources with Planned Compute set that are in use

- Settings: Number of resources with Planned Compute set

- Coverage Preview: Amount applied by Planned Compute for each resource

- Apply for Planned Compute Service: Move to the Planned Compute service application page

- For more information, see How to Apply for Planned Compute

Service Type > Block Storage Required Type of Block Storage to be used for data node - Basic OS: Area where the engine is installed

- DATA: Data file storage area

- Please select the storage type and enter the capacity. (For more information on each Block Storage type, refer to Creating Block Storage)

- SSD: High-performance general volume

- HDD: General volume

- SSD_KMS/HDD_KMS: Additional encrypted volume using KMS (Key Management System) encryption key

- Capacity can be entered in multiples of 8 within the range of 16 to 5,120

- Please select the storage type and enter the capacity. (For more information on each Block Storage type, refer to Creating Block Storage)

- Disk Add: Additional storage area for data, backup

- Select Use and enter the Purpose and Capacity of the storage

- To add storage, click the + button, and to delete, click the x button. Up to 9 can be added.

- Capacity can be entered in multiples of 8 within the range of 16 to 5,120, and up to 9 can be created

Kibana > Server Type Required The type of server where Kibana will be installed - Standard: Standard specification commonly used

Kibana > Planned Compute Selection Current status of resources with Planned Compute set - In use: Number of resources with Planned Compute set that are in use

- Settings: Number of resources with Planned Compute set

- Coverage preview: Amount applied by resource-based Planned Compute

- Apply for Planned Compute service: Move to the Planned Compute service application page

- For more information, see How to apply for Planned Compute

Kibana > Block Storage required The type of Block Storage to be used on the server where Kibana will be installed - Basic OS: The area where the engine is installed

Network > Common Settings Required Network settings for servers created by the service - Select if you want to apply the same settings to all servers being installed

- Select pre-created VPC and Subnet

- IP: Only automatic creation is possible

- Public NAT settings are only possible in server-specific settings.

Network > Server Settings Required Network settings for installing servers created by the service - Select to apply different settings for each server being installed

- Select pre-created VPC and Subnet

- IP: Enter the IP for each server

- The Public NAT function is available only when the VPC is connected to the Internet Gateway, and if Use is checked, you can select from the reserved IP in the VPC product’s Public IP. For more information, see Creating Public IP

IP Access Control Select Set service access policy - Set access policy for IPs entered on the page, so you don’t need to set Security Group policy separately.

- Enter in IP format (e.g.,

192.168.10.1) or CIDR format (e.g.,192.168.10.0/24,192.168.10.1/32), and click the Add button

- To delete the entered IP, click the x button next to the entered IP

Maintenance period Select Search Engine maintenance period - Use Selecting the day of the week, start time, and duration

- It is recommended to set the maintenance period for stable management of the service. Patch work is performed at the set time and service interruption occurs

- If set to unused, problems that occur due to non-application of patches are not the responsibility of our company.

Table. Search Engine service information input items- Starts with a lowercase letter, using lowercase letters, numbers, and special characters (

Database Configuration Required Information Input area, please enter or select the required information.

Classification NecessityDetailed Description Backup > Use Selection Whether to use node backup - If node backup is selected, select the storage period and backup start time

Backup > Retention Period Select Backup Retention Period - Please select the backup retention period. The file retention period can be set from 7 to 35 days

- Backup files may incur additional charges depending on capacity.

Backup > Backup start time Select Backup start time - Select the backup start time

- The minutes when the backup is performed are set randomly, and the backup end time cannot be set

Cluster Port number Required Elasticsearch connection port number 1,024 ~ 65,535can be entered as one of them, and Elasticsearch internal port 9300 and Kibana port 5301 are not available

Elastic username required Elasticsearch username - Enter within 2-20 characters using lowercase English letters

- The following usernames cannot be used.

- apm_system, beats_system, elastic, kibana, kibana_system, logstash_system, remote_monitoring_user, scp_kibana_system, scp_manager, maxigent_cl

Elastic password required Elasticsearch connection password - Enter 8-30 characters, including English, numbers, and special characters (excluding

"’</code>)

Elastic password confirmation required Confirm Elasticsearch connection password - Re-enter the Elasticsearch connection password identically

License Key required Elasticsearch License Key - Enter the entire contents of the issued license file (.json)

- If the entered license key is not valid, service creation may not be possible.

- OpenSearch does not require a License Key.

Time Zone Selection Standard Time Zone where the service will be used Fig. Search Engine Database Configuration Required Information Input ItemsEnter Additional Information Enter or select the required information in the area.

Classification NecessityDetailed Description Tag Select Add Tag - Add Tag button to create and add a tag or add an existing tag

- Up to 50 tags can be added

- Newly added tags will be applied after service creation is complete

Fig. Search Engine service additional information input items

In the Summary panel, review the detailed information and estimated charges, then click the Complete button.

- Once creation is complete, check the created resource on the Resource List page.

Search Engine detailed information check

The Search Engine service can check and modify the entire resource list and detailed information. The Search Engine details page consists of detailed information, tags, and work history tabs.

To check the detailed information of the Search Engine service, follow the next procedure.

- All services > Data Analytics > Search Engine menu, click. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click on the resource to check the detailed information. Move to the Search Engine details page.

- Search Engine Details The top of the page displays status information and additional features.

| Classification | Detailed Description |

|---|---|

| Cluster Status | Cluster Status

|

| Cluster Control | Buttons that can change the cluster status

|

| Additional features more | Cluster related management buttons

|

| Service Cancellation | Button to cancel the service |

Detailed Information

On the Search Engine List page, you can check the detailed information of the selected resource and modify the information if necessary.

| Classification | Detailed Description |

|---|---|

| Server Information | Server information configured in the corresponding cluster

|

| Service | Service Name |

| Resource Type | Service Name |

| SRN | Unique resource ID in Samsung Cloud Platform

|

| Resource Name | Resource Name

|

| Resource ID | Unique resource ID in the service |

| Creator | Service creator user |

| Creation Time | Time when the service was created |

| Modifier | Service information modified user |

| Modified Time | Time when service information was modified |

| Image/Version | Installed service image and version information |

| Cluster Name | Server cluster name composed of servers |

| Planned Compute | Planned Compute status of set resources

|

| Maintenance Period | Maintenance Period Status

|

| Backup | Backup setting status

|

| Time Zone | Standard time zone where the service will be used |

| License | Elasticsearch license information

|

| Elastic username | Elasticsearch user name |

| Kibana connection information | Kibana connection information |

| Network | Installed network information(VPC, Subnet) |

| IP access control | Service access policy setting

|

| Master | Master node’s server type, default OS, additional Disk information

|

| Data | Broker node’s server type, default OS, additional Disk information

|

| Kibana | Kibana node server type, basic OS information

|

Tag

On the Search Engine list page, you can check the tag information of the selected resource, and add, change, or delete it.

| Classification | Detailed Description |

|---|---|

| Tag List | Tag List

|

Work History

You can check the operation history of the resource selected on the Search Engine list page.

| Classification | Detailed Description |

|---|---|

| Work History List | Resource Change History

|

Search Engine Resource Management

If you need to change the existing setting options of the generated Search Engine resource or manage Parameters, or configure additional Nodes, you can perform the task on the Search Engine details page.

Operating Control

If there are changes to the Search Engine resources in operation, you can start, stop, and restart them.

To control the operation of the Search Engine, follow the next procedure.

- Click on the menu of all services > Data Analytics > Search Engine. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click the resource to control the operation. Move to the Search Engine details page.

- Check the Search Engine status and complete the change through the control button below.

- Start: The Search Engine service is installed on the server and the Search Engine service is running.

- Stop: The Search Engine service installed on the server and the Search Engine service will be stopped.

- Restart: Only the Search Engine service will be restarted.

Synchronizing Service Status

You can query the current server status and synchronize it to the Console.

To synchronize the service status of the Search Engine, follow the next procedure.

- Click All Services > Data Analytics > Search Engine menu. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click on the resource to check the service status. Move to the Search Engine details page.

- Service Status Synchronization button should be clicked. It takes some time to retrieve, and during retrieval, the cluster changes to Synchronizing status.

- When the query is complete, the status in the server information item is updated, and the cluster changes to Running status.

Changing Server Type

You can change the configured server type.

To change the server type, follow these steps.

- If the server type is configured as Standard, it cannot be changed to High Capacity. If you want to change to High Capacity, please create a new service.

- Modifying the server type requires a server restart. Please check separately for SW license modifications due to specification changes, as well as SW settings and reflections.

- All services > Data Analytics > Search Engine menu is clicked. It moves to the Service Home page of Search Engine.

- Service Home page, click the Search Engine menu. Move to the Search Engine list page.

- Search Engine list page, click the resource to change the server type. Move to the Search Engine details page.

- Click the Edit button of the Server Type you want to change at the bottom of the detailed information. The Edit Server Type popup window opens.

- In the Server Type Modification popup window, select the server type and click the Confirm button.

Expanding Storage

The storage added to the data area can be expanded up to a maximum of 5TB based on the initially allocated capacity. Storage can be expanded without stopping the Search Engine, and if configured in a cluster, all nodes are expanded simultaneously.

- If encryption is set for the existing Block Storage, encryption will also be applied to the additional Disk. The disk size modification is only possible if it is 16GB or more larger than the current disk size.

To increase the storage capacity, follow the procedure below.

- All services > Data Analytics > Search Engine menu is clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click the resource to change the server type. It moves to the Search Engine details page.

- Click the Modify button of the additional Disk you want to add at the bottom of the detailed information. The Disk Modification popup window opens.

- In the Disk Modification popup window, enter the expansion capacity and click the Confirm button.

Adding Storage

If you need more than 5TB of data storage space, you can add storage.

- If encryption is set for the existing Block Storage, encryption will also be applied to the additional Disk.

To add storage capacity, follow these steps.

- All services > Data Analytics > Search Engine menu is clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click the resource to add storage. Move to the Search Engine details page.

- Click the Add Disk button at the bottom of the detailed information. The Add Disk popup window opens.

- In the Add Disk popup window, enter the purpose and capacity, then click the OK button.

Search Engine Backup

Through the backup settings function, users can set the data retention period and start cycle, and perform backup history inquiry and deletion through the backup history function.

Setting up backup

While creating the Search Engine, the procedure for setting up the backup is to refer to the Search Engine creation guide, and to modify the backup settings of the created resource, follow the following procedure.

- If backup is set, backup will be performed at the specified time after the set time, and additional fees will be incurred depending on the backup capacity.

- If the backup setting is changed to unset, the backup operation will be stopped immediately, and the saved backup data will be deleted and can no longer be used.

- All Services > Data Analytics > Search Engine menu is clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click the resource to set the backup. Move to the Search Engine details page.

- Click the Edit button of the backup item. The Backup Edit popup window opens.

- If you set up a backup, click Use in the Backup Modification popup window, select the storage period, backup start time, and Archive backup cycle, and then click the Confirm button.

- If you want to stop the backup settings, uncheck Use in the Backup Modification popup window and click the OK button.

Check Backup History

To view the backup history, follow these steps.

- All Services > Data Analytics > Search Engine menu is clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click the resource to check the backup history. Move to the Search Engine details page.

- Click the Backup History button. The Backup History popup window opens.

- Backup History popup window where you can check the backup status, version, backup start time, backup completion time, and capacity.

Deleting backup files

To delete the backup history, follow these steps.

- Click on the menu for all services > Data Analytics > Search Engine. It moves to the Service Home page of the Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, click the resource to check the backup history. Move to the Search Engine details page.

- Click the Backup History button. The Backup History popup window opens.

- In the backup history popup window, check the file you want to delete and click the Delete button.

Search Engine recovery

In the event of a failure or data loss that requires restoration from a backup file, cluster recovery allows recovery based on a specific point in time.

To restore the Search Engine, follow the following procedure.

- All Servives > Data Analytics > Search Engine menu should be clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine Resource Click on the resource you want to restore from the list page. It moves to the Search Engine Details page.

- Click the Cluster Recovery button. It moves to the Cluster Recovery page.

- Enter the corresponding information in the Cluster Recovery Configuration area, and then click the Complete button.

| Classification | Necessity | Detailed Description |

|---|---|---|

| Recovery Point | Required | Set the point in time that the user wants to recover

|

| Server Name Prefix | Required | Recovery Server Name

|

| Cluster Name | Required | Recovery Server Cluster Name

|

| Number of Nodes | Required | Number of Data Nodes

|

| Service Type > Server Type | Required | Data Node Server Type

|

| Service Type > Planned Compute | Selection | Current status of resources with Planned Compute set

|

| Service Type > Block Storage | Required | Block Storage to be used for data node

|

| MasterNode separate installation > use | essential | Whether to install Master node separately

|

| MasterNode separate installation > Number of MasterNodes | required | Number of Master nodes |

| MasterNode separate installation > Server type | Required | Master node server type

|

| MasterNode separate installation > Planned Compute | selection | Planned Compute setting resource status

|

| MasterNode separate installation > Block Storage | required | Block Storage to be used for Master Node

|

| Kibana > Server Type | Required | Kibana node server type

|

| Kibana > Planned Compute | Selection | Current status of resources with Planned Compute set

|

| Kibana > Block Storage | Required | Block Storage to be used for Kibana nodes

|

| Cluster Port number | Required | Elasticsearch connection port number

|

| Licnese Key | Essential | Elasticsearch License Key

|

| IP Access Control | Select | Set service access policy

|

| Maintenance period | Select | Maintenance period

|

Add Node

If Search Engine cluster expansion is needed, you can add nodes with the same specifications as the data node in use.

- You can use up to 10 nodes within the cluster. Please note that additional nodes created will be charged extra.

- The cluster performance may be degraded while adding nodes.

To add a node, follow these steps.

- All Services > Data Analytics > Search Engine menu should be clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engines Resource menu should be clicked. It moves to the Search Engine Detail page.

- Click the Add Broker Node button. It moves to the Add Broker Node page.

- Required Information Input area, enter the corresponding information, and then click the Complete button.

| Division | Mandatory | Detailed Description |

|---|---|---|

| Server Name Prefix | Required | Data Node Server Name

|

| Cluster Name | Required | Cluster Name

|

| Additional Node count | Required | Number of additional Nodes to add

|

| Service Type > Server Type | Required | Data Node Server Type

|

| Service Type > Planned Compute | Selection | Current status of resources with Planned Compute set

|

| Service Type > Block Storage | Required | Block Storage settings to be used for data nodes

|

| Network | Required | The network where servers are installed

|

Search Engine cancellation

You can cancel the unused Search Engine to reduce operating costs. However, if you cancel the service, the operating service may be stopped immediately, so you should consider the impact of stopping the service sufficiently before proceeding with the cancellation work.

To cancel the Search Engine, follow the next procedure.

- All services > Data Analytics > Search Engine menu is clicked. It moves to the Service Home page of Search Engine.

- On the Service Home page, click the Search Engine menu. It moves to the Search Engine list page.

- Search Engine list page, select the resource to be canceled and click the Service Cancellation button.

- Once the cancellation is complete, please check if the resource has been cancelled on the Search Engine list page.

2.3 - API Reference

2.4 - CLI Reference

2.5 - Release Note

Search Engine

- OpenSearch 2.17.1 is newly provided.

- It provides Terraform.

- HDD, HDD_KMS disk types are also provided.

- A Search Engine service that can easily create and manage ElasticSearch Enterprise in a web environment has been released.

3 - Vertica(DBaaS)

3.1 - Overview

Service Overview

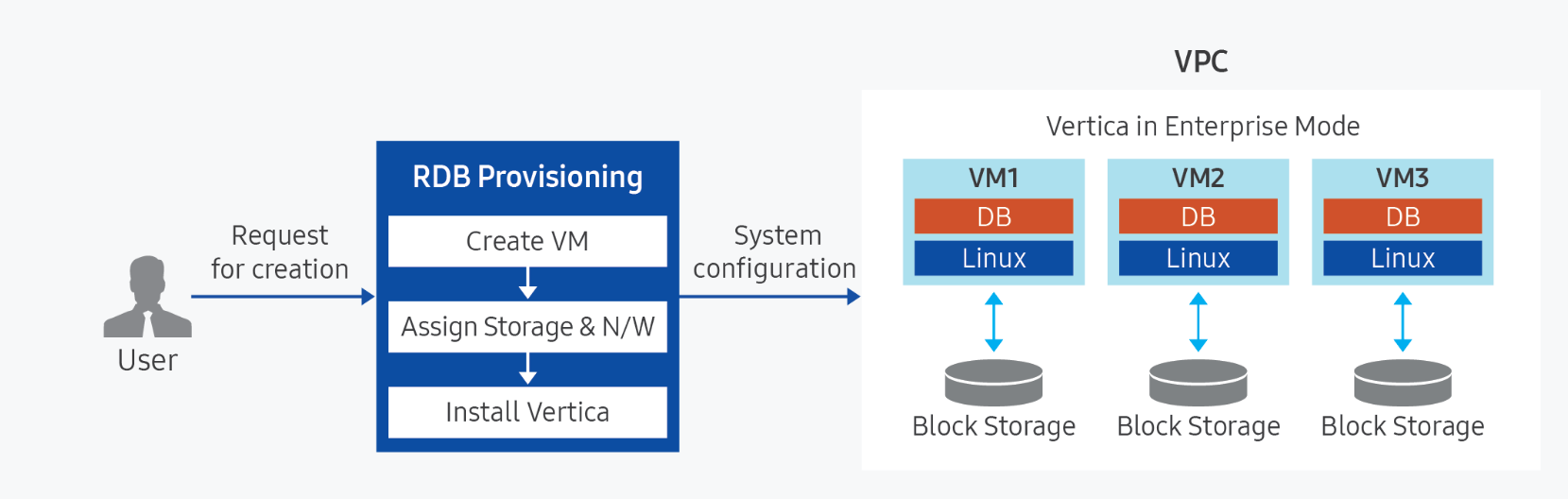

Vertica(DBaaS) is a high-availability enterprise database based on Data Warehouse for large-scale data analysis/processing. It is a data analysis platform that, through a single engine, can perform basic analyses such as queries on data coming from various sources without moving them, as well as AI analyses like machine learning. In Samsung Cloud Platform, DB management functions such as high‑availability configuration, backup/recovery, patching, parameter management, and monitoring are added to ensure stable management of single instances or critical data, enabling automation of tasks throughout the database lifecycle. Additionally, to prepare for issues with DB servers or data, it provides an automatic backup function at user‑specified times, supporting data recovery at the desired point in time.

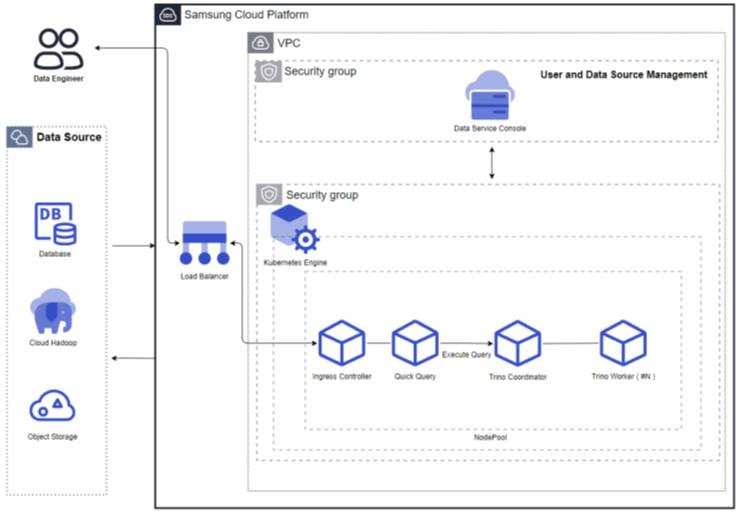

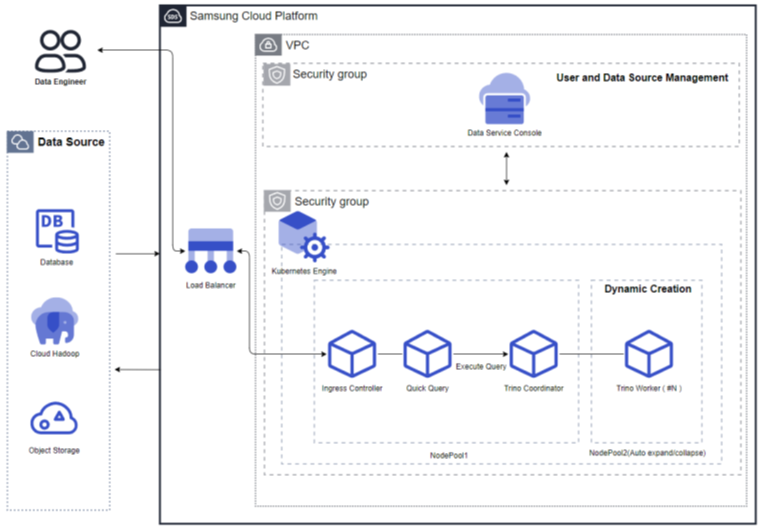

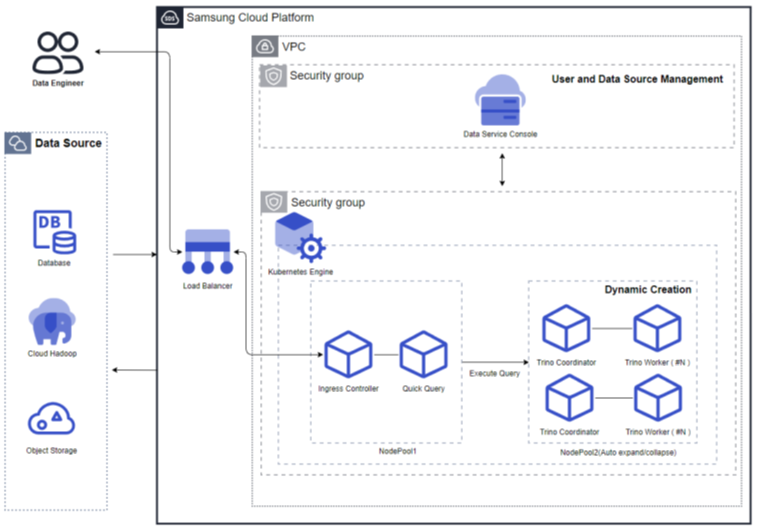

Service Architecture Diagram

Provided Features

Vertica (DBaaS) provides the following features.

- Auto Provisioning: Automatically installs the DB of the standard version of Samsung Cloud Platform based on Virtual Servers of various specifications.

- Cluster configuration: Provides its own high-availability architecture in a Masterless form.

- Operation Control Management: Provides a function to control the status of running servers. Servers can be started and stopped, and can be restarted if there is a problem with the DB or to apply configuration values.

- Backup and Recovery: Provides a data backup function based on its own backup commands. The backup retention period and backup start time can be set by the user, and additional charges apply based on backup size. It also provides a recovery function for backed-up data; when the user performs a recovery, a separate DB is created and recovery proceeds to the point selected by the user (backup save point, user-specified point). When recovering a Database, you can choose to install the Management Console for use.

- Service status query: You can view the final status of the current DB service.

- Monitoring: CPU, memory, DB performance monitoring information can be checked through the Cloud Monitoring service.

- High-performance processing of large-scale data: Guarantees stable performance in environments with massive parallel processing (MPP, Massively Parallel Processing) and SQL query Mixed Workload. Vertica processes queries through distributed processing and has a structure that allows queries to be started from any node, so there is no Single Point of Failure where queries would not be executed in case of a specific node failure.

Components

Vertica(DBaaS) provides pre-validated engine versions and various server types. Users can select and use them according to the scale of the service they want to configure.

Engine Version

The engine versions supported by Vertica(DBaaS) are as follows.

Technical support can be used until the supplier’s EoTS (End of Technical Service) date, and the EOS date when new creation is stopped is set to six months before the EoTS date.

According to the supplier’s policy, the EOS and EoTS dates may change, so please refer to the supplier’s license management policy page for details.

| Provided version | EOS date(Samsung Cloud Platform new creation stop date) | EoTS date(supplier technical support end date) |

|---|---|---|

| 24.2.0-2 | 2026-09 (planned) | 2027-04-30 |

Server Type

The server types supported by Vertica (DBaaS) are as follows.

For detailed information about the server types provided by Vertica (DBaaS), please refer to Vertica server types.

| Category | Example | Detailed Description |

|---|---|---|

| Server Type | Standard | Provided Server Types

|

| Server specifications | Db1 | Provided server specifications

|

| Server specifications | V2 | vCore count

|

| Server specifications | M4 | Memory capacity

|

Preliminary Service