1 - API Reference

API Reference Overview

The API Reference supported by AIOS is as follows.

| API Name | API | Detailed Description |

|---|

| Rerank API | POST /rerank, /v1/rerank, /v2/rerank | Applies an embedding model or cross-encoder model to predict the relevance between a single query and each item in a document list. |

| Score API | POST /score, /v1/score | Predicts the similarity between two sentences. |

| Chat Completions API | POST /v1/chat/completions | Compatible with OpenAI’s Completions API and can be used with the OpenAI Python client. |

| Completions API | POST /v1/completions | Compatible with OpenAI’s Completions API and can be used with the OpenAI Python client. |

| Embedding API | POST /v1/embeddings | Converts text into a high-dimensional vector (embedding) that can be used for various natural language processing (NLP) tasks, such as calculating text similarity, clustering, and searching. |

Table. AIOS Supported API List

Rerank API

POST /rerank, /v1/rerank, /v2/rerank

Overview

The Rerank API applies an embedding model or cross-encoder model to predict the relevance between a single query and each item in a document list.

Generally, the score of a sentence pair represents the similarity between the two sentences on a scale of 0 to 1.

- Embedding-based model: Converts the query and document into vectors and measures the similarity between the vectors (e.g., cosine similarity) to calculate the score.

- Reranker (Cross-Encoder) based model: Evaluates the query and document as a pair.

Request

Context

| Key | Type | Description | Example |

|---|

| Base URL | string | AIOS URL for API requests | application/json |

| Request Method | string | HTTP method used for API requests | POST |

| Headers | object | Header information required for requests | { “accept”: “application/json”, “Content-Type”: “application/json” } |

| Body Parameters | object | Parameters included in the request body | { “model”: “sds/bge-m3”, “query”: …, “documents”: […] } |

Table. Re-rank API - Context

Path Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Re-rank API - Path Parameters

Query Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Re-rank API - Query Parameters

Body Parameters

| Name | Name Sub | type | Required | Description | Default value | Boundary value | Example |

|---|

| model | - | string | ✅ | Model used for response generation | | | “sds/bge-reranker-v2-m3” |

| query | - | string | ✅ | User’s search query or question | | | “What is the capital of France?" |

| documents | - | array | ✅ | List of documents to be re-ranked | | Maximum model input length limit | [“The capital of France is Paris.”] |

| top_n | - | integer | ❌ | Number of top documents to return (0 returns all) | 0 | > 0 | 5 |

| truncate_prompt_tokens | - | integer | ❌ | Limits the number of input tokens | | > 0 | 100 |

Table. Re-rank API - Body Parameters

Example

curl -X 'POST' \

'https://aios.private.kr-west1.e.samsungsdscloud.com/rerank' \

-H 'accept: application/json' \

-H 'Content-Type: application/json' \

-d '{

"model": "sds/bge-reranker-v2-m3",

Here is the translation of the given text:

"query": "What is the capital of France?",

"documents": [

"The capital of France is Paris.",

"France capital city is known for the Eiffel Tower.",

"Paris is located in the north-central part of France."

],

"top_n": 2,

"truncate_prompt_tokens": 512

}

Response

200 OK

| Name | Type | Description |

|---|

| id | string | API response’s unique identifier (UUID format) |

| model | string | Name of the model that generated the result |

| usage | integer | Object containing information about the resources used in the request |

| usage.total_tokens | integer | Total number of tokens used in processing the request |

| result | string | Array containing the results of the query-related documents |

| results[].index | integer | Order number in the result array |

| results[].document | object | Object containing the content of the searched document |

| results[].document.text | string | Actual text content of the searched document |

| results[].relevance_score | float | Score indicating the relevance between the query and the document (0 ~ 1) |

Table. Re-rank API - 200 OK

Error Code

| HTTP status code | Error Code Description |

|---|

| 400 | Bad Request |

| 422 | Validation Error |

| 500 | Internal Server Error |

Table. Re-rank API - Error Code

Example

{

"id": "rerank-scp-aios-rerank",

"model": "sds/sds/bge-m3",

"usage": {

"total_tokens": 65

},

"results": [

{

"index": 0,

"document": {

"text": "The capital of France is Paris."

},

"relevance_score": 0.8291233777999878

},

{

"index": 1,

"document": {

"text": "France capital city is known for the Eiffel Tower."

},

"relevance_score": 0.6996355652809143

}

]

}

Reference

Score API

Overview

The Score API predicts the similarity between two sentences. This API uses one of two models to calculate the score:

- Reranker (Cross-Encoder) model: Takes a pair of sentences as input and directly predicts the similarity score.

- Embedding model: Generates embedding vectors for each sentence and calculates the cosine similarity to derive the score.

Request

Context

| Key | Type | Description | Example |

|---|

| Base URL | string | AIOS URL for API requests | application/json |

| Request Method | string | HTTP method used for API requests | POST |

| Headers | object | Header information required for requests | { “accept”: “application/json”, “Content-Type”: “application/json” } |

| Body Parameters | object | Parameters included in the request body | { “model”: “sds/bge-reranker-v2-m3”, “text_1”: […], “text_2”: […] } |

Table. Score API - Context

Path Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Score API - Path Parameters

Query Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Score API - Query Parameters

Body Parameters

| Name | Name Sub | type | Required | Description | Default value | Boundary value | Example |

|---|

| model | - | string | ✅ | Specify the model to use for response generation | | | “sds/bge-reranker-v2-m3” |

| encoding_format | - | string | ❌ | Score return format | “float” | | “float” |

| text_1 | - | string, array | ✅ | First text to compare | | - Model’s maximum input length limit

| “What is the capital of France?" |

| text_2 | - | string, array | ✅ | Second text to compare | | - Model’s maximum input length limit

| [“The capital of France is Paris.”, ] |

| truncate_prompt_tokens | - | integer | ❌ | Limit input token count | | > 0 | 100 |

Table. Score API - Body Parameters

Example

curl -X 'POST' \

'https://aios.private.kr-west1.e.samsungsdscloud.com/score' \

-H 'accept: application/json' \

-H 'Content-Type: application/json' \

-d '{

"model": "sds/bge-reranker-v2-m3",

"encoding_format": "float",

"text_1": [

"What is the largest planet in the solar system?",

"What is the chemical symbol for water?"

],

"text_2": [

"Jupiter is the largest planet in the solar system.",

"The chemical symbol for water is H₂O."

]

}'

Response

200 OK

| Name | Type | Description |

|---|

| id | string | Unique identifier for the response |

| object | string | Type of response object (e.g., “list” ) |

| created | integer | Creation time (Unix timestamp, seconds) |

| model | string | Name of the model used |

| data | array | List of score calculation results |

| data.index | integer | Index of the item in the data array |

| data.object | string | Type of data item (e.g., “score”) |

| data.score | number | Calculated score value, normalized to 0 ~ 1 |

| usage | object | Token usage statistics |

| usage.prompt_tokens | integer | Number of tokens used in the input prompt |

| usage.total_tokens | integer | Total number of tokens (input + output) |

| usage.completion_tokens | integer | Number of tokens used in the generated response |

| usage.prompt_tokens_details | null | Detailed information about prompt tokens |

Table. Score API - 200 OK

Error Code

| HTTP status code | Error Code Description |

|---|

| 400 | Bad Request |

| 422 | Validation Error |

| 500 | Internal Server Error |

Table. Score API - Error Code

Example

{

"id": "score-scp-aios-score",

"object": "list",

"created": 1748574112,

"model": "sds/bge-reranker-v2-m3",

"data": [

{

Here is the translated text:

"index": 0,

"object": "score",

"score": 1.0

},

{

"index": 1,

"object": "score",

"score": 1.0

}

],

“usage”: {

“prompt_tokens”: 53,

“total_tokens”: 53,

“completion_tokens”: 0,

“prompt_tokens_details”: null

}

}

## Reference

* [Score API vLLM documentation](https://docs.vllm.ai/en/latest/serving/openai_compatible_server.html#score-api_1)

# Chat Completions API

```python

POST /v1/chat/completions

Overview

Chat Completions API is compatible with OpenAI’s Completions API and can be used with the OpenAI Python client.

Request

Context

| Key | Type | Description | Example |

|---|

| Content-Type | string | | application/json |

Table. Chat Completions API - Context

Path Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Chat Completions API - Path Parameters

Query Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Chat Completions API - Query Parameters

Body Parameters

| Name | Name Sub | type | Required | Description | Default value | Boundary value | Example |

|---|

| model | - | string | ✅ | Specifies the model to use for generating responses | | | “meta-llama/Llama-3.3-70B-Instruct” |

| messages | role | string | ✅ | List of messages containing conversation history | | | [ { “role” : “user” , “content” : “message” }] |

| frequency_penalty | - | number | ❌ | Adjusts the penalty for repeating tokens | 0 | -2.0 ~ 2.0 | 0.5 |

| logit_bias | - | object | ❌ | Adjusts the probability of specific tokens (e.g., { “100”: 2.0 }) | null | Key: token ID, Value: -100 ~ 100 | { “100”: 2.0 } |

| logprobs | - | boolean | ❌ | Returns the probabilities of the top logprobs number of tokens | false | true, false | true |

| max_completion_tokens | - | integer | ❌ | Limits the maximum number of generated tokens | None | 0 ~ model maximum | 100 |

| max_tokens (Deprecated) | - | integer | ❌ | Limits the maximum number of generated tokens | None | 0 ~ model maximum | 100 |

| n | - | integer | ❌ | Specifies the number of responses to generate | 1 | | 3 |

| presence_penalty | - | number | ❌ | Adjusts the penalty for tokens already present in the text | 0 | -2.0 ~ 2.0 | 1.0 |

| seed | - | integer | ❌ | Specifies the seed value for controlling randomness | None | | |

| stop | - | string / array / null | ❌ | Stops generating when a specific string is encountered | null | | "\n" |

| stream | - | boolean | ❌ | Returns the result in streaming mode | false | true/false | true |

| stream_options | include_usage, continuous_usage_stats | object | ❌ | Controls streaming options (e.g., including usage statistics) | null | | { “include_usage”: true } |

| temperature | - | number | ❌ | Adjusts the creativity of the generated response (higher means more random) | 1 | 0.0 ~ 1.0 | 0.7 |

| tool_choice | - | string | ❌ | Specifies which tool to call- none: Does not call any tool

- auto: Model decides whether to call a tool or generate a message

- required: Model calls at least one tool

| | | |

| tools | - | array | ❌ | List of tools that the model can call- Only functions are supported as tools

- Supports up to 128 functions

| None | | |

| top_logprobs | - | integer | ❌ | Specifies the number of top logprobs tokens to return (between 0 and 20)- Each is associated with a log probability value

- logprobs must be set to true

- Shows the probability values for the top k completions

| None | 0 ~ 20 | 3 |

| top_p | - | number | ❌ | Limits the sampling probability of tokens (higher means more tokens are considered) | 1 | 0.0 ~ 1.0 | 0.9 |

Table. Chat Completions API - Body Parameters

Example

curl -X 'POST' \

'https://aios.private.kr-west1.e.samsungsdscloud.com/v1/chat/completions' \

-H 'accept: application/json' \

200 OK

| Name | Type | Description |

|---|

| id | string | Response’s unique identifier |

| object | string | Type of response object (e.g., “chat.completion”) |

| created | integer | Creation time (Unix timestamp, in seconds) |

| model | string | Name of the model used |

| choices | array | List of generated response choices |

| choices[].index | integer | Index of the choice |

| choices[].message | object | Generated message object |

| choices[].message.role | string | Role of the message author (e.g., “assistant”) |

| choices[].message.content | string | Actual content of the generated message |

| choices[].message.reasoning_content | string | Actual content of the generated reasoning message |

| choices[].message.tool_calls | array (optional) | Tool call information (may be included depending on the model/settings) |

| choices[].finish_reason | string or null | Reason why the response was terminated (e.g., “stop”, “length”, etc.) |

| choices[].stop_reason | object or null | Additional termination reason details |

| choices[].logprobs | object or null | Token-wise log probability information (may be included depending on the settings) |

| usage | object | Token usage statistics |

| usage.prompt_tokens | integer | Number of tokens used in the input prompt |

| usage.completion_tokens | integer | Number of tokens used in the generated response |

| usage.total_tokens | integer | Total number of tokens (input + output) |

Table. Chat Completions API - 200 OK

Error Code

| HTTP status code | Error Code Description |

|---|

| 400 | Bad Request |

| 422 | Validation Error |

| 500 | Internal Server Error |

Table. Chat Completions API - Error Code

Example

{

"id": "chatcmpl-scp-aios-chat-completions",

"object": "chat.completion",

"created": 1749702816,

"model": "meta-llama/Meta-Llama-3.3-70B-Instruct",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"reasoning_content": null,

"content": "The capital of Korea is Seoul.",

"tool_calls": []

},

"logprobs": null,

"finish_reason": "stop",

"stop_reason": null

}

],

"usage": {

"prompt_tokens": 54,

"total_tokens": 62,

"completion_tokens": 8,

"prompt_tokens_details": null

},

"prompt_logprobs": null

}

Reference

## Overview

Completions API is compatible with OpenAI's Completions API and can be used with the OpenAI Python client.

## Request

### Context

| Key |

Type |

Description |

Example |

| Base URL |

string |

API request URL for AIOS |

application/json |

| Request Method |

string |

HTTP method used for the API request |

POST |

| Headers |

object |

Header information required for the request |

{ “accept”: “application/json”, “Content-Type”: “application/json” } |

| Body Parameters |

object |

Parameters included in the request body |

’{“model”: “meta-llama/Llama-3.3-70B-Instruct”, “prompt” : “hello”, “stream”: “true”}’ |

Table. Completions API - Context

### Path Parameters

| Name |

type |

Required |

Description |

Default value |

Boundary value |

Example |

| None |

|

|

|

|

|

|

Table. Completions API - Path Parameters

### Query Parameters

| Name |

type |

Required |

Description |

Default value |

Boundary value |

Example |

| None |

|

|

|

|

|

|

Table. Completions API - Query Parameters

### Body Parameters

| Name |

Name Sub |

type |

Required |

Description |

Default value |

Boundary value |

Example |

| model |

- |

string |

✅ |

Model used to generate the response |

|

|

“meta-llama/Llama-3.3-70B-Instruct” |

| prompt |

- |

array, string |

✅ |

User input text |

|

|

"" |

| echo |

- |

boolean |

❌ |

Whether to include the input text in the output |

false |

true/false |

true |

| frequency_penalty |

- |

number |

❌ |

Adjust the penalty for repeating tokens |

0 |

-2.0 ~ 2.0 |

0.5 |

| logit_bias |

- |

object |

❌ |

Adjust the probability of specific tokens (e.g., { “100”: 2.0 }) |

null |

Key: token ID, Value: -100~100 |

{ “100”: 2.0 } |

| logprobs |

- |

integer |

❌ |

Return the probabilities of the top logprobs tokens |

null |

1 ~ 5 |

5 |

| max_completion_tokens |

- |

integer |

❌ |

Limit the maximum number of generated tokens |

None |

0~model maximum value |

100 |

| max_tokens (Deprecated) |

- |

integer |

❌ |

Limit the maximum number of generated tokens |

None |

0~model maximum value |

100 |

| n |

- |

integer |

❌ |

Specify the number of responses to generate |

1 |

|

3 |

| presence_penalty |

- |

number |

❌ |

Adjust the penalty for tokens already present in the text |

0 |

-2.0 ~ 2.0 |

1.0 |

| seed |

- |

integer |

❌ |

Specify a seed value for randomness control |

None |

|

|

| stop |

- |

string / array / null |

❌ |

Stop generating when a specific string is encountered |

null |

|

"\n" |

| stream |

- |

boolean |

❌ |

Whether to return the results in a streaming manner |

false |

true/false |

true |

| stream_options |

include_usage, continuous_usage_stats |

object |

❌ |

Control streaming options (e.g., include usage statistics) |

null |

|

{ “include_usage”: true } |

| temperature |

- |

number |

❌ |

Control the creativity of the generated response (higher means more random) |

1 |

0.0 ~ 1.0 |

0.7 |

| top_p |

- |

number |

❌ |

Limit the sampling probability of tokens (higher means more tokens considered) |

1 |

0.0 ~ 1.0 |

0.9 |

Table. Completions API - Body Parameters

### Example

```python

curl -X 'POST' \

'https://aios.private.kr-west1.e.samsungsdscloud.com/v1/completions' \

-H 'accept: application/json' \

-H 'Content-Type: application/json' \

-d '{

"model": "meta-llama/Meta-Llama-3.3-70B-Instruct",

"prompt": "What is the capital of Korea?",

"temperature": 0.7

}'

Response

200 OK

| Name | Type | Description |

|---|

| id | string | Unique identifier of the response |

| object | string | Type of the response object (e.g., “text_completion”) |

| created | integer | Creation time (Unix timestamp, seconds) |

| model | string | Name of the model used |

| choices | array | List of generated response choices |

| choices[].index | number | Index of the choice |

| choices[].text | string | Generated text object |

| choices[].logprobs | object | Token-wise log probability information (included based on settings) |

| choices[].finish_reason | string or null | Reason why the response was terminated (e.g., “stop”, “length” etc.) |

| choices[].stop_reason | object or null | Additional termination reason details |

| choices[].prompt_logprobs | object or null | Log probability of input prompt tokens (may be null) |

| usage | object | Token usage statistics |

| usage.prompt_tokens | number | Number of tokens used in the input prompt |

| usage.total_tokens | number | Total number of tokens (input + output) |

| usage.completion_tokens | number | Number of tokens used in the generated response |

| usage.prompt_tokens_details | object | Details of prompt token usage |

<div class="figure-caption">

Table. Completions API - 200 OK

</div>

Error Code

| HTTP status code | Error Code Description |

|---|

| 400 | Bad Request |

| 422 | Validation Error |

| 500 | Internal Server Error |

Table. Completions API - Error Code

Example

{

"id": "cmpl-scp-aios-completions",

"object": "text_completion",

"created": 1749702612,

"model": "meta-llama/Meta-Llama-3.3-70B-Instruct",

"choices": [

{

"index": 0,

"text": " \nOur capital city is Seoul. \n\nA. 1\nB. ",

"logprobs": null,

"finish_reason": "length",

"stop_reason": null,

"prompt_logprobs": null

}

],

"usage": {

"prompt_tokens": 9,

"total_tokens": 25,

"completion_tokens": 16,

"prompt_tokens_details": null

}

}

Reference

Embedding API

Overview

The Embedding API converts text into high-dimensional vectors (embeddings) that can be used for various natural language processing (NLP) tasks, such as calculating text similarity, clustering, and search.

Request

Context

| Key | Type | Description | Example |

|---|

| Base URL | string | URL for AIOS API requests | application/json |

| Request Method | string | HTTP method used for API requests | POST |

| Headers | object | Header information required for requests | { “accept”: “application/json”, “Content-Type”: “application/json” } |

| Body Parameters | object | Parameters included in the request body | { “model”: “sds/bge-m3”, “input”: “What is the capital of France?”} |

Table. Embedding API - Context

Path Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Embedding API - Path Parameters

Query Parameters

| Name | type | Required | Description | Default value | Boundary value | Example |

|---|

| None | | | | | | |

Table. Embedding API - Query Parameters

Body Parameters

| Name | Name Sub | type | Required | Description | Default value | Boundary value | Example |

|---|

| model | - | string | ✅ | Specify the model to use for generating responses | | | “sds/bge-reranker-v2-m3” |

| input | - | array<string | ✅ | User’s search query or question | | | “What is the capital of France?" |

| encoding_format | - | string | ❌ | Specify the format to return the embedding | “float” | “float”, “base64” | [0.01319122314453125,0.057220458984375, … (omitted) |

| truncate_prompt_tokens | - | integer | ❌ | Limit the number of input tokens | | > 0 | 100 |

Table. Embedding API - Body Parameters

Example

curl -X 'POST' \

'https://aios.private.kr-west1.e.samsungsdscloud.com/v1/embedding' \

-H 'accept: application/json' \

-H 'Content-Type: application/json' \

-d '{

"model": "sds/bge-m3",

"input": "What is the capital of France?",

"encoding_format": "float"

}'

Response

200 OK

| Name | Type | Description |

|---|

| id | string | Unique identifier of the response |

| object | string | Type of the response object (e.g., “list”) |

| created | number | Creation time (Unix timestamp, seconds) |

| model | string | Name of the model used |

| data | array | Array of objects containing embedding results |

| data.index | number | Index of the input text (e.g., order of input texts) |

| data.object | string | Type of data item |

| data.embedding | array | Embedding vector values of the input text (sds-bge-m3 is a 1024-dimensional float array) |

| usage | object | Token usage statistics |

| usage.prompt_tokens | number | Number of tokens used in the input prompt |

| usage.total_tokens | number | Total number of tokens (input + output) |

| usage.completion_tokens | number | Number of tokens used in the generated response |

| usage.prompt_tokens_details | object | Detailed information about prompt tokens |

Table. Embedding API - 200 OK

Error Code

| HTTP status code | Error Code Description |

|---|

| 400 | Bad Request |

| 422 | Validation Error |

| 500 | Internal Server Error |

Table. Embedding API - Error Code

Example

{

"id":"embd-scp-aios-embeddings",

"object":"list","created":1749035024,

"model":"sds/bge-m3",

"data":[

{

"index":0,

"object":"embedding",

"embedding":

[0.01319122314453125,0.057220458984375,-0.028533935546875,-0.0008697509765625,-0.01422119140625,0.033416748046875,-0.0062408447265625,-0.04364013671875,-0.004497528076171875,0.0008072853088378906,-0.0193328857421875,0.041168212890625,-0.019317626953125,-0.0188751220703125,-0.047088623046875,

-0 ....(omitted)

-0.05706787109375,-0.0147705078125]

}

],

"usage":

{

"prompt_tokens":9,

"total_tokens":9,

"completion_tokens":0,

"prompt_tokens_details":null

}

}

Reference

2 - SDK Reference

SDK Reference Overview

AIOS models are compatible with OpenAI’s API, so they are also compatible with OpenAI’s SDK.

The following is a list of OpenAI and Cohere compatible APIs supported by Samsung Cloud Platform AIOS service.

| API Name | API | Detailed Description | Supported SDK |

|---|

| Text Completion API | | Generates a natural sentence that follows the given input string. | |

| Conversation Completion API | | Generates a response that follows the conversation content. | |

| Embeddings API | | Converts text into a high-dimensional vector (embedding) that can be used for various natural language processing (NLP) tasks such as text similarity calculation, clustering, and search. | |

| Rerank API | | Applies an embedding model or a cross-encoder model to predict the relevance between a single query and each item in a document list. | |

Table. Python SDK Compatible API List

Note

- The SDK Reference guide is based on a Virtual Server environment with Python installed.

- The actual execution may differ from the example in terms of token count and message content.

OpenAI SDK

Installing the openai Package

Install the OpenAI package.

Text Completion API

The Text Completion API generates a natural sentence that follows the given input string.

Request

Note

The Text Completion API can only use strings as input values.

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model calls.

model = "<<model>>" # Enter the model ID for AIOS model calls.

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

response = client.completions.create(

model=model,

prompt="Hi"

)

Reference

The aios endpoint-url and model ID for model calls can be found in the LLM Endpoint Usage Guide on the resource details page. Refer to

Using LLM.

Response

The text field in choices contains the model’s response.

Completion(

id='cmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

CompletionChoice(

finish_reason='length',

index=0,

logprobs=None,

text=' future president of the United States, I hope you’re doing well. As a',

stop_reason=None,

prompt_logprobs=None

)

],

created=1750000000,

model='<<model>>',

object='text_completion',

stream request

stream can be used to receive the completed answer one by one, rather than receiving the entire answer at once, as the model generates tokens.

Request

Set the stream parameter value to True.

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # AIOS model call endpoint-url to be input for AIOS model call

model = "<<model>>" # AIOS model call model ID to be input for AIOS model call

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

response = client.completions.create(

model=model,

prompt="Hi",

stream=True

)

# Receive the response as the model generates tokens.

for chunk in response:

print(chunk)

Response

Each token generates an answer, and each token can be checked in the choices’s text field.

Completion(

id='cmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

CompletionChoice(

finish_reason=None,

index=0,

logprobs=None,

text='.',

stop_reason=None

)

],

created=1750000000,

model='<<model>>',

object='text_completion',

system_fingerprint=None,

usage=None

)

Completion(..., choices=[CompletionChoice(..., text=' I', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text="'m", ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' looking', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' for', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' a', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' way', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' to', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' check', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' if', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' a', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' specific', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' process', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' is', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' running', ...)], ...)

Completion(..., choices=[CompletionChoice(..., text=' on', ...)], ...)

Completion(..., choices=[], ...,

usage=CompletionUsage(

completion_tokens=16,

prompt_tokens=2,

total_tokens=18,

completion_tokens_details=None,

prompt_tokens_details=None

)

)

conversation completion API

The conversation completion API takes a list of messages in order as input and responds with a message that is suitable for the current context as the next order.

Request

Text message only, you can call as follows:

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # AIOS model call for aios endpoint-url to enter.

model = "<<model>>" # AIOS model call for model ID to enter.

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

response = client.chat.completions.create(

model=model,

messages=[

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Hi"}

]

)

Note

Model call for aios endpoint-url and model ID information is provided in the resource details page’s LLM Endpoint usage guide. Please refer to

Using LLM.

Response

You can check the model’s answer in the choices’s message.

ChatCompletion(

id='chatcmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

Choice(

finish_reason='stop',

index=0,

logprobs=None,

message=ChatCompletionMessage(

content='Hello. How can I assist you today?',

refusal=None,

role='assistant',

annotations=None,

audio=None,

function_call=None,

tool_calls=[],

reasoning_content=None

),

stop_reason=None

)

],

created=1750000000,

model='<<model>>',

object='chat.completion',

service_tier=None,

system_fingerprint=None,

usage=CompletionUsage(

completion_tokens=10,

prompt_tokens=42,

total_tokens=52,

completion_tokens_details=None,

prompt_tokens_details=None

),

prompt_logprobs=None

)

Stream Request

Using stream, you can wait for the model to generate all answers and receive the response at once, or receive and process the response for each token generated by the model.

Request

Enter True as the value of the stream parameter.

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # AIOS model call for aios endpoint-url to enter.

model = "<<model>>" # AIOS model call for model ID to enter.

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

response = client.chat.completions.create(

model="meta-llama/Llama-3.3-70B-Instruct",

messages=[

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Hi"}

],

stream=True

)

# You can receive a response each time the model generates a token.

for chunk in response:

print(chunk)

Response

Each token generates a response, and each token can be checked in the choices field of the delta field.

ChatCompletionChunk(

id='chatcmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

Choice(

delta=ChoiceDelta(

content='',

function_call=None,

refusal=None,

role='assistant',

tool_calls=None

),

finish_reason=None,

index=0,

logprobs=None

)

],

created=1750000000,

model='<<model>>',

object='chat.completion.chunk',

service_tier=None,

system_fingerprint=None,

usage=None

)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content='It', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content="'s", ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' nice', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' to', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content='meet', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' you', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content='.', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' Is', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' there', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' something', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' I', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' can', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' help', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' you', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' with', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' or', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' would', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' you', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' like', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' to', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content=' chat', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content='?', ...), ...)], ...)

ChatCompletionChunk(..., choices=[Choice(delta=ChoiceDelta(content='', ...), ...)], ...)

ChatCompletionChunk(..., choices=[], ...,

usage=CompletionUsage(

completion_tokens=23,

prompt_tokens=42,

total_tokens=65,

completion_tokens_details=None,

prompt_tokens_details=None

)

)

Tool calling refers to the interface of external tools defined outside the model, allowing the model to generate answers that can perform suitable tools in the current context.

Using tool call, you can define metadata for functions that the model can execute and utilize them to generate answers.

Request

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # AIOS model call endpoint URL

model = "<<model>>" # AIOS model ID

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

# Function to get weather information

tools = [{

"type": "function",

"function": {

"name": "get_weather",

"description": "Get current temperature for provided coordinates in celsius.",

"parameters": {

"type": "object",

"properties": {

"latitude": {"type": "number"},

"longitude": {"type": "number"}

},

"required": ["latitude", "longitude"],

"additionalProperties": False

},

"strict": True

}

}]

messages = [{“role”: “user”, “content”: “What is the weather like in Paris today?”}]

response = client.chat.completions.create(

model=model,

messages=messages,

tools=tools # Inform the model of the metadata of the tools that can be used.

)

Response

choices’s message.tool_calls can be used to check how the model determines the execution method of the tool.

In the following example, you can see that the tool_calls’s function uses the get_weather function and checks what arguments should be inserted.

ChatCompletion(

id='chatcmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

Choice(

finish_reason='tool_calls',

index=0,

logprobs=None,

message=ChatCompletionMessage(

content=None,

refusal=None,

role='assistant',

annotations=None,

audio=None,

function_call=None,

tool_calls=[

ChatCompletionMessageToolCall(

id='chatcmpl-tool-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

function=Function(

arguments='{"latitude": 48.8566, "longitude": 2.3522}',

name='get_weather'

),

type='function'

)

],

reasoning_content=None

),

stop_reason=None

)

],

created=1750000000,

model='<<model>>',

object='chat.completion',

service_tier=None,

system_fingerprint=None,

usage=CompletionUsage(

completion_tokens=19,

prompt_tokens=194,

total_tokens=213,

completion_tokens_details=None,

prompt_tokens_details=None

),

prompt_logprobs=None

)

After adding the result value of the function as a tool message and generating the model’s response again, you can create an answer using the result value.

Request

Based on tool_calls’s function.arguments in the response data, you can actually call the function.

import json

# example function, always responds with 14 degrees.

def get_weather(latitude, longitude):

return "14℃"

tool_call = response.choices[0].message.tool_calls[0]

args = json.loads(tool_call.function.arguments)

result = get_weather(args["latitude"], args["longitude"]) # "14℃"

After adding the result value of the function as a tool message to the conversation context and calling the model again,

the model can create an appropriate answer using the result value of the function.

# Add the model's tool call message to messages

messages.append(response.choices[0].message)

# Add the result of the actual function call to messages

messages.append({

"role": "tool",

"tool_call_id": tool_call.id,

"content": str(result)

})

response_2 = client.chat.completions.create(

model=model,

messages=messages,

# tools=tools

Response

ChatCompletion(

id='chatcmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

Choice(

finish_reason='stop',

index=0,

logprobs=None,

message=ChatCompletionMessage(

content='The current weather in Paris is 14℃.',

refusal=None,

role='assistant',

annotations=None,

audio=None,

function_call=None,

tool_calls=[],

reasoning_content=None

),

stop_reason=None

)

],

created=1750000000,

model='<<model>>',

object='chat.completion',

service_tier=None,

system_fingerprint=None,

usage=CompletionUsage(

completion_tokens=11,

prompt_tokens=74,

total_tokens=85,

completion_tokens_details=None,

prompt_tokens_details=None

),

prompt_logprobs=None

)

Reasoning

Request

Reasoning is supported in models that provide a reasoning value, which can be checked as follows:

Note

Models that support reasoning may take longer to generate answers because they produce many tokens for reasoning.

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model calls.

model = "<<model>>" # Enter the model ID for AIOS model calls.

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

response = client.chat.completions.create(

model=model,

messages=[

{"role": "user", "content": "9.11 and 9.8, which is greater?"}

],

)

Response

The choices of the message field can be checked to see the content and also the reasoning_content, which provides the reasoning tokens.

ChatCompletion(

id='chatcmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

Choice(

finish_reason='stop',

index=0,

logprobs=None,

message=ChatCompletionMessage(

content='''

To determine whether 9.11 or 9.8 is larger, we compare the decimal parts since both numbers have the same whole number part (9).

1. Convert both numbers to the same decimal places for easier comparison:

- 9.11 remains as is.

- 9.8 can be written as 9.80.

2. Compare the tenths place:

- The tenths place of 9.11 is 1.

- The tenths place of 9.80 is 8.

3. Since 8 (from 9.80) is greater than 1 (from 9.11), 9.80 (or 9.8) is larger.

4. Verification by subtraction:

- Subtracting 9.11 from 9.8 gives \(9.80 - 9.11 = 0.69\), which is positive, confirming 9.8 is larger.

Thus, the larger number is \(\boxed{9.8}\).

''',

refusal=None,

role='assistant',

annotations=None,

audio=None,

function_call=None,

tool_calls=[],

reasoning_content="""Okay, so I need to figure out whether 9.11 is bigger than 9.8 or vice versa.

Hmm, let me think. Both numbers start with 9, so the whole number part is the same.

Here is the translation of the given text:

That means the difference must be in the decimal parts.

First, I remember that when comparing decimals, you look at the digits one by one after the decimal point.

The first digit after the decimal is the tenths place, then hundredths, and so on.

Since both numbers have 9 in the units place, I can focus on the decimal parts: 0.11 versus 0.08.

Wait, let me write them out to be clear. 9.11 is 9 and 11 hundredths, and 9.8 is 9 and 8 tenths.

Wait a second, maybe I should convert them to the same decimal places to make it easier.

So, 9.8 is the same as 9.80, right? Because adding a zero at the end of a decimal doesn't change its value.

That way, both numbers have two decimal places. Now comparing 9.11 and 9.80.

Looking at the tenths place first: 9.11 has 1 tenth, and 9.80 has 8 tenths.

Since 8 is more than 1, that means 9.80 is larger than 9.11. Wait, but hold on, the original numbers were 9.11 and 9.8. Did I make a mistake here?

Let me double-check. 9.8 is indeed equal to 9.80.

So when comparing tenths, 8 tenths (from 9.80) is definitely more than 1 tenth (from 9.11). So that would mean 9.8 is bigger.

But wait, maybe I confused the decimal places. Let me think again.

The first number after the decimal is tenths, so in 9.11, the first digit is 1 (tenths place) and the second is 1 (hundredths).

In 9.8, the first digit is 8 (tenths place), and there's no hundredths, which is equivalent to 0.

So comparing the tenths place first: 1 vs 8. Since 8 is greater than 1, 9.8 is larger.

But why did I initially think maybe 9.11 was bigger? Maybe because 11 is a two-digit number?

But no, in decimals, each position is a different place value.

The tenths place is more significant than the hundredths. So even though 11 hundredths is 0.11, that's still less than 0.8 (which is 8 tenths).

Another way to think about it: Convert both to fractions.

9.11 is 9 + 11/100, and 9.8 is 9 + 8/10.

Converting 8/10 to hundredths, that's 80/100.

So 9.8 is 9 + 80/100, whereas 9.11 is 9 + 11/100. Comparing the fractions: 80/100 is more than 11/100, so 9.8 is bigger.

Alternatively, maybe subtract them to see the difference. 9.8 minus 9.11 is 0.69, which is positive, so 9.8 is larger.

Wait, let me do the subtraction step by step.

9.8 minus 9.11:

First, write them aligned by decimal:

9.80

-9.11

-------

0.69

Yes, so the result is positive, so 9.8 is bigger.

Alternatively, if I had to do it without converting, maybe I can think in terms of money.

If these were dollars, 9.11 is $9.11 and 9.8 is $9.80.

Clearly, $9.80 is more than $9.11.

Hmm, so all these methods point to 9.8 being larger.

Maybe my initial confusion was because I saw 11 as a two, but

...omitted...

**Final Answer**

The number 9.8 is larger than 9.11. This is because when comparing the decimal parts, 0.8 (from 9.8) is greater than 0.11 (from 9.11).

Specifically, 9.8 can be written as 9.80, and comparing the tenths place (8 vs. 1) shows that 9.8 is larger.

The difference between them is 0.69, confirming that 9.8 is indeed the larger number.

**Final Answer**

\\boxed{9.8}"""

),

stop_reason=None

)

],

created=1750000000,

model=’«model»’,

object=‘chat.completion’,

service_tier=None,

system_fingerprint=None,

usage=CompletionUsage(

completion_tokens=4167,

prompt_tokens=27,

total_tokens=4194,

completion_tokens_details=None,

prompt_tokens_details=None

),

prompt_logprobs=None,

kv_transfer_params=None

)

### image to text

**vision**을 지원하는 모델의 경우, 다음과 같이 이미지를 입력할 수 있습니다.

<div class="scp-textbox scp-textbox-type-error">

<div class="scp-textbox-title">Note</div>

<div class="scp-textbox-contents">

<p>For models that support <strong>vision</strong>, there are limitations on the size and number of input images.</p>

<p>Please refer to <a href="/en/userguide/ai_ml/aios/overview/#provided-models">Provided Models</a> for more information on image input limitations.</p>

</div>

</div>

#### Request

You can input an image with **MIME type** and **base64**.

```python

import base64

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # AIOS endpoint-url for model calls

model = "<<model>>" # Model ID for AIOS model calls

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

image_path = "image/path.jpg"

def encode_image(image_path: str):

with open(image_path, "rb") as image_file:

return base64.b64encode(image_file.read()).decode("utf-8")

base64_image = encode_image(image_path)

response = client.chat.completions.create(

model=model,

messages=[

{

“role”: “user”,

“content”: [

{“type”: “text”, “text”: “what’s in this image?”},

{

“type”: “image_url”,

“image_url”: {

“url”: f"data:image/jpeg;base64,{base64_image}",

},

},

]

},

],

)

Response

The following is an analysis of the image to generate text.

ChatCompletion(

id='chatcmpl-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

choices=[

Choice(

finish_reason='stop',

index=0,

logprobs=None,

message=ChatCompletionMessage(

content="""Here's what's in the image:

* **A golden retriever puppy:** The main subject is a light-colored golden retriever puppy lying on green grass.

* **A bone:** The puppy is holding a large bone in its paws and appears to be enjoying chewing on it.

* **Grass:** The puppy is lying on a well-maintained lawn.

* **Vegetation:** Behind the puppy, there are some shrubs and other greenery.

* **Outdoor setting:** The scene is outdoors, likely a backyard.""",

refusal=None,

role='assistant',

annotations=None,

audio=None,

function_call=None,

tool_calls=[],

reasoning_content=None

),

stop_reason=106

)

],

created=1750000000,

model='<<model>>',

object='chat.completion',

service_tier=None,

system_fingerprint=None,

usage=CompletionUsage(

completion_tokens=114,

prompt_tokens=276,

total_tokens=390,

completion_tokens_details=None,

prompt_tokens_details=None

),

prompt_logprobs=None,

kv_transfer_params=None

)

Embeddings API

Embeddings converts input text into a high-dimensional vector of a fixed dimension. The generated vector can be used for various natural language processing tasks such as text similarity, clustering, and search.

Request

from openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # AIOS endpoint-url for model calls

model = "<<model>>" # Model ID for AIOS model calls

client = OpenAI(base_url=urljoin(aios_base_url, "v1"), api_key="EMPTY_KEY")

response = client.embeddings.create(

input="What is the capital of France?",

model=model

)

Note

The aios endpoint-url and model ID for model calls can be found in the LLM Endpoint Usage Guide on the resource details page. Refer to

Using LLM.

Response

data receives the converted value in vector form as a response.

CreateEmbeddingResponse(

data=[

Embedding(

embedding=[

0.01319122314453125,

0.057220458984375,

-0.028533935546875,

-0.0008697509765625,

-0.01422119140625,

...omitted...

],

index=0,

object='embedding'

)

],

model='<<model>>',

object='list',

usage=Usage(

prompt_tokens=9,

total_tokens=9,

completion_tokens=0,

prompt_tokens_details=None

),

id='embd-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

created=1750000000

)

Cohere SDK

The Rerank API is compatible with the Cohere SDK.

Installing the Cohere Package

The Cohere SDK can be used by installing the Cohere package.

Rerank API

Rerank calculates the relevance between the given query and documents, and ranks them.

It can help improve the performance of RAG (Retrieval-Augmented Generation) structure applications by adjusting relevant documents to the front.

Request

import cohere

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model calls.

model = "<<model>>" # Enter the model ID for AIOS model calls.

client = cohere.ClientV2("EMPTY_KEY", base_url=aios_base_url)

docs = [

"The capital of France is Paris.",

"France capital city is known for the Eiffel Tower.",

"Paris is located in the north-central part of France."

]

response = client.rerank(

model=model,

query="What is the capital of France?",

documents=docs,

top_n=3,

)

Note

The aios endpoint-url and model ID information for model calls are provided in the LLM Endpoint Usage Guide on the resource details page. Refer to

Using LLM.

Response

In results, you can check the documents sorted in order of relevance to the query.

V2RerankResponse(

id='rerank-xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx',

results=[

V2RerankResponseResultsItem(

document=V2RerankResponseResultsItemDocument(

text='The capital of France is Paris.'

),

index=0,

relevance_score=1.0

),

V2RerankResponseResultsItem(

Here is the translated text:

document=V2RerankResponseResultsItemDocument(

text='France capital city is known for the Eiffel Tower.'

),

index=1,

relevance_score=1.0

),

V2RerankResponseResultsItem(

document=V2RerankResponseResultsItemDocument(

text='Paris is located in the north-central part of France.'

),

index=2,

relevance_score=0.982421875

)

],

meta=None,

model=’«model»’,

usage={

’total_tokens’: 62

}

)

Langchain SDK

Langchain’s SDK is also composed of OpenAI and Cohere SDKs, so you can use the Langchain SDK.

langchain package installation

The Langchain SDK can be used with the AIOS model after installing the langchain package.

pip install langchain langchain-openai langchain-cohere langchain-together

The langchain-openai package can be used to utilize the text completion API and conversation completion API.

langchain_openai.OpenAI

When the text completion model (langchain_openai.OpenAI) is invoked, the result value is generated as text.

Request

from langchain_openai import OpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model calls.

model = "<<model>>" # Enter the model ID for AIOS model calls.

llm = OpenAI(

base_url=urljoin(aios_base_url, "v1"),

api_key="EMPTY_KEY",

model=model

)

llm.invoke("Can you introduce yourself in 5 words?")

Response

"""Hi, I'm a fun artist!

...omitted..."""

Note

The aios endpoint-url and model ID information for model calls are provided in the LLM Endpoint Usage Guide on the resource details page. Refer to

Using LLM.

langchain_openai.ChatOpenAI

When the conversation completion model (langchain_openai.ChatOpenAI) is invoked, the result value is generated as an AIMessage or Message object.

Request

from langchain_openai import ChatOpenAI

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model calls.

model = "<<model>>" # Enter the model ID for AIOS model calls.

chat_llm = ChatOpenAI(

base_url=urljoin(aios_base_url, "v1"),

api_key="EMPTY_KEY",

model=model

)

chat_completion = chat_llm.invoke("Can you introduce yourself in 5 words?")

chat_completion.pretty_print()

Note

Information for the aios endpoint-url and model ID for model invocation can be found in the LLM Endpoint usage guide on the resource details page. Please refer to

Using LLM.

Response

================================== Ai Message ==================================

I am an AI assistant.

embeddings

Embeddings models such as langchain-together, langchain-fireworks can be used.

Request

from langchain_together import TogetherEmbeddings

from urllib.parse import urljoin

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model invocation.

model = "<<model>>" # Enter the model ID for AIOS model invocation.

embedding = TogetherEmbeddings(

base_url=urljoin(aios_base_url, "v1"),

api_key="EMPTY_KEY",

model=model

)

embedding.embed_query("What is the capital of France?")

Note

Information for the aios endpoint-url and model ID for model invocation can be found in the LLM Endpoint usage guide on the resource details page. Please refer to

Using LLM.

Response

[

0.01319122314453125,

0.057220458984375,

-0.028533935546875,

-0.0008697509765625,

-0.01422119140625,

...omitted...

]

rerank

Rerank models can utilize langchain-cohere’s CohereRerank.

Request

from langchain_cohere.rerank import CohereRerank

aios_base_url = "<<aios endpoint-url>>" # Enter the aios endpoint-url for AIOS model invocation.

model = "<<model>>" # Enter the model ID for AIOS model invocation.

rerank = CohereRerank(

base_url=aios_base_url,

cohere_api_key="EMPTY_KEY",

model=model

)

docs = [

"The capital of France is Paris.",

"France capital city is known for the Eiffel Tower.",

"Paris is located in the north-central part of France."

]

rerank.rerank(

documents=docs,

query="What is the capital of France?",

top_n=3

)

Note

Information for the aios endpoint-url and model ID for model invocation can be found in the LLM Endpoint usage guide on the resource details page. Please refer to

Using LLM.

Response

[

{'index': 0, 'relevance_score': 1.0},

{'index': 1, 'relevance_score': 1.0},

{'index': 2, 'relevance_score': 0.982421875}

]

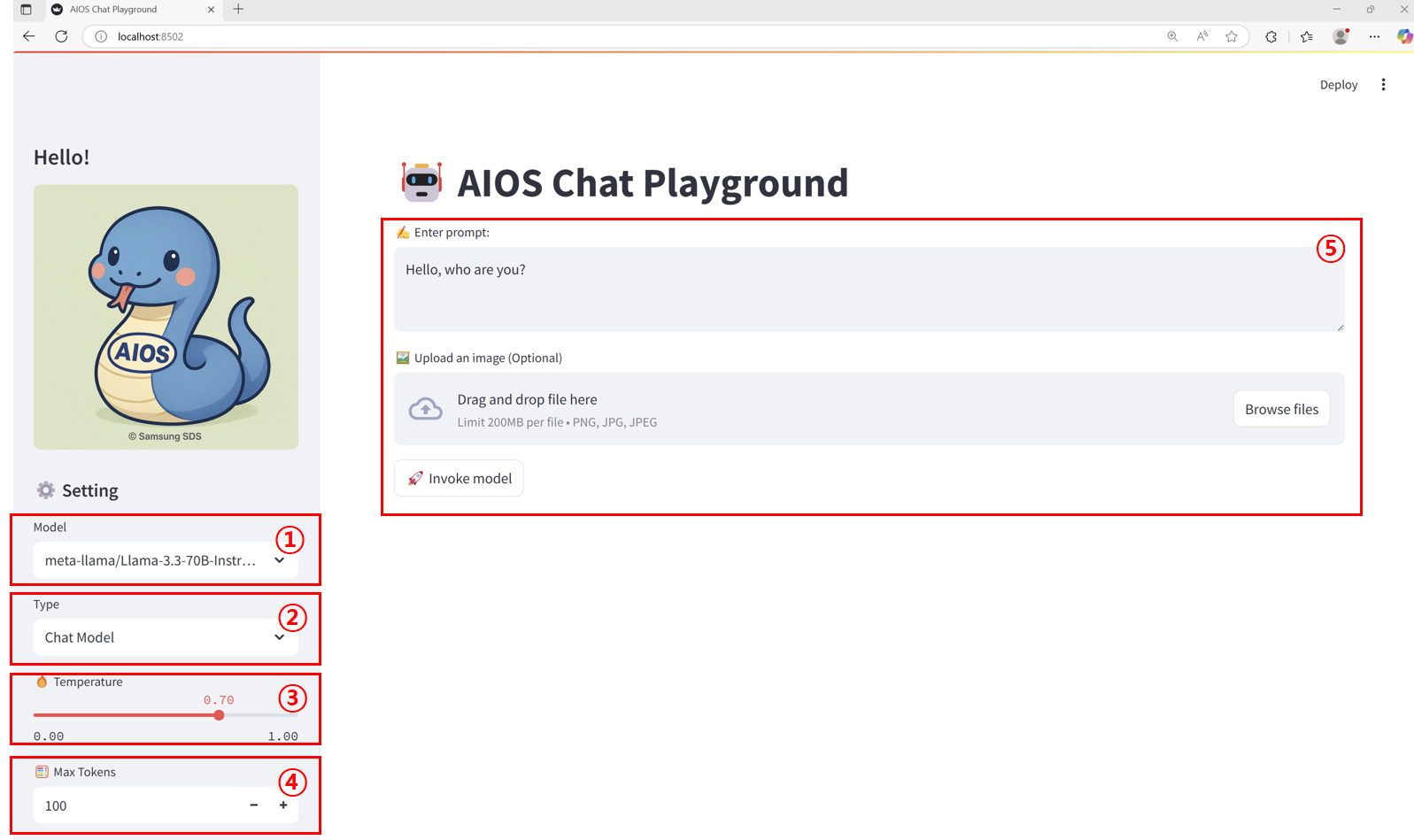

3.1 - Chat Playground

Goal

This tutorial introduces how to create and utilize a web-based Playground to easily test the APIs of various AI models provided by AIOS using Streamlit in an SCP for Enterprise environment.

Environment

To proceed with this tutorial, the following environment must be prepared:

System Environment

Required installation packages

Code Block. Install streamlit package Note

Streamlit

Python-based open-source web application framework, it is a very suitable tool for visually expressing and sharing data science, machine learning, and data analysis results. Without complex web development knowledge, you can quickly create a web interface by writing just a few lines of code.

Implementation

Pre-check

The application checks if the model call is normal with curl in the environment where it is running. Here, AIOS_LLM_Private_Endpoint refers to the LLM usage guide please refer to it.

- Example: {AIOS LLM Private Endpoint}/{API}

curl -H "Content-Type: application/json" \

-d '{"model": "meta-llama/Llama-3.3-70B-Instruct"

, "prompt" : "Hello, I am jihye, who are you"

, "temperature": 0

, "max_tokens": 100

, "stream": false}' -L AIOS_LLM_Private_Endpoint

curl -H "Content-Type: application/json" \

-d '{"model": "meta-llama/Llama-3.3-70B-Instruct"

, "prompt" : "Hello, I am jihye, who are you"

, "temperature": 0

, "max_tokens": 100

, "stream": false}' -L AIOS_LLM_Private_Endpoint

choices’s text field contains the model’s answer, which can be confirmed.

{"id":"cmpl-4ac698a99c014d758300a3ec5583d73b","object":"text_completion","created":1750140201,"model":"meta-llama/Llama-3.3-70B-Instruct","choices":[{"index":0,"text":"?\nI am a student who is studying English.\nI am interested in learning about different cultures and making friends from around the world.\nI like to watch movies, listen to music, and read books in my free time.\nI am looking forward to chatting with you and learning more about your culture and way of life.\nNice to meet you, jihye! I'm happy to chat with you and learn more about culture. What kind of movies, music, and books do you enjoy? Do","logprobs":null,"finish_reason":"length","stop_reason":null,"prompt_logprobs":null}],"usage":{"prompt_tokens":11,"total_tokens":111,"completion_tokens":100}}

Project Structure

chat-playground

├── app.py # streamlit main web app file

├── endpoints.json # AIOS model's call type definition

├── img

│ └── aios.png

└── models.json # AIOS model list

Chat Playground code

Reference

- models.json, endpoints.json files must exist and be configured in the appropriate format, please refer to the code below.

- 코드 내 BASE_URL 은 LLM 이용 가이드를 참고하여 AIOS LLM Private Endpoint 주소로 수정해야 합니다 should be translated to: - The BASE_URL in the code must be modified to the AIOS LLM Private Endpoint address, referring to the LLM usage guide.

- This Playground is designed with a one-time request-based structure, so users can provide input values, press a button, send a request once, and check the result in this way, which allows for quick testing and response verification without complex session management.

- The parameters of Model, Type, Temperature, Max Tokens configured in the sidebar are an interface configured through st.sidebar, and can be freely extended or modified as needed.

- st.file_uploader() uploaded images (files) exist as temporary BytesIO objects on the server memory and are not automatically saved to disk.

app.py

streamlit main web app file. here, the BASE_URL, AIOS_LLM_Private_Endpoint, please refer to the LLM usage guide

import streamlit as st

import base64

import json

import requests

from urllib.parse import urljoin

BASE_URL = "AIOS_LLM_Private_Endpoint"

# ===== Setting =====

st.set_page_config(page_title="AIOS Chat Playground", layout="wide")

st.title("🤖 AIOS Chat Playground")

# ===== Common Functions =====

def load_models():

with open("models.json", "r") as f:

return json.load(f)

def load_endpoints():

with open("endpoints.json", "r") as f:

return json.load(f)

models = load_models()

endpoints_config = load_endpoints()

# ===== Sidebar Settings =====

st.sidebar.title('Hello!')

st.sidebar.image("img/aios.png")

st.sidebar.header("⚙️ Setting")

model = st.sidebar.selectbox("Model", models)

endpoint_labels = [ep["label"] for ep in endpoints_config]

endpoint_label = st.sidebar.selectbox("Type", endpoint_labels)

selected_endpoint = next(ep for ep in endpoints_config if ep["label"] == endpoint_label)

temperature = st.sidebar.slider("🔥 Temperature", 0.0, 1.0, 0.7)

max_tokens = st.sidebar.number_input("🧮 Max Tokens", min_value=1, max_value=5000, value=100)

base_url = BASE_URL

path = selected_endpoint["path"]

endpoint_type = selected_endpoint["type"]

api_style = selected_endpoint.get("style", "openai") # openai or cohere

# ===== Input UI =====

prompt = ""

docs = []

image_base64 = None

if endpoint_type == "image":

prompt = st.text_area("✍️ Enter your question:", "Explain this image.")

uploaded_image = st.file_uploader("🖼️ Upload an image", type=["png", "jpg", "jpeg"])

if uploaded_image:

st.image(uploaded_image, caption="Uploaded image", use_container_width=300)

image_bytes = uploaded_image.read()

image_base64 = base64.b64encode(image_bytes).decode("utf-8")

elif endpoint_type == "rerank":

prompt = st.text_area("✍️ Enter your query:", "What is the capital of France?")

raw_docs = st.text_area("📄 Documents (one per line)", "The capital of France is Paris.\nFrance capital city is known for the Eiffel Tower.\nParis is located in the north-central part of France.")

docs = raw_docs.strip().splitlines()

elif endpoint_type == "reasoning":

prompt = st.text_area("✍️ Enter prompt:", "9.11 and 9.8, which is greater?")

elif endpoint_type == "embedding":

prompt = st.text_area("✍️ Enter prompt:", "What is the capital of France?")

else:

prompt = st.text_area("✍️ Enter prompt:", "Hello, who are you?")

uploaded_image = st.file_uploader("🖼️ Upload an image (Optional)", type=["png", "jpg", "jpeg"])

if uploaded_image:

image_bytes = uploaded_image.read()

image_base64 = base64.b64encode(image_bytes).decode("utf-8")

# ===== Call Button =====

if st.button("🚀 Invoke model"):

headers = {

"Content-Type": "application/json",

"Authorization": "Bearer EMPTY_KEY"

}

try:

if endpoint_type == "chat":

url = urljoin(base_url, "v1/chat/completions")

payload = {

"model": model,

"messages": [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": prompt}

],

"temperature": temperature,

"max_tokens": max_tokens

}

elif endpoint_type == "completion":

url = urljoin(base_url, "v1/completions")

payload = {

"model": model,

"prompt": prompt,

"temperature": temperature,

"max_tokens": max_tokens

}

elif endpoint_type == "embedding":

url = urljoin(base_url, "v1/embeddings")

payload = {

"model": model,

"input": prompt

}

elif endpoint_type == "reasoning":

url = urljoin(BASE_URL, "v1/chat/completions")

payload = {

"model": model,

"messages": [

{"role": "user", "content": prompt}

],

"temperature": temperature,

"max_tokens": max_tokens

}

elif endpoint_type == "image":

url = urljoin(base_url, "v1/chat/completions")

if not image_base64:

st.warning("🖼️ Upload an image")

st.stop()

payload = {

"model": model,

"messages": [

{

"role": "user",

"content": [

{"type": "text", "text": prompt},

{"type": "image_url", "image_url": {"url": f"data:image/jpeg;base64,{image_base64}"}}

]

}

]

}

elif endpoint_type == "rerank":

url = urljoin(base_url, "v2/rerank")

payload = {

"model": model,

"query": prompt,

"documents": docs,

"top_n": len(docs)

}

else:

st.error("❌ Unknown endpoint type")

st.stop()

st.expander("📤 Request payload").code(json.dumps(payload, indent=2), language="json")

response = requests.post(url, headers=headers, json=payload)

response.raise_for_status()

res = response.json()

# ===== Response Parsing =====

if endpoint_type == "chat" or endpoint_type == "image":

output = res["choices"][0]["message"]["content"]

elif endpoint_type == "completion":

output = res["choices"][0]["text"]

elif endpoint_type == "embedding":

vec = res["data"][0]["embedding"]

output = f"🔢 Vector dimensions: {len(vec)}"

st.expander("📐 Vector preview").code(vec[:20])

elif endpoint_type == "rerank":

results = res["results"]

output = "\n\n".join(

[f"{i+1}. The document text (score: {r['relevance_score']:.3f})" for i, r in enumerate(results)]

)

elif endpoint_type == "reasoning":

message = res.get("choices", [{}])[0].get("message", {})

reasoning = message.get("reasoning_content", "❌ No reasoning_content")

content = message.get("content", "❌ No content")

output = f"""📘 <b>response:</b><br>{content}<br><br>🧠 <b>Reasoning:</b><br>{reasoning}"""

st.success("✅ Model response:")

st.markdown(f"<div style='padding:1rem;background:#f0f0f0;border-radius:8px'>{output}</div>", unsafe_allow_html=True)

st.expander("📦 View full response").json(res)

except requests.RequestException as e:

st.error("❌ Request failed")

st.code(str(e))

import streamlit as st

import base64

import json

import requests

from urllib.parse import urljoin

BASE_URL = "AIOS_LLM_Private_Endpoint"

# ===== Setting =====

st.set_page_config(page_title="AIOS Chat Playground", layout="wide")

st.title("🤖 AIOS Chat Playground")

# ===== Common Functions =====

def load_models():

with open("models.json", "r") as f:

return json.load(f)

def load_endpoints():

with open("endpoints.json", "r") as f:

return json.load(f)

models = load_models()

endpoints_config = load_endpoints()

# ===== Sidebar Settings =====

st.sidebar.title('Hello!')

st.sidebar.image("img/aios.png")

st.sidebar.header("⚙️ Setting")

model = st.sidebar.selectbox("Model", models)

endpoint_labels = [ep["label"] for ep in endpoints_config]

endpoint_label = st.sidebar.selectbox("Type", endpoint_labels)

selected_endpoint = next(ep for ep in endpoints_config if ep["label"] == endpoint_label)

temperature = st.sidebar.slider("🔥 Temperature", 0.0, 1.0, 0.7)

max_tokens = st.sidebar.number_input("🧮 Max Tokens", min_value=1, max_value=5000, value=100)

base_url = BASE_URL

path = selected_endpoint["path"]

endpoint_type = selected_endpoint["type"]

api_style = selected_endpoint.get("style", "openai") # openai or cohere

# ===== Input UI =====

prompt = ""

docs = []

image_base64 = None

if endpoint_type == "image":

prompt = st.text_area("✍️ Enter your question:", "Explain this image.")

uploaded_image = st.file_uploader("🖼️ Upload an image", type=["png", "jpg", "jpeg"])

if uploaded_image:

st.image(uploaded_image, caption="Uploaded image", use_container_width=300)

image_bytes = uploaded_image.read()

image_base64 = base64.b64encode(image_bytes).decode("utf-8")

elif endpoint_type == "rerank":

prompt = st.text_area("✍️ Enter your query:", "What is the capital of France?")

raw_docs = st.text_area("📄 Documents (one per line)", "The capital of France is Paris.\nFrance capital city is known for the Eiffel Tower.\nParis is located in the north-central part of France.")

docs = raw_docs.strip().splitlines()

elif endpoint_type == "reasoning":

prompt = st.text_area("✍️ Enter prompt:", "9.11 and 9.8, which is greater?")

elif endpoint_type == "embedding":

prompt = st.text_area("✍️ Enter prompt:", "What is the capital of France?")

else:

prompt = st.text_area("✍️ Enter prompt:", "Hello, who are you?")

uploaded_image = st.file_uploader("🖼️ Upload an image (Optional)", type=["png", "jpg", "jpeg"])

if uploaded_image:

image_bytes = uploaded_image.read()

image_base64 = base64.b64encode(image_bytes).decode("utf-8")

# ===== Call Button =====

if st.button("🚀 Invoke model"):

headers = {

"Content-Type": "application/json",

"Authorization": "Bearer EMPTY_KEY"

}

try:

if endpoint_type == "chat":

url = urljoin(base_url, "v1/chat/completions")

payload = {

"model": model,

"messages": [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": prompt}

],

"temperature": temperature,

"max_tokens": max_tokens

}

elif endpoint_type == "completion":

url = urljoin(base_url, "v1/completions")

payload = {

"model": model,

"prompt": prompt,

"temperature": temperature,

"max_tokens": max_tokens

}

elif endpoint_type == "embedding":

url = urljoin(base_url, "v1/embeddings")

payload = {

"model": model,

"input": prompt

}

elif endpoint_type == "reasoning":

url = urljoin(BASE_URL, "v1/chat/completions")

payload = {

"model": model,

"messages": [

{"role": "user", "content": prompt}

],

"temperature": temperature,

"max_tokens": max_tokens

}

elif endpoint_type == "image":

url = urljoin(base_url, "v1/chat/completions")

if not image_base64:

st.warning("🖼️ Upload an image")

st.stop()

payload = {

"model": model,

"messages": [

{

"role": "user",

"content": [

{"type": "text", "text": prompt},

{"type": "image_url", "image_url": {"url": f"data:image/jpeg;base64,{image_base64}"}}

]

}

]

}

elif endpoint_type == "rerank":

url = urljoin(base_url, "v2/rerank")

payload = {

"model": model,

"query": prompt,

"documents": docs,

"top_n": len(docs)

}

else:

st.error("❌ Unknown endpoint type")

st.stop()

st.expander("📤 Request payload").code(json.dumps(payload, indent=2), language="json")

response = requests.post(url, headers=headers, json=payload)

response.raise_for_status()

res = response.json()

# ===== Response Parsing =====

if endpoint_type == "chat" or endpoint_type == "image":

output = res["choices"][0]["message"]["content"]

elif endpoint_type == "completion":

output = res["choices"][0]["text"]

elif endpoint_type == "embedding":

vec = res["data"][0]["embedding"]

output = f"🔢 Vector dimensions: {len(vec)}"

st.expander("📐 Vector preview").code(vec[:20])

elif endpoint_type == "rerank":

results = res["results"]

output = "\n\n".join(

[f"{i+1}. The document text (score: {r['relevance_score']:.3f})" for i, r in enumerate(results)]

)

elif endpoint_type == "reasoning":

message = res.get("choices", [{}])[0].get("message", {})

reasoning = message.get("reasoning_content", "❌ No reasoning_content")

content = message.get("content", "❌ No content")

output = f"""📘 <b>response:</b><br>{content}<br><br>🧠 <b>Reasoning:</b><br>{reasoning}"""

st.success("✅ Model response:")

st.markdown(f"<div style='padding:1rem;background:#f0f0f0;border-radius:8px'>{output}</div>", unsafe_allow_html=True)

st.expander("📦 View full response").json(res)

except requests.RequestException as e:

st.error("❌ Request failed")

st.code(str(e))

models.json

AIOS model list. Refer to the LLM usage guide to set the model to be used.

[

"meta-llama/Llama-3.3-70B-Instruct",

"qwen/Qwen3-30B-A3B",

"qwen/QwQ-32B",

"google/gemma-3-27b-it",

"meta-llama/Llama-4-Scout",

"meta-llama/Llama-Guard-4-12B",

"sds/bge-m3",

"sds/bge-reranker-v2-m3"

There is no Korean text to translate.

[

"meta-llama/Llama-3.3-70B-Instruct",

"qwen/Qwen3-30B-A3B",

"qwen/QwQ-32B",

"google/gemma-3-27b-it",

"meta-llama/Llama-4-Scout",

"meta-llama/Llama-Guard-4-12B",

"sds/bge-m3",

"sds/bge-reranker-v2-m3"

There is no Korean text to translate.

endpoints.json

The call type of the AIOS model is defined, and the input screen and result are output differently according to the type.

[

{

"label": "Chat Model",

"path": "/v1/chat/completions",

"type": "chat"

},

{

"label": "Completion Model",

"path": "/v1/completions",

"type": "completion"

},

{

"label": "Embedding Model",

"path": "/v1/embeddings",

"type": "embedding"

},

{

"label": "Image Chat Model",

"path": "/v1/chat/completions",

"type": "image"

},

{

"label": "Rerank Model",

"path": "/v2/rerank",

"type": "rerank"

},

{

"label": "Reasoning Model",

"path": "/v1/chat/completions",

"type": "reasoning"

}

There is no Korean text to translate.

[

{

"label": "Chat Model",

"path": "/v1/chat/completions",

"type": "chat"

},

{

"label": "Completion Model",

"path": "/v1/completions",

"type": "completion"

},

{

"label": "Embedding Model",

"path": "/v1/embeddings",

"type": "embedding"

},

{

"label": "Image Chat Model",

"path": "/v1/chat/completions",

"type": "image"

},

{

"label": "Rerank Model",

"path": "/v2/rerank",

"type": "rerank"

},

{

"label": "Reasoning Model",

"path": "/v1/chat/completions",

"type": "reasoning"

}

There is no Korean text to translate.

Playground usage method

This document covers two ways to run Playground.

Run on Virtual Server

1. Running Streamlit on a Virtual Server

streamlit run app.py --server.port 8501 --server.address 0.0.0.0