The page has been translated by Gen AI.

Overview

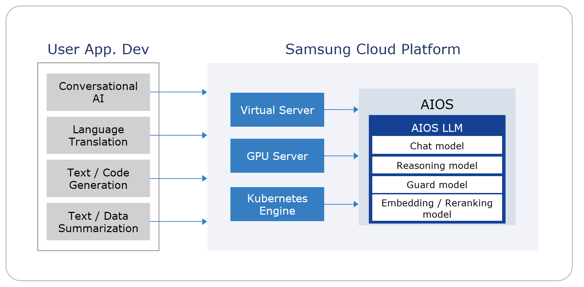

Service Overview

AIOS provides an environment where you can develop AI applications that use LLMs on Virtual Server, GPU Server, and Kubernetes Engine resources created on Samsung Cloud Platform, without installing or configuring a separate LLM service.

Features

- Convenient LLM use: Samsung Cloud Platform provides LLM Endpoints by default, so you can use LLMs directly from Virtual Server, GPU Server, and Kubernetes Engine resources.

- Improved AI development productivity: AI developers can use various models through the same API, and compatibility with the OpenAI and LangChain SDKs makes it easy to integrate with existing development environments and frameworks.

- ServiceWatch integration: You can monitor data through the ServiceWatch service.
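Because the API is described as OpenAI-compatible, switching between models should only require changing the `model` field of the request. The sketch below illustrates this under stated assumptions: it assumes the endpoint follows the OpenAI Chat Completions request format (`model`, `messages`, `max_tokens` sent to `/chat/completions`), and `BASE_URL` is a hypothetical placeholder for the LLM Endpoint shown on your resource's details page. It only builds the request objects and does not send them.

```python
import json
import urllib.request

# Hypothetical placeholder: replace with the LLM Endpoint shown on the
# resource details page. The "/chat/completions" path assumes the endpoint
# follows the OpenAI Chat Completions convention.
BASE_URL = "http://aios-endpoint.example/v1"


def build_chat_request(model: str, prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # the only field that changes between models
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# The same request shape is reused for two chat models from the model table.
for name in ["gpt-oss-120b", "Qwen3-30B-A3B-Thinking-2507"]:
    req = build_chat_request(name, "Summarize AIOS in one sentence.")
    print(req.get_method(), req.full_url, json.loads(req.data)["model"])
```

With the OpenAI Python SDK (v1 or later), the equivalent approach would be to pass the endpoint as `base_url` when constructing the `OpenAI(...)` client; whether an API key or other authentication header is required is not stated on this page, so follow the usage guide on the resource details page.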

Service Architecture Diagram

Provided features

AIOS provides the following features.

- AIOS LLM Endpoint provision: When you create a Virtual Server, GPU Server, or Kubernetes Engine resource, its details page shows the LLM Endpoint information and a usage guide. Following that guide, you can connect to the LLM from the resource and use it.

- AIOS Report: You can view the number of calls and token usage by type, by resource, and by model, as well as the total usage for each LLM.
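The AIOS Report aggregates calls and token usage on the platform side. If you also want a client-side cross-check of usage per model, a minimal sketch follows. It assumes each response carries an OpenAI-style `usage` object (`prompt_tokens`, `completion_tokens`, `total_tokens`); the sample records are made up for illustration and are not real AIOS output. Unlike the report, it groups only by model, not by type or resource.

```python
from collections import defaultdict


def summarize_usage(responses):
    """Count calls and sum token usage per model from OpenAI-style responses."""
    summary = defaultdict(lambda: {"calls": 0, "prompt_tokens": 0,
                                   "completion_tokens": 0, "total_tokens": 0})
    for resp in responses:
        stats = summary[resp["model"]]
        stats["calls"] += 1
        usage = resp.get("usage", {})  # tolerate responses without usage
        for key in ("prompt_tokens", "completion_tokens", "total_tokens"):
            stats[key] += usage.get(key, 0)  # missing fields count as 0
    return dict(summary)


# Illustrative, made-up response records (assumption, not AIOS data).
sample = [
    {"model": "gpt-oss-120b", "usage": {"prompt_tokens": 12, "completion_tokens": 30, "total_tokens": 42}},
    {"model": "gpt-oss-120b", "usage": {"prompt_tokens": 8, "completion_tokens": 20, "total_tokens": 28}},
    {"model": "bge-m3", "usage": {"prompt_tokens": 5, "total_tokens": 5}},
]
for model, stats in summarize_usage(sample).items():
    print(model, stats)
# gpt-oss-120b {'calls': 2, 'prompt_tokens': 20, 'completion_tokens': 50, 'total_tokens': 70}
# bge-m3 {'calls': 1, 'prompt_tokens': 5, 'completion_tokens': 0, 'total_tokens': 5}
```

The platform report remains the authoritative source; a local tally like this only reflects the calls your own code records.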

Provided models

AIOS provides the following LLM models.

| Model name | Model type | Description | Main uses |
|---|---|---|---|
| gpt-oss-120b | Chat+Reasoning | Latest open-source GPT-series model with 120 billion parameters | Research and experimentation, large-scale language understanding, AI services that require complex reasoning and analysis, and building agent-based systems |
| Qwen3-Coder-30B-A3B-Instruct | Code | Qwen3-series code model optimized for code generation and debugging | Software development, AI code assistants, analysis of long documents and repositories |
| Qwen3-30B-A3B-Thinking-2507 | Chat+Reasoning | Qwen3 model enhanced for long-form reasoning and deep thinking (Thinking) | Research, analysis reports, logical writing, mathematics, science, coding |
| Llama-4-Scout | Chat+Vision | Latest Llama model with multimodal capability | Document analysis and summarization, customer support and chatbots |
| Llama-Guard-4-12B | Moderation | Safety and moderation model that improves the reliability and safety of large language model and multimodal AI services | Automatically filtering harmful content in user inputs and model responses |
| bge-m3 | Embedding | Embedding model with three characteristics: multifunctionality, multilingual capability, and support for large inputs | In generative AI, combining dense and sparse retrieval to search external knowledge and provide evidence for answers, balancing accuracy and generalization |
| bge-reranker-v2-m3 | Rerank | Key component of information retrieval, question answering, and chatbot systems that need fast, accurate re-ranking of search results in multilingual environments | Reordering candidate answers or documents for a question by relevance |

Table. AIOS-provided LLM models
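The embedding and rerank rows describe a two-stage retrieval pattern: candidate documents are first retrieved by vector similarity, then reordered by a reranker. The sketch below shows only the dense-retrieval stage, using cosine similarity, and assumes you already have embedding vectors (for example, dense vectors from bge-m3). The 3-dimensional vectors and document IDs are made up for illustration, and the sparse-retrieval part that bge-m3 also supports is not shown.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0  # guard against zero vectors


def dense_top_k(query_vec, doc_vecs, k=2):
    """Return the k document IDs whose vectors are most similar to the query."""
    scored = sorted(doc_vecs.items(),
                    key=lambda item: cosine(query_vec, item[1]),
                    reverse=True)
    return [doc_id for doc_id, _ in scored[:k]]


# Toy vectors standing in for real embeddings (hypothetical data).
docs = {
    "gpu-guide": [0.9, 0.1, 0.0],
    "k8s-guide": [0.1, 0.9, 0.1],
    "billing-faq": [0.0, 0.2, 0.9],
}
query = [0.8, 0.3, 0.0]
print(dense_top_k(query, docs))  # ['gpu-guide', 'k8s-guide']
```

In a full pipeline, the top-k candidates would then be passed together with the query to a reranker such as bge-reranker-v2-m3; the request format for that step is not specified on this page.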

Availability by Region

AIOS availability in each region is shown below.

| Region | Availability |
|---|---|
| Korea West (kr-west1) | Available |
| Korea East (kr-east1) | Not available |
| Korea South 1 (kr-south1) | Not available |
| Korea South 2 (kr-south2) | Not available |
| Korea South 3 (kr-south3) | Not available |

Table. AIOS availability by region

Prerequisite Services

The following services must be configured before you create this service. See the guide for each service for details and prepare them in advance.

| Service category | Service | Description |
|---|---|---|
| Compute | Virtual Server | Virtual server optimized for cloud computing |
| Compute | GPU Server | Virtual server suited to tasks that require fast computation in a cloud environment, such as AI model experiments, prediction, and inference |
| Compute | Cloud Functions | Serverless computing-based FaaS (Function as a Service) |
| Container | Kubernetes Engine | Service that provides lightweight virtual computing, containers, and Kubernetes clusters for managing them |

Table. AIOS prerequisite services