Overview

Service Overview

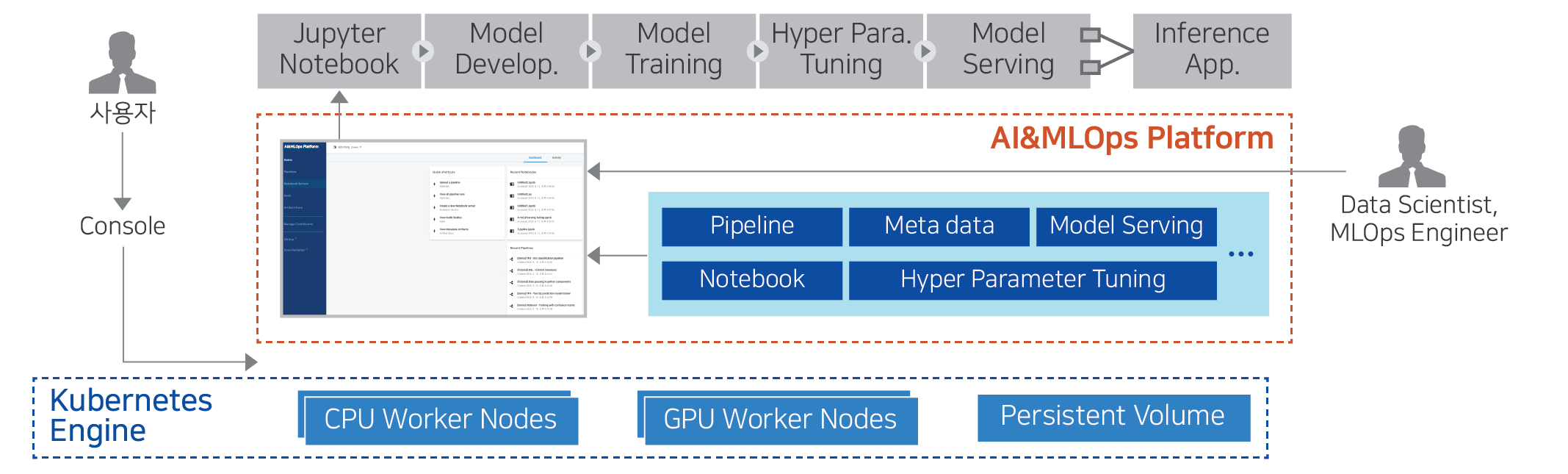

AI&MLOps Platform is a machine learning platform that automates the repetitive tasks across the entire pipeline of machine learning model development, training, and deployment. Built on a Kubernetes-based AI/MLOps environment, the service provides integrated management of training data, models, and operational data.

AI&MLOps Platform is offered as two services: Kubeflow.Mini, which provides the open-source product Kubeflow for developing, training, tuning, and deploying machine learning models, and Enterprise, which adds add-on functions such as distributed training Job execution and monitoring.

Features

Cloud Native MLOps Environment: AI&MLOps Platform provides a machine learning model development environment optimized for the cloud. Because it is based on Kubernetes, it integrates easily with a variety of open-source tools.

Machine Learning Development and Operational Convenience: Provides a standardized environment that supports machine learning frameworks such as TensorFlow, PyTorch, scikit-learn, and Keras. It automates the entire pipeline of model development, training, and deployment, which makes models easy to configure, create, and reuse.

GPU Collaboration Enhancement: Bare Metal Server-based Multi Node GPU and GPUDirect RDMA (Remote Direct Memory Access) can significantly speed up LLM (Large Language Model) and NLP (Natural Language Processing) jobs.

Service Composition Diagram

Provided Features

The AI&MLOps Platform provides the following functions.

ML Model Development Environment and Features

Notebook provision: Creates Jupyter Notebook and VS Code environments that include ML frameworks (TensorFlow, PyTorch, etc.).

TensorBoard: Creates and manages TensorBoard servers. TensorBoard is a tool for visualizing and analyzing the ML model training process.

Volumes: Use volumes to store datasets and models during ML model development. A volume can be connected when a Jupyter Notebook is created.

Distributed Training Job Execution/Management for ML Models

Supports distributed training Job execution and monitoring, as well as inference service management and analysis. (Add-on)

Provides functions for managing the Job Queue and configuring the MLOps environment. (Add-on)

Provides features for efficient GPU resource utilization, such as the Job Scheduler (FIFO, Bin-packing, and Gang-based), GPU Fraction, and GPU resource monitoring. (Add-on)

BM (Bare Metal)-based Multi Node GPU and GPUDirect RDMA (Remote Direct Memory Access) significantly improve the job speed of LLM (Large Language Model) and NLP (Natural Language Processing) workloads. (Add-on)
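The scheduling policies named above (FIFO, Bin-packing, Gang) and GPU Fraction can be illustrated with a small sketch. It is not the platform's scheduler: the `Node` and `Job` classes, the function names, and the tie-breaking rule are hypothetical and chosen only to show how the three policies might combine. Fractional `free_gpus` values stand in for GPU Fraction.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_gpus: float  # fractional values model a shared (fractioned) GPU


@dataclass
class Job:
    name: str
    gpus_per_worker: float
    workers: int = 1


def bin_pack_place(job, nodes):
    """Place every worker of a job, or none of them (gang scheduling).

    Each worker goes to the node with the least free capacity that still
    fits it (bin-packing), so that emptier nodes stay free for large jobs.
    """
    free = {n.name: n.free_gpus for n in nodes}  # tentative copy
    placement = []
    for _ in range(job.workers):
        candidates = [name for name, g in free.items() if g >= job.gpus_per_worker]
        if not candidates:
            return None  # gang: reject the whole job, nodes stay untouched
        best = min(candidates, key=lambda name: (free[name], name))
        free[best] -= job.gpus_per_worker
        placement.append(best)
    for n in nodes:  # commit only after all workers fit
        n.free_gpus = free[n.name]
    return placement


def schedule_fifo(queue, nodes):
    """Walk the queue in submission order and stop at the first blocked job."""
    placed = {}
    for job in queue:
        result = bin_pack_place(job, nodes)
        if result is None:
            break  # strict FIFO: later jobs wait behind the blocked one
        placed[job.name] = result
    return placed


if __name__ == "__main__":
    nodes = [Node("node-a", 4), Node("node-b", 2)]
    queue = [Job("notebook", 0.5), Job("train", 2, workers=2)]
    print(schedule_fifo(queue, nodes))
```

In this sketch the half-GPU notebook job lands on the smaller node, which leaves the larger node intact for the two-worker training job. A real scheduler would also handle priorities, preemption, and backfilling, which are omitted here.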

ML Model Experiment Management and Pipeline

ML pipeline experiment management is provided through Experiments (KFP). It supports pipeline automation, which lets you configure ML tasks to run step by step.
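The idea of running ML tasks step by step can be sketched in plain Python. This is only an illustration of dependency-ordered execution and does not use the KFP SDK: the step names (`load_data`, `preprocess`, `train`, `evaluate`), the `run_pipeline` helper, and the toy "model" (a mean value) are all hypothetical.

```python
from graphlib import TopologicalSorter


def load_data():
    return [1.0, 2.0, 3.0, 4.0]


def preprocess(data):
    # Scale values into the range (0, 1] by dividing by the maximum.
    peak = max(data)
    return [x / peak for x in data]


def train(data):
    # Toy "model": the mean of the preprocessed values.
    return sum(data) / len(data)


def evaluate(model):
    return {"mean": model}


def run_pipeline(steps, deps):
    """Run each step once, after every step it depends on.

    `deps` maps a step name to the names of its upstream steps; the
    results of those upstream steps are passed in as positional arguments.
    """
    results = {}
    order = list(TopologicalSorter(deps).static_order())
    for name in order:
        inputs = [results[d] for d in deps.get(name, ())]
        results[name] = steps[name](*inputs)
    return order, results


STEPS = {
    "load_data": load_data,
    "preprocess": preprocess,
    "train": train,
    "evaluate": evaluate,
}
DEPS = {
    "preprocess": ["load_data"],
    "train": ["preprocess"],
    "evaluate": ["train"],
}

if __name__ == "__main__":
    order, results = run_pipeline(STEPS, DEPS)
    print(order)
    print(results["evaluate"])
```

A pipeline engine adds much more on top of this ordering, such as running each step in its own container, caching outputs, and recording runs as experiments.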

Components

Operating System Version

The operating systems supported by the AI&MLOps Platform are as follows.

| Operating System (OS) | Version |
|---|---|
| RHEL | RHEL 8.3 |
| Ubuntu | Ubuntu 18.04, Ubuntu 20.04, Ubuntu 22.04 |

Regional Provision Status

The AI&MLOps Platform is available in the following regions.

| Region | Availability |
|---|---|
| Korea West (kr-west1) | Provided |
| Korea East (kr-east1) | Provided |
| Korea South 1 (kr-south1) | Not provided |
| Korea South 2 (kr-south2) | Not provided |
| Korea South 3, Busan (kr-south3) | Not provided |

Prerequisite Services

The following services must be configured before you create this service. For details, refer to the guide for each service and prepare them in advance.

| Service Category | Service | Detailed Description |
|---|---|---|
| Container | Kubernetes Engine | Kubernetes container orchestration service |