We offer AI/ML services that enable easy and convenient development of ML/DL (Machine Learning/Deep Learning) models and the setup of training environments.


AI-ML

- 1: CloudML

- 1.1: Overview

- 1.2: How-to guides

- 1.3: API Reference

- 1.4: CLI Reference

- 1.5: Release Note

- 2: AI&MLOps Platform

- 2.1: Overview

- 2.2: How-to guides

- 2.2.1: Cluster Deployment

- 2.2.2: Kubeflow Usage Guide

- 2.3: API Reference

- 2.4: CLI Reference

- 2.5: Release Note

1 - CloudML

1.1 - Overview

Service Overview

CloudML is an integrated platform that supports the entire machine learning process—from data analysis to model development, training, validation, and deployment—in a cloud environment.

Features

- CloudML is designed so that users in various roles, such as analysts, machine learning engineers, and developers, can collaborate in a single environment and easily design and operate machine learning workflows.

- CloudML provides an analysis environment based on Python and R, so users with programming experience can use the platform more flexibly and effectively. In particular, the generative AI-based Copilot feature lets you write, refactor, and correct code and get function recommendations through natural language input, which increases analytical productivity and accessibility.

- CloudML systematically supports each stage, including analysis environment configuration, model development and serving, analysis automation, and visualization. Repeated experiments and operational automation help improve both productivity and model quality.

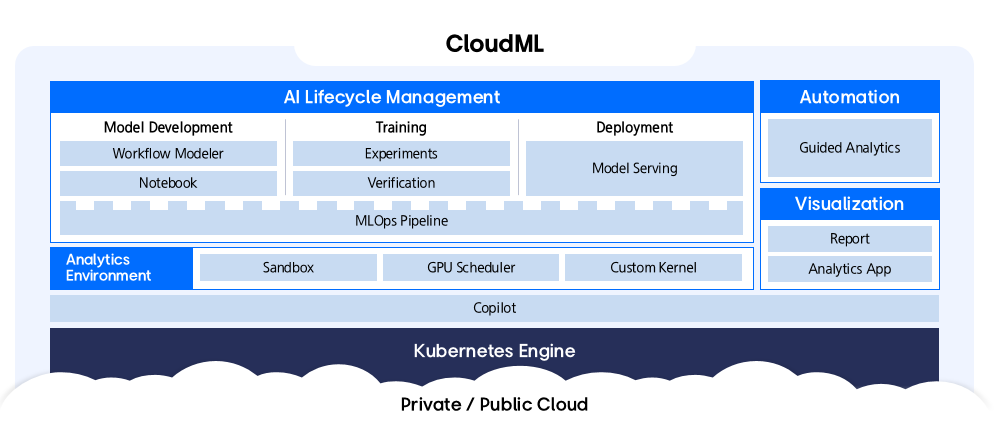

Service Architecture Diagram

CloudML consists of an analysis environment, machine learning lifecycle management, automated analysis support, visualization, and a generative AI‑based Copilot feature, allowing users to perform the entire machine‑learning process in an integrated manner.

Provided features

CloudML provides the following features.

- Visual Modeling: Provides an intuitive drag-and-drop interface that lets you build and deploy machine learning models without coding. You can easily manage the entire process from data loading to model evaluation and deployment.

- Code-based Development: In the Jupyter Notebook environment, you can freely write and execute code using Python, R, and others. It provides powerful features for advanced users and researchers.

- Workflow Automation: It efficiently automates complex machine learning workflows such as data preprocessing, model training, evaluation, and deployment.

- Experiment Management: You can train machine learning models with various parameter combinations and systematically manage and compare the results.

- Copilot: Provides a natural-language AI assistant that guides and automates the model development process. It supports tasks such as code generation, refactoring, error correction, and documentation, which improves productivity.

- Integrated Platform: All features are integrated within CloudML for convenient use.

- Scalability and Flexibility: Supports scaling computing resources and connecting various data sources as needed.

Constraints

Before using CloudML, check the following constraints and reflect them in your service usage plan. Because CloudML runs in a Kubernetes-based environment, the cluster resources must be configured appropriately for stable service operation.

- Application Basic Resources: A minimum of 24 vCPU cores and 96 GiB of memory are allocated by default to run the application.

- Analysis Task Resources: To perform analysis tasks, additional CPU or GPU resource configuration is required beyond the basic resources above. It should be configured appropriately, taking the workload of the analysis tasks into account.

- Copilot (CPU-based usage): To run Copilot on CPU resources, a minimum of 16 vCPU cores and 10 GiB of memory are required. In this case, the CPU resources available for analysis tasks are reduced accordingly.

- Copilot (GPU-based usage): Copilot can also be configured to use dedicated GPU resources.

- Supported LLMs: Currently, Llama3 is the only LLM that can be used with Copilot.
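The resource figures above can be turned into a quick capacity estimate. The following is a minimal Python sketch, not part of CloudML: it assumes, as a simplification, that the whole cluster is one pool from which the application baseline and the CPU-based Copilot reservation are subtracted. The `Resources` type, the function name, and the 40 vCPU / 160 GiB cluster size are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resources:
    vcpu: int
    memory_gib: int


# Figures taken from the constraints listed above.
APP_BASELINE = Resources(vcpu=24, memory_gib=96)    # application basic resources
COPILOT_ON_CPU = Resources(vcpu=16, memory_gib=10)  # Copilot running on CPU


def remaining_for_analysis(cluster: Resources, copilot_on_cpu: bool) -> Resources:
    """Return what is left for analysis tasks after the fixed reservations.

    Assumption: all reservations come out of a single shared pool.
    """
    reserved_vcpu = APP_BASELINE.vcpu
    reserved_mem = APP_BASELINE.memory_gib
    if copilot_on_cpu:
        reserved_vcpu += COPILOT_ON_CPU.vcpu
        reserved_mem += COPILOT_ON_CPU.memory_gib
    if cluster.vcpu < reserved_vcpu or cluster.memory_gib < reserved_mem:
        raise ValueError("cluster is smaller than the reserved resources")
    return Resources(cluster.vcpu - reserved_vcpu, cluster.memory_gib - reserved_mem)


cluster = Resources(vcpu=40, memory_gib=160)  # hypothetical cluster size
print(remaining_for_analysis(cluster, copilot_on_cpu=False))
print(remaining_for_analysis(cluster, copilot_on_cpu=True))
```

Under these assumptions, a 40 vCPU / 160 GiB cluster leaves 16 vCPU and 64 GiB for analysis without Copilot, and no vCPU (with 54 GiB) when Copilot runs on CPU, which illustrates why CPU-based Copilot usage needs to be planned together with the analysis workload.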

Provision status by region

CloudML is available in the following environments.

| Region | Availability |
|---|---|
| Korea West (kr-west1) | Available |
| Korea East (kr-east1) | Available |
| Korea South 1 (kr-south1) | Not available |
| Korea South 2 (kr-south2) | Not available |
| Korea South 3 (kr-south3) | Not available |

Prerequisite Services

The following services must be configured before you create CloudML. For details, refer to the guide provided for each service and prepare them in advance.

| Service category | Service | Detailed description |
|---|---|---|
| Container | Container Registry | A service that stores, manages, and shares container images |
| Container | Kubernetes Engine | Kubernetes container orchestration service |
| Networking | Load Balancer | A service that automatically distributes server traffic load |

1.2 - How-to guides

Create CloudML

Users can create the service by entering the required CloudML information and selecting detailed options through the Samsung Cloud Platform Console.

To create CloudML, follow these steps.

- Click the All Services > AI/ML > CloudML menu. You will be taken to the CloudML Service Home page.

- On the Service Home page, click the Create CloudML button. You will be taken to the Create CloudML page.

- On the Create CloudML page, enter the information required to create the service and select detailed options.

  - In the Version Selection area, select the version of the service.

| Category | Required | Detailed description |
|---|---|---|
| Select version | Required | Select the CloudML version |

Table. CloudML service version selection options

  - In the SCP Kubernetes Engine deployment area, select the options needed to create the service.

| Category | Required | Detailed description |
|---|---|---|
| Cluster name | Required | Select the Kubernetes Engine cluster |

Table. CloudML service cluster selection options

  - In the Service Information Input area, select the options required to create the service.

| Category | Required | Detailed description |
|---|---|---|
| CloudML name | Required | Enter the service name |
| Description | Optional | Enter a service description |
| Domain name | Required | Enter the domain name to be used for the service. Enter 2-63 characters using lowercase English letters, numbers, and special characters |
| Endpoint | Required | Select the endpoint to use in the service. Choose between Private and Public |
| Copilot | Optional | Select whether to use Copilot in the service. Selecting Apply requires agreement to the terms in the popup window. If the selected cluster is not configured with GPUs dedicated to LLMs, or the allocated LLM resources are insufficient, Copilot cannot be applied |
| Resource Information | Required | Displays the resource information of the selected cluster |
| Enter SCR information | Required | Enter the SCR information to be used in the service: private endpoint, authentication key, and secret key |

Table. CloudML service information input items

  - In the Additional Information Input area, enter or select the required information.

| Category | Required | Detailed description |
|---|---|---|
| Tag | Optional | Add tags. Up to 50 tags can be added per resource. After clicking the Add Tag button, enter or select the Key and Value |

Table. CloudML additional information input items

- In the Summary panel, check the detailed information and the estimated billing amount, and click the Complete button.

- When creation is complete, check the created resource on the CloudML List page.

Check CloudML detailed information

You can view and edit the full list of resources and detailed information for the CloudML service. The CloudML Details page consists of the Details, Tags, and Activity History tabs.

To view the detailed information of CloudML, follow these steps.

- Click the All Services > AI/ML > CloudML menu. Navigate to CloudML’s Service Home page.

- On the Service Home page, click the resource (CloudML) to view detailed information. You will be taken to the CloudML Details page.

- The CloudML Details page displays CloudML's status information and detailed information, and consists of the Details, Tags, and Activity History tabs.

| Category | Detailed description |
|---|---|
| Service status | CloudML status. Creating: being created. Deployed: created and operating normally. Updating: settings being updated. Terminating: being terminated. Error: an error occurred |
| Connection Guide | Service access guide: information on the host to register on the user's PC |
| Service termination | Cancel Service button |

Table. CloudML status information and additional features

Detailed Information

The CloudML List page lets you view detailed information about the selected resource and modify it if necessary.

| Category | Detailed description |
|---|---|
| Service | Service name |
| Resource type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource name | Resource name |
| Resource ID | Unique resource ID in the service |
| Creator | User who created the service |
| Creation date and time | Date and time the service was created |
| Modifier | User who edited the service information |
| Modification date and time | Date and time the service information was modified |
| Product name | CloudML name |
| Copilot | Whether Copilot is used |
| Description | Description of the service |
| Cluster name | Name of the selected Kubernetes Engine cluster |
| Domain name | Service domain name that was entered |
| Version | Selected service version |
| Installation node information | Information on the nodes installed in the cluster |
| SCR information | SCR information that was entered |

Tags

On the CloudML List page, you can view the tag information of the selected resource, and add, modify, or delete tags.

| Category | Detailed description |
|---|---|
| Tag list | Tag list |

Activity History

On the CloudML List page, you can view the operation history of the selected resource.

| Category | Detailed description |
|---|---|
| Activity history list | Resource change history |

Terminate CloudML Service

Users can cancel the CloudML service through the Samsung Cloud Platform Console.

To cancel CloudML, follow the steps below.

- Click the All Services > AI/ML > CloudML menu. Navigate to CloudML’s Service Home page.

- Click the Cancel Service button on the Service Home page. A service cancellation alert window appears.

- Enter the CloudML name to delete in the dialog and click the Confirm button.

1.2.1 - Kubernetes Cluster Configuration

Configuring a Kubernetes cluster

To apply for the CloudML service, a dedicated cluster for CloudML must be set up. A dedicated cluster means creating a Kubernetes Engine that meets or exceeds the required minimum specifications and configuring several necessary settings. Create a dedicated cluster in advance before applying for the CloudML service.

- For instructions on creating a cluster, see the Cluster Creation guide.

- CloudML exposes an HTTPS endpoint on port 443. When creating a cluster, select Public Endpoint.

Recommended specifications for cluster nodes and storage

Cluster nodes can be added or modified after the cluster is created. The following are the recommended specifications for cluster nodes and storage that should be prepared to install CloudML for five users.

| Category | Item | Role | Capacity |
|---|---|---|---|
| Cluster node | Kubernetes node pool (Virtual Server) | Application execution | 24 cores / 96 GiB |
| Cluster node | Kubernetes node pool (Virtual Server) | Analysis execution | 8 cores / 32 GiB x 2 nodes |
| Storage | File Storage | Data storage | 1 TB |

If you need to change specifications such as adjusting the number of nodes, adding GPU nodes, or expanding resources, please request technical support.

- Technical Support Information Page: https://www.samsungsds.com/kr/support/support_tech.html

- Technical support request email: brightics.cs@samsung.com

Add a label to a node

Add labels to the nodes directly according to the role-specific recommendations in the cluster node and storage specifications.

- For instructions on adding labels to a node YAML, refer to the Edit Node YAML guide.

To add a label to a cluster node, follow these steps.

- Click the All Services > Container > Kubernetes Engine menu. Navigate to the Service Home page of Kubernetes Engine.

- On the Service Home page, click the Node menu. You will be taken to the Node List page.

- On the Node List page, select the cluster for which you want to view detailed information from the gear button at the top left, then click the Confirm button.

- Select the node you want to view details for and click it. You will be taken to the Node Details page.

- On the Node Details page, click the YAML tab. You will be taken to the YAML tab page.

- On the YAML tab page, click the Edit button. The node edit window opens.

- In the node edit window, add a label that matches the role and click the Save button.

- Check the following information and add a label that matches the node specifications.

| Category | Purpose | Label |
|---|---|---|
| CPU node | App | `node.kubernetes.io/nodetype: ml-app` |
| CPU node | Analysis | `node.kubernetes.io/nodetype: ml-analytics` |
| GPU node | Analysis | `node.kubernetes.io/nodetype: ml-analytics-gpu` |
| GPU node | Copilot | `node.kubernetes.io/nodetype: ml-gpu` |

Table. Kubernetes node label items by purpose
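The mapping from node role to label can be captured in a small lookup. The following is a minimal Python sketch, not an official tool: the function names are hypothetical, and it assumes you have `kubectl` access to the cluster as an alternative to editing the node YAML in the console. Only the label key and values come from the list above.

```python
NODE_TYPE_KEY = "node.kubernetes.io/nodetype"

# (node category, purpose) -> label value, as listed above.
NODE_TYPE_VALUES = {
    ("cpu", "app"): "ml-app",
    ("cpu", "analysis"): "ml-analytics",
    ("gpu", "analysis"): "ml-analytics-gpu",
    ("gpu", "copilot"): "ml-gpu",
}


def node_label(category: str, purpose: str) -> dict:
    """Return the label (as a one-entry dict) for a node category and purpose."""
    key = (category.lower(), purpose.lower())
    if key not in NODE_TYPE_VALUES:
        raise ValueError(f"no label defined for {category!r} / {purpose!r}")
    return {NODE_TYPE_KEY: NODE_TYPE_VALUES[key]}


def kubectl_label_command(node_name: str, category: str, purpose: str) -> str:
    """Build the equivalent `kubectl label` command line for one node."""
    key, value = next(iter(node_label(category, purpose).items()))
    return f"kubectl label node {node_name} {key}={value} --overwrite"


# "node-1" is a hypothetical node name.
print(kubectl_label_command("node-1", "GPU", "Copilot"))
```

The dictionary returned by `node_label` has the same shape as the `metadata.labels` entry added in the node edit window, so it can also serve as a checklist when editing the YAML by hand.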

1.3 - API Reference

1.4 - CLI Reference

1.5 - Release Note

CloudML

- We have launched the CloudML service, which supports the entire machine learning process—from data analysis to model development, training, validation, and deployment—in a cloud environment through the Samsung Cloud Platform.

2 - AI&MLOps Platform

2.1 - Overview

Service Overview

AI&MLOps Platform is a machine learning platform that automates repetitive tasks across the entire pipeline of developing, training, and deploying machine learning models. Through the AI&MLOps Platform service, integrated management of training data, models, and operational data is possible on a Kubernetes-based AI/MLOps environment.

The AI&MLOps Platform provides an Enterprise service that adds add-on features, such as distributed training job execution and monitoring, to Kubeflow Mini, an open-source product that enables development, training, tuning, and deployment of machine learning models.

Features

Providing a Cloud Native MLOps Environment: The AI&MLOps Platform provides a cloud‑optimized machine learning model development environment, and its Kubernetes‑based architecture makes integration with various open‑source tools convenient.

Machine Learning Development and Operations Convenience: Provides a standardized environment that supports various machine learning frameworks such as TensorFlow, PyTorch, scikit-learn, Keras, etc. By automating the entire pipeline for developing, training, and deploying machine learning models, it makes model composition and creation easy and promotes reusability.

Enhanced GPU Integration: By leveraging Multi‑Node GPU on a Bare Metal Server and GPUDirect RDMA (Remote Direct Memory Access), the job speed of LLM (Large Language Model) and natural language processing (NLP) can be dramatically improved.

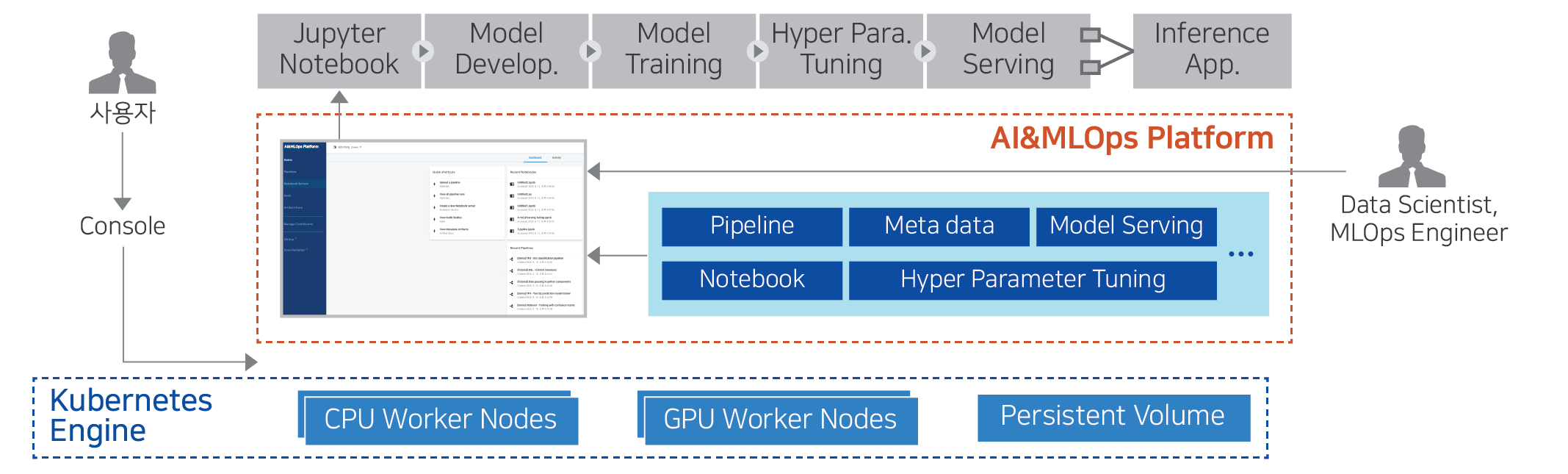

Service Diagram

Provided features

The AI&MLOps Platform provides the following features.

ML Model Development Environment and Features

- Notebook Provision: Creates Jupyter Notebooks and VS Code environments that include ML frameworks such as TensorFlow and PyTorch.

- TensorBoard: Creates and manages servers for TensorBoard, a tool for visualizing and analyzing the ML model training process.

- Volumes: Stores the datasets and models used during ML model development. A Volume can be connected when creating a Jupyter Notebook.

ML model distributed training Job execution/management

- Supports execution and monitoring of distributed training jobs, as well as management and analysis of inference services. (Add-on)

- Provides various features for configuring MLOps environments, such as Job Queue management. (Add-on)

- Provides efficient GPU resource utilization features such as Job Scheduler (FIFO, Bin-packing, Gang-based), GPU Fraction, and GPU resource monitoring, etc. (Add-on)

- Speeds up LLM (Large Language Model) and natural language processing (NLP) jobs by using Bare Metal Server-based Multi-Node GPU and GPUDirect RDMA (Remote Direct Memory Access). (Add-on)

ML Model Experiment Management and Pipeline

- Provides Experiments (KFP) for managing ML pipeline experiments.

- Supports pipeline automation features for configuring and executing ML tasks in stages.

Component

Operating System version

The operating systems supported by the AI&MLOps Platform are as follows.

| Operating System (OS) | Version |
|---|---|
| RHEL | RHEL 8.3 |
| Ubuntu | Ubuntu 18.04, Ubuntu 20.04, Ubuntu 22.04 |

Provision status by region

The AI&MLOps Platform is available in the environments below.

| Region | Availability |
|---|---|
| Korea West (kr-west1) | Available |
| Korea East (kr-east1) | Available |
| Korea South 1 (kr-south1) | Not available |
| Korea South 2 (kr-south2) | Not available |
| Korea South 3 (kr-south3) | Not available |

Prerequisite Services

The following services must be configured before you create the AI&MLOps Platform. For details, refer to the guide provided for each service and prepare them in advance.

| Service category | Service | Detailed description |
|---|---|---|
| Container | Kubernetes Engine | Kubernetes container orchestration service |

2.2 - How-to guides

Create AI&MLOps Platform

Users can create the service by entering the required information for the AI&MLOps Platform and selecting detailed options through the Samsung Cloud Platform Console.

To create an AI&MLOps Platform, follow these steps.

- Click the All Services > AI/ML > AI&MLOps Platform menu. You will be taken to the Service Home page of AI&MLOps Platform.

- On the Service Home page, click the Create AI&MLOps Platform button. You will be taken to the Create AI&MLOps Platform page.

- On the Service Type Selection page of AI&MLOps Platform creation, enter the information required to create the service and select detailed options.

  - In the Service Type and Version Selection area, select the service type.

| Category | Required | Detailed description |
|---|---|---|
| Service type | Required | Select the service type: AI&MLOps Platform or Kubeflow Mini |
| Service type version | Required | Select the version of the selected service from the list of offered versions |

Table. AI&MLOps Platform service types and version selection items

  - In the Cluster Deployment Area area, select the options required to create the service.

| Category | Required | Detailed description |
|---|---|---|
| Cluster deployment area | Required | Deploy on SCP Kubernetes Engine: select a previously created Kubernetes Engine. Deploy to a new cluster: create a Kubernetes Engine together with the AI&MLOps Platform |

Table. AI&MLOps Platform service cluster deployment area items

Reference

The configuration elements on the following Service Information Input page vary depending on the cluster deployment settings.

- On the Service Information Input page of AI&MLOps Platform creation, enter the information required to create the service and select detailed options.

  - You can select the cluster deployment area.

  - For instructions on configuring Deploy to a new cluster, see the Deploy to a new cluster guide.

  - For instructions on configuring Deploy on SCP Kubernetes Engine, see the Deploy on SCP Kubernetes Engine guide.

  - For the Kubernetes cluster specifications required for installation, see the Kubernetes Cluster specifications guide.

- On the Creation Information Check page of AI&MLOps Platform creation, review the detailed information you entered and the estimated billing amount, and click the Complete button.

- Once creation is complete, check the created resource on the AI&MLOps Platform Service List page.

Check detailed information of AI&MLOps Platform

The AI&MLOps Platform service allows you to view and edit the full list of resources and detailed information. AI&MLOps Platform Service Details page consists of Details, Tags, Activity History tabs.

To view detailed information about the AI&MLOps Platform service, follow the steps below.

- Click the All Services > AI/ML > AI&MLOps Platform Service menu. Navigate to the Service Home page of the AI&MLOps Platform Service.

- On the Service Home page, click the AI&MLOps Platform menu. You will be taken to the AI&MLOps Platform Service List page.

- On the AI&MLOps Platform Service List page, click the resource to view detailed information. You will be taken to the AI&MLOps Platform Service Details page.

- AI&MLOps Platform Service Details page displays status information and additional feature information, and consists of Details, Tags, Activity History tabs.

Detailed Information

The AI&MLOps Platform Service List page lets you view detailed information about the selected resource and edit it if needed.

| Category | Detailed description |
|---|---|
| Service | Service name |
| Resource type | Resource type |
| SRN | Unique resource ID in Samsung Cloud Platform |
| Resource name | Resource name |
| Resource ID | Unique resource ID in the service |
| Creator | User who created the service |
| Creation date and time | Date and time the service was created |
| Modifier | User who edited the service information |
| Modification date and time | Date and time the service information was modified |
| Dashboard status | Dashboard status value |
| Service name | Service name |
| Admin Email Address | Administrator email address |
| Image name | Service image name |
| Version | Image version |
| Service type | Deployed service type |

Tags

The AI&MLOps Platform Service List page lets you view the tag information of the selected resource, and add, modify, or delete tags.

| Category | Detailed description |
|---|---|
| Tag list | Tag list |

Activity History

The AI&MLOps Platform Service List page lets you view the operation history of the selected resource.

| Category | Detailed description |
|---|---|
| Activity history list | Resource change history |

Access AI&MLOps Platform

To access the AI&MLOps Platform dashboard, you must complete the prerequisite steps.

Preliminary work

To access the AI&MLOps Platform, you must preconfigure the relevant ports and the IP addresses required for connection in the Security Group and Firewall (if using a firewall).

Kubeflow Mini: port 31390 (inbound rules of Security Group, VPC firewall)

To access the cluster’s worker node, you must set an inbound rule for port 22 on the Security Group and Firewall (when using a VPC firewall).

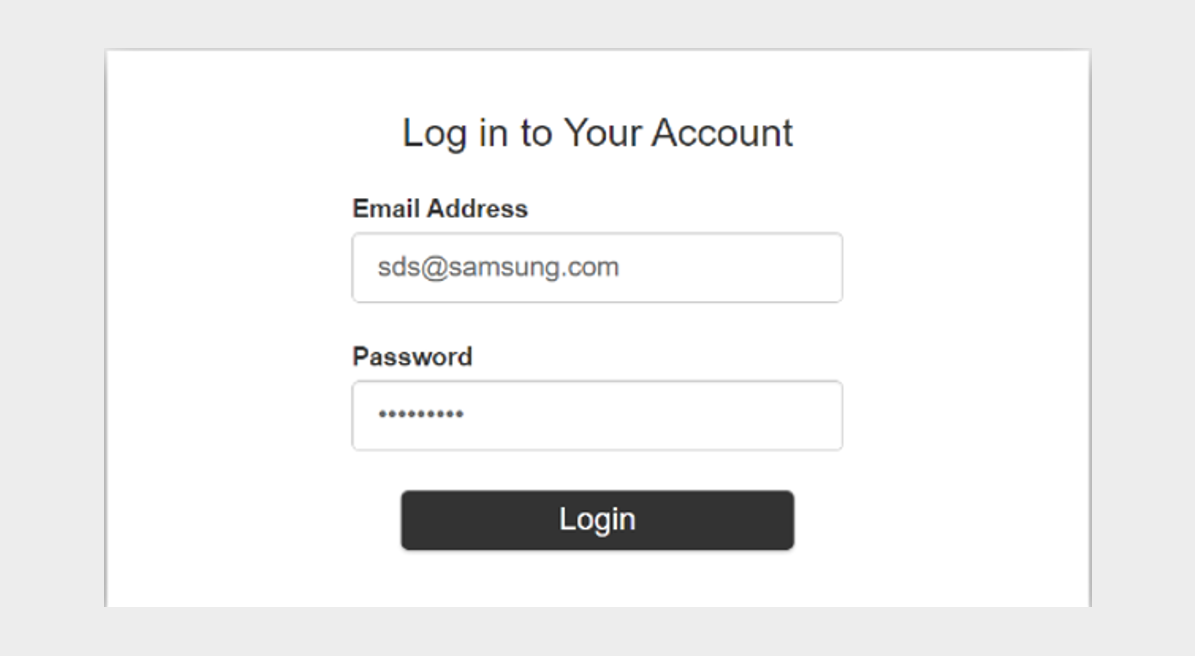
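Once the inbound rules are in place, reachability can be checked from the client side. The following is a minimal Python sketch using only the standard library; it is not part of the platform. The host address in the commented usage is a hypothetical placeholder, and only the port numbers 31390 and 22 come from the text above.

```python
import socket

# Ports named in the prerequisites above.
REQUIRED_PORTS = {
    "Kubeflow Mini dashboard": 31390,
    "worker node SSH": 22,
}


def is_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_required_ports(host: str, timeout: float = 3.0) -> dict:
    """Map each required port's description to its reachability."""
    return {
        name: is_port_open(host, port, timeout)
        for name, port in REQUIRED_PORTS.items()
    }


# Example usage (203.0.113.10 is a placeholder address, replace with your node IP):
# print(check_required_ports("203.0.113.10"))
```

A `False` result only shows that the TCP connection failed from where the script runs; it does not distinguish between a missing Security Group rule, a firewall rule, or a service that is not listening.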

Access Dashboard

To access the AI&MLOps Platform service, follow these steps.

- Click the All Services > AI/ML > AI&MLOps Platform Service menu. You will be taken to the Service Home page of the AI&MLOps Platform service.

- Click the AI&MLOps Platform Service menu on the Service Home page. You will be taken to the AI&MLOps Platform Service List page.

- Click the resource to view detailed information on the AI&MLOps Platform Service List page. You will be taken to the AI&MLOps Platform Details page.

- On the AI&MLOps Platform Details page, click the Access Guide button. The Access Guide popup window opens.

- In the Access Guide popup window, click the dashboard's URL link. You will be taken to the corresponding dashboard page.

Terminate AI&MLOps Platform

You can cancel the unused service to reduce operating costs. However, canceling the service may cause the running service to stop immediately, so you should thoroughly consider the impact of service interruption before proceeding with the cancellation.

To cancel the AI&MLOps Platform, follow the steps below.

- Click the All Services > AI/ML > AI&MLOps Platform Service menu. Navigate to the Service Home page of the AI&MLOps Platform Service.

- On the Service Home page, click the AI&MLOps Platform Service menu. You will be taken to the AI&MLOps Platform Service List page.

- Click the resource to view detailed information on the AI&MLOps Platform Service List page. You will be taken to the AI&MLOps Platform Details page.

- On the AI&MLOps Platform Details page, click the Cancel Service button. The Cancel Service popup window opens.

- After entering the service name for verification, click Confirm.

- When termination is complete, check on the AI&MLOps Platform Service List page whether the resource has been terminated.

2.2.1 - Cluster Deployment

Cluster deployment area

When you create the AI&MLOps Platform in Samsung Cloud Platform, the service type selection step offers two cluster deployment areas.

Before proceeding with the cluster deployment, be sure to verify the Kubernetes cluster specifications required for installation.

- Regardless of the selected cluster deployment area, you must verify the Kubernetes cluster specifications in advance.

- For detailed specification information, refer to the Cluster Specification guide.

The installation details on the Service Information Input page of AI&MLOps Platform creation differ depending on the selected cluster deployment area.

Deploy on SCP Kubernetes Engine

- Click the All Services > AI/ML > AI&MLOps Platform menu. You will be taken to the Service Home page of AI&MLOps Platform.

- On the Service Home page, click the Create AI&MLOps Platform button. You will be taken to the Create AI&MLOps Platform page.

- On the Service Type Selection page of AI&MLOps Platform creation, enter the information required to create the service and select detailed options. For Cluster deployment, select the Deploy on SCP Kubernetes Engine option.

- On the Service Information Input page of AI&MLOps Platform creation, enter the information required to create the service and select detailed options.

- In the Service Information Input area, enter or view the information required to create the service.

| Category | Required | Detailed description |
|---|---|---|
| Service name | Required | Enter the AI&MLOps Platform name. The name cannot be duplicated within a project |
| Storage Class | Required | The Storage Class is registered automatically |
| Installation node information | View | View the node information of the selected Kubernetes Engine |
| Admin Email Address | Required | Enter the administrator (Admin) email address to use for login |
| Password | Required | Enter the password to use for login |
| Confirm Password | Required | Re-enter the password to prevent entry errors |

Table. AI&MLOps Platform service information input fields

- In the Additional Information Input area, enter or select the information required to create the service.

| Category | Required | Detailed description |
|---|---|---|
| Tag | Optional | Select tags to add to the AI&MLOps Platform. Click Add Tag to create a new tag or add an existing tag. You can register up to 50 tags. Newly added tags are applied after service creation is complete |

Table. AI&MLOps Platform service additional information input fields

Deploy to a new cluster

- Click the All Services > AI/ML > AI&MLOps Platform menu. You will be taken to the Service Home page of AI&MLOps Platform.

- On the Service Home page, click the Create AI&MLOps Platform button. You will be taken to the Create AI&MLOps Platform page.

- On the Service Type Selection page of AI&MLOps Platform creation, enter the information required to create the service and select detailed options. For Cluster deployment, select the Deploy to a new cluster option.

- On the Service Information Input page of AI&MLOps Platform creation, enter the information required to create the service and select detailed options.

- In the Service Information Input area, enter or view the information required to create the service.

| Category | Required | Detailed description |
|---|---|---|
| Service name | Required | Enter the AI&MLOps Platform name. The name cannot be duplicated within a project |
| Storage Class | Required | The Storage Class is registered automatically |
| Installation node information | View | View the node information of the selected Kubernetes Engine |
| Admin Email Address | Required | Enter the administrator (Admin) email address to use for login |
| Password | Required | Enter the password to use for login |
| Confirm Password | Required | Re-enter the password to prevent entry errors |

Table. AI&MLOps Platform service information input items

- In the Kubernetes Engine Information Input area, enter or select the required information.

| Category | Required | Detailed description |
|---|---|---|
| Cluster name | Required | Cluster name. Must start with an English letter and may contain English letters, numbers, and the special character (-). Enter 3 to 30 characters |
| Control plane settings > Kubernetes version | Required | Select the Kubernetes version |
| Control plane settings > Control plane logging | Optional | Select whether to enable control plane logging. Audit/Event logs from the cluster control plane can be viewed in Cloud Monitoring's log analysis. Log storage of up to 1 GB for all services within the account is provided for free, and logs exceeding 1 GB are deleted sequentially. For more details, see Cloud Monitoring > Log Analysis |
| Network Settings | Required | Network connection settings for the node pool. VPC: select a pre-created VPC. Subnet: select a standard Subnet from the subnets of the chosen VPC. Security Group: click the Search button, then select a Security Group in the Select Security Group popup. Load Balancer: provides the `type: LoadBalancer` feature of a Kubernetes Service object; select whether to use it and select a load balancer on the same network; this cannot be changed after configuration |
| File Storage Settings | Required | Select the file storage volume to use in the cluster. Default Volume (NFS): select File Storage using the Search button. The default volume file storage provides only the NFS format |

Table. Kubernetes Engine service information input items
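The cluster name rule stated above (starts with an English letter; English letters, numbers, and hyphens; 3 to 30 characters) can be checked before submitting the form. The following is a minimal Python sketch, not the console's actual validation: it assumes that both uppercase and lowercase letters are accepted, which the text does not specify, and the function name is hypothetical.

```python
import re

# First character: a letter. Remaining 2 to 29 characters: letters, digits, or "-".
# Assumption: upper- and lowercase English letters are both allowed.
CLUSTER_NAME_RE = re.compile(r"[A-Za-z][A-Za-z0-9-]{2,29}")


def is_valid_cluster_name(name: str) -> bool:
    """Return True if name satisfies the documented cluster name rule."""
    return CLUSTER_NAME_RE.fullmatch(name) is not None


for candidate in ["ml-cluster-01", "1cluster", "ab", "ml_cluster"]:
    print(candidate, is_valid_cluster_name(candidate))
```

Here `ml-cluster-01` passes, while `1cluster` (starts with a digit), `ab` (too short), and `ml_cluster` (underscore is not an allowed character) are rejected.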

- In the Node Pool Information Input area, enter or select the required information.

| Category | Required | Detailed description |
|---|---|---|
| Node pool configuration | Required | Select the node pool information. Items marked with an asterisk (*) are required and must be entered. For the AI&MLOps Platform, the image size may keep growing with usage, so set Block Storage to at least 200 GB for smooth system configuration |

Table. AI&MLOps Platform service information input items

Reference

- A Windows OS node pool can be created only when an additional storage (CIFS) volume is in use in the cluster.

- Volume encryption for node pool Block Storage can be set only at initial creation.

- Enabling encryption may cause performance degradation in some features.

- The node count, minimum node count, and maximum node count can be entered only when automatic scale-out/scale-in of the node pool is selected.

- In the Additional Information Input area, enter or select the required information.

| Category | Required | Detailed description |
|---|---|---|
| Tag | Optional | Select tags to add to the AI&MLOps Platform. Click Add Tag to create a new tag or add an existing tag. You can register up to 50 tags. Newly added tags are applied after service creation is complete |

Table. AI&MLOps Platform service information input items

Cluster specifications

To use the AI&MLOps Platform, you need a Kubernetes Engine to install the AI&MLOps Platform. You can select an existing Kubernetes Engine, or you can create a Kubernetes Engine together when creating the AI&MLOps Platform.

The specifications of the Kubernetes cluster required for installation are as follows.

Node pool resource size (composed of 2 or more nodes)

- AI&MLOps Platform: vCPU 32, Memory 128G or more

- Kubeflow Mini: vCPU 24, Memory 96G or more

Kubernetes version

- AI&MLOps Platform v1.9.1 (k8s v1.30)

- Kubeflow Mini v1.9.1 (k8s v1.30)
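The node pool minimums listed above can be checked mechanically before creating the service. The following is a minimal Python sketch, not part of the AI&MLOps Platform tooling. The `Node` class and the `meets_minimum` function are hypothetical names, and the sketch assumes that the listed vCPU and memory figures are totals across the node pool and that memory is expressed in GB.

```python
from dataclasses import dataclass

# Minimums copied from the list above: (total vCPU, total memory in GB).
MINIMUMS = {
    "AI&MLOps Platform": (32, 128),
    "Kubeflow Mini": (24, 96),
}


@dataclass
class Node:
    vcpu: int
    memory_gb: int


def meets_minimum(product: str, nodes: list[Node]) -> bool:
    """Return True if the pool has 2+ nodes and enough total vCPU and memory.

    Assumption: the documented sizes are totals across the whole node pool.
    """
    min_vcpu, min_mem = MINIMUMS[product]
    total_vcpu = sum(n.vcpu for n in nodes)
    total_mem = sum(n.memory_gb for n in nodes)
    return len(nodes) >= 2 and total_vcpu >= min_vcpu and total_mem >= min_mem
```

For example, `meets_minimum("AI&MLOps Platform", [Node(16, 64), Node(16, 64)])` passes the check, while a single node of the same total size does not, because the pool must contain at least two nodes.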

2.2.2 - Kubeflow Usage Guide

This section explains how to use Kubeflow after it has been created.

Add Kubeflow User

Kubeflow creates only one account: that of the Admin User entered on the initial installation screen.

To add users other than this initial user to the Kubeflow Dashboard, you must modify the settings of Dex, the authentication integration component of Kubeflow.

- Dex is deployed in the auth namespace, and its configuration is stored in a ConfigMap named dex.

The following is an example of Dex configuration.

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: dex
      namespace: auth
    data:
      config.yaml: |
        issuer: http://dex.auth.svc.cluster.local:5556/dex
        storage:
          type: kubernetes
          config:
            inCluster: true
        web:
          http: 0.0.0.0:5556
        logger:
          level: "debug"
          format: text
        oauth2:
          skipApprovalScreen: true
        enablePasswordDB: true
        staticPasswords:
        - email: admin@kubeflow.org
          hash: $2y$10$Yb9WVbn8pzVSM6fBgKdFae1Bh6Z.XTihi7bNu3sB6/h5bt1JuUOgq
          username: admin
          userID: 9cb67307-fd6d-4441-9b59-52acd78f4c9e
        staticClients:
        - id: kubeflow-oidc-authservice
          redirectURIs: ["/login/oidc"]
          name: 'Dex Login Application'
          secret: pUBnBOY80SnXgjibTYM9ZWNzY2xreNGQok

When the enablePasswordDB value in the configuration is true, Dex stores the list of users defined in staticPasswords from the configmap into its internal storage when the service starts. Therefore, by adding new user entries composed of email, hash, username, and userID to staticPasswords, you can freely add users beyond the initial ones and use the Kubeflow service.

The attribute values for adding a user can be defined as follows.

| parameter | Explanation |
|---|---|
| email | A value in a standard e-mail format |
| hash | The user's password hashed with the bcrypt algorithm. Enter the hash value generated by bcrypt directly |
| username | User name |
| userID | A uniquely identifiable ID value |
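As an illustration of the four attributes above, the following Python sketch renders one staticPasswords entry as YAML text. It is a sketch under stated assumptions, not an official Kubeflow or Dex tool. The function name `make_static_password_entry` is hypothetical, the userID is assumed to be a random UUID (matching the format of the example IDs in this guide), and the bcrypt hash is assumed to have been generated beforehand with a separate bcrypt tool, so the sketch depends only on the Python standard library.

```python
import re
import uuid

# Loose shape check for a bcrypt hash: "$2a$", "$2b$" or "$2y$", a two-digit
# cost, then 53 characters of salt and digest.
BCRYPT_RE = re.compile(r"^\$2[aby]\$\d{2}\$[./A-Za-z0-9]{53}$")
# Deliberately simple e-mail shape check (assumption: good enough for a sketch).
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def make_static_password_entry(email: str, username: str, bcrypt_hash: str) -> str:
    """Render one staticPasswords list item as YAML text."""
    if not EMAIL_RE.match(email):
        raise ValueError(f"not an e-mail address: {email!r}")
    if not BCRYPT_RE.match(bcrypt_hash):
        raise ValueError("hash does not look like a bcrypt hash")
    user_id = str(uuid.uuid4())  # random UUID used as the unique userID
    return (
        f"- email: {email}\n"
        f"  hash: {bcrypt_hash}\n"
        f"  username: {username}\n"
        f"  userID: {user_id}\n"
    )
```

The returned text can be pasted under staticPasswords, with its indentation adjusted to match the surrounding ConfigMap.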

On a node where kubectl is available, run the following command to open the dex ConfigMap for editing.

    kubectl edit configmap dex -n auth

Add the new user entry under staticPasswords, as in the following example.

    staticPasswords:
    - email: admin@kubeflow.org
      hash: $2y$10$Yb9WVbn8pzVSM6fBgKdFae1Bh6Z.XTihi7bNu3sB6/h5bt1JuUOgq
      username: admin
      userID: 9cb67307-fd6d-4441-9b59-52acd78f4c9e
    - email: sds@samsung.com
      hash: $2y$12$0g5.y86jnrt0v6In5NRCZ.YVuvrAUQ6j/RJYO3rV.kNulaDALOKfq
      username: sds
      userID: 8961d517-3498-4148-90c9-7e442ee91154

Since the staticPasswords value in the ConfigMap is applied when the Dex service starts, restart the Dex service using the following command.

    kubectl rollout restart deployment dex -n auth

Attempt to log in using the new user information.

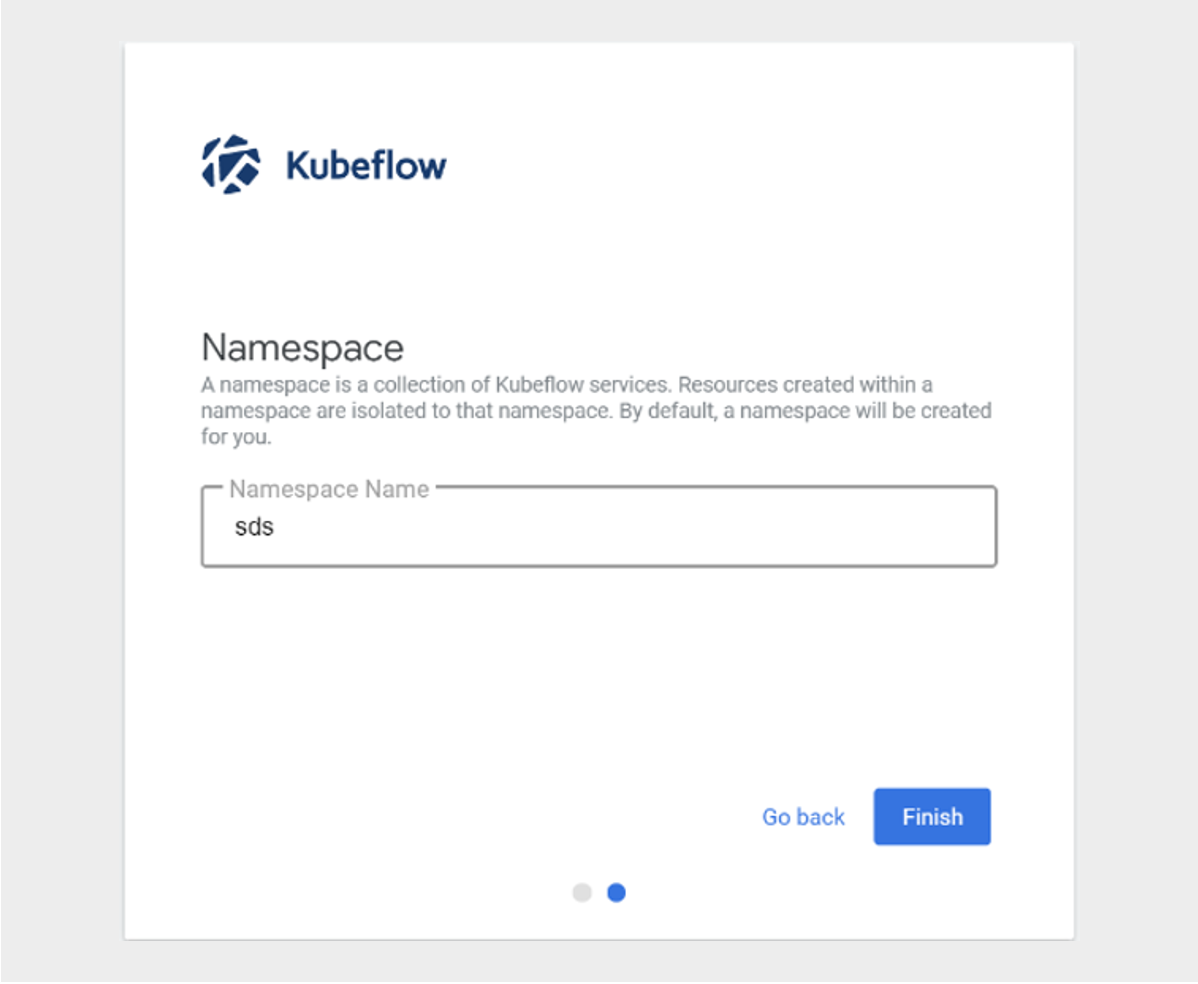

After a successful login, verify that the screen for creating a new Namespace (profile) appears.

The above content was written with reference to the official Kubeflow site. For more details, see Kubeflow Profiles.

How to use Custom Image in Kubeflow Jupyter Notebook

To use a custom image with the Kubeflow Notebook Controller, which manages the life cycle of Kubeflow Notebooks, the image must meet several requirements.

Kubeflow assumes that Jupyter will start automatically when a Notebook image is run. Therefore, you need to set the default command to start Jupyter in the container image.

The following is an example of what should be included in a Dockerfile.

    # Default base URL prefix; Kubeflow sets NB_PREFIX when it starts the Notebook
    ENV NB_PREFIX /

    CMD ["sh","-c", "jupyter notebook --notebook-dir=/home/${NB_USER} --ip=0.0.0.0 --no-browser --allow-root --port=8888 --NotebookApp.token='' --NotebookApp.password='' --NotebookApp.allow_origin='*' --NotebookApp.base_url=${NB_PREFIX}"]

The above items are explained as follows.

| parameter | Explanation |
|---|---|
| --notebook-dir=/home/jovyan | Set working directory |
| --ip=0.0.0.0 | Allow Jupyter Notebook to accept connections from any IP |
| --allow-root | Allow the user to run Jupyter Notebook as root |
| --port=8888 | Port configuration |
| --NotebookApp.token='' --NotebookApp.password='' | Disable Jupyter authentication |
| --NotebookApp.allow_origin='*' | Allow origin |
| --NotebookApp.base_url=NB_PREFIX | Base URL setting |
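To make the effect of the environment variables concrete, the following Python sketch builds the argument list that the CMD line expands to once the shell has substituted `NB_USER` and `NB_PREFIX`. It is an illustration only, not part of Kubeflow. The function name `jupyter_args`, the fallback values `jovyan` and `/`, and the prefix used in the usage note are assumptions made for this example.

```python
import os


def jupyter_args(env=None):
    """Build the argument list that the CMD line expands to."""
    env = os.environ if env is None else env
    nb_user = env.get("NB_USER", "jovyan")  # assumed fallback user name
    nb_prefix = env.get("NB_PREFIX", "/")   # assumed fallback when unset
    return [
        "jupyter", "notebook",
        f"--notebook-dir=/home/{nb_user}",   # working directory
        "--ip=0.0.0.0",                      # accept connections from any IP
        "--no-browser",
        "--allow-root",
        "--port=8888",
        "--NotebookApp.token=",              # empty value: token auth disabled
        "--NotebookApp.password=",           # empty value: password auth disabled
        "--NotebookApp.allow_origin=*",
        f"--NotebookApp.base_url={nb_prefix}",
    ]
```

Calling `jupyter_args({"NB_USER": "jovyan", "NB_PREFIX": "/notebook/team-a/demo"})` with that hypothetical prefix yields `--NotebookApp.base_url=/notebook/team-a/demo` as the last argument, which shows why the image must read the base URL from `NB_PREFIX` rather than hard-code it.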

You can create a Custom Image by referring to the Dockerfile that builds the TensorFlow notebook image.

- https://github.com/kubeflow/kubeflow/blob/v1.2.0/components/tensorflow-notebook-image/Dockerfile

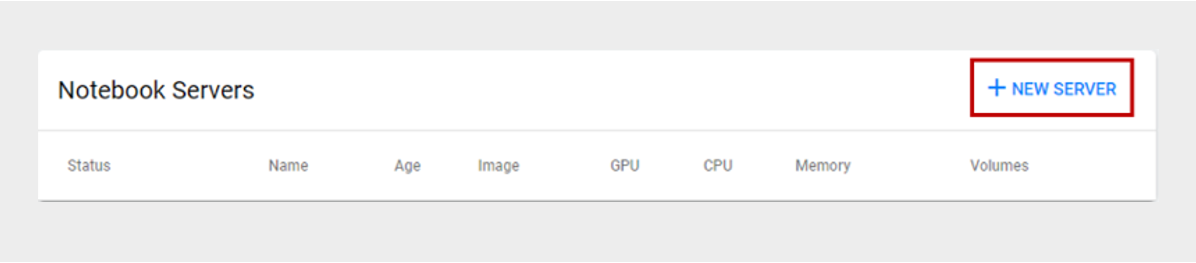

On the Notebook Servers page, click the +NEW SERVER button.

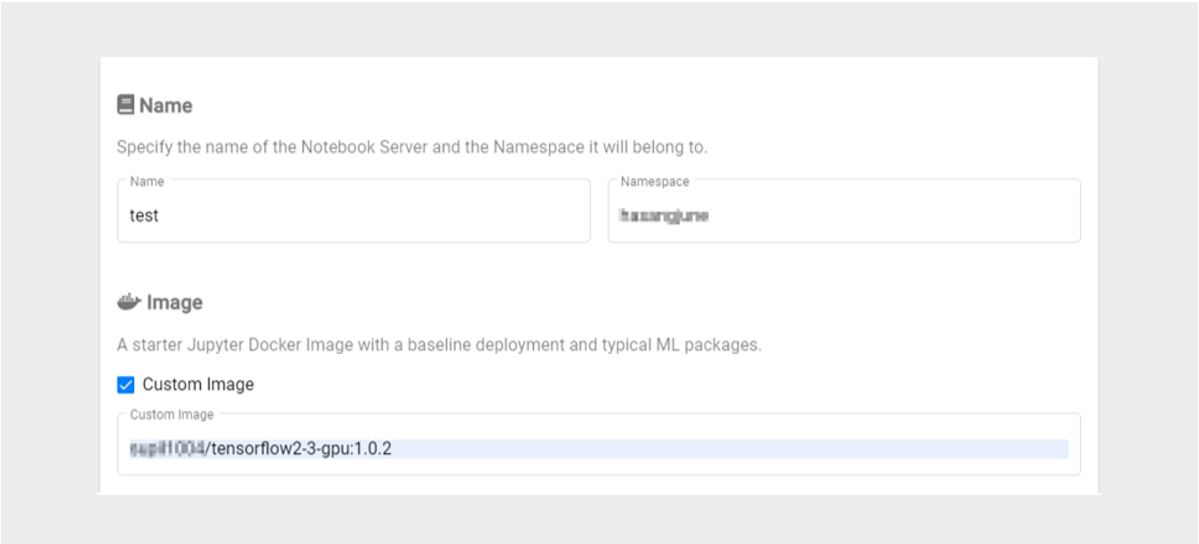

If you have created a Custom Image, check Custom Image on the Kubeflow Notebook Server screen and enter the Custom Image address to create a new Notebook Server.

The above content was written with reference to the Kubeflow official site.

- For more details, see the Kubeflow Notebooks > Container Images documentation on the official Kubeflow website.

2.3 - API Reference

2.4 - CLI Reference

2.5 - Release Note

AI&MLOps Platform

- The AI&MLOps Platform open-source version has been upgraded.

- Kubeflow 1.9

- The AI&MLOps Platform service, which automates repetitive tasks across the entire pipeline of machine learning model development, training, and deployment, has been launched.

- We provide a machine learning platform service based on Kubernetes.