User Guide

- 1: Samsung Cloud Platform

- 1.1: New Features

- 1.2: Overview

- 1.3: Getting Started with Console

- 1.3.1: Log In

- 1.3.2: Console

- 1.3.3: Integrated Management

- 1.4: Copilot

- 2: Compute

- 2.1: Virtual Server

- 2.1.1: Overview

- 2.1.1.1: Server Type

- 2.1.1.2: Monitoring Metrics

- 2.1.1.3: ServiceWatch Metrics

- 2.1.2: How-to guides

- 2.1.2.1: Image

- 2.1.2.2: Keypair

- 2.1.2.3: Server Group

- 2.1.2.4: Change IP

- 2.1.2.5: Configure Linux NTP

- 2.1.2.6: Configure RHEL Repo and WKMS

- 2.1.2.7: Install ServiceWatch Agent

- 2.1.3: API Reference

- 2.1.4: CLI Reference

- 2.1.5: Release Note

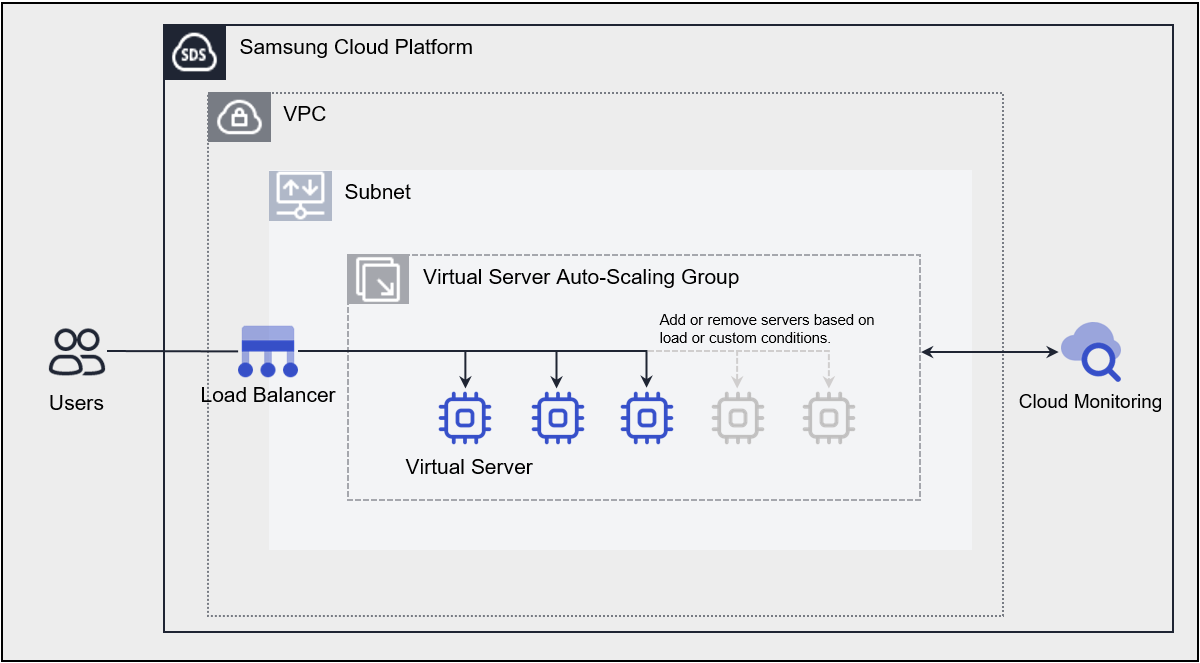

- 2.2: Virtual Server Auto-Scaling

- 2.2.1: Overview

- 2.2.1.1: Monitoring Metrics

- 2.2.1.2: ServiceWatch Metrics

- 2.2.2: How-to guides

- 2.2.2.1: Launch Configuration

- 2.2.2.2: Manage Policy

- 2.2.2.3: Manage Schedule

- 2.2.2.4: Manage Notification

- 2.2.3: API Reference

- 2.2.4: CLI Reference

- 2.2.5: Release Note

- 2.3: GPU Server

- 2.3.1: Overview

- 2.3.1.1: Server type

- 2.3.1.2: Monitoring Metrics

- 2.3.1.3: ServiceWatch Metrics

- 2.3.2: How-to guides

- 2.3.2.1: Manage Image

- 2.3.2.2: Manage Keypair

- 2.3.2.3: Use Multi-instance GPU on GPU Server

- 2.3.2.4: Use NVSwitch on GPU Server

- 2.3.2.5: Install ServiceWatch Agent

- 2.3.3: API Reference

- 2.3.4: CLI Reference

- 2.3.5: Release Note

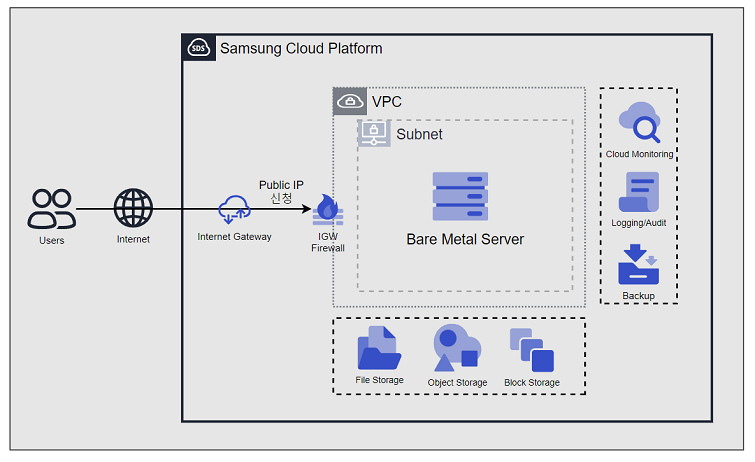

- 2.4: Bare Metal Server

- 2.4.1: Overview

- 2.4.1.1: Server Type

- 2.4.1.2: Monitoring Metrics

- 2.4.2: How-to guides

- 2.4.2.1: Install ServiceWatch Agent

- 2.4.2.2: Setting up RHEL Repo and WKMS

- 2.4.3: API Reference

- 2.4.4: CLI Reference

- 2.4.5: Release Note

- 2.5: Multi-node GPU Cluster

- 2.5.1: Overview

- 2.5.1.1: Server type

- 2.5.1.2: Monitoring Metrics

- 2.5.2: How-to guides

- 2.5.2.1: Manage Cluster Fabric

- 2.5.2.2: Install ServiceWatch Agent

- 2.5.2.3: Multi-node GPU Cluster Service Scope and Inspection Guide

- 2.5.3: Release Note

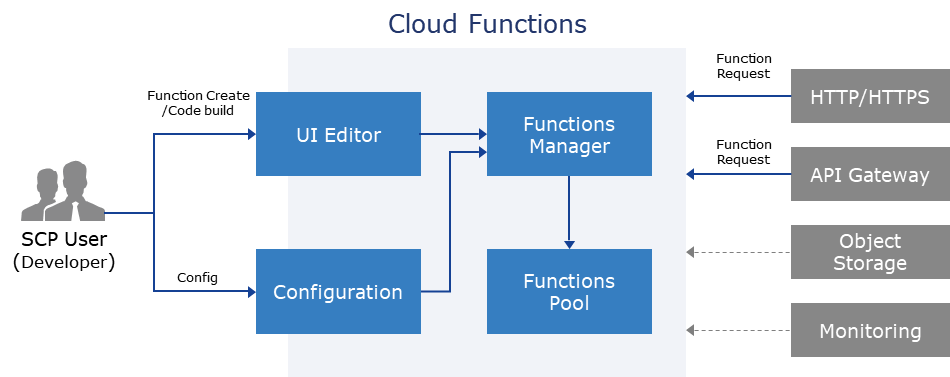

- 2.6: Cloud Functions

- 2.6.1: Overview

- 2.6.1.1: ServiceWatch Metrics

- 2.6.2: How-to guides

- 2.6.2.1: Configure Trigger

- 2.6.2.2: Blueprint Detailed Guide

- 2.6.2.3: Integrate PrivateLink Service

- 2.6.2.4: Resource-based Policy Guide

- 2.6.3: API Reference

- 2.6.4: CLI Reference

- 2.6.5: Release Note

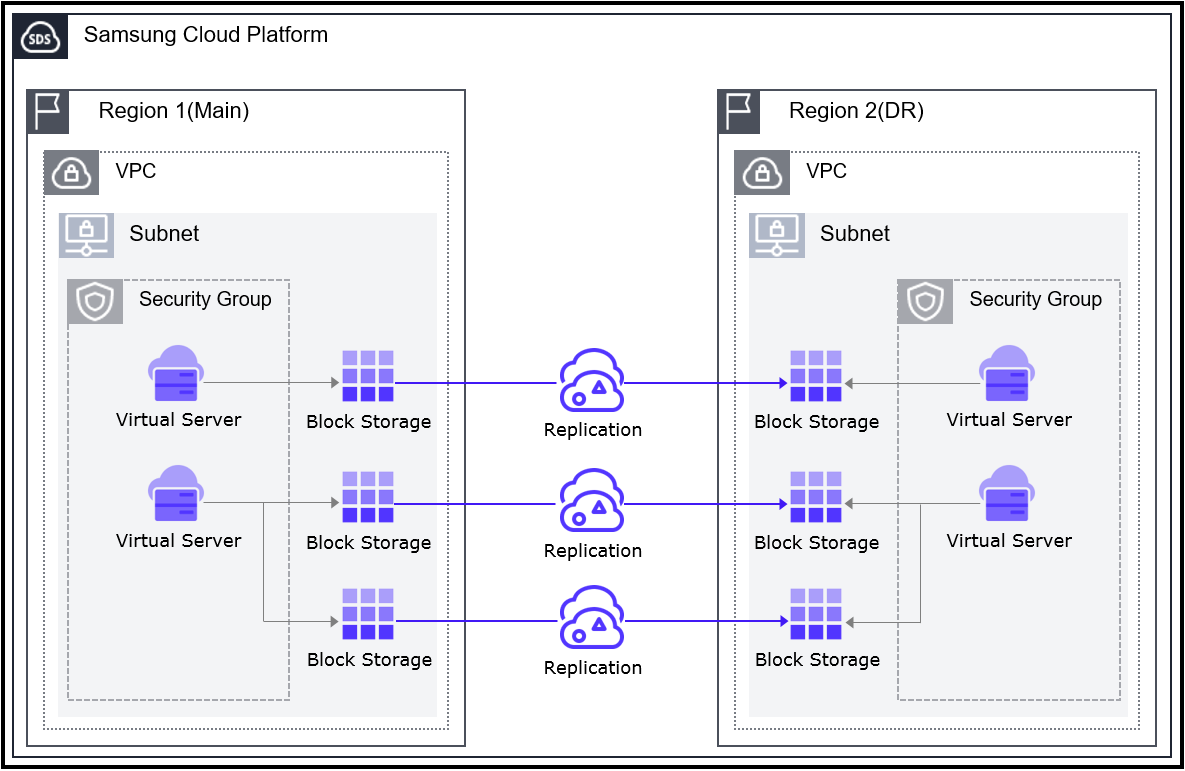

- 2.7: Virtual Server DR

- 2.7.1: Overview

- 2.7.2: Release Note

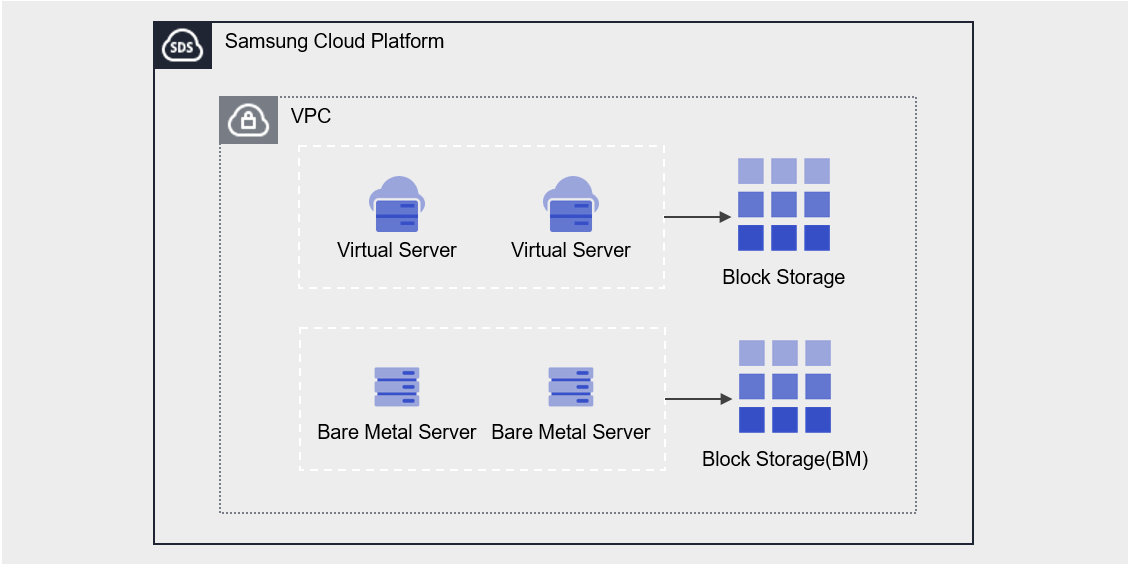

- 2.8: Block Storage

- 2.8.1: Overview

- 2.8.1.1: Monitoring Metrics

- 2.8.2: How-to guides

- 2.8.2.1: Connect to Server

- 2.8.2.2: Use Snapshot

- 2.8.2.3: Move Volume

- 2.8.3: API Reference

- 2.8.4: CLI Reference

- 2.8.5: Release Note

- 3: Storage

- 3.1: Block Storage(BM)

- 3.1.1: Overview

- 3.1.1.1: Monitoring Metrics

- 3.1.2: How-to Guides

- 3.1.2.1: Connect to Server

- 3.1.2.2: Use Snapshot

- 3.1.2.3: Use Replication

- 3.1.2.4: Use Volume Group

- 3.1.3: API Reference

- 3.1.4: CLI Reference

- 3.1.5: Release Note

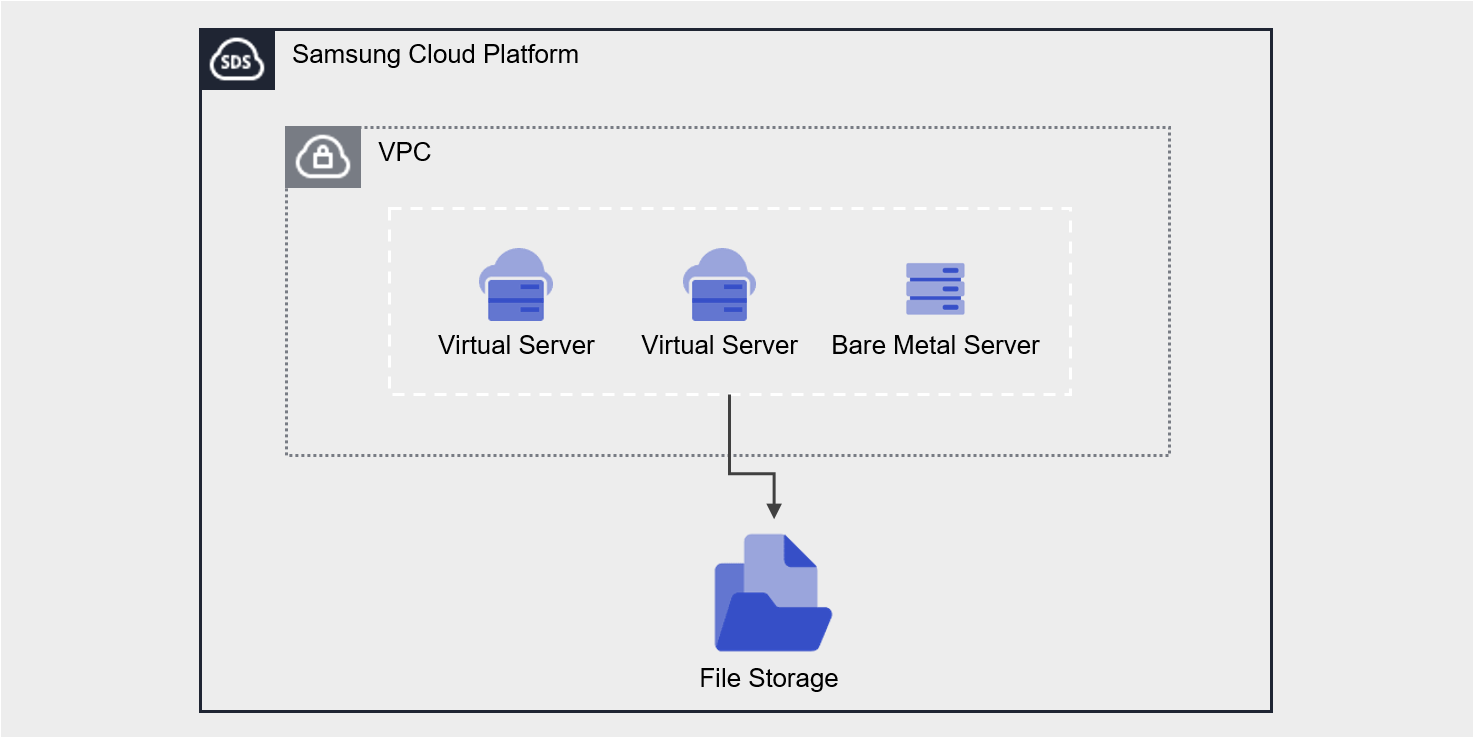

- 3.2: File Storage

- 3.2.1: Overview

- 3.2.1.1: Monitoring Metrics

- 3.2.1.2: ServiceWatch Metrics

- 3.2.2: How-to guides

- 3.2.2.1: Connect to Server

- 3.2.2.2: Use Snapshot

- 3.2.2.3: File-level Recovery

- 3.2.2.4: Use Disk Backup

- 3.2.2.5: Use Replication

- 3.2.3: API Reference

- 3.2.4: CLI Reference

- 3.2.5: Release Note

- 3.3: Object Storage

- 3.3.1: Overview

- 3.3.1.1: Amazon S3 Usage Guide

- 3.3.1.2: Monitoring Metrics

- 3.3.1.3: ServiceWatch Metrics

- 3.3.2: How-to guides

- 3.3.2.1: Access Control

- 3.3.2.2: File and Folder Management

- 3.3.2.3: Version Management

- 3.3.2.4: Permission Management

- 3.3.2.5: Replication Policy Management

- 3.3.3: Release Note

- 3.4: Archive Storage

- 3.4.1: Overview

- 3.4.1.1: ServiceWatch Metrics

- 3.4.2: How-to guides

- 3.4.2.1: Manage Archiving Policy

- 3.4.2.2: Use Version Management

- 3.4.2.3: Archive Recovery

- 3.4.3: API Reference

- 3.4.4: CLI Reference

- 3.4.5: Release Note

- 3.5: Backup

- 3.5.1: Overview

- 3.5.2: How-to guides

- 3.5.2.1: Instant Backup

- 3.5.2.2: Recovery

- 3.5.2.3: Use Backup Agent

- 3.5.2.4: Install Backup Agent

- 3.5.2.5: Use Replication

- 3.5.3: API Reference

- 3.5.4: CLI Reference

- 3.5.5: Release Note

- 3.6: Parallel File Storage

- 3.6.1: Overview

- 3.6.2: How-to guides

- 3.6.2.1: Use Snapshot

- 3.6.2.2: Install Agent

- 3.6.2.3: File-level Recovery

- 3.6.3: API Reference

- 3.6.4: CLI Reference

- 3.6.5: Release Note

- 4: Container

- 4.1: Kubernetes Engine

- 4.1.1: Overview

- 4.1.1.1: Monitoring Metrics

- 4.1.1.2: ServiceWatch Metrics

- 4.1.2: How-to guides

- 4.1.2.1: Managing Nodes

- 4.1.2.2: Managing Namespaces

- 4.1.2.3: Manage Workloads

- 4.1.2.4: Manage services and ingresses

- 4.1.2.5: Managing Storage

- 4.1.2.6: Configuration Management

- 4.1.2.7: Manage Permissions

- 4.1.3: Kubernetes Engine Usage Guide

- 4.1.3.1: Access Cluster

- 4.1.3.2: Authentication and Authorization

- 4.1.3.3: Using a LoadBalancer-type Service

- 4.1.3.4: Usage Considerations

- 4.1.3.5: Version information

- 4.1.4: API Reference

- 4.1.5: CLI Reference

- 4.1.6: Release Note

- 4.2: Container Registry

- 4.2.1: Overview

- 4.2.1.1: Monitoring Metrics

- 4.2.1.2: ServiceWatch Metrics

- 4.2.2: How-to guides

- 4.2.2.1: Manage Repository

- 4.2.2.2: Manage Images and Tags

- 4.2.2.3: Manage Image Security Vulnerabilities

- 4.2.2.4: Manage Image Tag Deletion Policy

- 4.2.2.5: Use Container Registry with CLI

- 4.2.2.6: Example of Registry and Repository Policies

- 4.2.3: API Reference

- 4.2.4: CLI Reference

- 4.2.5: Release Note

- 5: Networking

- 5.1: VPC

- 5.1.1: Overview

- 5.1.1.1: ServiceWatch Metrics

- 5.1.2: How-to guides

- 5.1.2.1: Subnet

- 5.1.2.2: Port

- 5.1.2.3: Internet Gateway

- 5.1.2.4: NAT Gateway

- 5.1.2.5: Public IP

- 5.1.2.6: Private NAT

- 5.1.2.7: VPC Endpoint

- 5.1.2.8: VPC Peering

- 5.1.2.9: Transit Gateway

- 5.1.2.10: PrivateLink Service

- 5.1.2.11: PrivateLink Endpoint

- 5.1.2.12: NAT Logging

- 5.1.3: API Reference

- 5.1.4: CLI Reference

- 5.1.5: Release Note

- 5.2: Security Group

- 5.2.1: Overview

- 5.2.2: How-to guides

- 5.2.2.1: Security Group Logging

- 5.2.2.2: Migration Rules

- 5.2.3: API Reference

- 5.2.4: CLI Reference

- 5.2.5: Release Note

- 5.3: Load Balancer

- 5.3.1: Overview

- 5.3.1.1: ServiceWatch metric

- 5.3.2: How-to guides

- 5.3.2.1: LB Server Groups

- 5.3.2.2: LB Health Check

- 5.3.3: API Reference

- 5.3.4: CLI Reference

- 5.3.5: Release Note

- 5.4: DNS

- 5.4.1: Overview

- 5.4.1.1: TLD List

- 5.4.1.2: ServiceWatch Metrics

- 5.4.2: How-to guides

- 5.4.2.1: Private DNS

- 5.4.2.2: Hosted Zone

- 5.4.2.3: Public Domain Name

- 5.4.3: Release Note

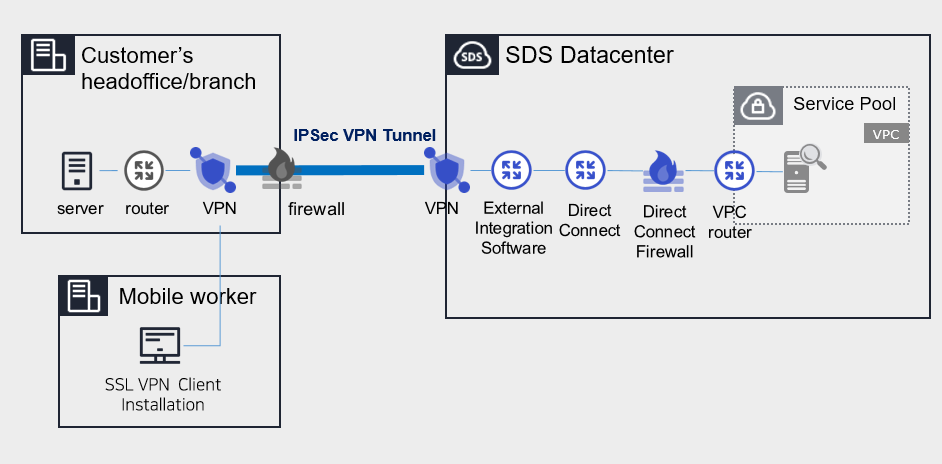

- 5.5: VPN

- 5.5.1: Overview

- 5.5.1.1: ServiceWatch Metrics

- 5.5.2: How-to guides

- 5.5.2.1: VPN Tunnel

- 5.5.3: API Reference

- 5.5.4: CLI Reference

- 5.5.5: Release Note

- 5.6: Firewall

- 5.6.1: Overview

- 5.6.2: How-to guides

- 5.6.2.1: Firewall Logging

- 5.6.2.2: Migration Rules

- 5.6.3: API Reference

- 5.6.4: CLI Reference

- 5.6.5: Release Note

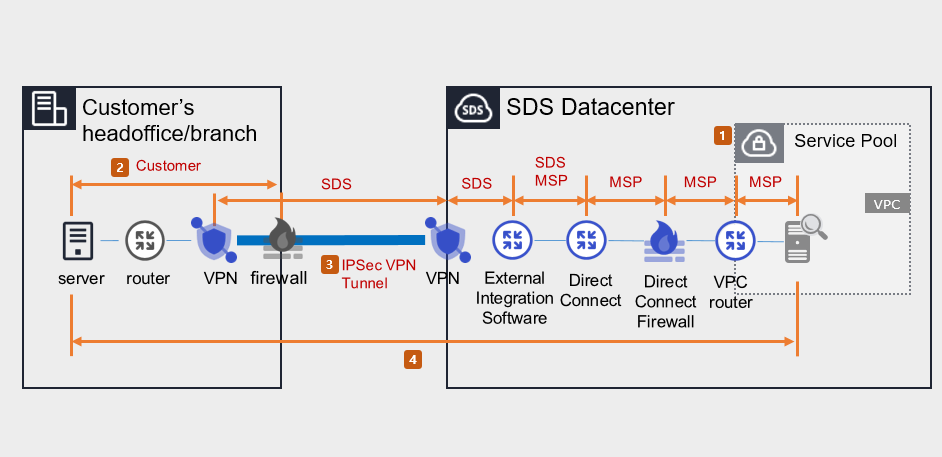

- 5.7: Direct Connect

- 5.7.1: Overview

- 5.7.1.1: ServiceWatch Metrics

- 5.7.2: How-to guides

- 5.7.3: API Reference

- 5.7.4: CLI Reference

- 5.7.5: Release Note

- 5.8: Cloud LAN-Campus

- 5.8.1: Overview

- 5.8.2: How-to guides

- 5.8.2.1: Campus Firewall Request Service

- 5.8.3: Release Note

- 5.9: Cloud LAN-Campus

- 5.9.1: Overview

- 5.9.2: How-to guides

- 5.9.3: Release Note

- 5.10: Cloud LAN-Data Center

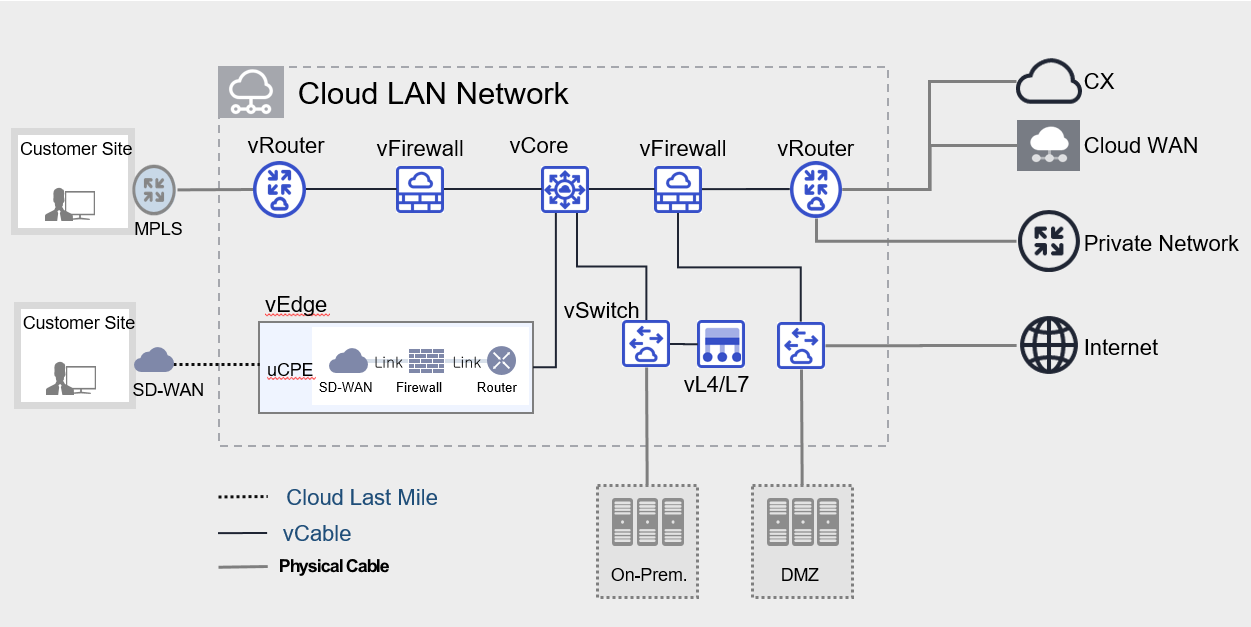

- 5.10.1: Overview

- 5.10.2: How-to guides

- 5.10.3: Release Note

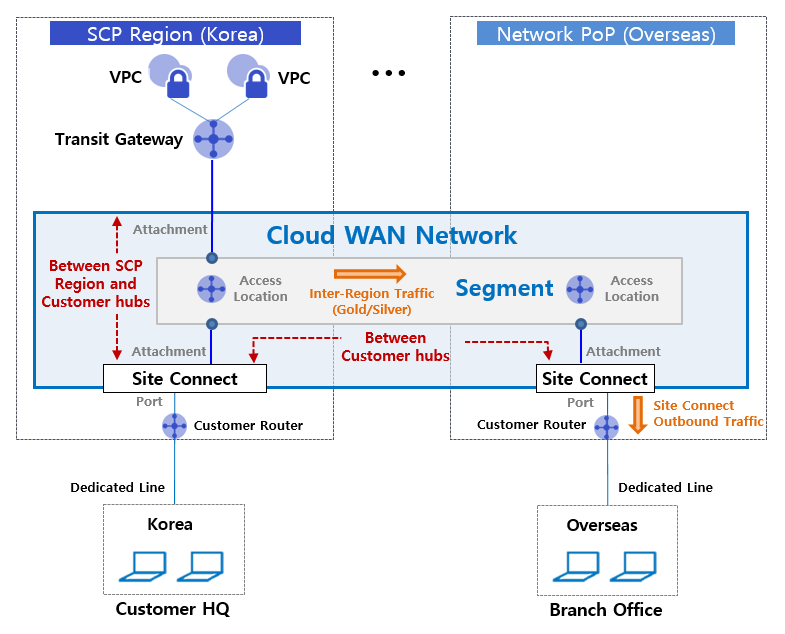

- 5.11: Cloud WAN

- 5.11.1: Overview

- 5.11.1.1: Monitoring Metrics

- 5.11.2: How-to guides

- 5.11.3: Release Note

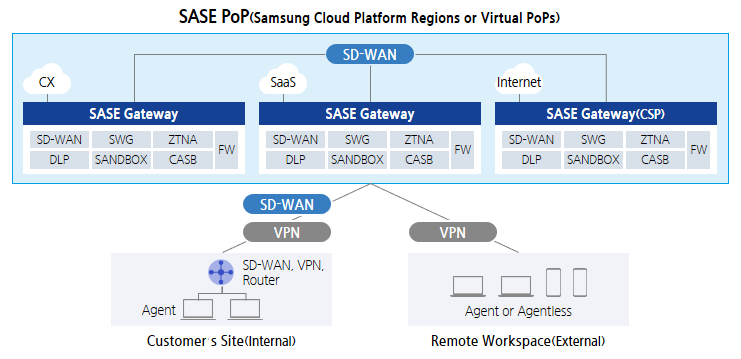

- 5.12: SASE

- 5.12.1: Overview

- 5.12.2: How-to guides

- 5.12.2.1: SASE Lastmile

- 5.12.3: Release Note

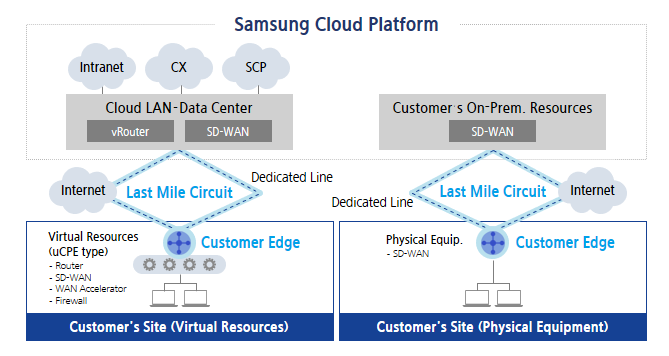

- 5.13: Cloud Last Mile

- 5.13.1: Overview

- 5.13.2: How-to guides

- 5.13.2.1: Circuit and Edge

- 5.13.3: Release Note

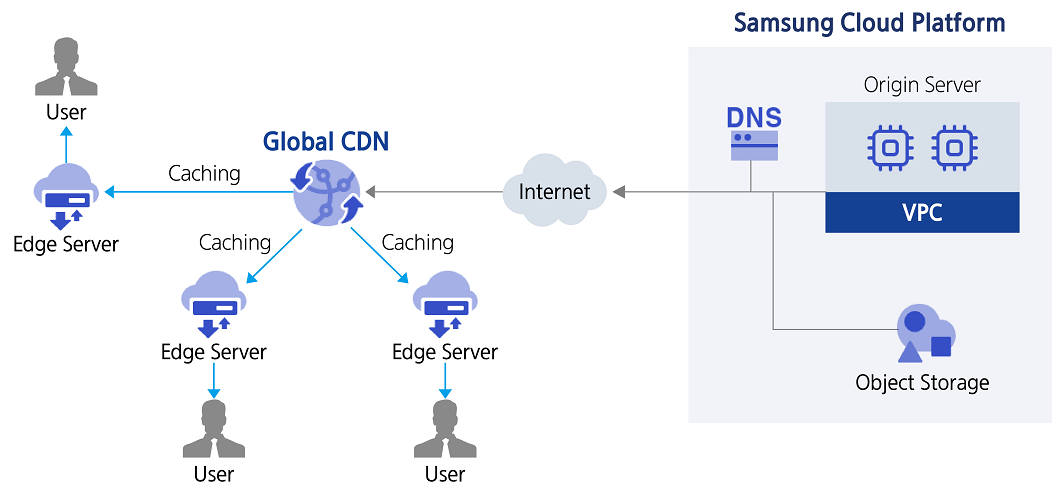

- 5.14: Global CDN

- 5.14.1: Overview

- 5.14.1.1: ServiceWatch Metrics

- 5.14.2: How-to guides

- 5.14.3: API Reference

- 5.14.4: CLI Reference

- 5.14.5: Release Note

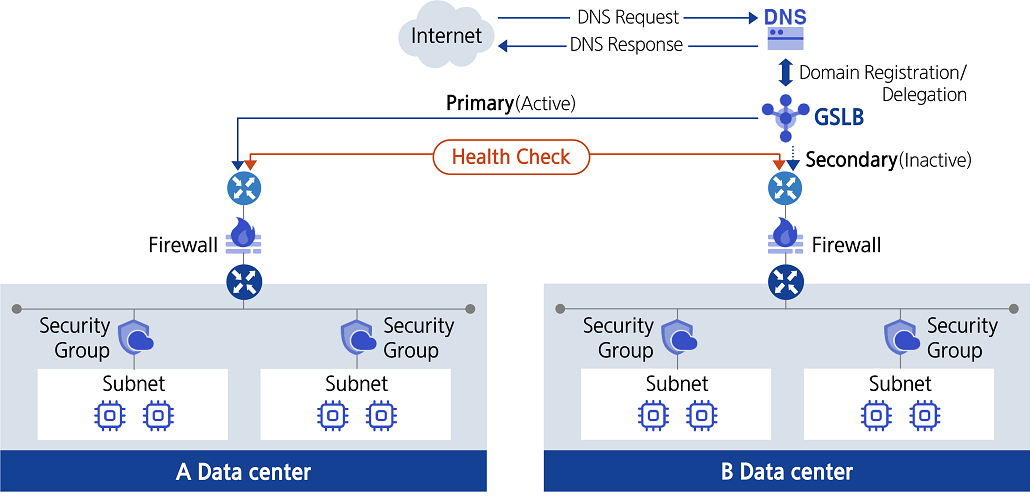

- 5.15: GSLB

- 5.15.1: Overview

- 5.15.2: How-to guides

- 5.15.3: API Reference

- 5.15.4: CLI Reference

- 5.15.5: Release Note

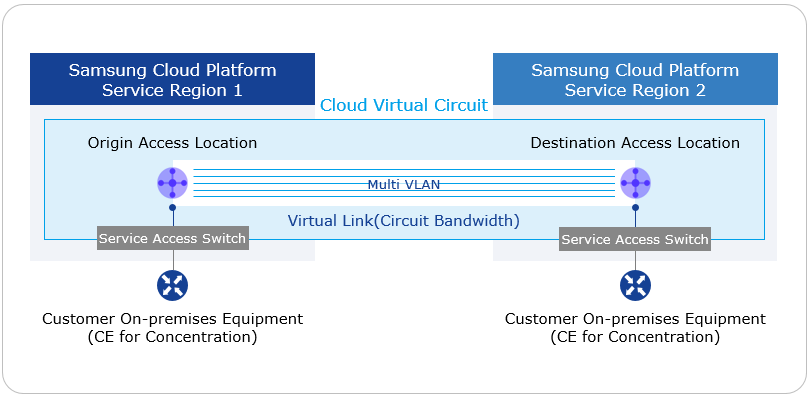

- 5.16: Cloud Virtual Circuit

- 5.16.1: Overview

- 5.16.2: How-to guides

- 5.16.3: Release Note

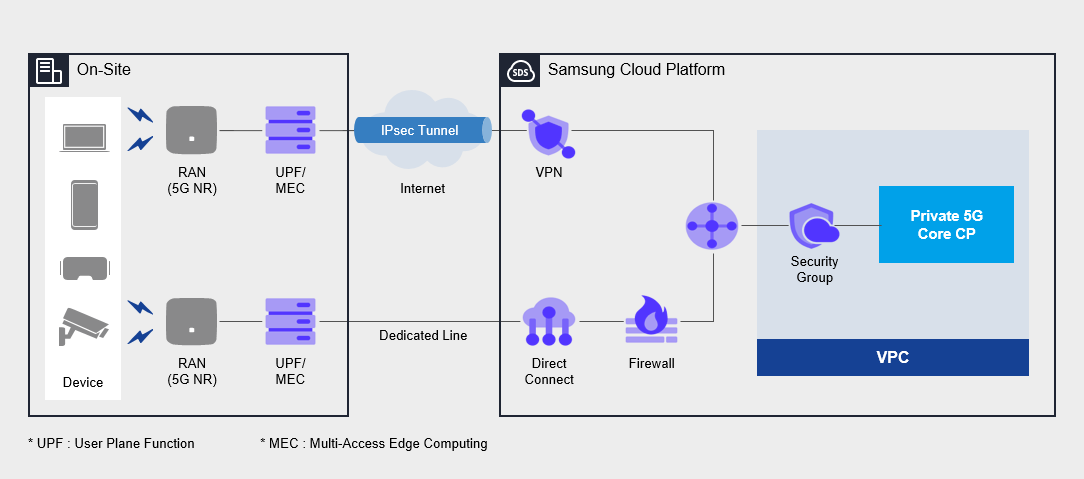

- 5.17: Private 5G Cloud

- 5.17.1: Overview

- 5.17.2: How-to guides

- 5.17.3: Release Note

- 6: Database

- 6.1: EPAS(DBaaS)

- 6.1.1: Overview

- 6.1.1.1: Server Types

- 6.1.1.2: Monitoring metrics

- 6.1.2: How-to guides

- 6.1.2.1: Manage DB Service

- 6.1.2.2: Backing up and restoring the database

- 6.1.2.3: Configure Read Replica

- 6.1.2.4: EPAS(DBaaS) Server Connection

- 6.1.2.5: Extension Usage

- 6.1.3: API Reference

- 6.1.4: CLI Reference

- 6.1.5: Release Note

- 6.2: PostgreSQL(DBaaS)

- 6.2.1: Overview

- 6.2.1.1: Server Types

- 6.2.1.2: Monitoring metrics

- 6.2.2: How-to guides

- 6.2.2.1: Manage DB Service

- 6.2.2.2: Backing up and restoring the DB

- 6.2.2.3: Configure Read Replica

- 6.2.2.4: DB Server Connection

- 6.2.2.5: Extension Use

- 6.2.3: API Reference

- 6.2.4: CLI Reference

- 6.2.5: Release Note

- 6.3: MariaDB(DBaaS)

- 6.3.1: Overview

- 6.3.1.1: Server Types

- 6.3.1.2: Monitoring Metrics

- 6.3.1.3: ServiceWatch Metrics

- 6.3.2: How-to guides

- 6.3.2.1: Manage DB Service

- 6.3.2.2: Backing up and restoring the database

- 6.3.2.3: Configure Read Replica

- 6.3.2.4: MariaDB(DBaaS) Server Connection

- 6.3.3: API Reference

- 6.3.4: CLI Reference

- 6.3.5: Release Note

- 6.4: MySQL(DBaaS)

- 6.4.1: Overview

- 6.4.1.1: Server Types

- 6.4.1.2: Monitoring metrics

- 6.4.1.3: ServiceWatch Metrics

- 6.4.2: How-to guides

- 6.4.2.1: Manage DB Service

- 6.4.2.2: Backing up and restoring the database

- 6.4.2.3: Configure Read Replica

- 6.4.2.4: MySQL(DBaaS) Server Connection

- 6.4.3: API Reference

- 6.4.4: CLI Reference

- 6.4.5: Release Note

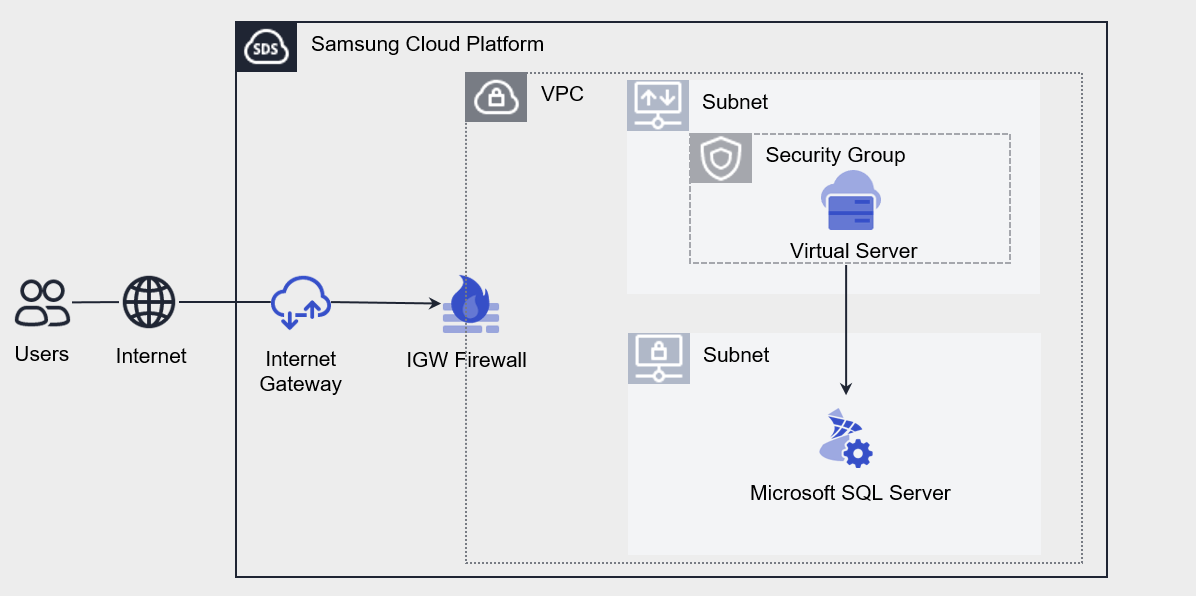

- 6.5: Microsoft SQL Server(DBaaS)

- 6.5.1: Overview

- 6.5.1.1: Server Types

- 6.5.1.2: Monitoring metrics

- 6.5.2: How-to guides

- 6.5.2.1: Manage DB Service

- 6.5.2.2: Backing up and restoring the DB

- 6.5.2.3: Add Secondary

- 6.5.2.4: Microsoft SQL Server(DBaaS) Server Connection

- 6.5.3: API Reference

- 6.5.4: CLI Reference

- 6.5.5: Release Note

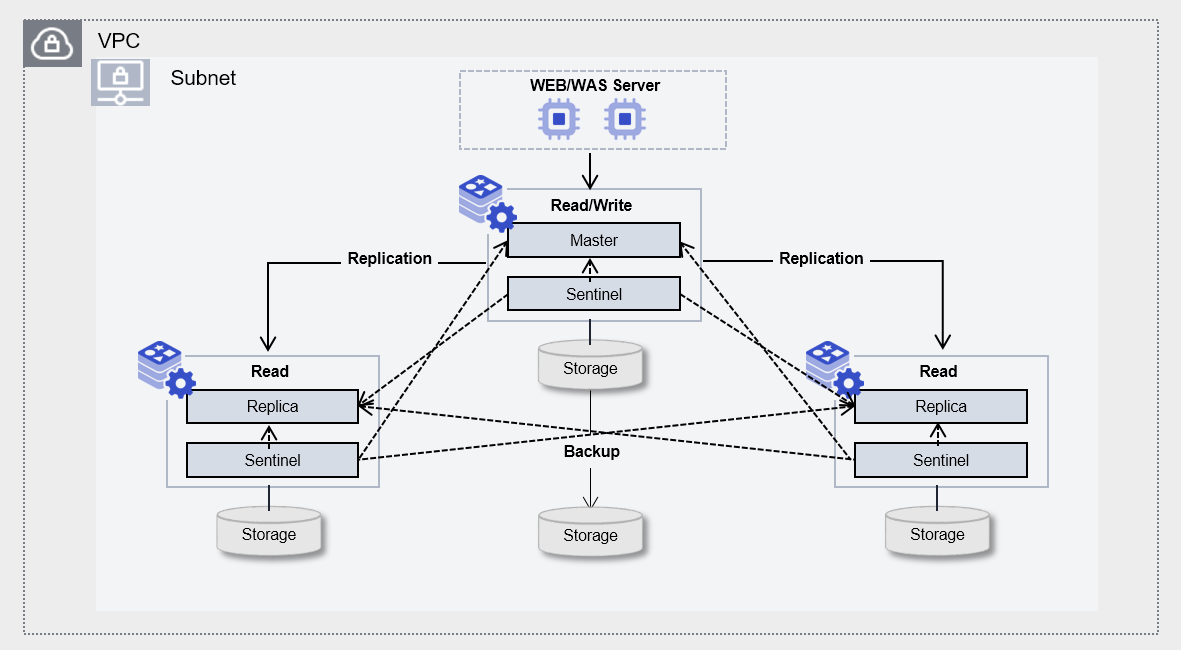

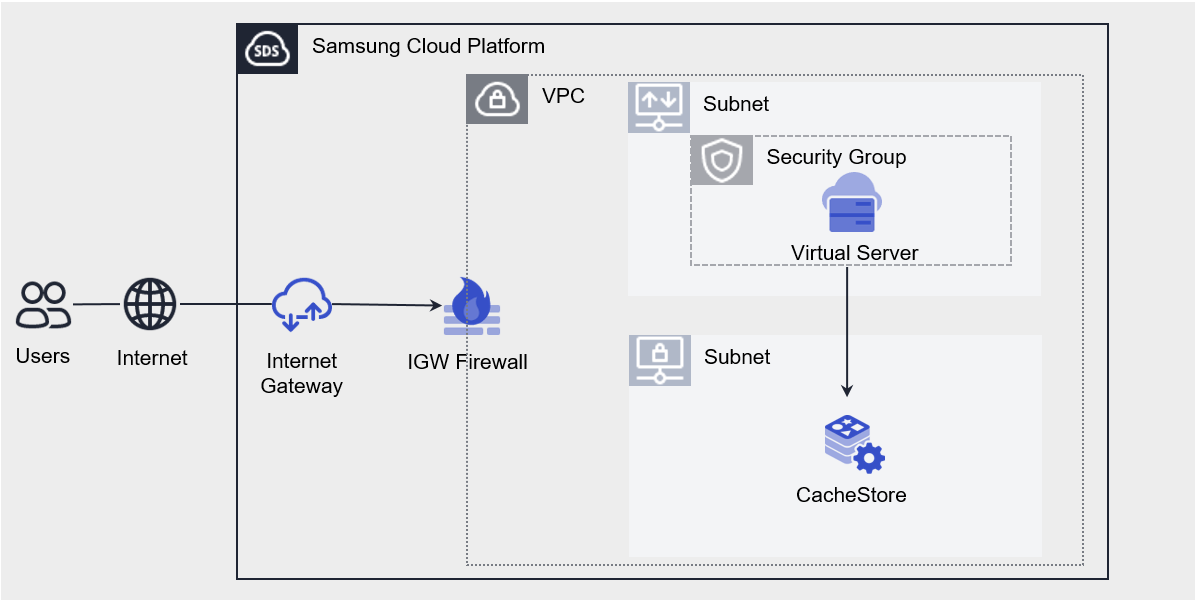

- 6.6: CacheStore(DBaaS)

- 6.6.1: Overview

- 6.6.1.1: Server Types

- 6.6.1.2: Monitoring metrics

- 6.6.2: How-to guides

- 6.6.2.1: Manage CacheStore Service

- 6.6.2.2: CacheStore Backup and Restore

- 6.6.2.3: CacheStore(DBaaS) Server Connection

- 6.6.3: API Reference

- 6.6.4: CLI Reference

- 6.6.5: Release Note

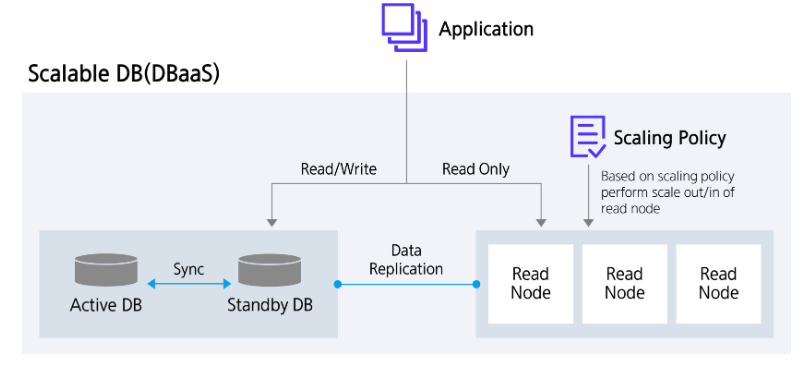

- 6.7: Scalable DB(DBaaS)

- 6.7.1: Overview

- 6.7.1.1: Server Types

- 6.7.1.2: ServiceWatch Metrics

- 6.7.2: How-to guides

- 6.7.2.1: Manage Read Node

- 6.7.2.2: Backup DB

- 6.7.3: Release Note

- 7: Data Analytics

- 7.1: Event Streams

- 7.1.1: Overview

- 7.1.1.1: Server Type

- 7.1.1.2: Monitoring Metrics

- 7.1.1.3: ServiceWatch Metrics

- 7.1.2: How-to guides

- 7.1.3: API Reference

- 7.1.4: CLI Reference

- 7.1.5: Release Note

- 7.2: Search Engine

- 7.2.1: Overview

- 7.2.1.1: Server Type

- 7.2.1.2: Monitoring metrics

- 7.2.2: How-to guides

- 7.2.3: API Reference

- 7.2.4: CLI Reference

- 7.2.5: Release Note

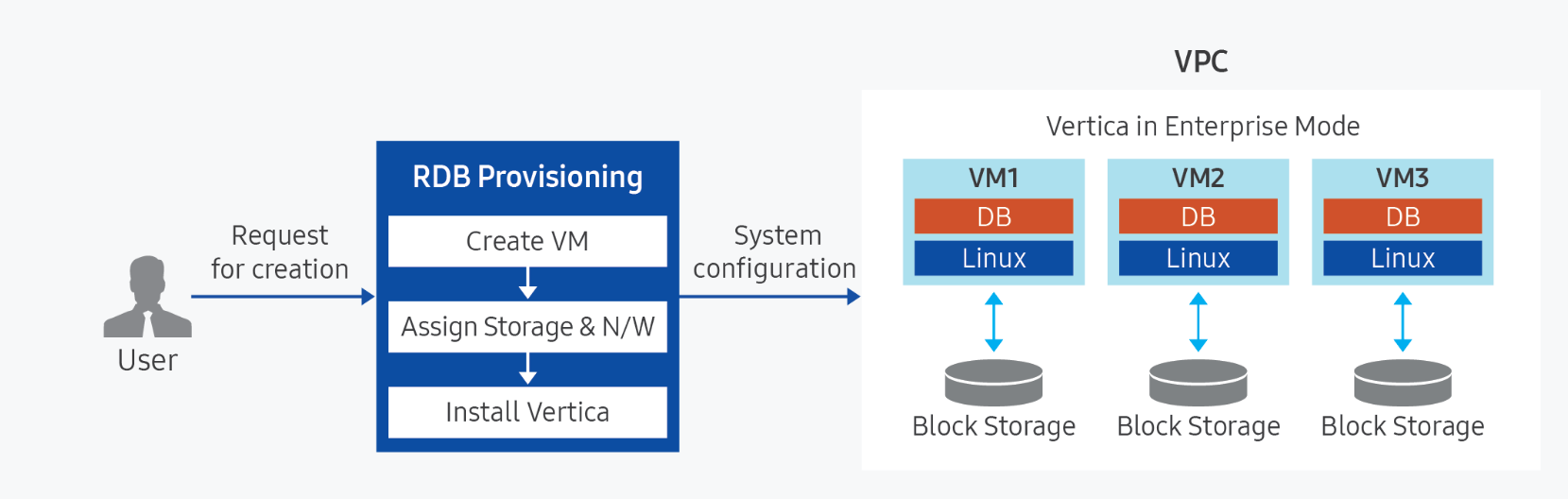

- 7.3: Vertica(DBaaS)

- 7.3.1: Overview

- 7.3.1.1: Server Type

- 7.3.1.2: Monitoring metrics

- 7.3.2: How-to guides

- 7.3.2.1: Backing up and restoring Vertica

- 7.3.3: API Reference

- 7.3.4: CLI Reference

- 7.3.5: Release Note

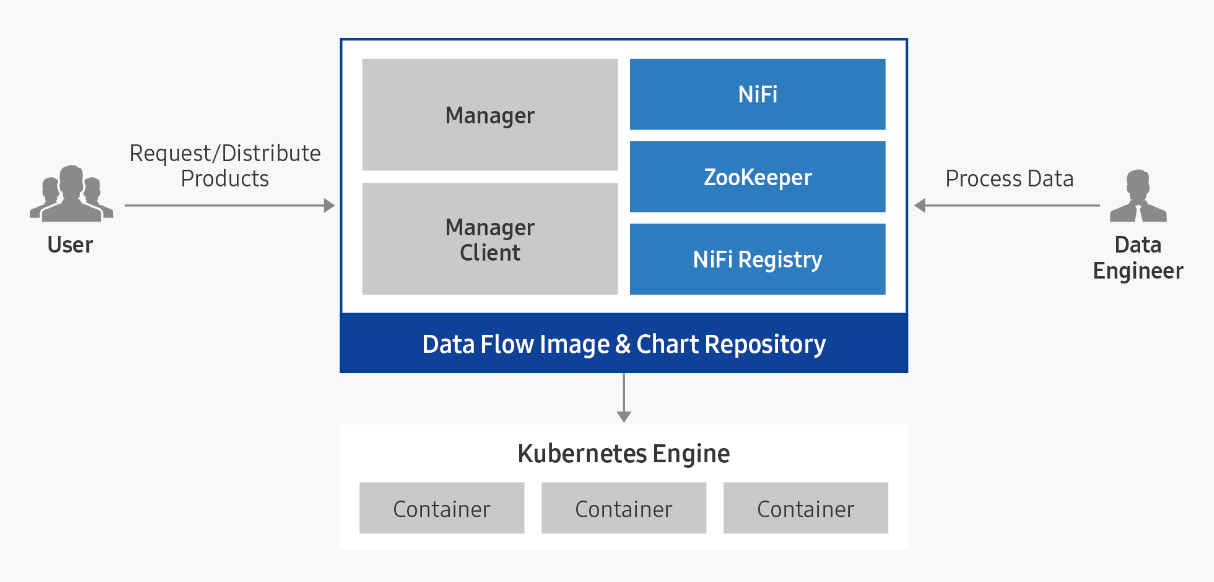

- 7.4: Data Flow

- 7.4.1: Overview

- 7.4.1.1: ServiceWatch metric

- 7.4.2: How-to guides

- 7.4.2.1: Data Flow Services

- 7.4.2.2: Install Ingress Controller

- 7.4.3: API Reference

- 7.4.4: CLI Reference

- 7.4.5: Release Note

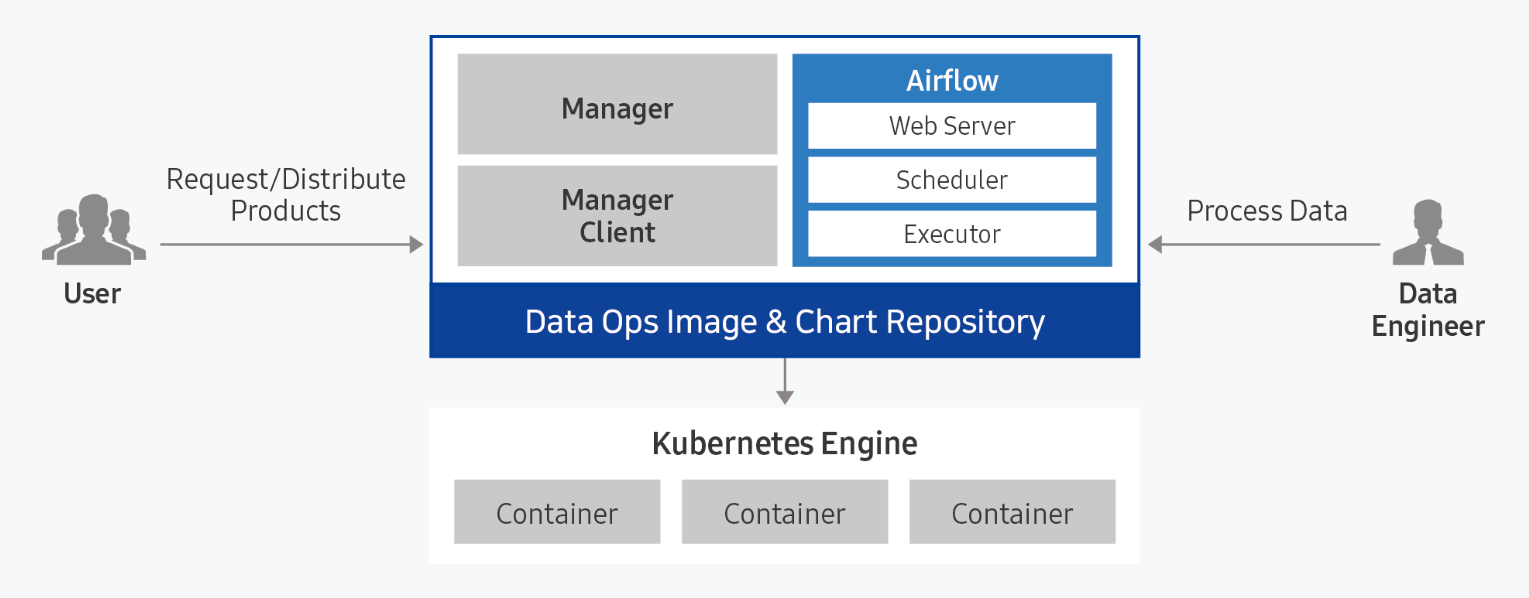

- 7.5: Data Ops

- 7.5.1: Overview

- 7.5.1.1: ServiceWatch metric

- 7.5.2: How-to guides

- 7.5.2.1: Data Ops Services

- 7.5.2.2: Installing Ingress Controller

- 7.5.3: API Reference

- 7.5.4: CLI Reference

- 7.5.5: Release Note

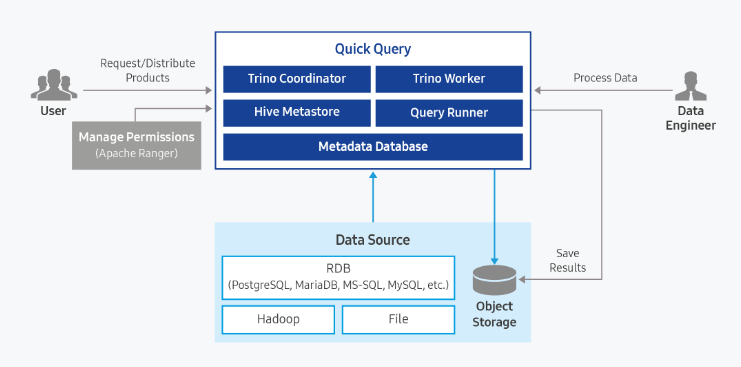

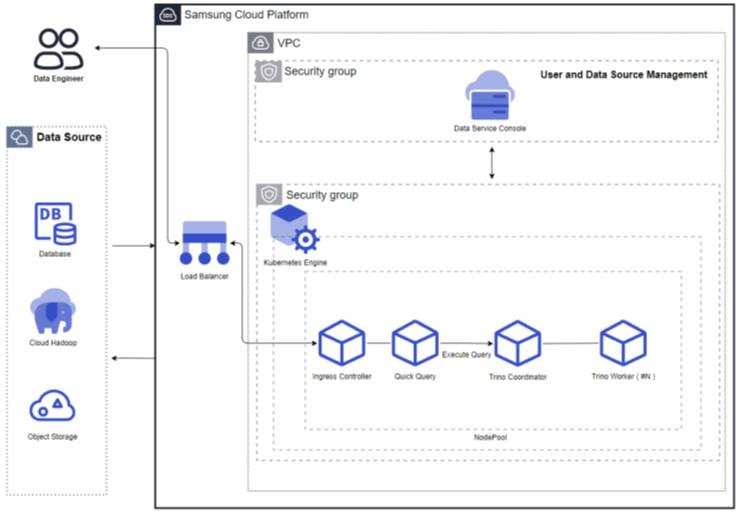

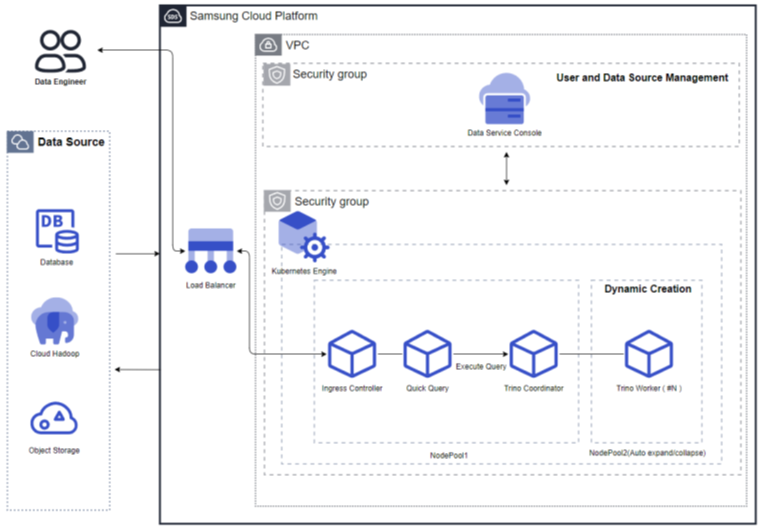

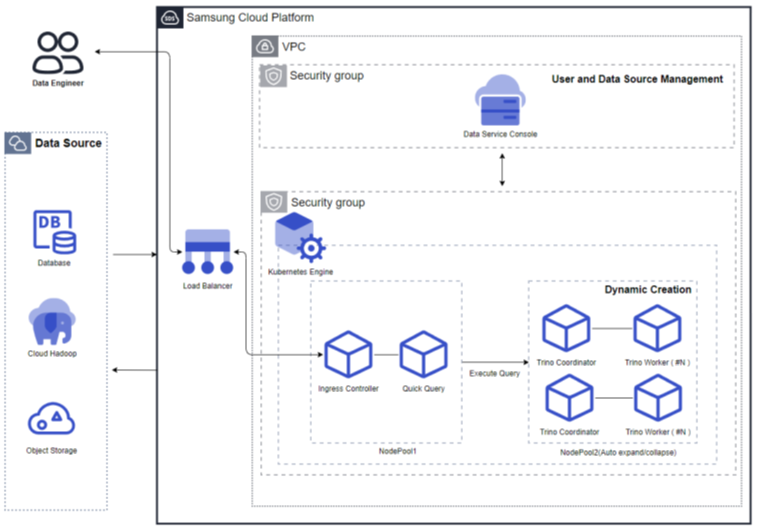

- 7.6: Quick Query

- 7.6.1: Overview

- 7.6.1.1: ServiceWatch metric

- 7.6.2: How-to guides

- 7.6.3: API Reference

- 7.6.4: CLI Reference

- 7.6.5: Release Note

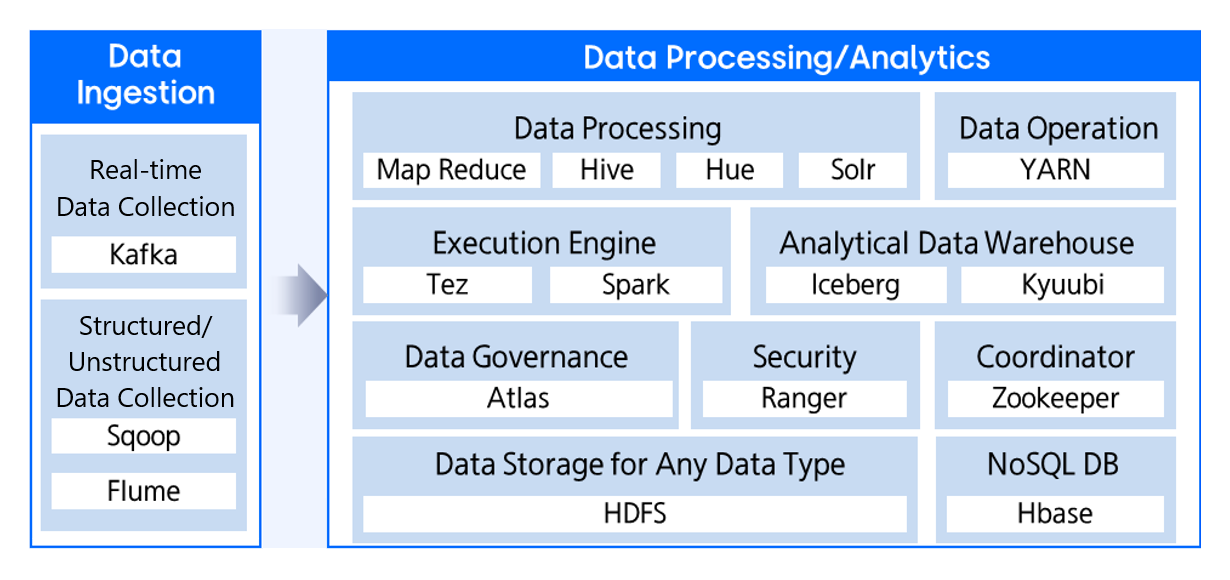

- 7.7: Cloud Hadoop

- 7.7.1: Overview

- 7.7.1.1: ServiceWatch metric

- 7.7.2: How-to guides

- 7.7.3: API Reference

- 7.7.4: Release Note

- 8: Application Service

- 8.1: API Gateway

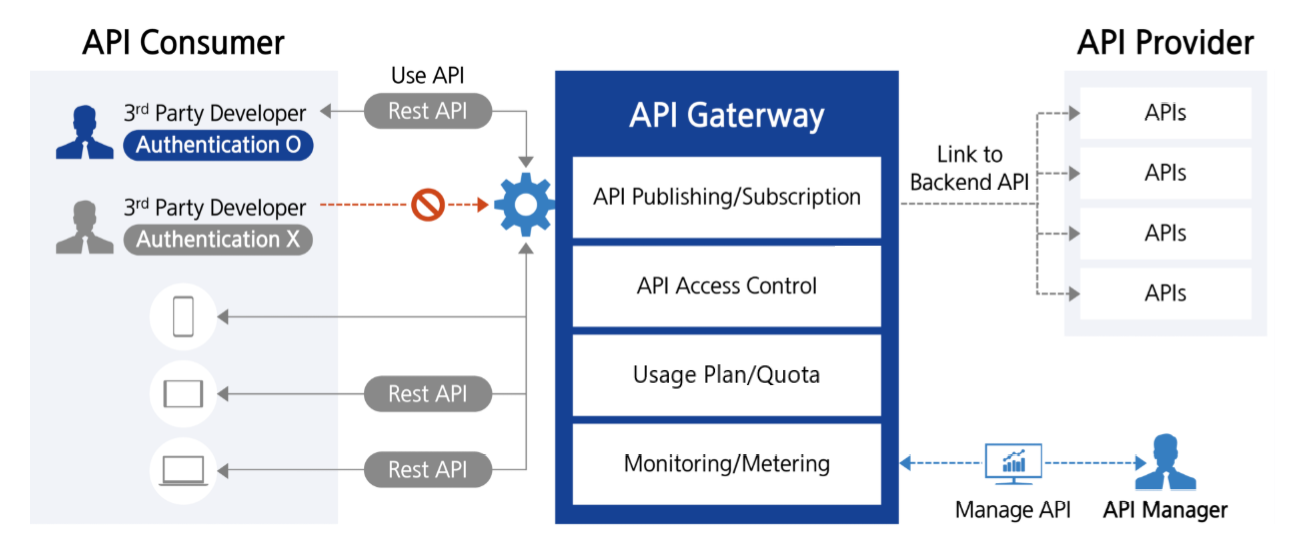

- 8.1.1: Overview

- 8.1.1.1: ServiceWatch metric

- 8.1.2: How-to guides

- 8.1.2.1: Resource-Based Policy Guide

- 8.1.3: API Reference

- 8.1.4: CLI Reference

- 8.1.5: Release Note

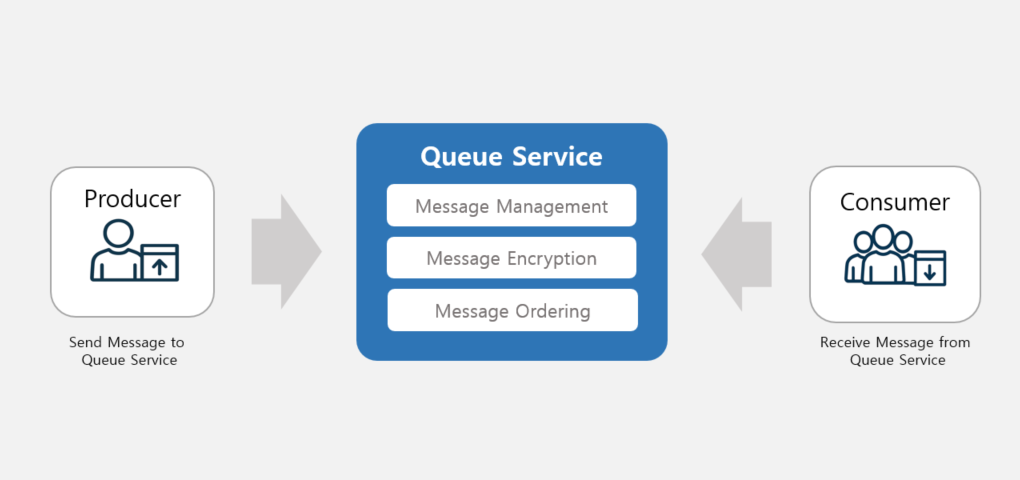

- 8.2: Queue Service

- 8.2.1: Message API reference

- 8.2.2: How-to guides

- 8.2.3: Overview

- 8.2.3.1: ServiceWatch Metrics

- 8.2.4: CLI Reference

- 8.2.5: API Reference

- 8.2.6: Release Note

- 9: Security

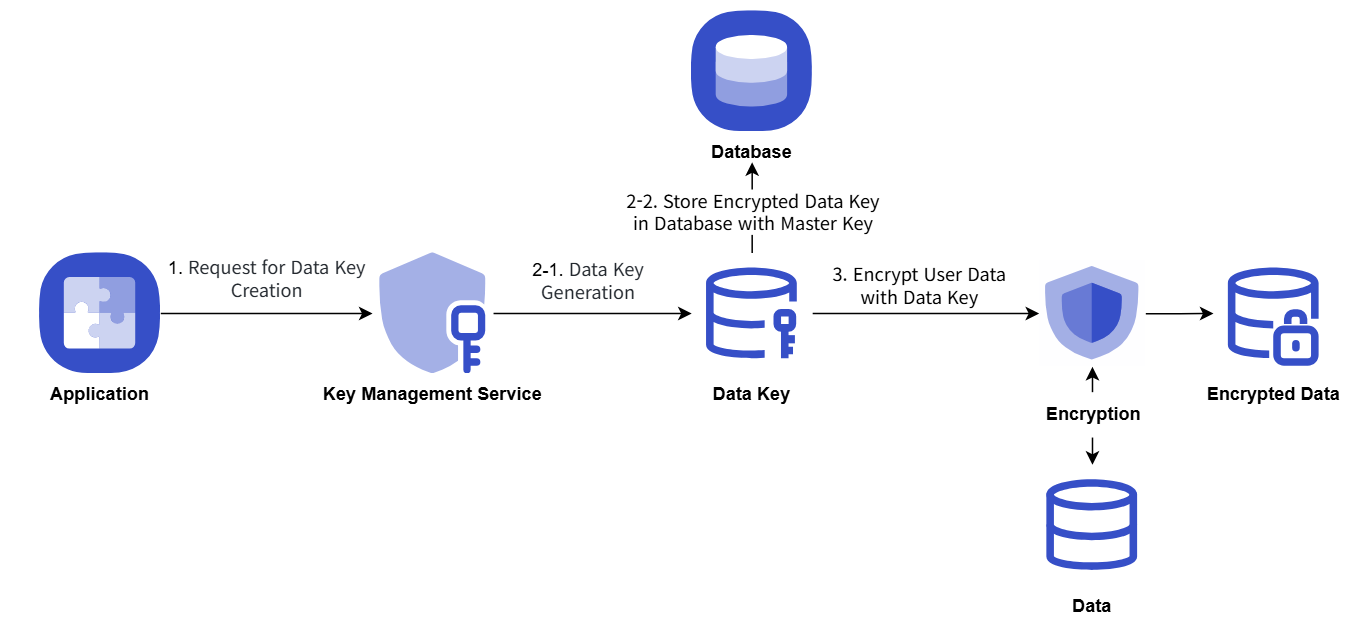

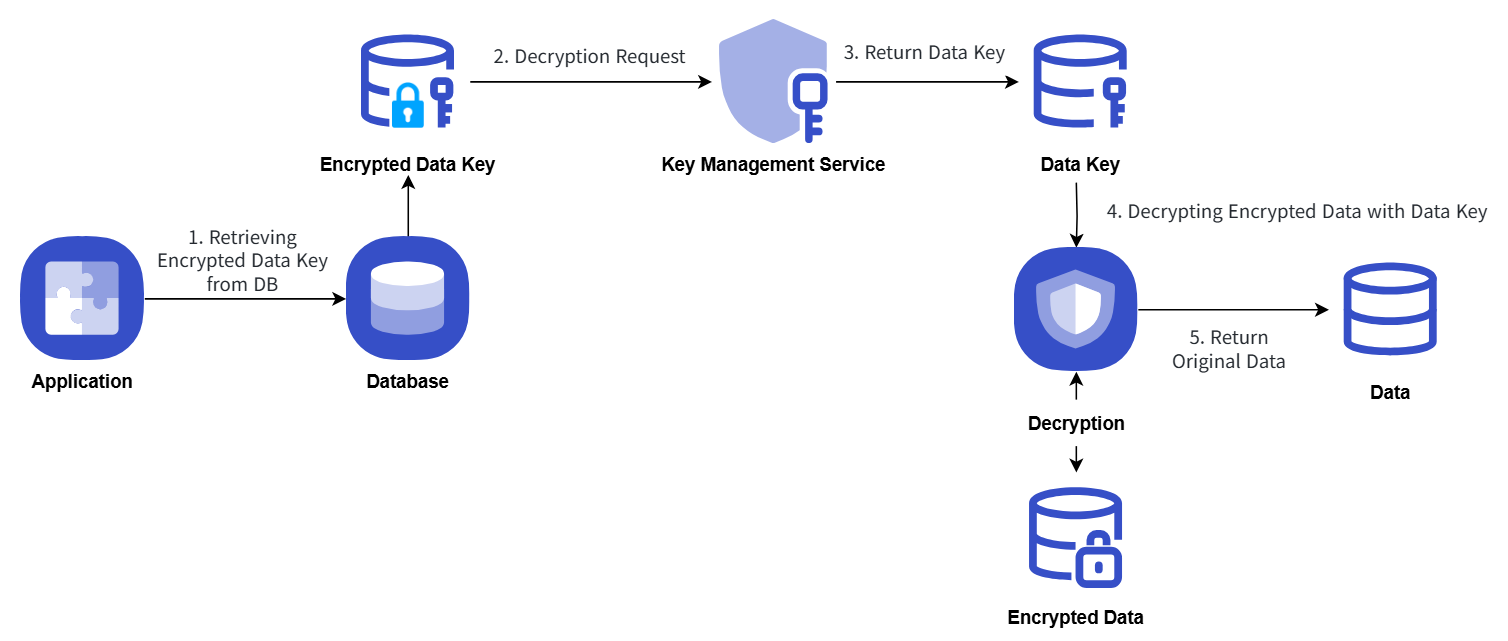

- 9.1: Key Management Service

- 9.1.1: Overview

- 9.1.2: How-to guides

- 9.1.2.1: Encryption Example Using Key Management Service Keys

- 9.1.2.2: Platform-managed Key

- 9.1.3: API Reference

- 9.1.4: CLI Reference

- 9.1.5: Release Note

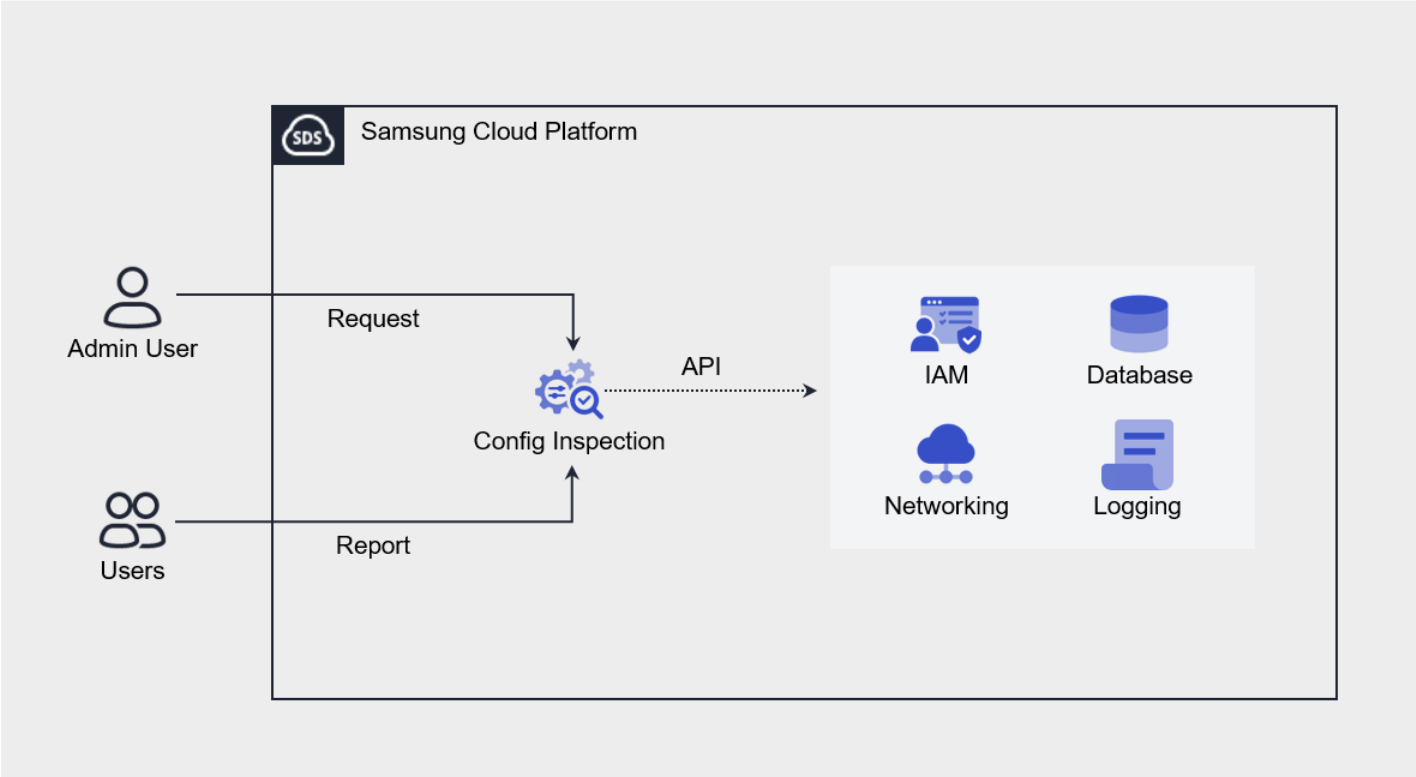

- 9.2: Config Inspection

- 9.2.1: Overview

- 9.2.1.1: Checklist

- 9.2.2: How-to guides

- 9.2.2.1: Check Dashboard

- 9.2.2.2: Manage Diagnosis Results

- 9.2.2.3: Pre-configuration

- 9.2.3: Release Note

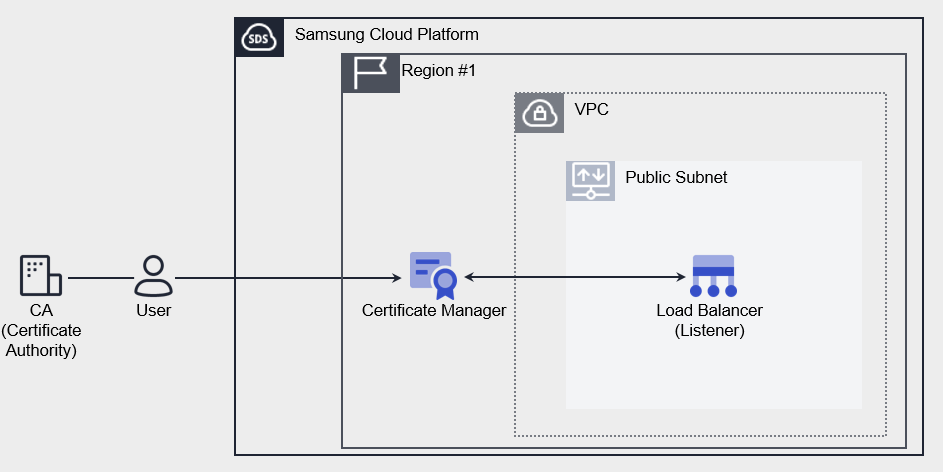

- 9.3: Certificate Manager

- 9.3.1: Overview

- 9.3.2: How-to guides

- 9.3.2.1: Extract Certificate Chain

- 9.3.3: API Reference

- 9.3.4: CLI Reference

- 9.3.5: Release Note

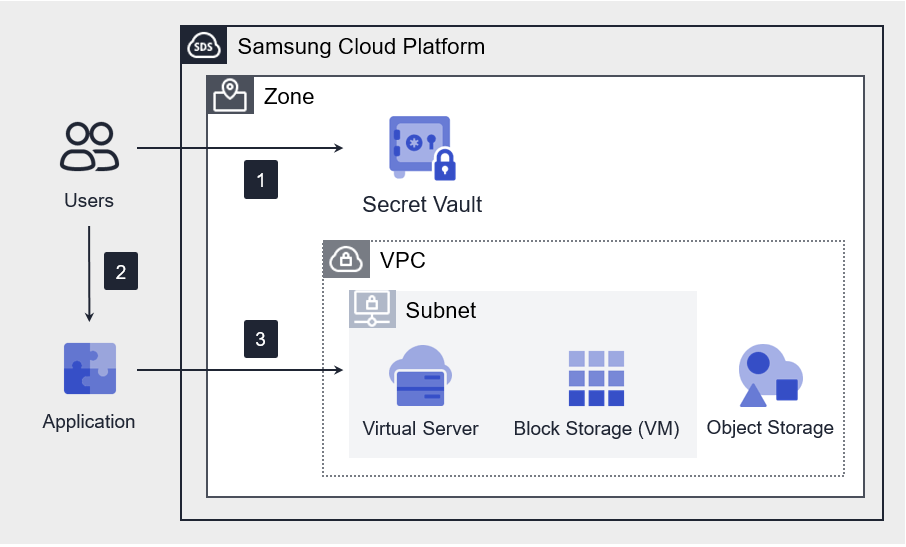

- 9.4: Secret Vault

- 9.4.1: Overview

- 9.4.2: How-to guides

- 9.4.3: API Reference

- 9.4.4: CLI Reference

- 9.4.5: Release Note

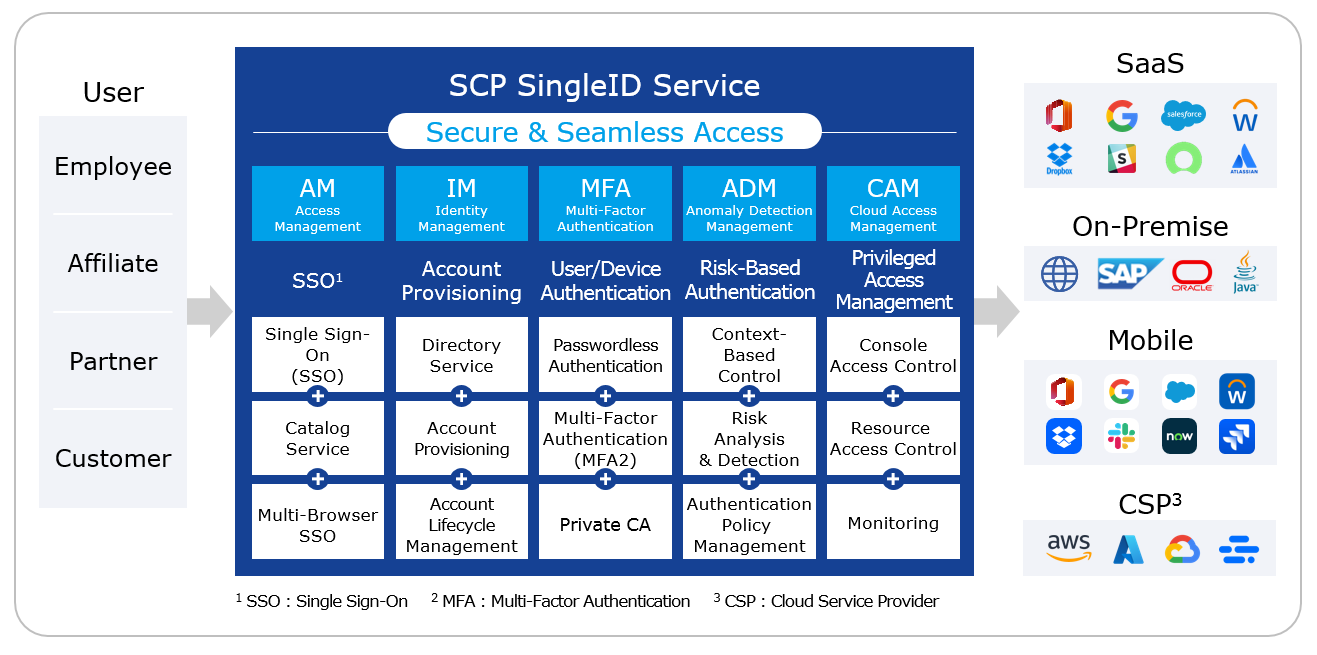

- 9.5: SingleID

- 9.5.1: Overview

- 9.5.2: How-to guides

- 9.5.2.1: SingleID Manuals

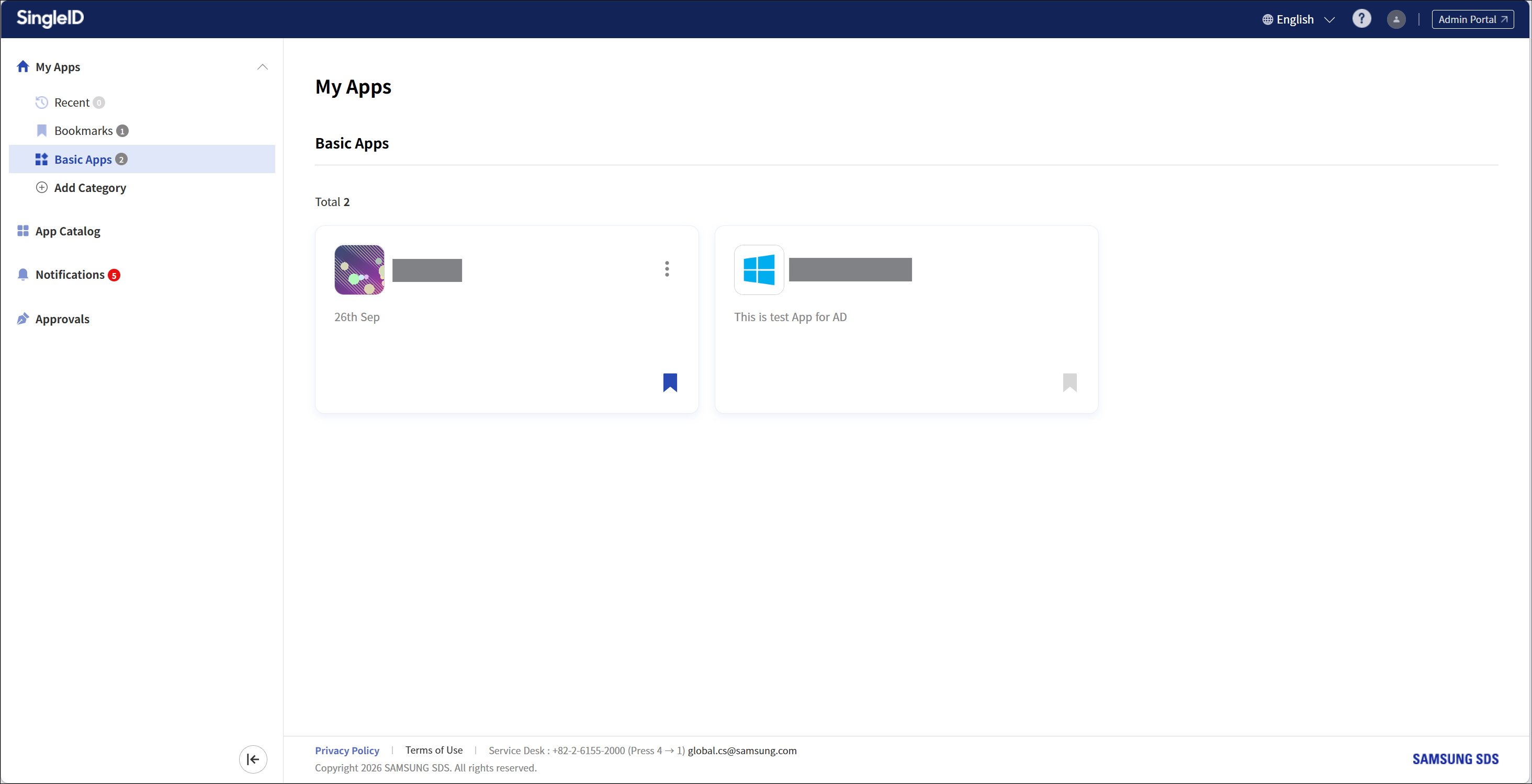

- 9.5.2.1.1: User Portal

- 9.5.2.1.1.1: Announcements and Language Settings

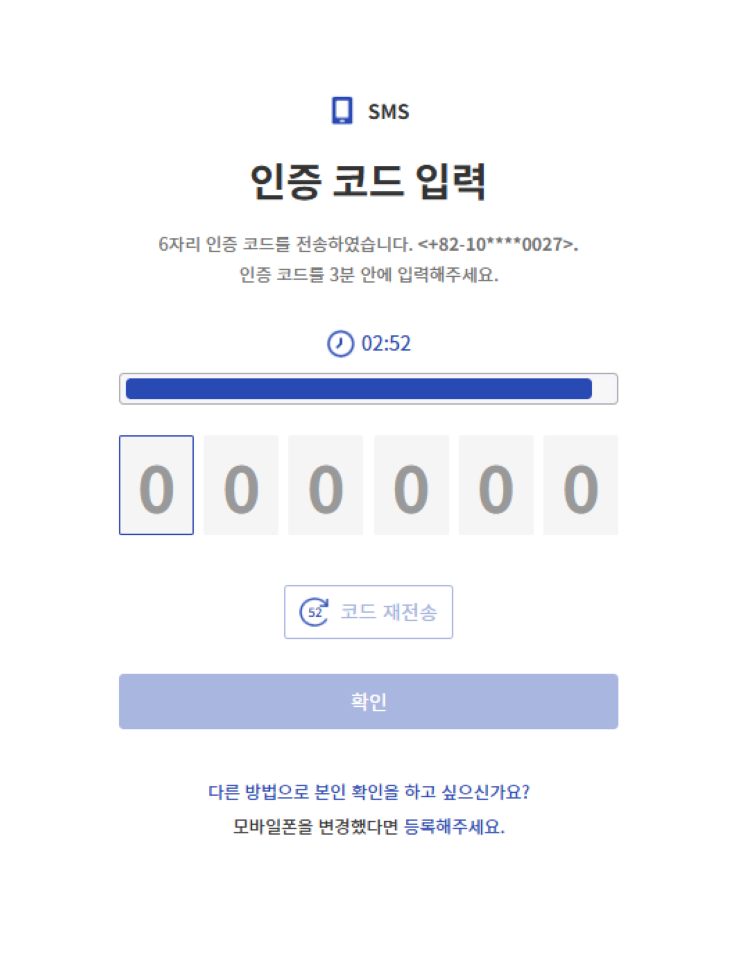

- 9.5.2.1.1.2: Log in using an authentication method

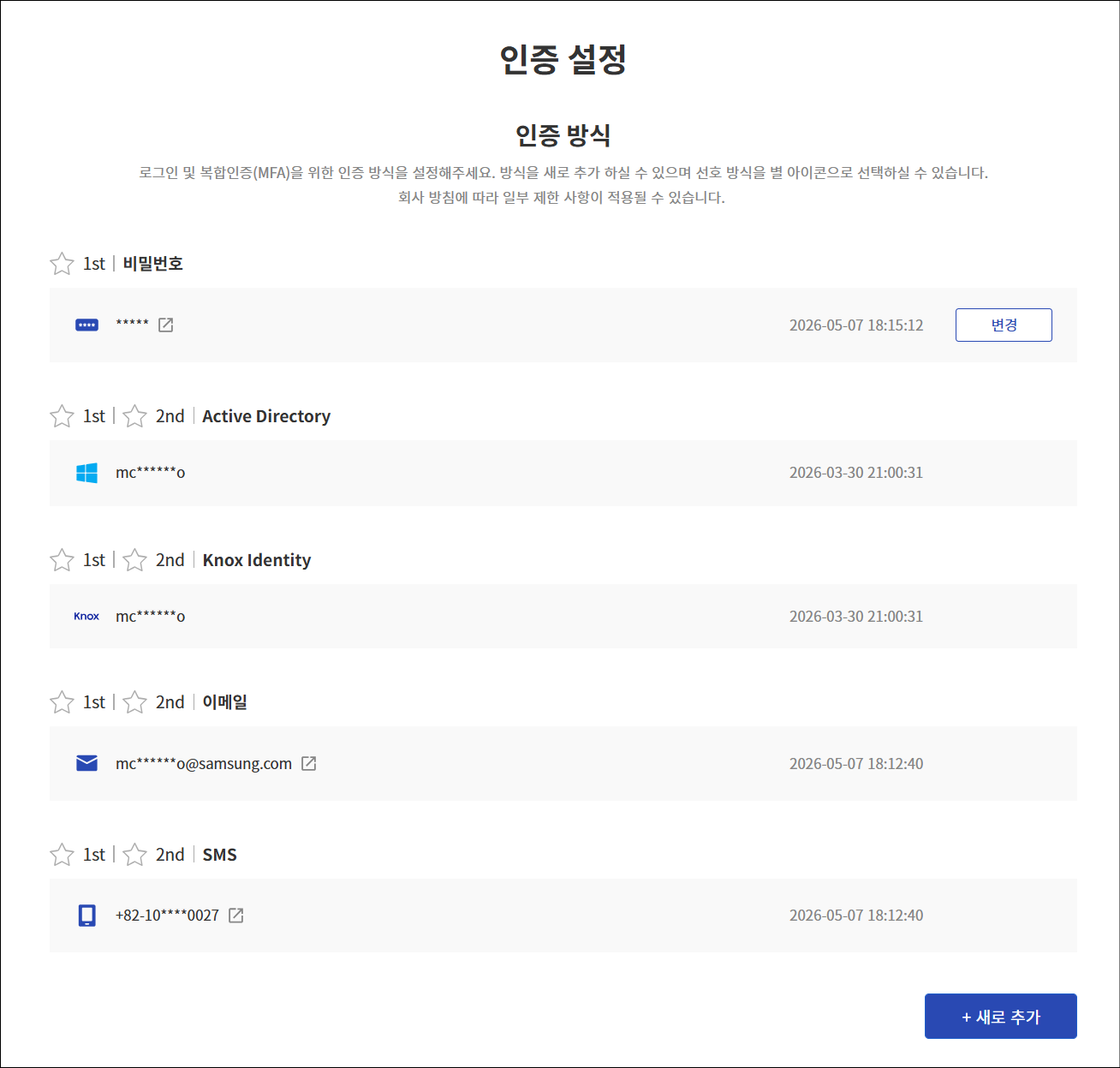

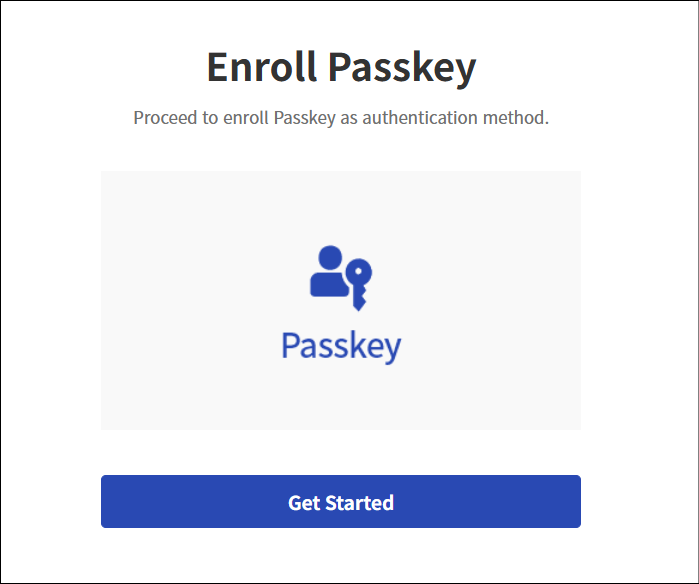

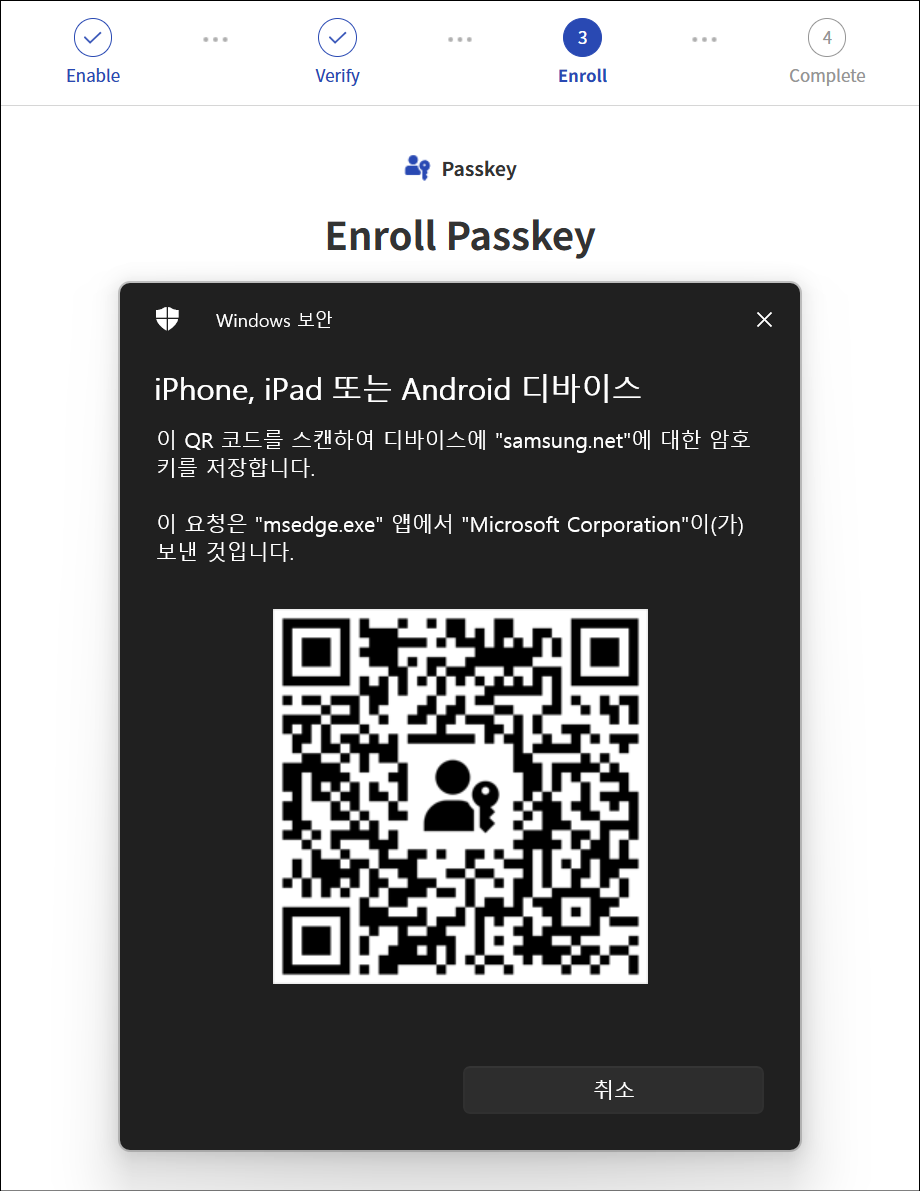

- 9.5.2.1.1.3: Register authentication tool

- 9.5.2.1.1.4: Sign Up

- 9.5.2.1.1.5: Find ID and Reset Password

- 9.5.2.1.1.6: Privacy Policy, Terms of Service, Service Desk

- 9.5.2.1.1.7: PC SSO Agent

- 9.5.2.1.1.8: My App

- 9.5.2.1.1.9: App Catalog

- 9.5.2.1.1.10: Notification

- 9.5.2.1.1.11: Approval Request

- 9.5.2.1.1.12: Personal Profile

- 9.5.2.1.2: Admin Portal

- 9.5.2.1.2.1: Dashboard

- 9.5.2.1.2.2: Integration

- 9.5.2.1.2.3: Identity Store

- 9.5.2.1.2.4: Policy

- 9.5.2.1.2.5: Terms and Conditions

- 9.5.2.1.2.6: Settings

- 9.5.2.1.2.7: Monitoring

- 9.5.2.1.2.8: Open Source Licence

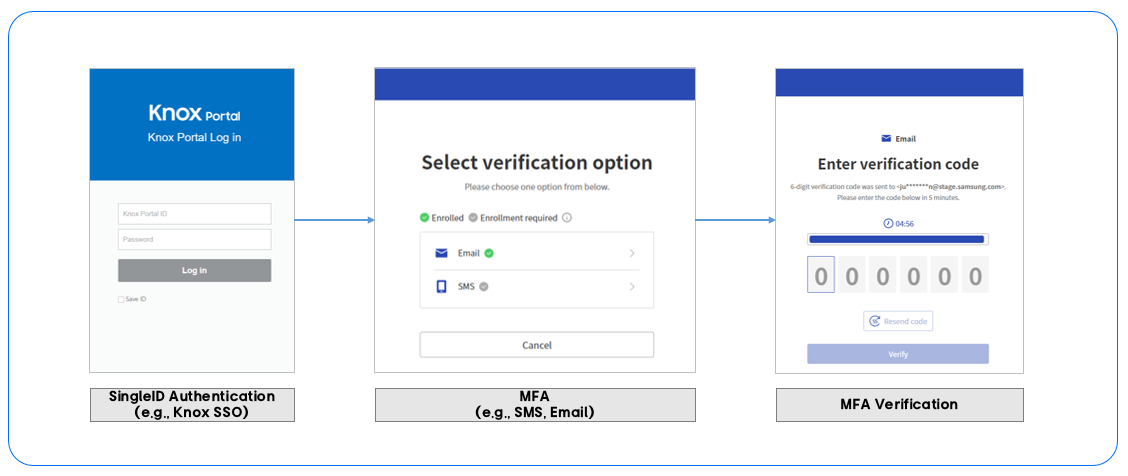

- 9.5.2.1.3: MFA Portal

- 9.5.2.1.3.1: Log in using an authentication method

- 9.5.2.1.3.2: Register authentication tool

- 9.5.2.1.3.3: Policy

- 9.5.2.1.3.4: Configure Privacy Settings

- 9.5.2.1.3.5: Settings

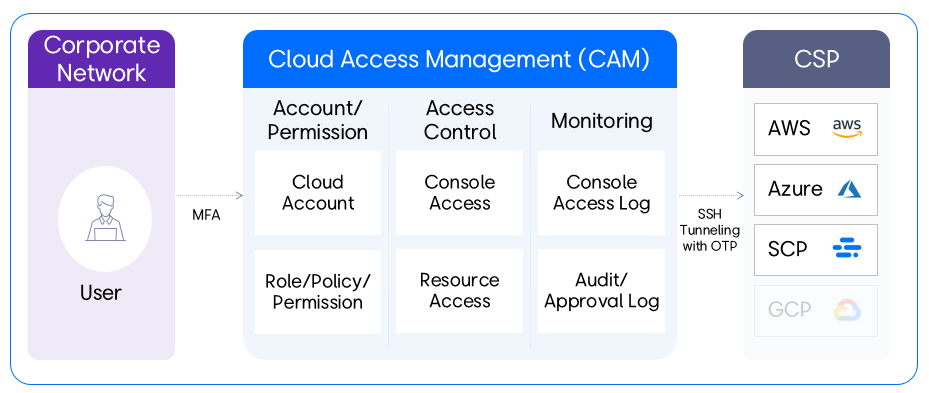

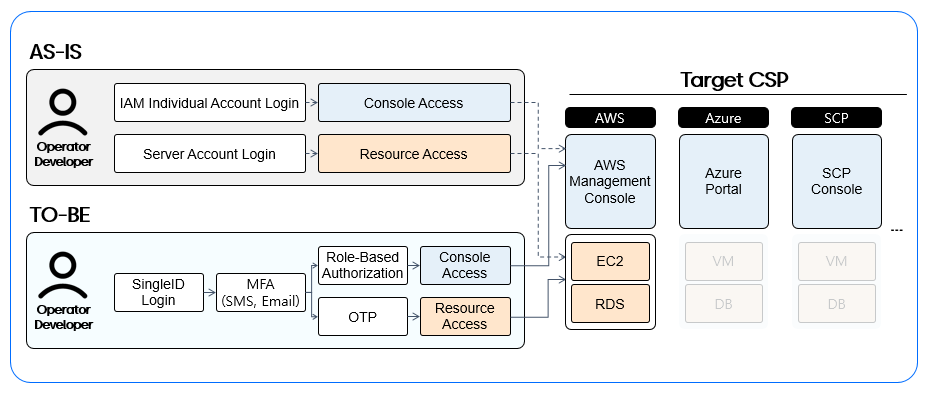

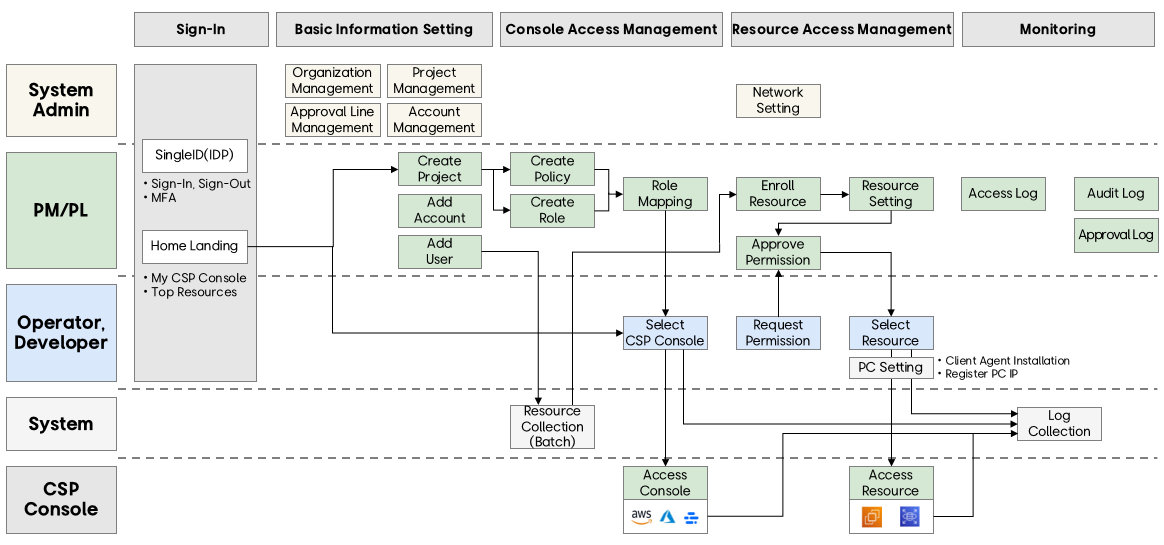

- 9.5.2.1.4: CAM Portal

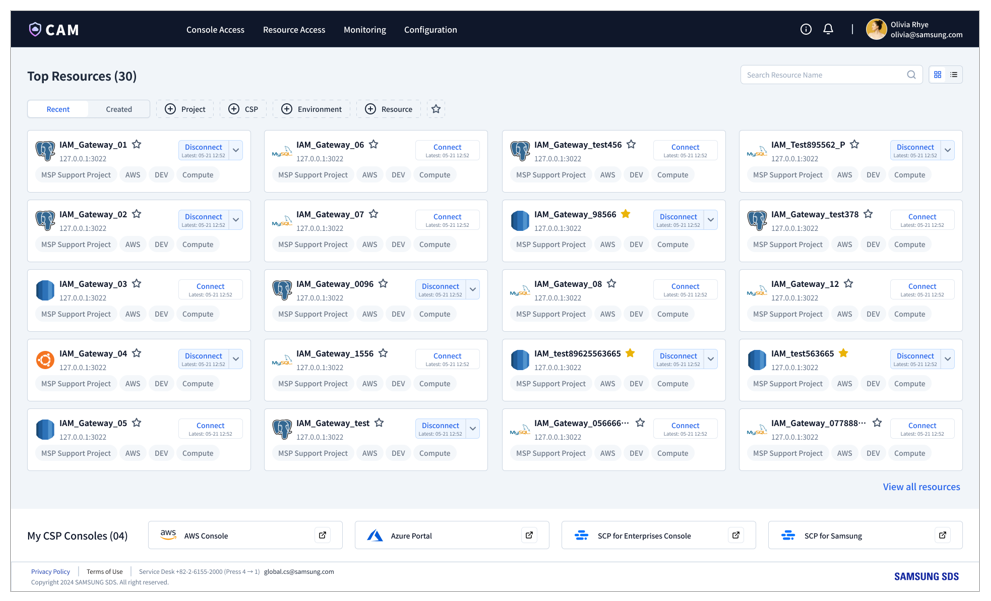

- 9.5.2.1.4.1: Getting Started

- 9.5.2.1.4.2: Home

- 9.5.2.1.4.3: Console Access

- 9.5.2.1.4.4: Resource Access

- 9.5.2.1.4.5: Monitoring

- 9.5.2.1.4.6: Configuration

- 9.5.2.1.5: SingleID Authenticator Manual Overview

- 9.5.2.1.5.1: Install App

- 9.5.2.1.5.2: User Authentication

- 9.5.2.1.5.3: Manage Authentication Method

- 9.5.2.1.5.4: Manage Service List

- 9.5.2.1.5.5: Open Source Licence(Android)

- 9.5.2.1.5.6: Open Source Licence(iOS)

- 9.5.2.1.6: Open API guides

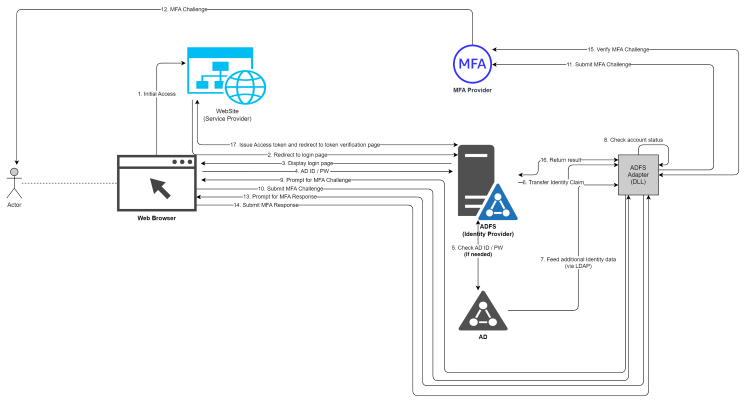

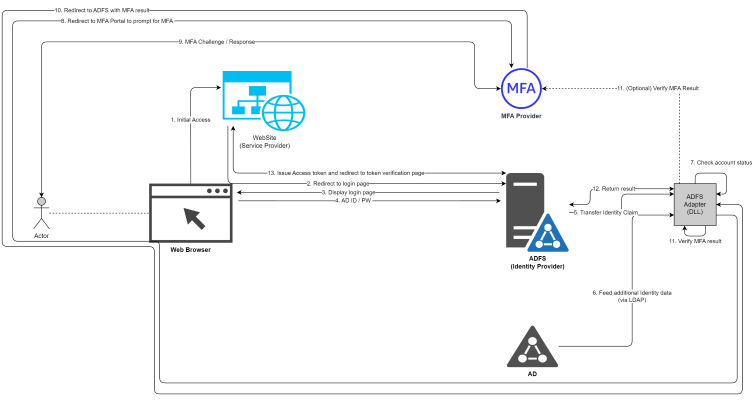

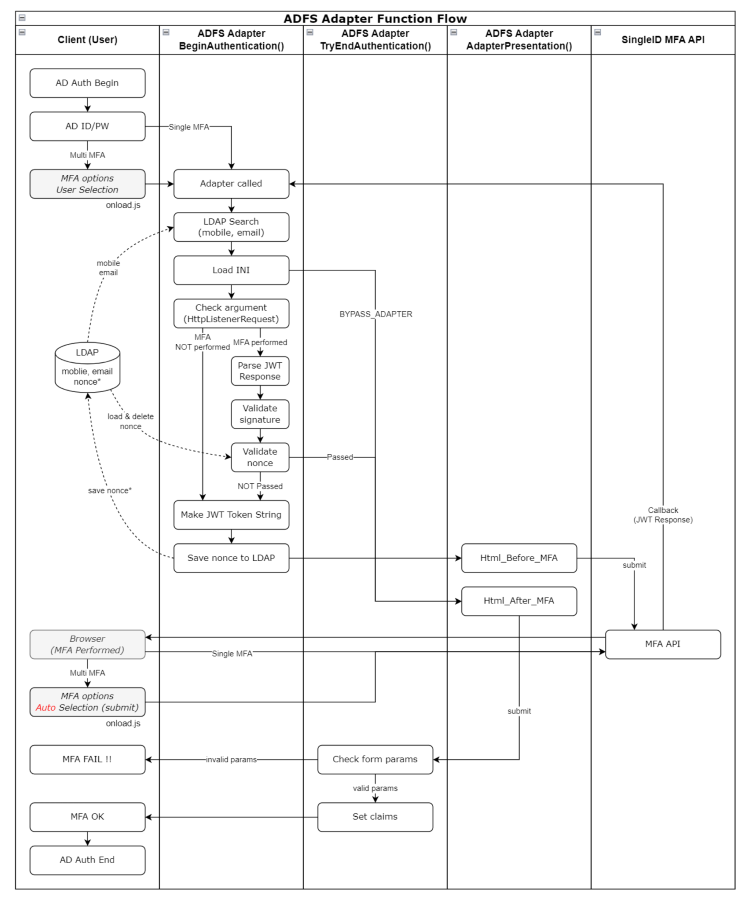

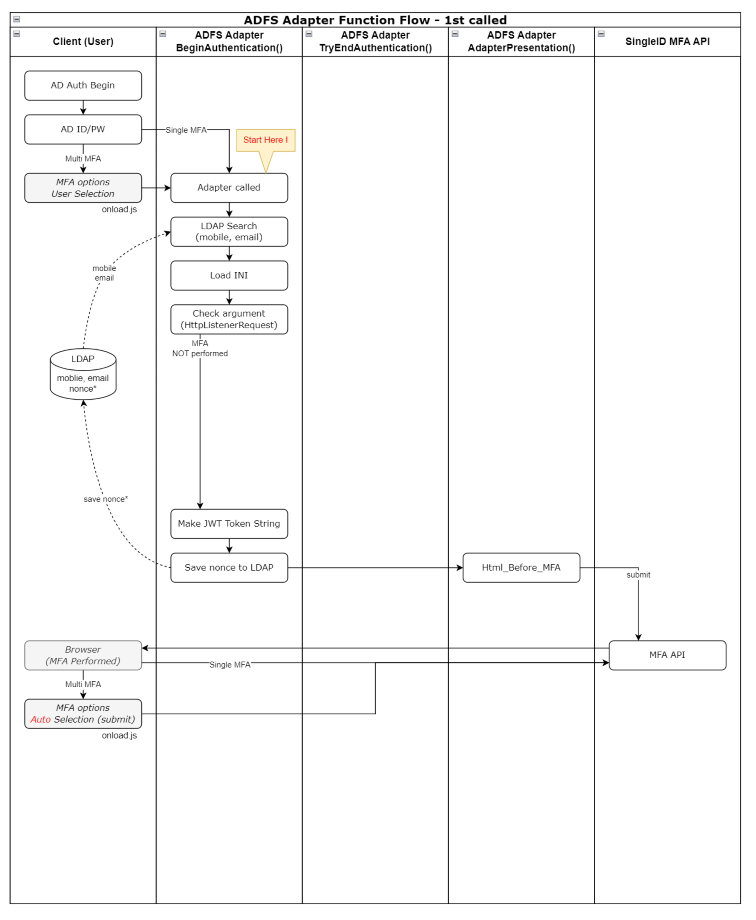

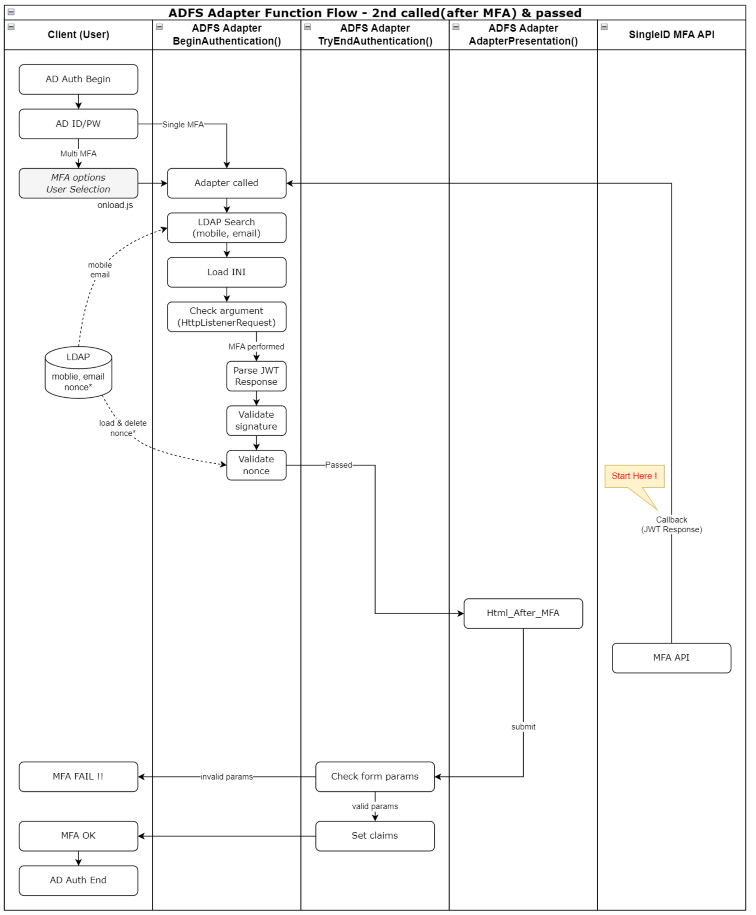

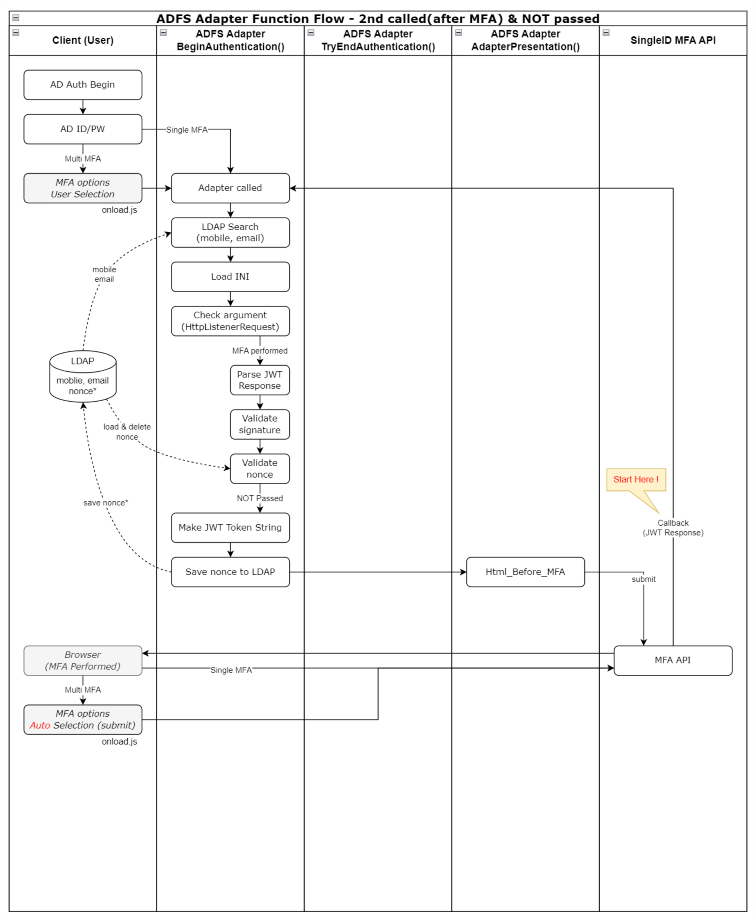

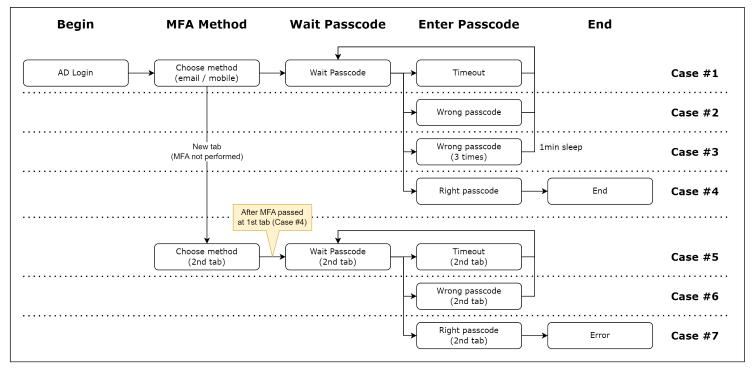

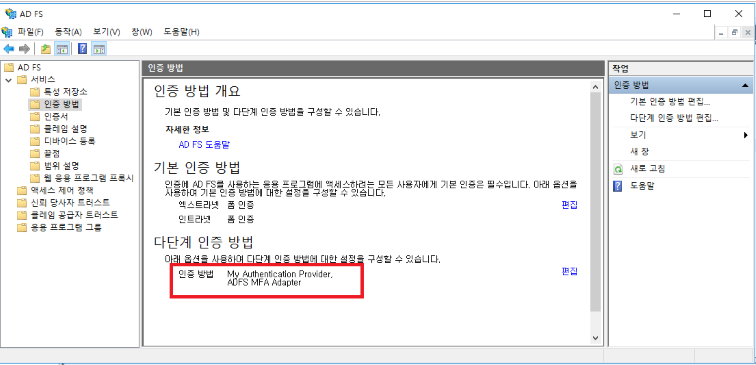

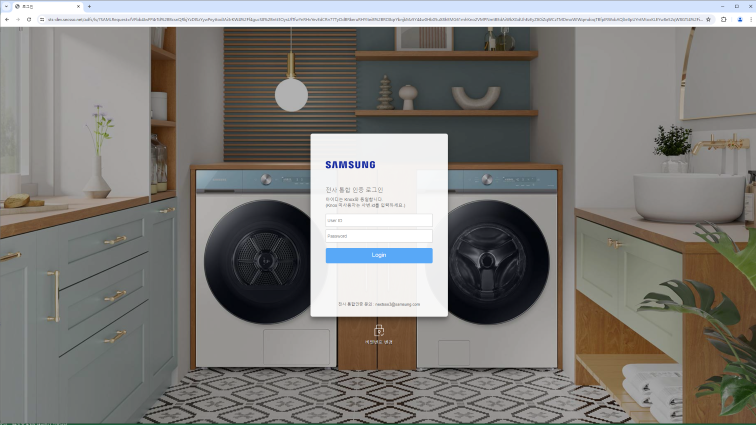

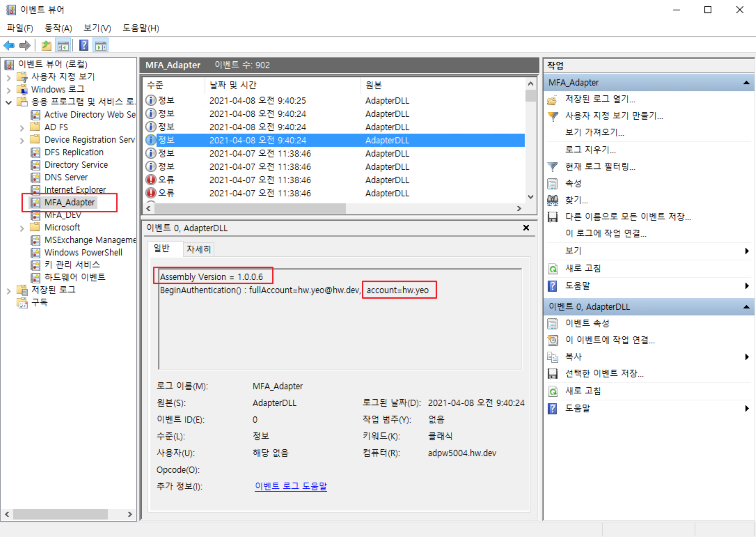

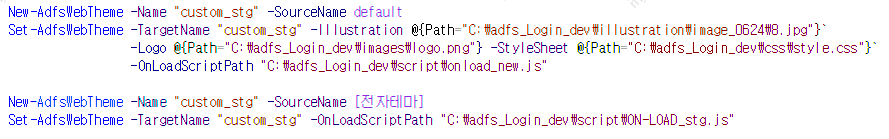

- 9.5.2.1.6.1: ADFS Adapter Guide

- 9.5.2.1.6.2: Adapter Configuration Guide

- 9.5.3: Release Note

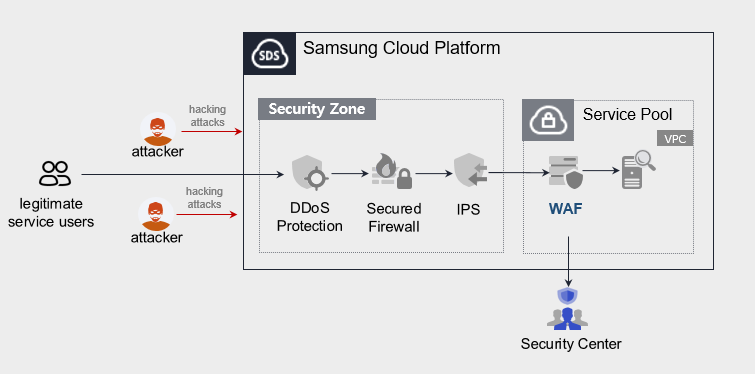

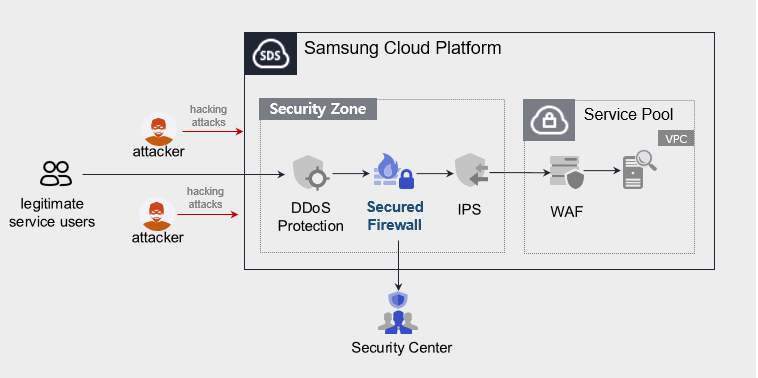

- 9.6: WAF

- 9.6.1: Overview

- 9.6.2: How-to guides

- 9.6.2.1: WAF Preparation

- 9.6.2.2: WAF Service Application

- 9.6.2.3: WAF Service Outage Response

- 9.6.3: Release Note

- 9.7: WAF

- 9.7.1: Overview

- 9.7.2: How-to guides

- 9.7.3: Release Note

- 9.8: WAF

- 9.8.1: Overview

- 9.8.2: How-to guides

- 9.8.2.1: WAF Build Process Guide

- 9.8.3: Release Note

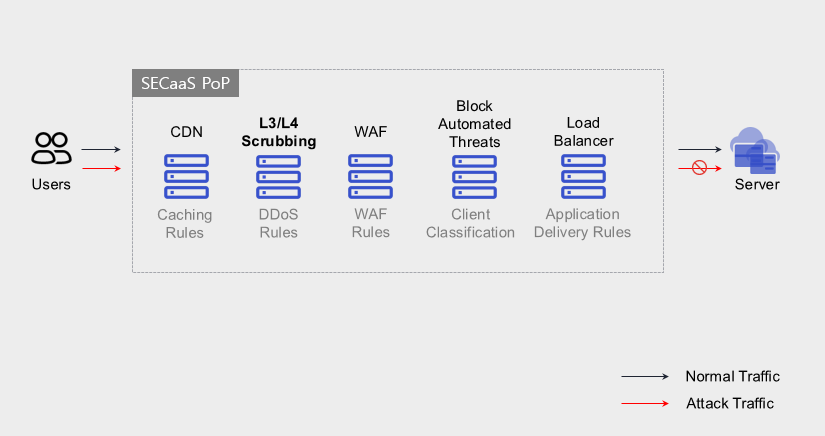

- 9.9: DDoS Protection

- 9.9.1: Overview

- 9.9.2: How-to guides

- 9.9.3: Release Note

- 9.10: DDoS Protection

- 9.10.1: Overview

- 9.10.2: How-to guides

- 9.10.3: Release Note

- 9.11: IPS

- 9.11.1: Overview

- 9.11.2: How-to guides

- 9.11.3: Release Note

- 9.12: Secured Firewall

- 9.12.1: Overview

- 9.12.2: How-to guides

- 9.12.3: Release Note

- 9.13: Secured VPN

- 9.13.1: Overview

- 9.13.2: How-to guides

- 9.13.2.1: Secured VPN Build Process Guide

- 9.13.3: Release Note

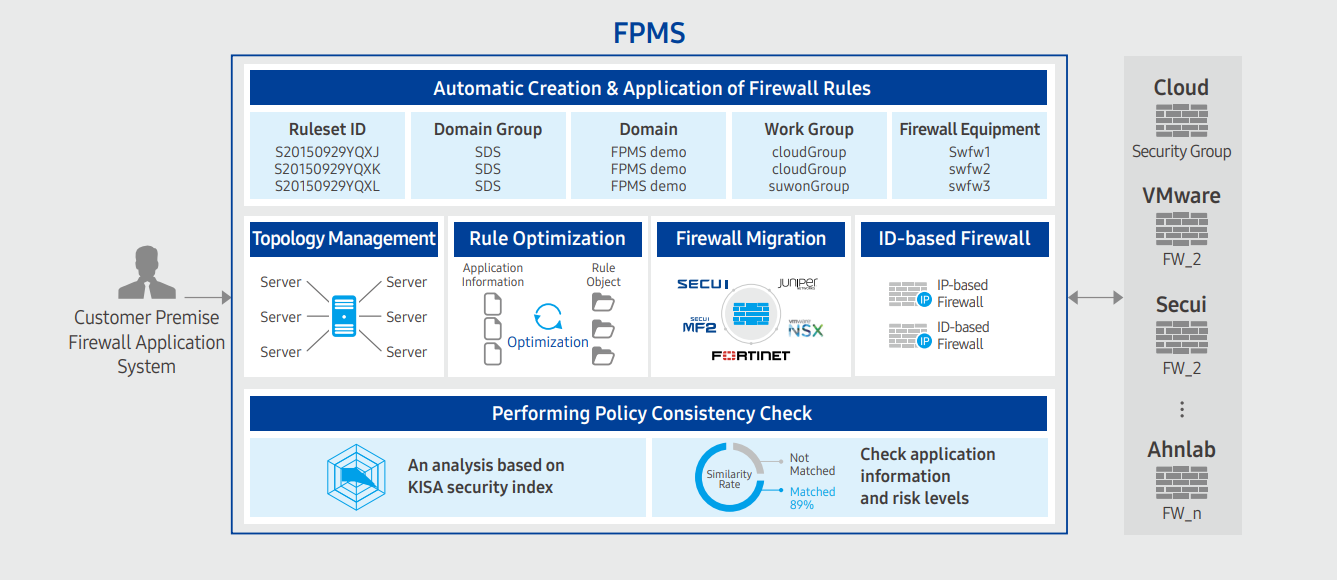

- 9.14: FPMS

- 9.14.1: Overview

- 9.14.2: How-to guides

- 9.14.3: Release Note

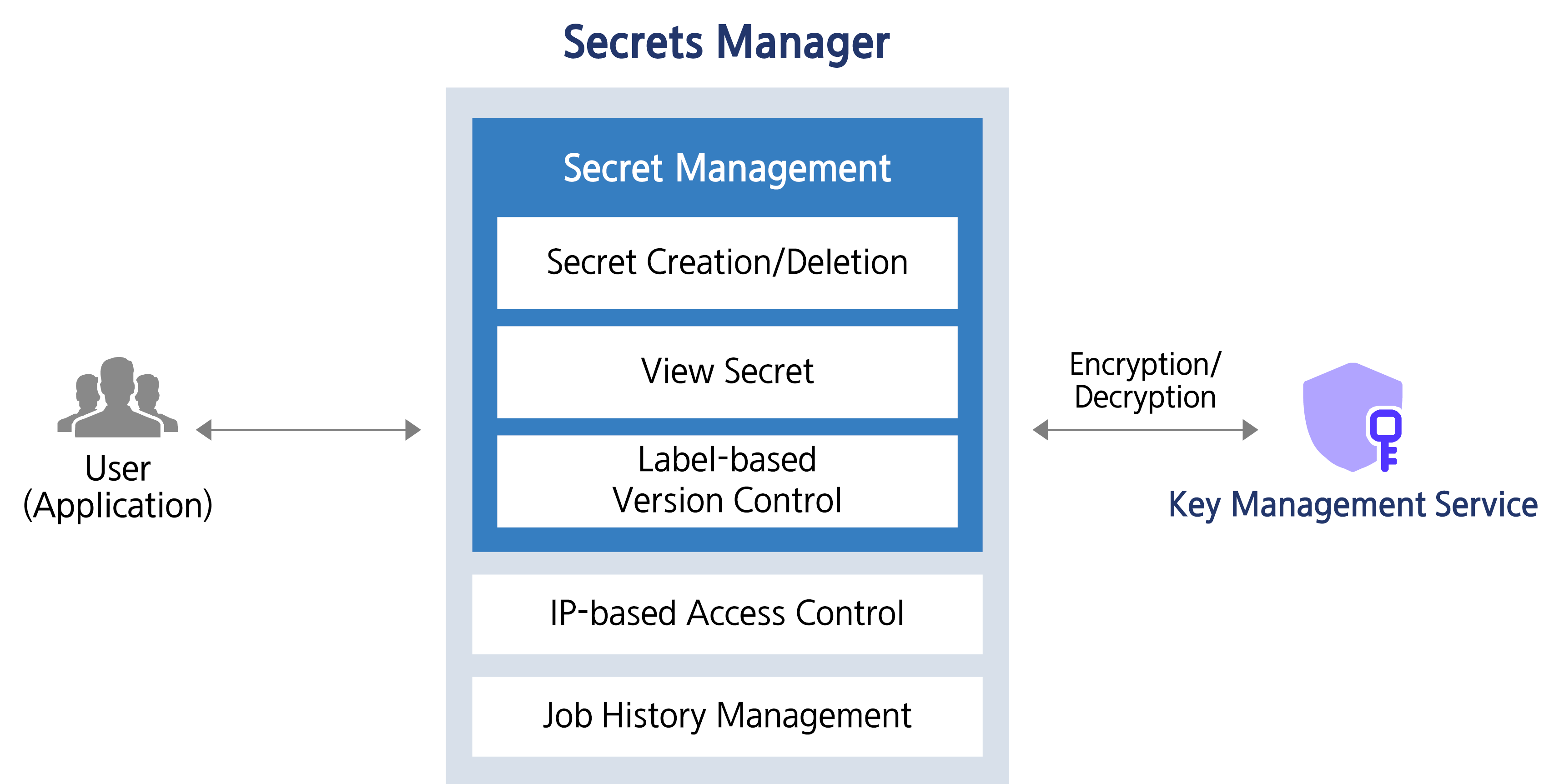

- 9.15: Secrets Manager

- 9.15.1: Overview

- 9.15.2: How-to guides

- 9.15.2.1: Secret Retrieval API Reference

- 9.15.3: Release Note

- 9.16: DDoS Protection

- 9.16.1: Overview

- 9.16.2: How-to guides

- 9.16.2.1: DDoS Protection Preparation

- 9.16.2.2: DDoS Protection Service Application

- 9.16.2.3: DDoS Protection Service Outage Response

- 9.16.3: Release Note

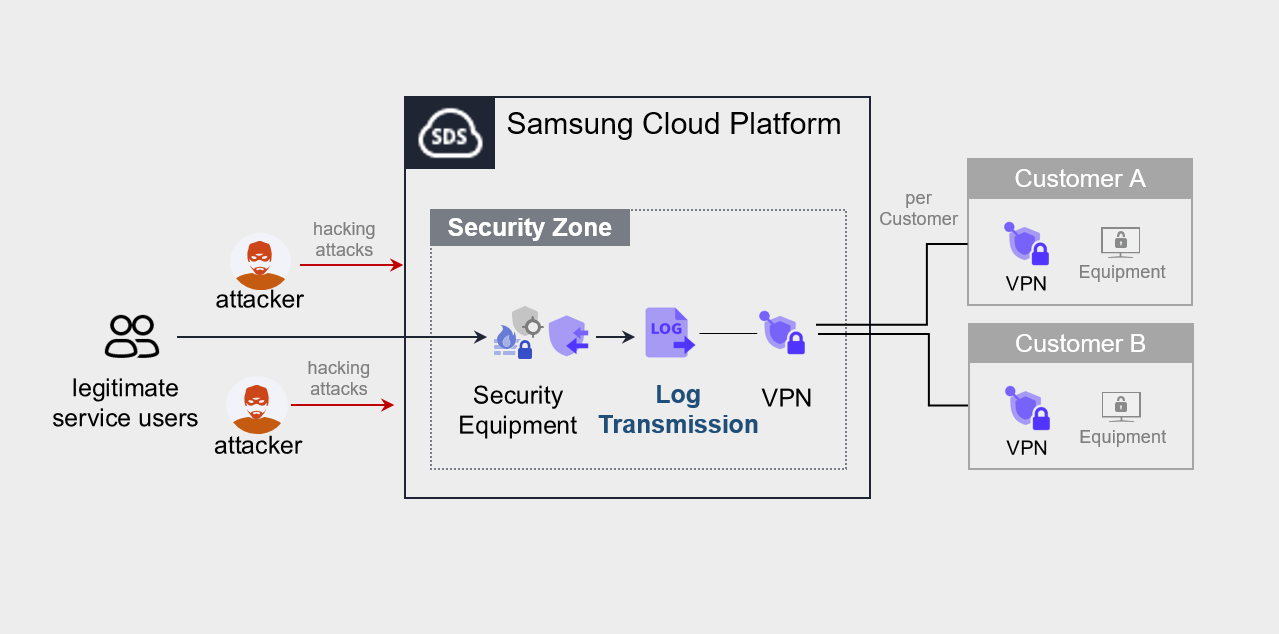

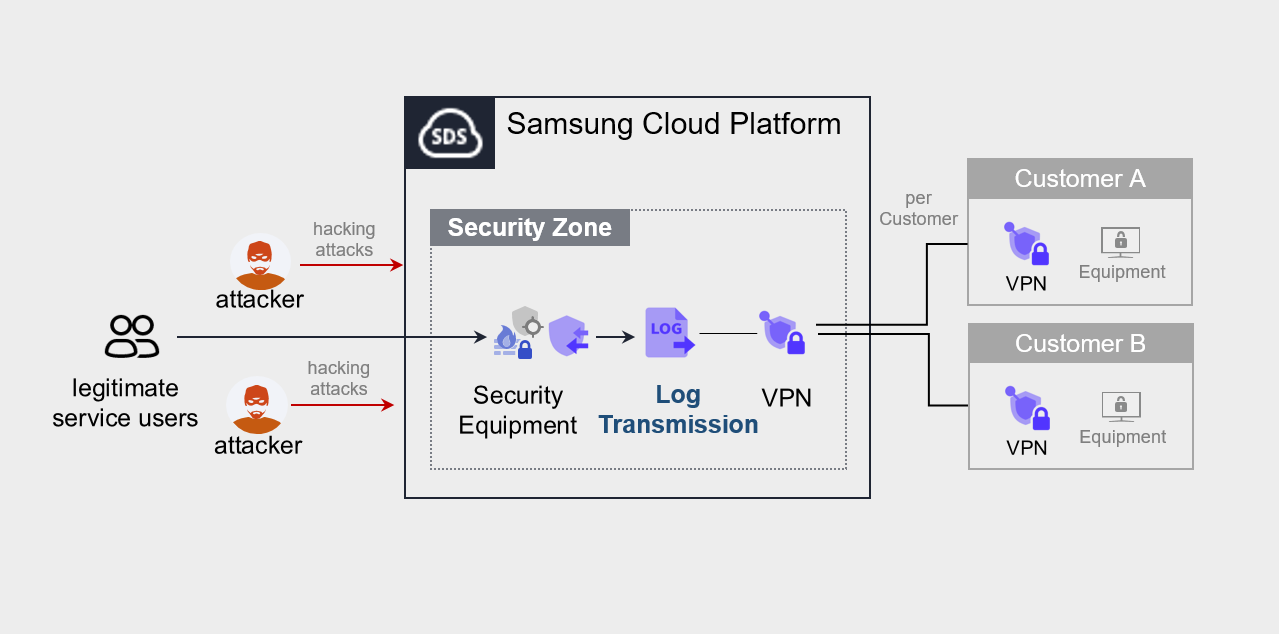

- 9.17: Log Transmission

- 9.17.1: Overview

- 9.17.2: How-to guides

- 9.17.3: Release Note

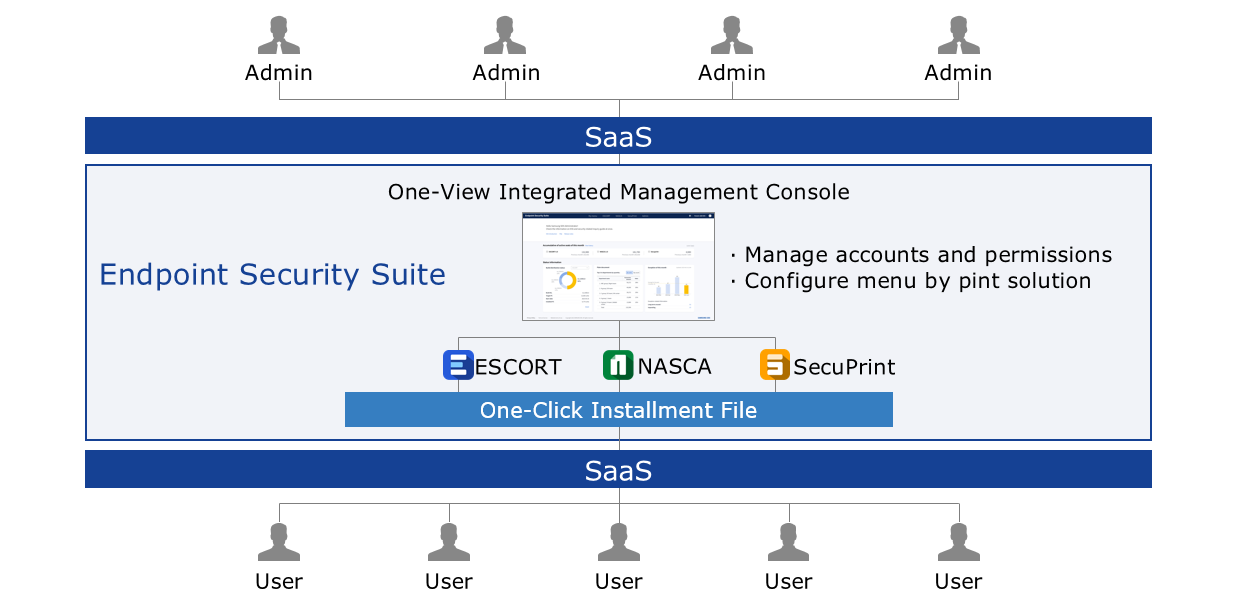

- 9.18: ESS(Endpoint Security Suite)

- 9.18.1: Overview

- 9.18.2: How-to guides

- 9.18.3: Release Note

- 9.19: Log Transmission

- 9.19.1: Overview

- 9.19.2: How-to guides

- 9.19.3: Release Note

- 10: Management

- 10.1: Architecture Diagram

- 10.1.1: Overview

- 10.1.2: How-to Guides

- 10.1.3: Release Note

- 10.2: Cloud Control

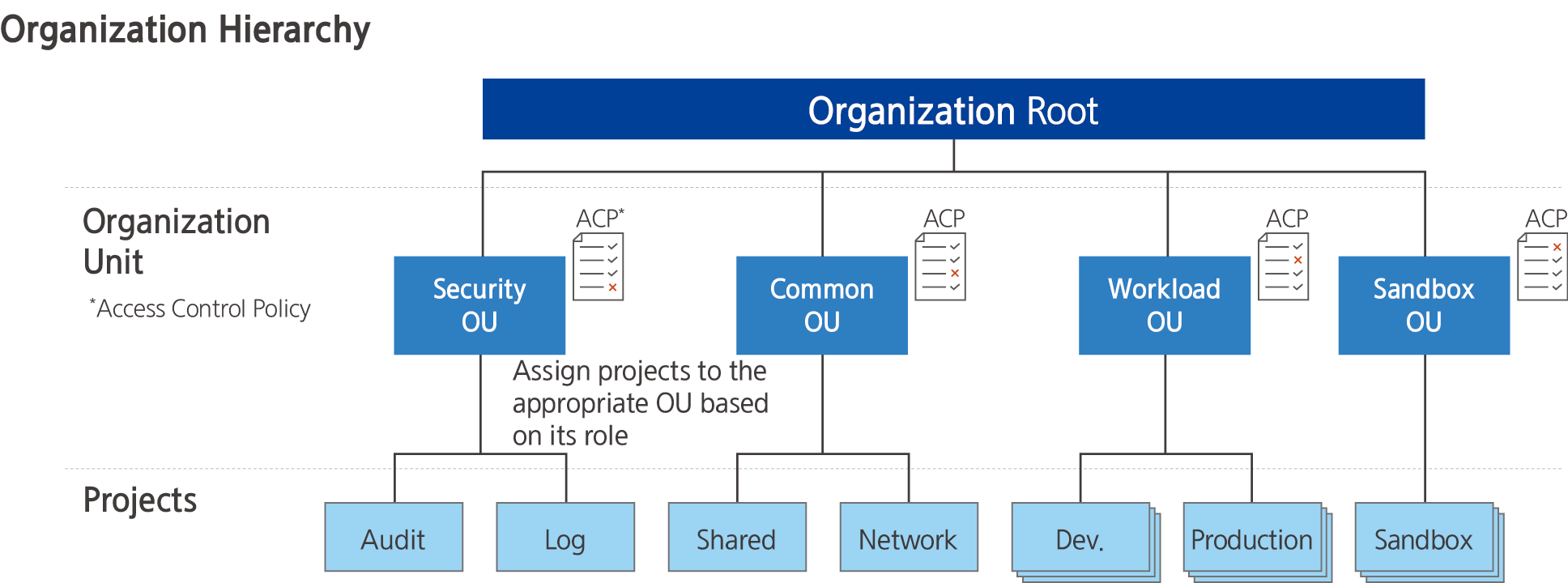

- 10.2.1: Overview

- 10.2.2: How-to guides

- 10.2.2.1: Managing Guardrails

- 10.2.2.2: Managing Organizations

- 10.2.2.3: Managing Accounts

- 10.2.3: API Reference

- 10.2.4: CLI Reference

- 10.2.5: Release Note

- 10.3: Cloud Monitoring

- 10.3.1: Overview

- 10.3.2: How-to guides

- 10.3.2.1: Using Monitoring Dashboards

- 10.3.2.2: Performance Analysis

- 10.3.2.3: Log Analysis

- 10.3.2.4: Managing Events

- 10.3.2.5: Using Custom Dashboards

- 10.3.2.6: Managing Agents

- 10.3.2.7: Appendix A. Service-specific Monitoring Targets

- 10.3.2.8: Appendix B. Service-specific Performance Metrics

- 10.3.2.9: Appendix C. Service-specific Status Checks

- 10.3.3: API Reference

- 10.3.4: Release Note

- 10.4: IAM

- 10.4.1: Overview

- 10.4.2: How-to Guides

- 10.4.2.1: User Group

- 10.4.2.2: Users

- 10.4.2.3: Policy

- 10.4.2.4: Role

- 10.4.2.5: Credential Providers

- 10.4.2.6: My Info.

- 10.4.2.7: JSON Writing Guide

- 10.4.3: API Reference

- 10.4.4: CLI Reference

- 10.4.5: Release Note

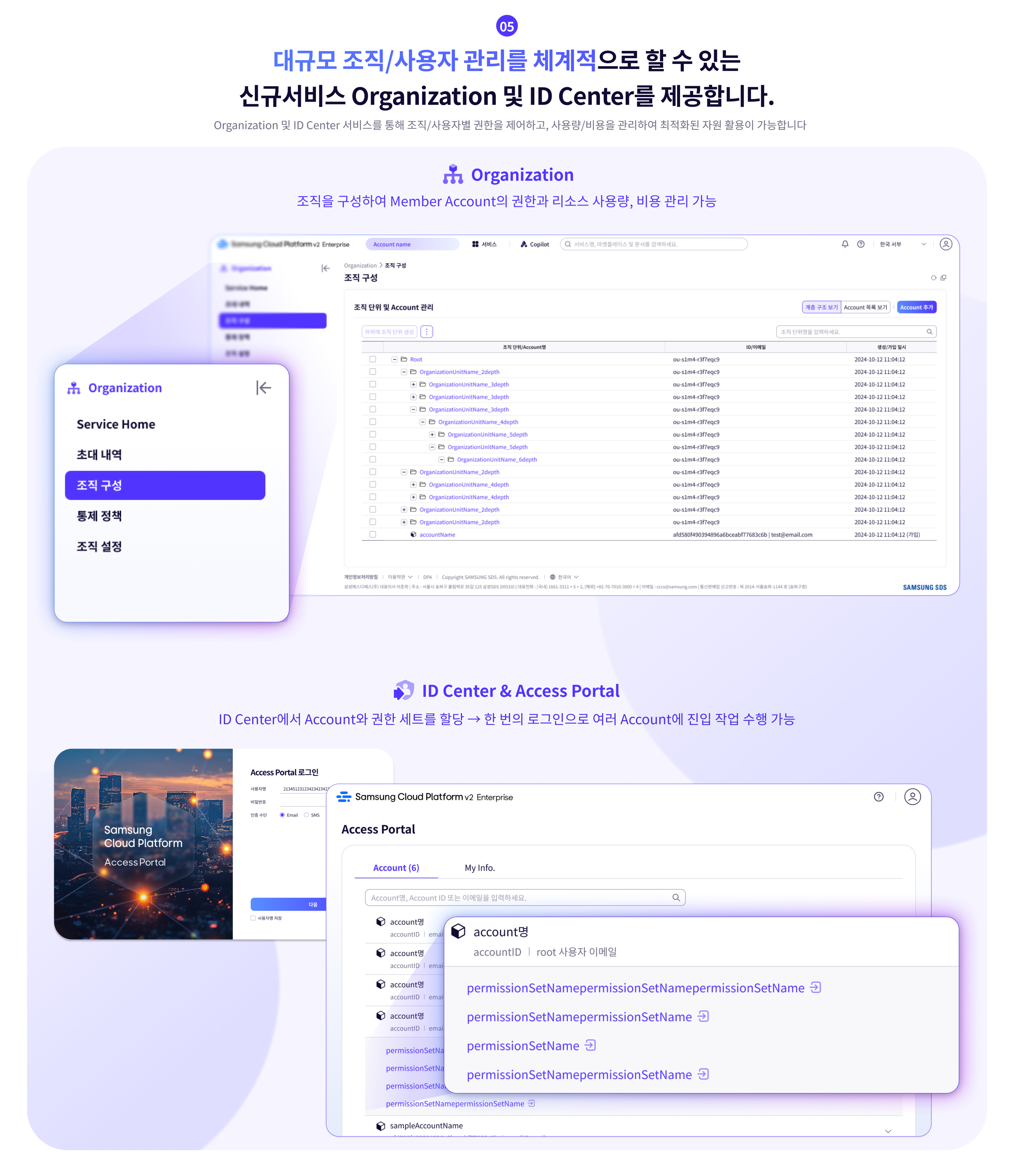

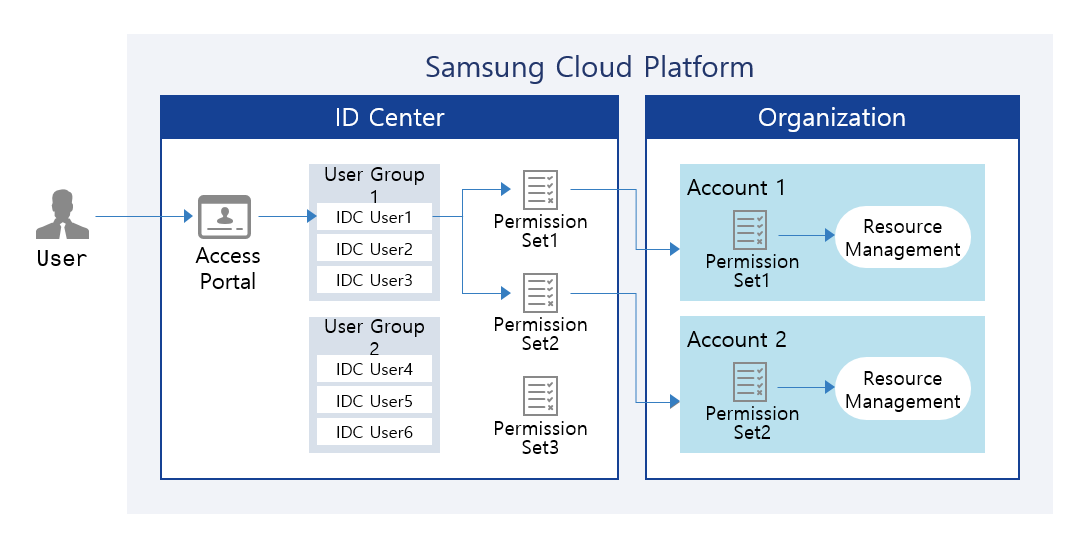

- 10.5: ID Center

- 10.5.1: Overview

- 10.5.2: How-to guides

- 10.5.2.1: Managing ID Center Users

- 10.5.2.2: Managing ID Center User Groups

- 10.5.2.3: Assigning ID Center Account

- 10.5.2.4: Managing ID Center Permission Sets

- 10.5.2.5: Using ID Center Access Portal

- 10.5.3: Release Note

- 10.6: Logging&Audit

- 10.6.1: Overview

- 10.6.2: How-to guides

- 10.6.2.1: Managing Trails

- 10.6.3: API Reference

- 10.6.4: CLI Reference

- 10.6.5: Release Note

- 10.7: Notification Manager

- 10.7.1: Overview

- 10.7.2: How-to Guides

- 10.7.2.1: Notification Policy

- 10.7.3: Release Note

- 10.8: Organization

- 10.8.1: Overview

- 10.8.2: How-to guides

- 10.8.2.1: Organization Configuration Information

- 10.8.2.2: Organization Control Policy

- 10.8.3: API Reference

- 10.8.4: CLI Reference

- 10.8.5: Release Note

- 10.9: Resource Explorer

- 10.9.1: Overview

- 10.9.2: How-to Guides

- 10.9.3: Release Note

- 10.10: Resource Groups

- 10.10.1: Overview

- 10.10.2: How-to Guides

- 10.10.3: Release Note

- 10.11: ServiceWatch

- 10.11.1: Overview

- 10.11.1.1: Metric

- 10.11.1.2: Alert

- 10.11.1.3: Log

- 10.11.1.4: Event

- 10.11.1.5: ServiceWatch integration service

- 10.11.1.6: Custom Metrics and Logs

- 10.11.2: How-to guides

- 10.11.2.1: Managing Dashboards and Widgets

- 10.11.2.2: Alert

- 10.11.2.3: Metric

- 10.11.2.4: Log

- 10.11.2.5: Event

- 10.11.2.6: Using ServiceWatch Agent

- 10.11.3: API Reference

- 10.11.4: CLI Reference

- 10.11.5: ServiceWatch Event Reference

- 10.11.5.1: ServiceWatch Event

- 10.11.5.1.1: Multi-node GPU Cluster

- 10.11.5.1.2: MySQL(DBaaS)

- 10.11.5.1.3: Global CDN

- 10.11.5.1.4: Data Flow

- 10.11.5.1.5: GSLB

- 10.11.5.1.6: Cloud Control

- 10.11.5.1.7: Cloud WAN

- 10.11.5.1.8: Object Storage

- 10.11.5.1.9: VPC

- 10.11.5.1.10: GPU Server

- 10.11.5.1.11: Virtual Server

- 10.11.5.1.12: Firewall

- 10.11.5.1.13: ID Center

- 10.11.5.1.14: Microsoft SQL Server(DBaaS)

- 10.11.5.1.15: Block Storage(BM)

- 10.11.5.1.16: Resource Groups

- 10.11.5.1.17: Cloud Functions

- 10.11.5.1.18: AI&MLOps Platform

- 10.11.5.1.19: Event Streams

- 10.11.5.1.20: Security Group

- 10.11.5.1.21: Archive Storage

- 10.11.5.1.22: API Gateway

- 10.11.5.1.23: Load Balancer

- 10.11.5.1.24: Data Ops

- 10.11.5.1.25: Scalable DB(DBaaS)

- 10.11.5.1.26: Cloud LAN-Campus

- 10.11.5.1.27: EPAS(DBaaS)

- 10.11.5.1.28: PostgreSQL(DBaaS)

- 10.11.5.1.29: Logging&Audit

- 10.11.5.1.30: Search Engine

- 10.11.5.1.31: DNS

- 10.11.5.1.32: VPN

- 10.11.5.1.33: Secrets Manager

- 10.11.5.1.34: Quick Query

- 10.11.5.1.35: File Storage

- 10.11.5.1.36: CacheStore(DBaaS)

- 10.11.5.1.37: Secret Vault

- 10.11.5.1.38: Queue Service

- 10.11.5.1.39: Kubernetes Engine

- 10.11.5.1.40: Config Inspection

- 10.11.5.1.41: Cloud LAN-Datacenter

- 10.11.5.1.42: Identity Access Management

- 10.11.5.1.43: Bare Metal Server

- 10.11.5.1.44: ServiceWatch

- 10.11.5.1.45: MariaDB(DBaaS)

- 10.11.5.1.46: Container Registry

- 10.11.5.1.47: Vertica(DBaaS)

- 10.11.5.1.48: Backup

- 10.11.5.1.49: Organization

- 10.11.5.1.50: Cloud ML

- 10.11.5.1.51: Certificate Manager

- 10.11.5.1.52: Key Management Service

- 10.11.5.1.53: Direct Connect

- 10.11.5.1.54: Support Center

- 10.11.6: Release Note

- 10.12: Support Center

- 10.12.1: Overview

- 10.12.2: How-to Guides

- 10.12.2.1: Contact Us

- 10.12.2.2: Support Plan

- 10.12.2.3: Knowledge Center

- 10.12.3: Release Note

- 10.13: Quota Service

- 10.13.1: Overview

- 10.13.2: How-to guides

- 10.13.2.1: Organization Quota Template

- 10.13.3: Release Note

- 11: Financial Management

- 11.1: Cost Management

- 11.1.1: Overview

- 11.1.2: How-to Guides

- 11.1.2.1: Usage and Billing Details

- 11.1.2.2: Payment History

- 11.1.2.3: Cost Analysis

- 11.1.2.4: Credit Management

- 11.1.2.5: Budget Management

- 11.1.2.6: Cost Savings

- 11.1.2.7: Account

- 11.1.2.8: Payment Management

- 11.1.2.9: Carbon Emissions

- 11.1.2.10: EDP Report

- 11.1.3: Release Note

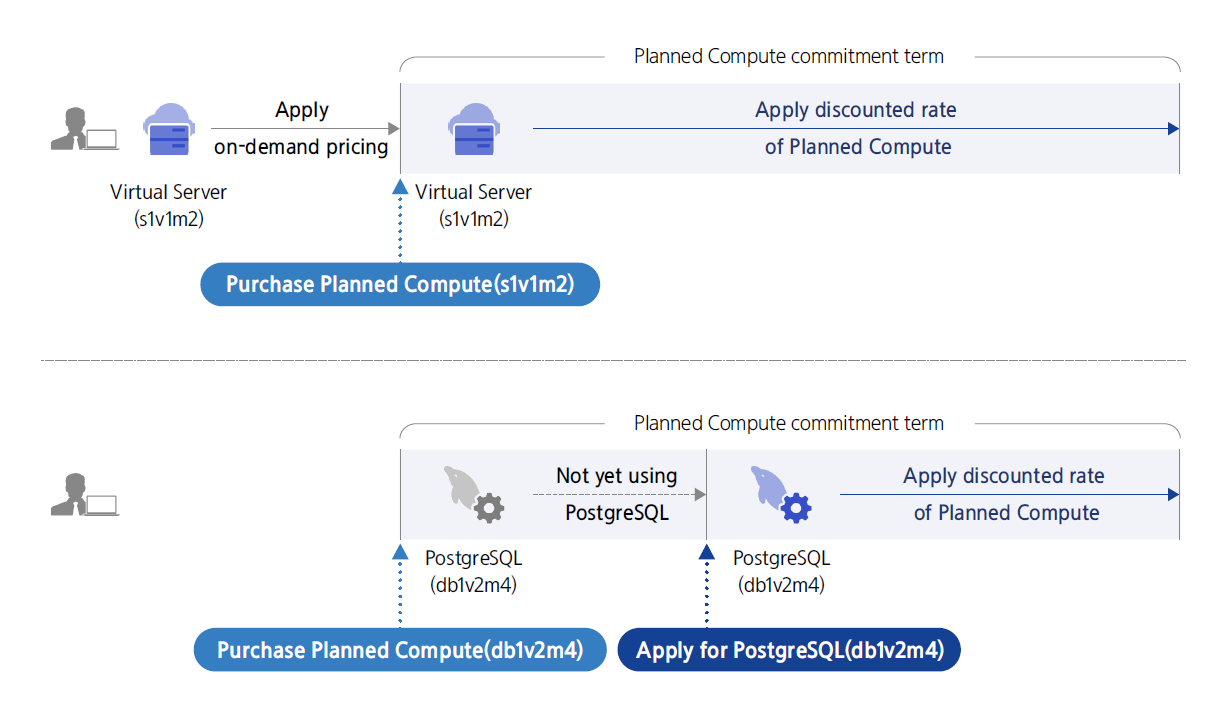

- 11.2: Planned Compute

- 11.2.1: Overview

- 11.2.2: How-to Guides

- 11.2.3: Release Note

- 11.3: Marketplace

- 11.3.1: Overview

- 11.3.2: How-to Guides

- 11.3.3: Release Note

- 12: DevOps Tools

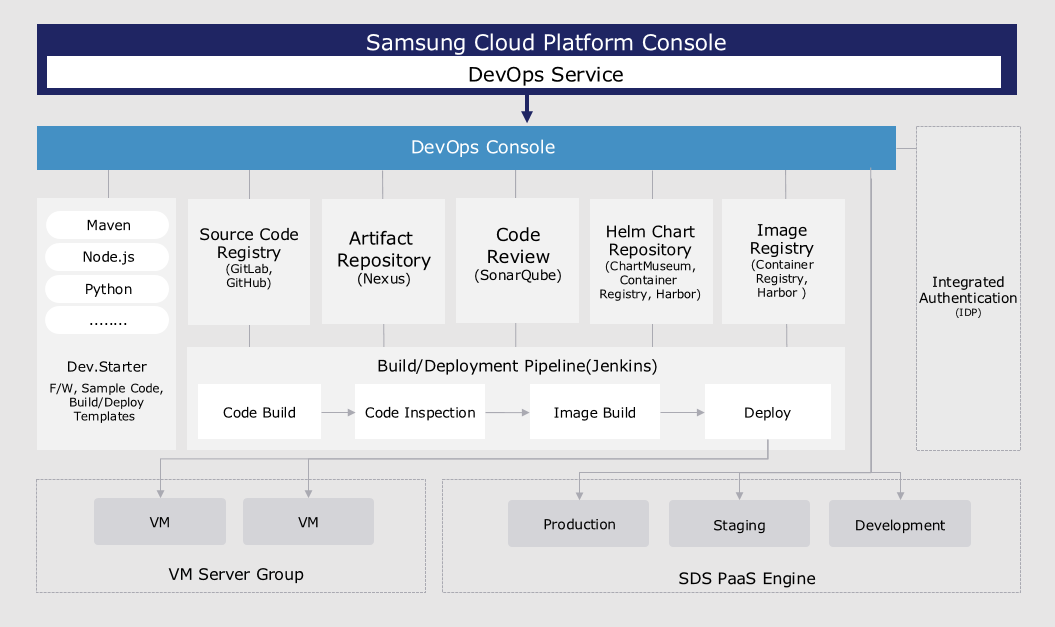

- 12.1: DevOps Service

- 12.1.1: Overview

- 12.1.2: How-to guides

- 12.1.3: API Reference

- 12.1.4: CLI Reference

- 12.1.5: Release Note

- 12.2: DevOps Console

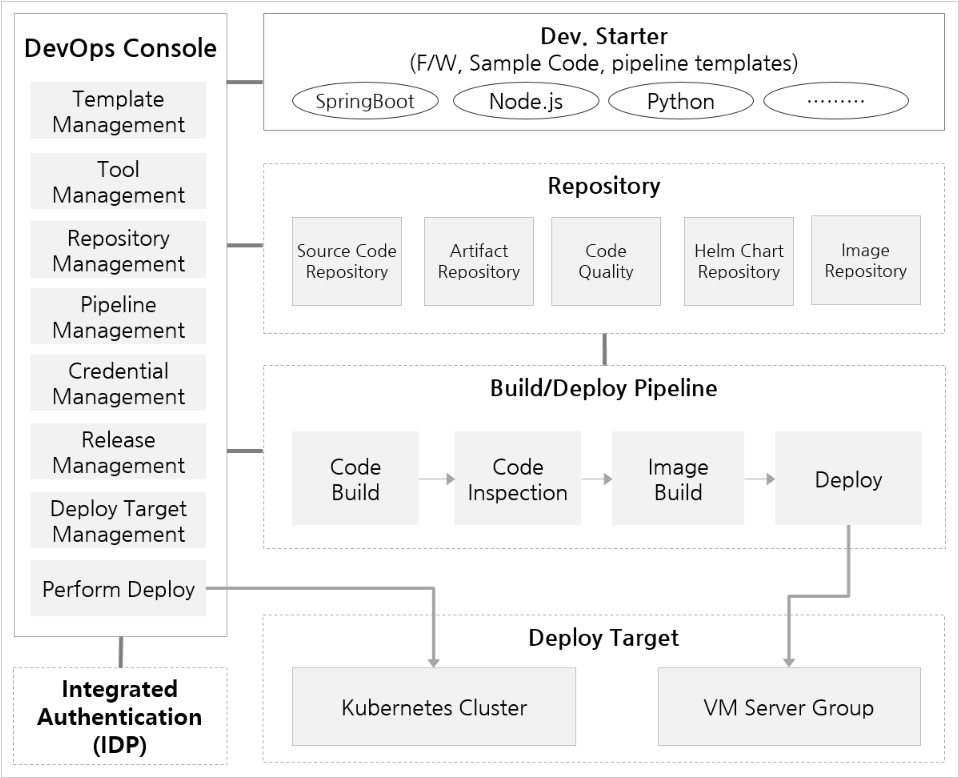

- 12.2.1: Overview

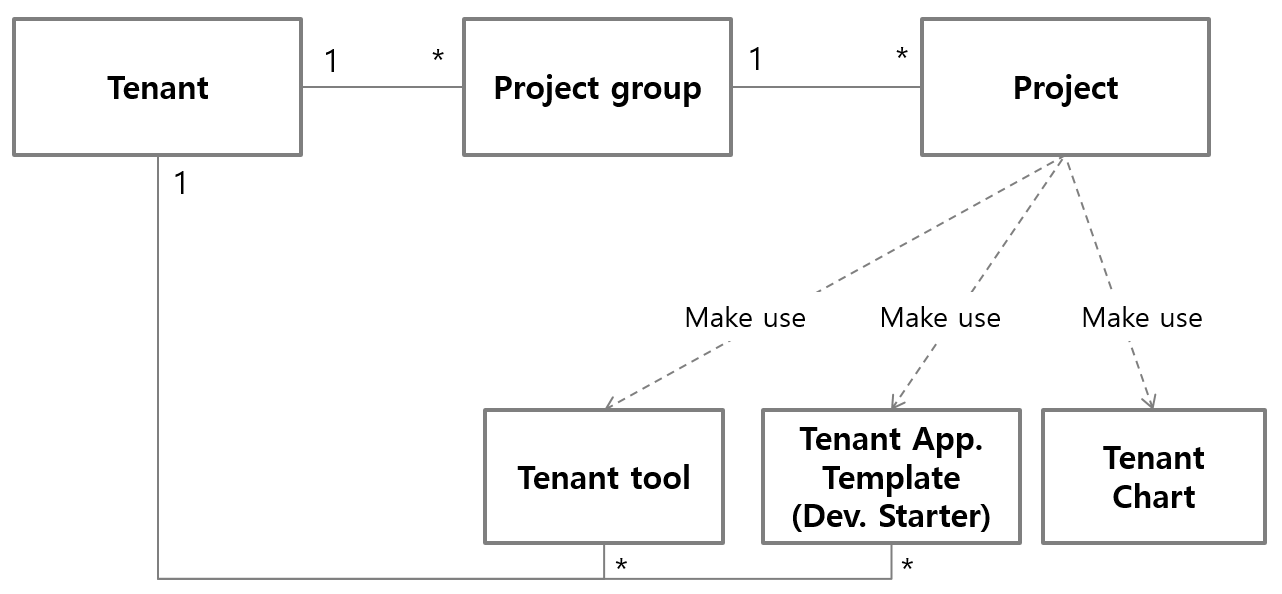

- 12.2.1.1: DevOps Console Introduction

- 12.2.1.2: Roles

- 12.2.1.3: Screen Configuration

- 12.2.2: Getting Started

- 12.2.2.1: Getting Started with DevOps Console

- 12.2.2.1.1: Registration Information

- 12.2.2.2: Tutorial (From Project Creation to Build/Deploy)

- 12.2.2.2.1: Add Build/Deploy (Helm Chart Deployment)

- 12.2.2.2.2: Add Build/Deploy (Workload Deployment)

- 12.2.2.2.3: Add Build/Deploy (VM Deployment)

- 12.2.2.2.4: Check Deployment Target Namespace Permissions (Before Project Creation)

- 12.2.3: Project

- 12.2.3.1: Project Overview

- 12.2.3.2: Create Project

- 12.2.3.2.1: Create Project (Helm Chart Deployment)

- 12.2.3.2.2: Create Project (Workload Deployment)

- 12.2.3.2.3: Create Project (ArgoCD Deployment)

- 12.2.3.2.4: Create Project (VM Deployment)

- 12.2.3.2.5: Create Project (Empty)

- 12.2.3.3: Start Project

- 12.2.3.4: Project Dashboard

- 12.2.3.5: Project Members

- 12.2.4: Build/Deploy

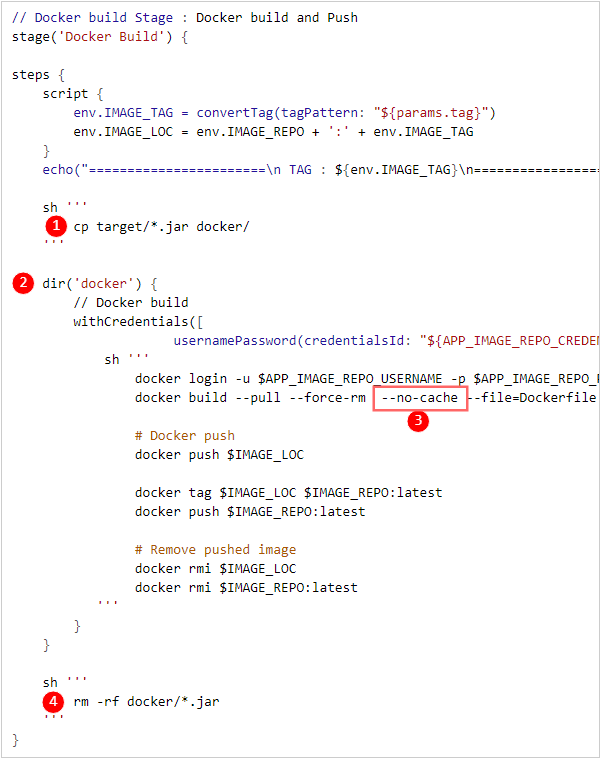

- 12.2.4.1: Build Pipeline

- 12.2.4.1.1: Stage

- 12.2.4.1.2: Multibranch Pipeline

- 12.2.4.2: Kubernetes Deployment

- 12.2.4.2.1: Helm Release

- 12.2.4.2.2: Workload

- 12.2.4.2.3: Blue/Green Deployment

- 12.2.4.2.4: Canary Deployment

- 12.2.4.2.5: Istio

- 12.2.4.2.6: ArgoCD

- 12.2.4.3: VM Deployment

- 12.2.4.4: Helm Install

- 12.2.4.5: Ingress/Service Management

- 12.2.4.6: Kubernetes Secret Management

- 12.2.4.7: Environment Variable Management

- 12.2.5: Project Group

- 12.2.5.1: Project Group Overview

- 12.2.5.2: Create Project Group

- 12.2.5.3: Project Group Dashboard

- 12.2.6: Tenant

- 12.2.6.1: Tenant Management

- 12.2.6.2: Tenant Dashboard

- 12.2.6.3: Tenant Notice

- 12.2.7: Repository

- 12.2.7.1: Code Repository

- 12.2.7.2: Artifact Repository

- 12.2.7.3: Image Repository

- 12.2.7.4: Chart Repository

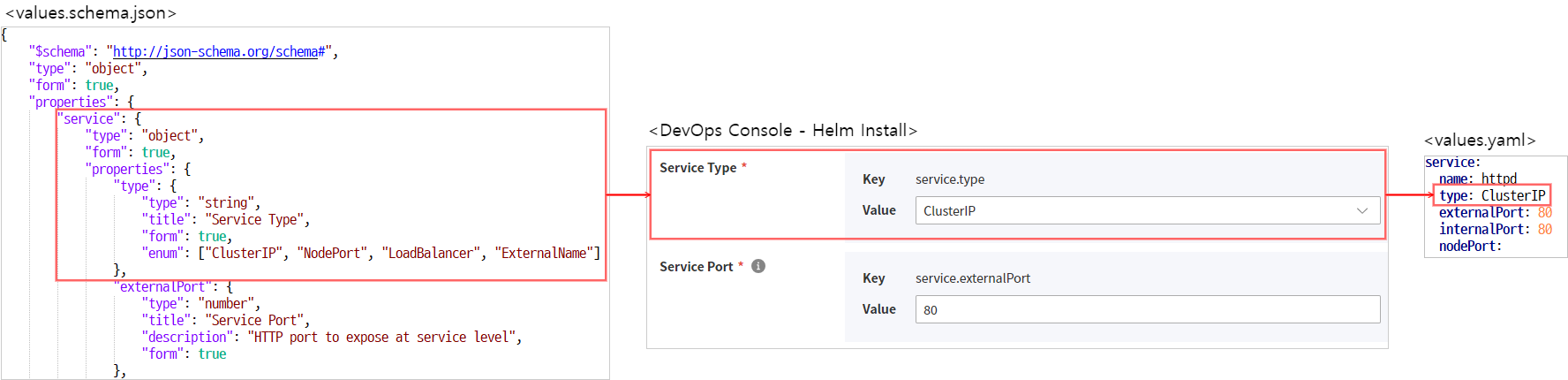

- 12.2.7.5: Helm Chart

- 12.2.7.5.1: Creating Helm Charts with Form Input Support

- 12.2.8: Quality

- 12.2.8.1: Code Quality

- 12.2.9: Tools & Templates

- 12.2.9.1: Tool Management

- 12.2.9.2: App Template

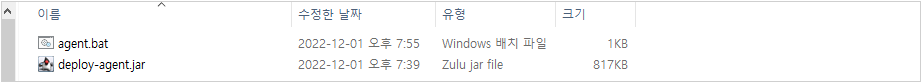

- 12.2.9.3: Register User-installed Jenkins Tool

- 12.2.10: Deployment Target

- 12.2.10.1: K8S Cluster

- 12.2.10.1.1: Verify Cluster Admin Token

- 12.2.10.2: VM Server Group

- 12.2.10.3: Request Permission

- 12.2.11: Release Management

- 12.2.11.1: Release Management

- 12.2.11.2: Workflow Management

- 12.2.11.3: Approval Template Settings

- 12.2.11.4: Task

- 12.2.11.5: JIRA Project

- 12.2.12: Release Note

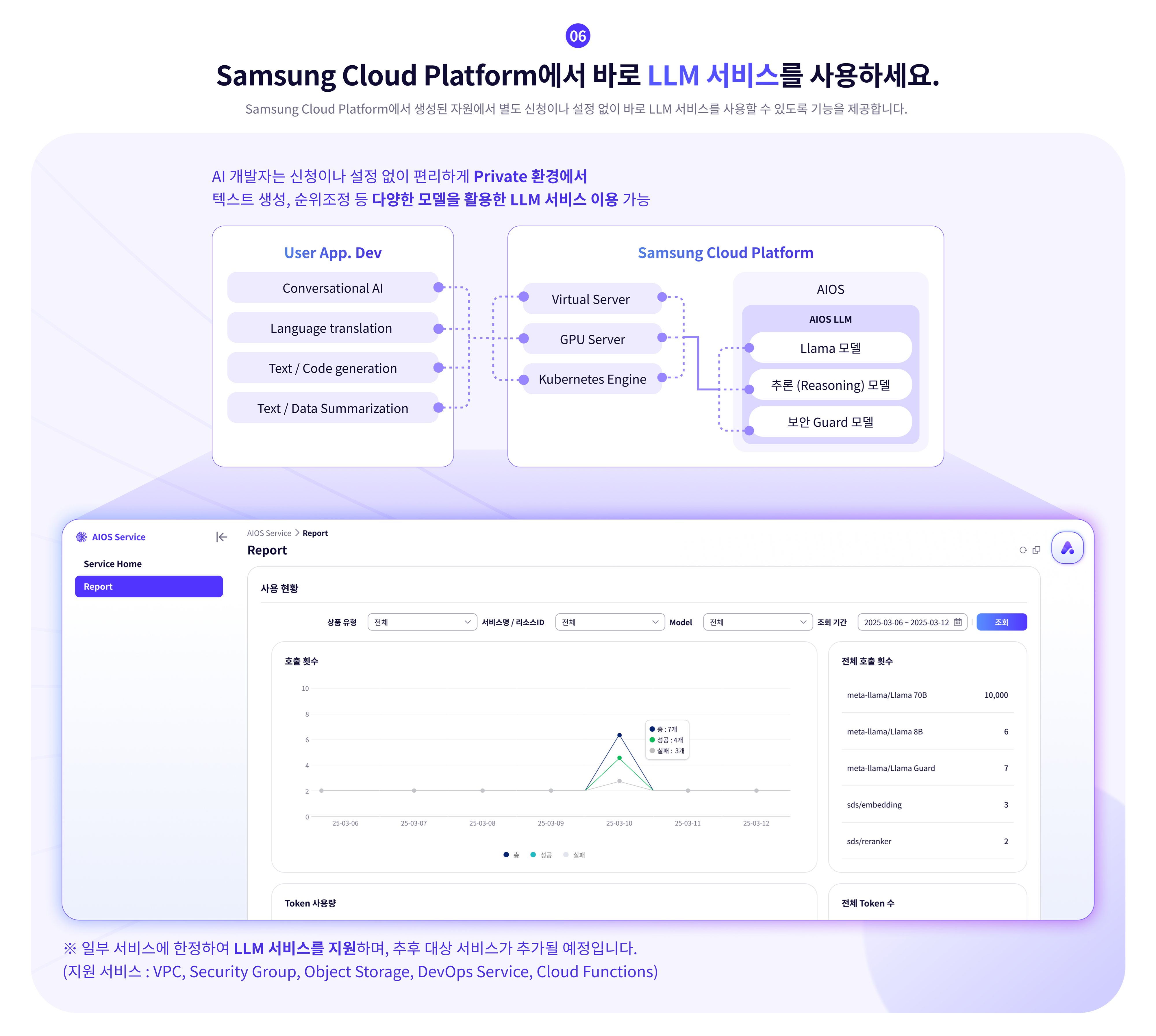

- 13: AI-ML

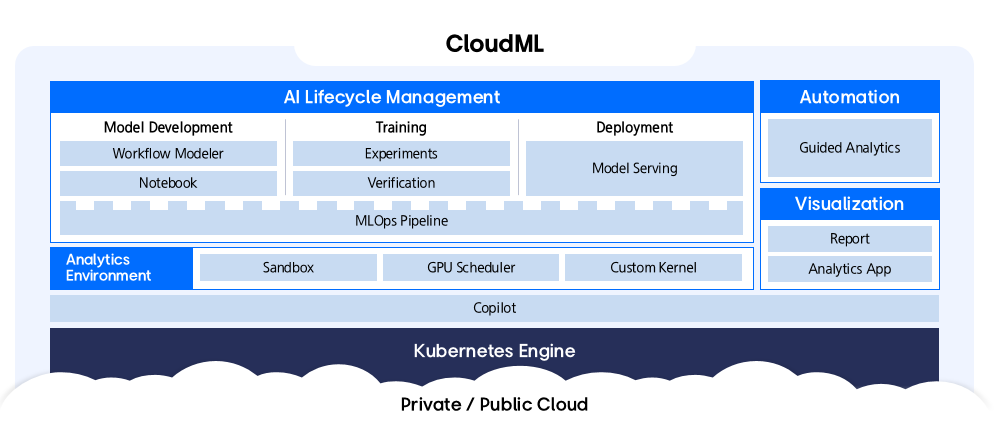

- 13.1: CloudML

- 13.1.1: Overview

- 13.1.2: How-to guides

- 13.1.2.1: Kubernetes Cluster Configuration

- 13.1.3: API Reference

- 13.1.4: CLI Reference

- 13.1.5: Release Note

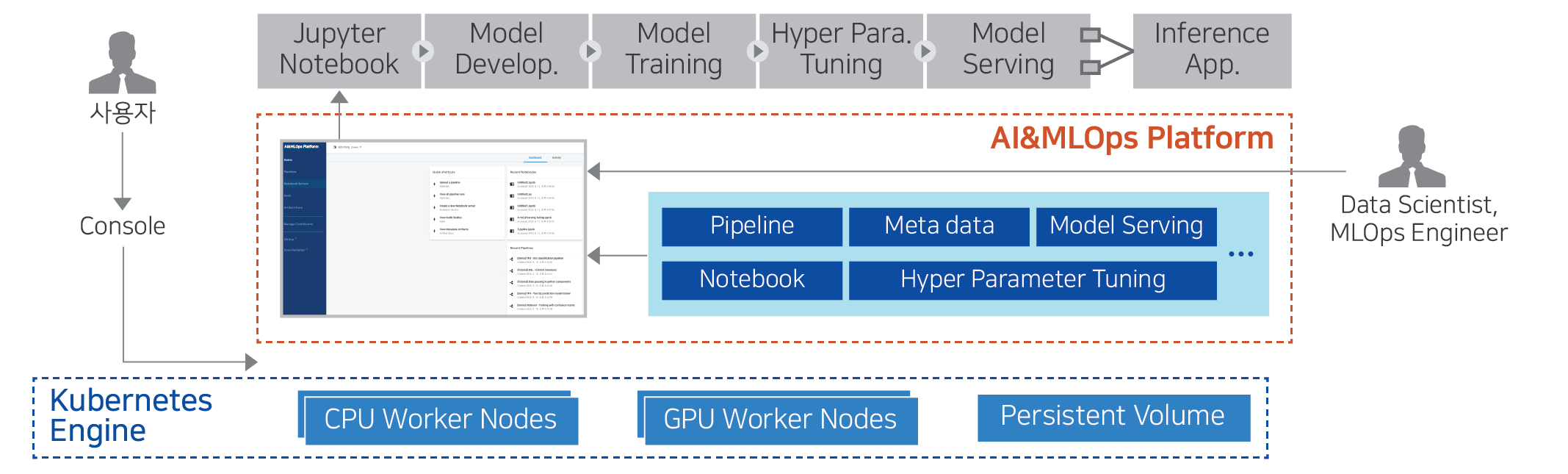

- 13.2: AI&MLOps Platform

- 13.2.1: Overview

- 13.2.2: How-to guides

- 13.2.2.1: Cluster Deployment

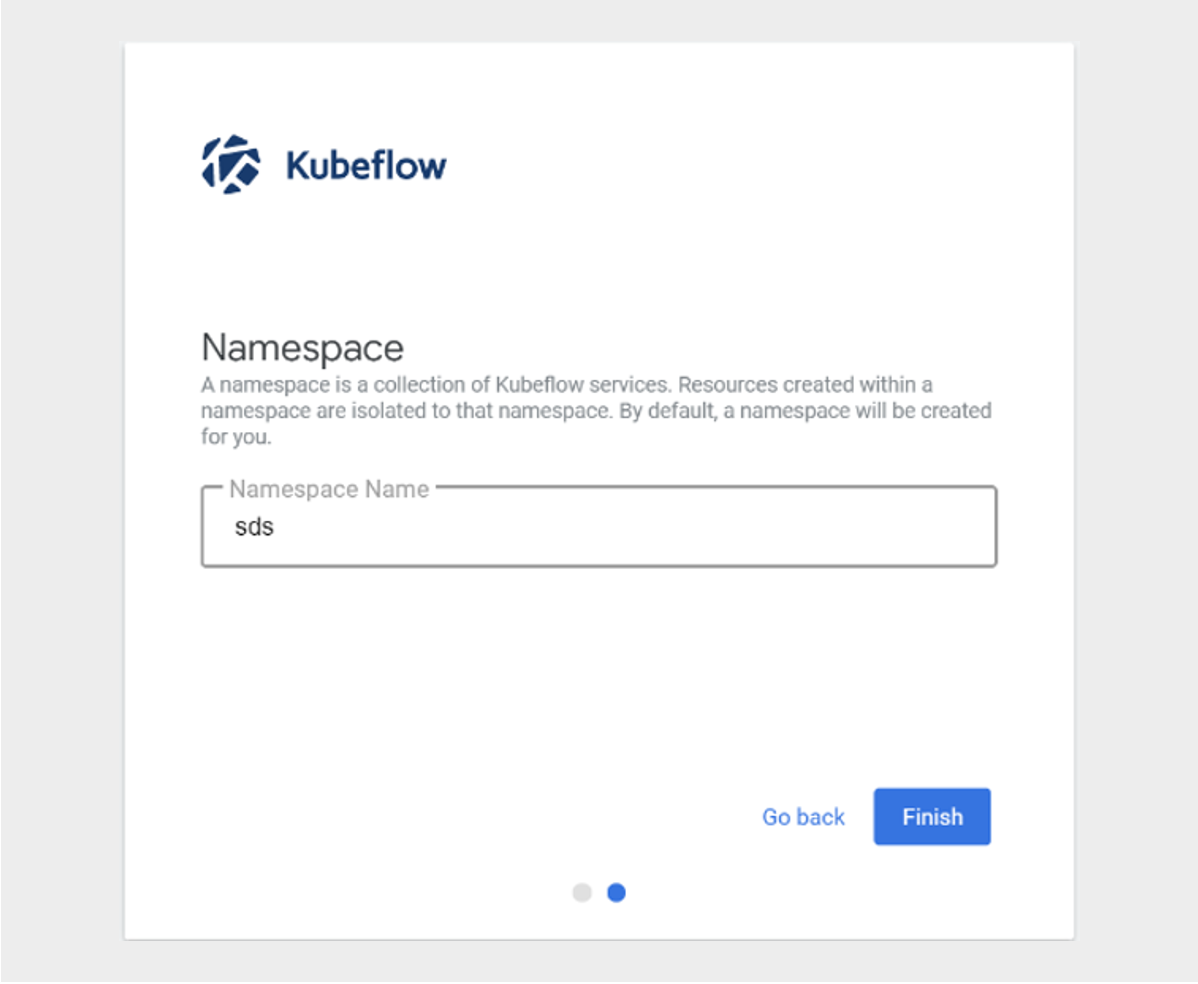

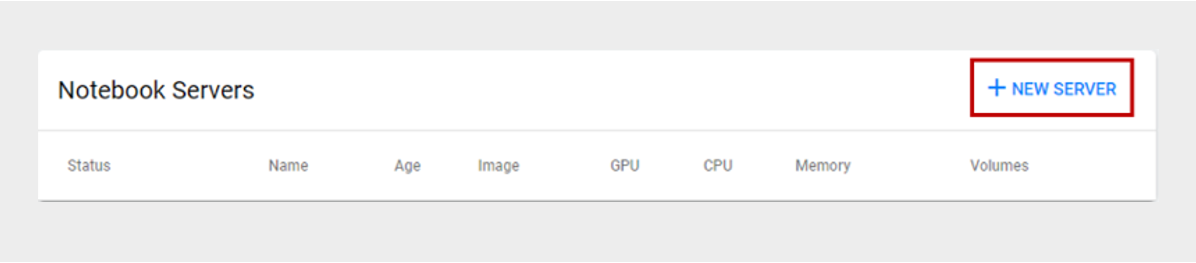

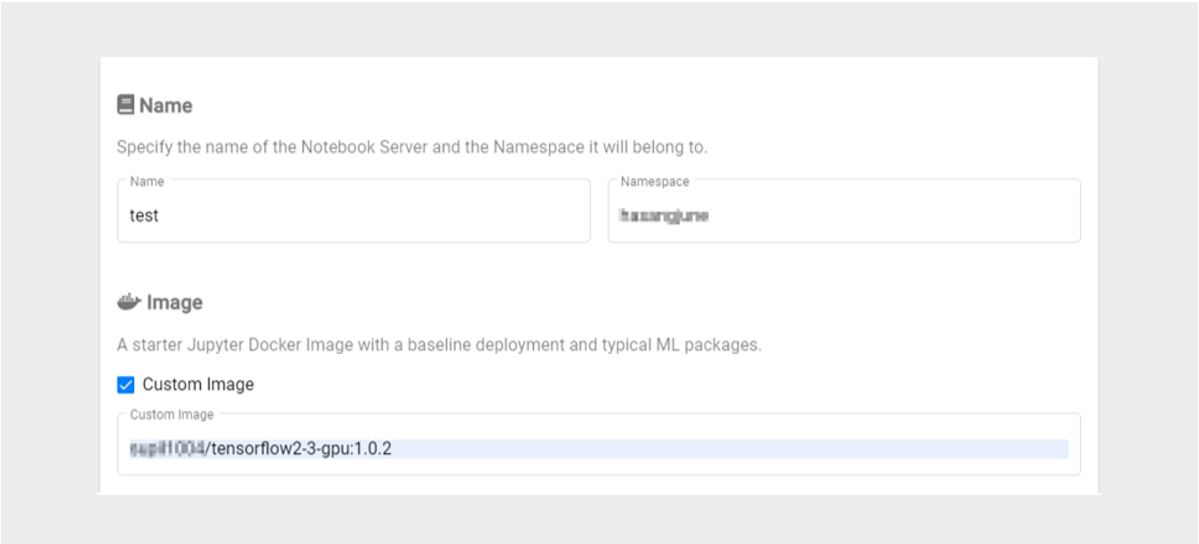

- 13.2.2.2: Kubeflow Usage Guide

- 13.2.3: API Reference

- 13.2.4: CLI Reference

- 13.2.5: Release Note

- 14: Hybrid Cloud

- 14.1: Edge Server

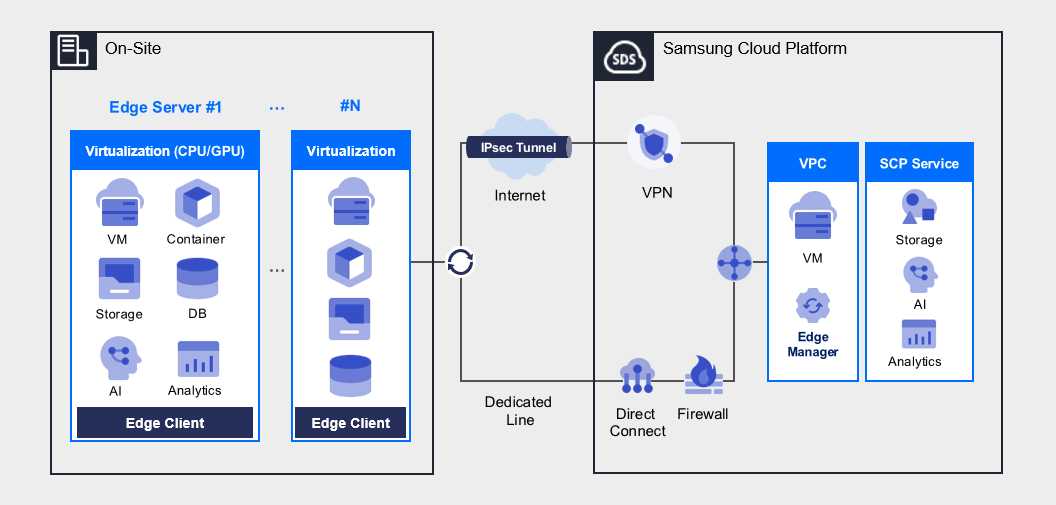

- 14.1.1: Overview

- 14.1.2: How-to guides

- 14.1.3: Release Note

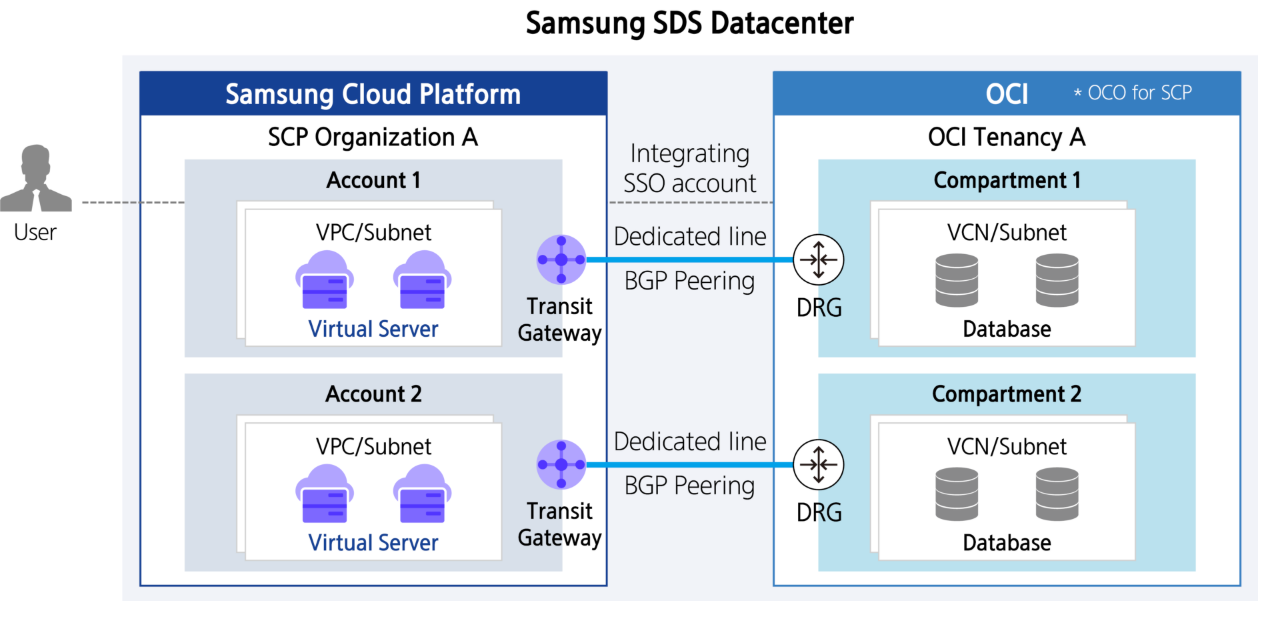

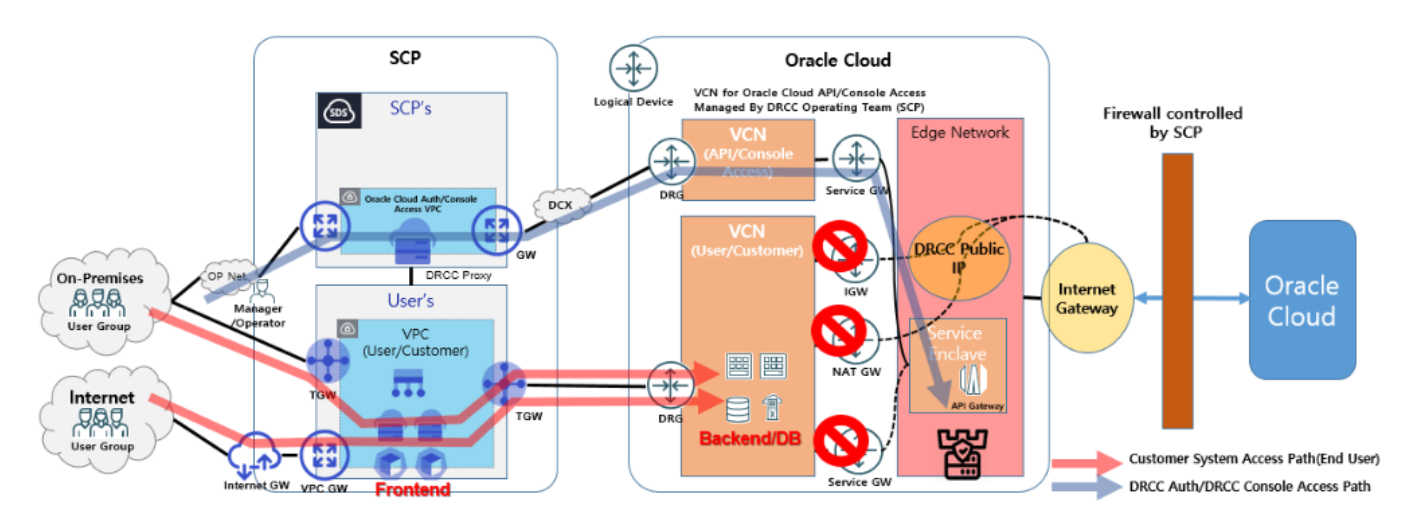

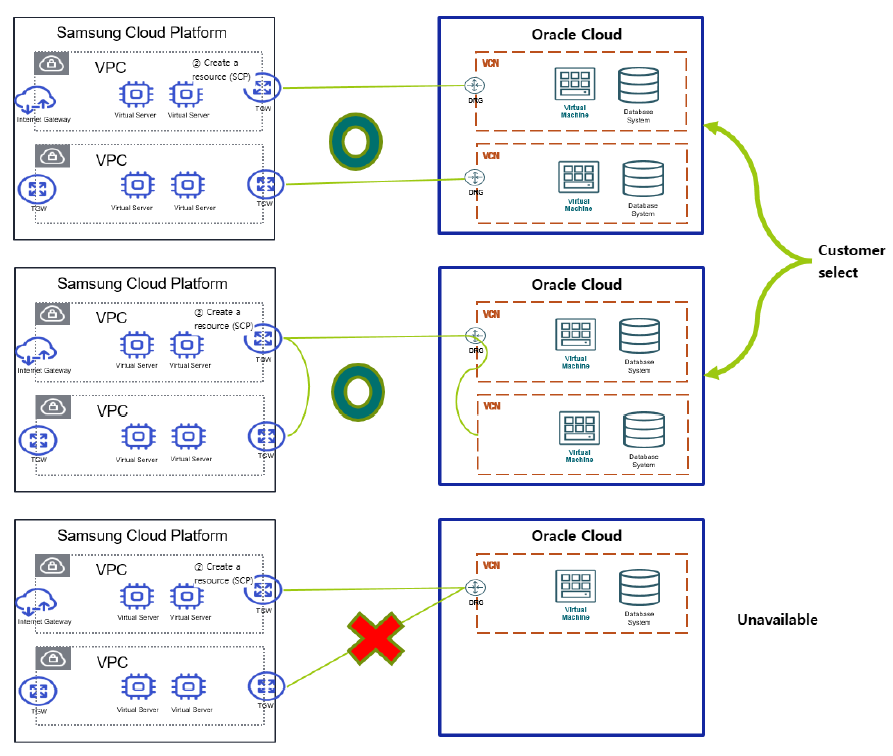

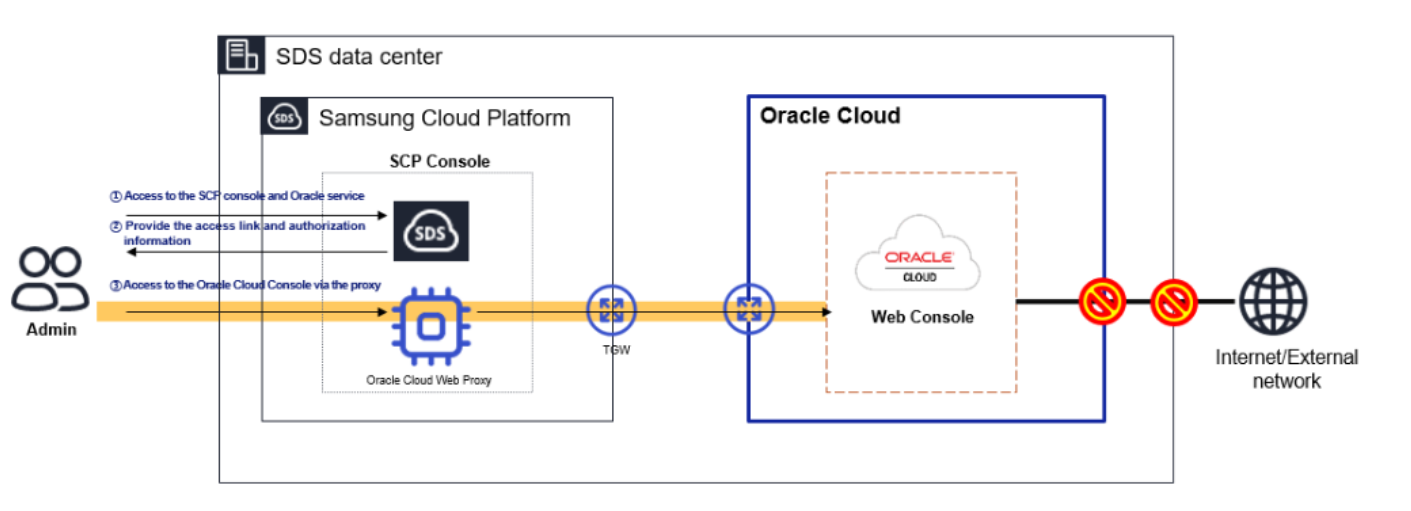

- 14.2: Oracle Services

- 14.2.1: Overview

- 14.2.2: How-to guides

- 14.2.3: Release Note

- 15: Developer Tools

- 15.1: MCP Server

- 15.1.1: Overview

- 15.1.2: How-to guides

- 15.1.2.1: Docs mcp server

- 15.1.3: Release Note

- 16: Release note

- 17: Glossary

- 18:

1 - Samsung Cloud Platform

1.1 - New Features

New Features

Explore the new features of the Samsung Cloud Platform Console.

Resource Management Using Copilot

Information about resources/services

Easy and fast integrated search

Improving accessibility of Account and Favorites

Providing services for large-scale organizations and systematic user management

LLM service that can be used without a separate application

1.2 - Overview

Samsung Cloud Platform

Samsung Cloud Platform is a cloud environment that virtualizes and provides various resource pools such as computing, storage, networking, and databases needed by enterprises.

With Samsung Cloud Platform, you can use the resources you need in a cloud environment without providing your own hardware or physical space. Because charges are based on actual usage, you can manage your budget efficiently and reduce the cost of building and operating your own server environments.

The main terms used in Samsung Cloud Platform are as follows.

| Term | Description |
|---|---|
| Region | A geographically separated unit of cloud service delivery, consisting of one or more data centers to ensure availability |
| Account | The basic unit for using Samsung Cloud Platform services, and the unit of billing |
| Root user | The user who created the Account and holds the highest privileges in it |
| IAM user | A user with restricted permissions, created by the root user within the Account |
| Service | An IT service or infrastructure offering (Compute, Storage, Networking, and so on) provided by Samsung Cloud Platform |
| Resource | An individual entity that a user creates and manages while using a service |

Components

Samsung Cloud Platform provides a Service Portal, a Console, and Documentation.

| Category | Description |
|---|---|
| Service Portal | Provides an overview of Samsung Cloud Platform services and pricing, along with information such as customer support |
| Console | A self-service interface for creating Accounts and resources, checking costs, and performing other tasks on Samsung Cloud Platform |
| Documentation | The Samsung Cloud Platform documentation service, which provides user guides, API/CLI references, and more |

Region

Regions are geographically separated units of cloud service delivery, each consisting of one or more data centers to ensure availability. Samsung Cloud Platform services are managed per region, so the set of available services may differ slightly between regions. For details, refer to the user guide for each service.

| Region name | Region |
|---|---|
| Western Korea | Korea West (kr-west1) |
| Eastern Korea | Korea East (kr-east1) |
| South Korea (administrative) | South Korea South 1 (kr-south1) |
| South Korea (public) | South Korea South 2 (kr-south2) |
| South Korea (Internet) | South Korea South 3 (kr-south3) |
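Because services are managed per region, a script or tool that provisions resources usually has to pick a region code first. The following minimal Python sketch shows one way to check a region code against the codes listed in the region table before sending any request. `SUPPORTED_REGIONS` and `validate_region` are hypothetical names used only for illustration; they are not part of any Samsung Cloud Platform SDK or CLI.

```python
# Hypothetical client-side helper (not part of any Samsung Cloud Platform SDK).
# The region codes are copied from the region table in this guide; the list
# can change over time, so confirm the current regions in the Console.
SUPPORTED_REGIONS: frozenset[str] = frozenset(
    {"kr-west1", "kr-east1", "kr-south1", "kr-south2", "kr-south3"}
)


def validate_region(code: str) -> str:
    """Normalize a region code and reject codes not in SUPPORTED_REGIONS."""
    normalized = code.strip().lower()
    if normalized not in SUPPORTED_REGIONS:
        supported = ", ".join(sorted(SUPPORTED_REGIONS))
        raise ValueError(f"unknown region {code!r}; expected one of: {supported}")
    return normalized


if __name__ == "__main__":
    print(validate_region(" KR-West1 "))  # kr-west1
```

Hard-coding the list keeps the sketch self-contained; treat it as a snapshot rather than an authoritative source, since per-region service availability is documented in each service's user guide.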

Account

To use the Samsung Cloud Platform, you need to create an Account, which can be done through sign‑up. A user who signs up and creates an Account becomes the Account’s root user and is responsible for payment. Once you sign up and register a payment method, you can create resources.

The root user can create users in IAM and add them to user groups. Users created in this way are IAM users.

The typical flow from a user creating an account to setting up a cloud environment and performing tasks is as follows.

- When a user signs up, an Account is created and the user becomes its root user.

- If a root user registers a payment method, they can create resources on the Samsung Cloud Platform.

- The root user creates IAM users and adds them to user groups so they can perform tasks according to their respective permissions.

- IAM users request the required services as needed to carry out their tasks.
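The flow above can be summarized as a small state model. The Python sketch below is purely illustrative: `Account`, `IAMUser`, `sign_up`, and the method names are hypothetical and do not correspond to any Samsung Cloud Platform API. It encodes only the rules stated in this section (signing up creates an Account whose signer is the root user, resources require a registered payment method, and the root user creates IAM users and adds them to user groups). Restricting IAM-user creation to the root user is a simplifying assumption of the sketch.

```python
from dataclasses import dataclass, field


@dataclass
class IAMUser:
    """A user with limited permissions, created inside an Account."""
    name: str
    groups: set[str] = field(default_factory=set)


@dataclass
class Account:
    """Hypothetical model of an Account, the unit of use and billing."""
    name: str
    root_user: str  # the user who signed up; holds the highest privileges
    payment_method_registered: bool = False
    iam_users: dict[str, IAMUser] = field(default_factory=dict)
    resources: list[str] = field(default_factory=list)

    def register_payment_method(self) -> None:
        self.payment_method_registered = True

    def create_iam_user(self, actor: str, name: str, group: str) -> IAMUser:
        # Simplifying assumption: only the root user creates IAM users here.
        if actor != self.root_user:
            raise PermissionError("only the root user may create IAM users in this sketch")
        user = self.iam_users.setdefault(name, IAMUser(name))
        user.groups.add(group)  # add the IAM user to a user group
        return user

    def create_resource(self, resource: str) -> None:
        # Resources can be created only after a payment method is registered.
        if not self.payment_method_registered:
            raise RuntimeError("register a payment method before creating resources")
        self.resources.append(resource)


def sign_up(email: str, account_name: str) -> Account:
    """Signing up creates an Account whose root user is the signer."""
    return Account(name=account_name, root_user=email)


if __name__ == "__main__":
    account = sign_up("owner@example.com", "my-account")
    account.register_payment_method()
    dev = account.create_iam_user("owner@example.com", "dev1", "developers")
    account.create_resource("virtual-server-1")
    print(dev.groups, account.resources)  # {'developers'} ['virtual-server-1']
```

The ordering constraints (payment method before resources, root user before IAM users) mirror the steps listed above; actual permission checks for IAM users are governed by IAM policies and are not modeled here.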

The responsibilities of each role are as follows.

| Role | Responsibilities |
|---|---|
| Root user | The user with the highest privileges in the Account |
| IAM user | A user with limited permissions within the Account |

1.3 - Getting Started with Console

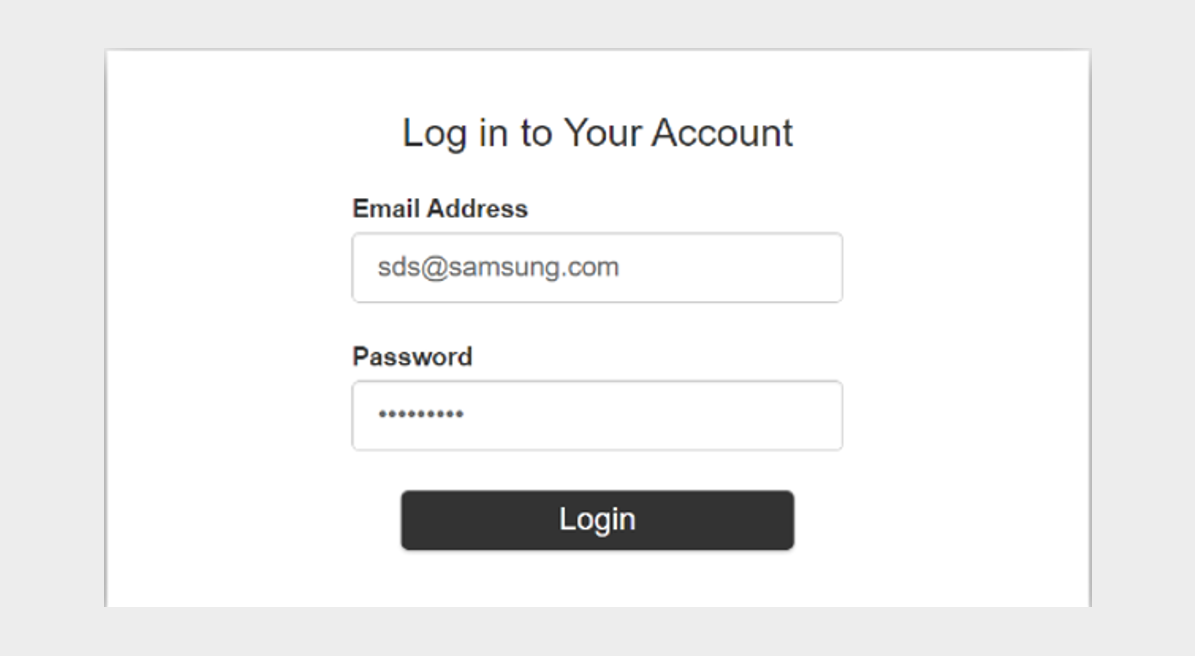

1.3.1 - Log In

To use the Samsung Cloud Platform Console, you need an Account, which you create by signing up. The user who signs up and creates an Account becomes the Account's root user and is responsible for the Account's payments. After signing up and registering a payment method, you can create resources. For details, see Register a payment method.

Sign Up

To create an Account in the Samsung Cloud Platform Console, follow these steps.

- On the login page, select the user type Root User and click the Sign Up button. The Sign Up page opens.

- On the Sign Up page, complete Identity Verification. When identity verification is complete, click the Next button.

| Item | Required | Description |
|---|---|---|
| Prevent automatic input | Required | Enter the characters displayed in the image into the input field |
| Email | Required | Email to be used as the subscriber ID. Enter the email address and click the Duplicate Check and Verify buttons |

Table. Personal authentication information

- In Enter Registration Information, select the region and agree to the terms.

| Item | Required | Description |
|---|---|---|
| Region | Required | Select the subscriber region |
| Agree to the Terms of Service | Required | Check to agree to the service terms |
| Consent to collection and use of personal information | Required | Check to consent to the collection and use of personal information |
| Consent to overseas transfer of personal data | Required | Check to consent to the overseas transfer of personal data |
| Are you at least 14 years old? | Required | Check to confirm that the user is 14 years of age or older |
| Consent to collection and use of personal information | Optional | Check to consent to the optional collection and use of personal information |

Table. Sign-up information input

- In Member Information Input, enter the required fields.

| Item | Required | Description |
|---|---|---|
| ID (email) | Required | Email to be used as the subscriber ID. Displays the email used for identity verification |
| Username | Required | Subscriber name. Up to 60 characters using Korean, English letters, numbers, and spaces |
| Account name | Required | Name of the subscriber's Account. Up to 60 characters using Korean, English letters, numbers, and spaces |
| Password | Required | Password of 9 to 20 characters. Must include at least one uppercase English letter, one lowercase English letter, one digit, and one special character (!@#$%&*^). The ID cannot be used as the password. The same character cannot appear three or more times in a row. Easily guessed passwords are not allowed. Sequences of four or more consecutive letters or digits are not allowed. The password must be changed every 90 days |
| Confirm Password | Required | Re-enter the password |
| Mobile phone number | Required | Enter the mobile phone number and click the Verify button to receive a verification code. Enter the code received on the mobile phone and click the Confirm button. If the code is valid, identity verification is complete |
| Notification language | Required | Language for notifications, such as email and SMS, sent by Samsung Cloud Platform. After logging in, you can change it in Notification popover > Notification Settings |

Table. Member information input

- After entering all the information, click the Complete button. A verification email is sent to the entered email address.

- Click the Verify button in the received email to complete registration.
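The password rules in the member information table lend themselves to a client-side pre-check before you submit the form. The following Python sketch is illustrative only and is not the Console's validation logic: `check_password` is a hypothetical helper, it assumes "consecutive letters or digits" means ascending runs such as abcd or 1234 (compared case-insensitively), and it does not cover the "easily guessed" rule or the 90-day change interval.

```python
SPECIAL_CHARS = set("!@#$%&*^")  # special characters listed in the password rule


def _has_sequential_run(password: str, length: int = 4) -> bool:
    """Return True if the password has `length` ascending letters or digits
    in a row, such as 'abcd' or '1234' (assumed interpretation of the rule)."""
    lowered = password.lower()
    run = 1
    for prev, cur in zip(lowered, lowered[1:]):
        both_digits = prev.isdigit() and cur.isdigit()
        both_letters = (prev.isascii() and prev.isalpha()
                        and cur.isascii() and cur.isalpha())
        if (both_digits or both_letters) and ord(cur) == ord(prev) + 1:
            run += 1
            if run >= length:
                return True
        else:
            run = 1
    return False


def check_password(password: str, user_id: str) -> list[str]:
    """Return the rules the password violates; an empty list means it passes
    these checks. Hypothetical helper, not a Samsung Cloud Platform API."""
    problems = []
    if not 9 <= len(password) <= 20:
        problems.append("length must be 9 to 20 characters")
    if not any(c.isascii() and c.isupper() for c in password):
        problems.append("needs an uppercase English letter")
    if not any(c.isascii() and c.islower() for c in password):
        problems.append("needs a lowercase English letter")
    if not any(c.isdigit() for c in password):
        problems.append("needs a digit")
    if not any(c in SPECIAL_CHARS for c in password):
        problems.append("needs a special character from !@#$%&*^")
    if password == user_id:
        problems.append("must not be the same as the ID")
    # Same character three or more times in a row, e.g. '!!!'
    if any(a == b == c for a, b, c in zip(password, password[1:], password[2:])):
        problems.append("same character three or more times in a row")
    if _has_sequential_run(password):
        problems.append("four or more sequential letters or digits")
    return problems
```

For example, `check_password("Xy!1234abQ", "user@example.com")` reports the sequential run `1234`. Passing this pre-check does not guarantee the Console will accept the password.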

Log in

In the Samsung Cloud Platform Console, there are two types of users: Root users and IAM users. A Root user is the account creator who performs tasks that require unlimited access permissions. An IAM user is a user within an account who performs daily tasks, and a Root user can create IAM users. For detailed information about user creation, see IAM.

Log in as root user

To log in to the Samsung Cloud Platform Console as the Root user, follow these steps.

- On the login page, select the user type Root User, enter the ID (email), and click the Next button.

- The Root User Login page opens. Enter the password.

- Select the method to send the verification code, and click the Send Verification Code button.

- Enter the received verification code and click the Login button.

- If login succeeds, you will be redirected to the Console Home page.

- Please enter the password and verification code correctly. If you enter the password or verification code incorrectly five or more times, your account will be locked for security.

- If the account is locked, provide the locked account information to the user.

- If an allowed IP is configured, login from an unauthorized IP is not possible. Click the Access Allowed IP Not Set item to lift the IP restriction.

- If you logged in after removing the allowed IP setting, go to the Management > IAM > My info. page and configure the allowed IP again for security. For details, see Manage Access IP.
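The lockout rule above (five or more incorrect entries lock the account) can be modeled as a simple counter. The following Python sketch is a hypothetical illustration, not the platform's implementation. `LoginAttemptTracker` is an illustrative name, and the sketch assumes two things this guide does not state: that a wrong password and a wrong verification code count toward the same limit, and that a successful login resets the count.

```python
from dataclasses import dataclass

# From the guide: five or more incorrect entries lock the account.
MAX_FAILED_ATTEMPTS = 5


@dataclass
class LoginAttemptTracker:
    """Hypothetical model of the lockout rule; not a Samsung Cloud Platform API."""

    failed_attempts: int = 0
    locked: bool = False

    def record(self, success: bool) -> None:
        if self.locked:
            return  # once locked, further attempts change nothing in this model
        if success:
            # Assumption: a successful login resets the failure count.
            self.failed_attempts = 0
        else:
            # Assumption: wrong passwords and wrong verification codes both count.
            self.failed_attempts += 1
            if self.failed_attempts >= MAX_FAILED_ATTEMPTS:
                self.locked = True
```

Unlocking a locked account is outside the scope of this sketch.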

IAM user login

To log in to the Samsung Cloud Platform Console as an IAM user, follow these steps.

- On the login page, select the user type as IAM user, enter the Account information, and then click the Next button.

- The IAM User Login page opens. Enter the IAM username and password.

- Select the authentication method and click the Next button.

- Enter the received verification code and click the Next button.

- If login succeeds, you will be redirected to the Console Home page.

- If an IAM user forgets their password, they can click the Find Password button to reset it.

- In the Password Recovery popup window, use your login information (Account information, IAM username) and the pre‑registered email account to reset your password, then log in again.

- Please enter the password and verification code correctly. If you enter the password or verification code incorrectly five or more times, your account will be locked for security.

- If the account is locked, provide the locked account information to the user or administrator.

- If an allowed IP is configured, login from an unauthorized IP is not possible. Click the Access Allowed IP Not Set item to lift the IP restriction.

- If you logged in after removing the allowed IP setting, go to the Management > IAM > My info. page and configure the allowed IP again for security. For details, see Manage Access IP.

Switch User

After logging into the Samsung Cloud Platform Console, you can switch to a Root user or an IAM user.

To switch users, follow the steps below.

- On the Console Home page, click the User Switch button to the right of the Account name. The User Switch popup opens.

- From the list of usernames for each Account, click the user you want to switch to. A popup confirming the user switch opens.

- After verifying the user name, click the Confirm button. The user switch completes and you are redirected to the Console Home page.

Changing the console language

You can set the language to be used in the Samsung Cloud Platform Console.

Change on the login page

- To change the language displayed in the Samsung Cloud Platform Console, click the user language in the upper right of the login page, select the desired language, and then log in.

Change on the Console page

- If you are logged into the Samsung Cloud Platform Console, you can change the language by clicking the language selector at the bottom of the page.

Edit User Information

You can change user information such as username, password, and mobile phone number.

To edit user information, click My menu > My Info. at the top right of the Console.

1.3.2 - Console

When you first log in to the Samsung Cloud Platform Console, you are taken to the Console page.

Console

On the Console page, you can view the Console Home page and configure its widgets, and view the list of all Samsung Cloud Platform services. Console Home and All Services in the Console's left menu provide the following functions.

| Provided feature | Description |
|---|---|
| Console Home | Provides key Samsung Cloud Platform information and shortcut widgets for services |
| All services | Lists all Samsung Cloud Platform service categories and services in the Console, with links to each service |

Console Home

When you first log in to the Samsung Cloud Platform Console, you are taken to the Console page, and on the Console page, the Console Home page is displayed by default.

Console Home consists of widgets, which you can change by clicking the Dashboard Settings button in the upper right corner.

| Provided feature | Description |
|---|---|
| Welcome | Greeting and introduction to Samsung Cloud Platform |
| Recent Visit Service | List of recently visited services |
| Copilot | Introduction to Copilot or list of conversations with Copilot |
| Architecture Diagram | Resource information in diagram form, showing the relationships between resources at a glance |
| Notice | Samsung Cloud Platform Console announcements |
| Register payment method | Register a payment method to use all resources of the Samsung Cloud Platform Console |
| Support Center | Technical support, standard architecture, incident response, and service inquiries/answers needed when using the Samsung Cloud Platform Console |
| This month’s usage and estimated amount | This month's estimated amount and the billed amount |
| User access trend | Daily user access count for the past week, including duplicate accesses |
| Service Status | Service status by service category |
| This month’s carbon emissions | Carbon emissions generated by your use of services on the Samsung Cloud Platform Console |

Configure the Dashboard

- On the Console page, click Console Home in the left menu. You will be taken to the Console Home page.

- When you first log in, the default page is the Console > Console Home screen.

- On the Console Home page, click the Dashboard Settings button at the top right. The Dashboard Settings popup opens.

- In Widget Settings of the Dashboard Settings popup, modify the widgets displayed on Console Home and their order.

- All widgets except the Welcome widget can be configured by the user.

- Select a widget's checkbox to add it to the dashboard; clear the checkbox to remove it.

- To change the order, click and hold the hamburger button next to the widget name and drag it up or down.

- In Widget Settings, selecting Select All selects all widgets; clearing Select All deselects all widgets except Welcome.

- Review the widget changes in the Preview of the Dashboard Settings popup, then click the Save button.

- The preview on the right shows the configured widgets.

- Check the changed settings on the Console Home page.
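The widget rules above (every widget except Welcome is optional, Select All toggles everything but Welcome, and widgets can be reordered by dragging) can be summarized as list operations. The following Python sketch is a hypothetical model for illustration only; the function names are assumptions and do not correspond to any Console API.

```python
# The Welcome widget cannot be removed from Console Home (per the guide).
ALWAYS_SHOWN = "Welcome"


def apply_select_all(all_widgets: list[str], select_all: bool) -> list[str]:
    """Select All adds every widget; clearing it keeps only Welcome."""
    return list(all_widgets) if select_all else [ALWAYS_SHOWN]


def toggle_widget(selected: list[str], widget: str, checked: bool) -> list[str]:
    """Add or remove one widget via its checkbox; Welcome is never removed."""
    if widget == ALWAYS_SHOWN:
        return list(selected)
    if checked and widget not in selected:
        return selected + [widget]
    if not checked:
        return [w for w in selected if w != widget]
    return list(selected)


def move_widget(selected: list[str], widget: str, new_index: int) -> list[str]:
    """Model dragging a widget by its hamburger button to a new position."""
    order = [w for w in selected if w != widget]
    order.insert(new_index, widget)
    return order
```

Each function returns a new list rather than mutating its input, which mirrors how the popup only applies changes when you click Save.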

All services

You can view the service categories and list of services offered by Samsung Cloud Platform at a glance, and navigate to the respective service.

1.3.3 - Integrated Management

Integrated management refers to the set of functions located at the top of the Samsung Cloud Platform Console.

Integrated Management Feature

In integrated management, you can search the service list and view recently visited services, converse with Copilot, and view notifications received in the Samsung Cloud Platform Console. You can also view the current Samsung Cloud Platform region and the regions you can switch to, and check user and Account information in My menu.

| Category | Description |
|---|---|
| Service | Search for Samsung Cloud Platform services and go to a service |
| Copilot | Copilot provided by Samsung Cloud Platform |
| Unified Search | Search Samsung Cloud Platform services, internal Console documents, Marketplace, and more |
| Notification | Check notifications received in the Samsung Cloud Platform Console |
| Support | Go to Support Center, Documentation, and Announcements |
| Region | View the current Samsung Cloud Platform region and the list of regions you can switch to |
| My menu | Check user and Account information |

Service

You can search for services provided by the Samsung Cloud Platform by keyword, service category, recent visits, favorites, etc., and navigate to the service.

| Category | Description |
|---|---|
| Search term lookup | Search services by keyword |
| Recent visit | List of recently visited services |
| Bookmark | List of bookmarked (favorite) services |
| All services | List of all services, sorted in ascending order |

Add to Favorites

You can add services to your favorites.

- Click the Service button at the top of the Console. The Service popup opens.

- In the Service popup, find the service you want to add in All Services or Recent Visits, then click the star icon to the left of the service name.

- Check that the star icon has turned yellow.

- In Service > Favorites, you can view the services added to your favorites.

- Favorite services are also displayed at the top of the Console.

Check Favorites

You can view the services added to your favorites and navigate to the corresponding service.

- Click the Service button at the top of the Console. The Service popup opens.

- In the Service popup, click Bookmark to view the services added to your favorites.

- In Service > Favorites, click the name of the service you want to go to. The service page opens.

Remove from favorites

You can remove services added to your favorites.

- Click the Service button at the top of the Console. The Service popup opens.

- In the Service popup, click Bookmark to view the services added to your favorites.

- Clear the star icon to the left of the name of the service you want to remove.

- You can clear the star icon to the left of a service name anywhere in the Service popup, such as in All Services.

- In Service > Favorites, check that the service has been removed.

- The service is also removed from the top of the Console.

Copilot

Copilot is a generative AI-based conversational assistant that helps you understand, build, scale, and operate Samsung Cloud Platform. In the Samsung Cloud Platform Console, click the Copilot icon to start a conversation with Copilot.

For more details, refer to Copilot. Below are a few example questions you can ask Copilot.

Example question

- How can I check the estimated charges?

- How do I create a Virtual Server?

- Please show the list of Virtual Server resources in my Account.

- Find the 192.168.21.1 IP among the Virtual Servers.

- How do I add a user to a user group?

- Download cost details

- What is the estimated bill amount for this month?

Unified Search

You can easily find services and documents provided by Samsung Cloud Platform using the unified search.

To find the desired service or document using the unified search, follow these steps.

- Enter the keyword you want to search for in the unified search box. The search results popup opens.

- As you type, the keyword is auto-completed and a popup with the search results for the completed keyword opens.

- Click the View All button in a search results category to display all results in that category.

| Category | How to enter the keyword |
|---|---|
| Service, documents within the Console, Marketplace | Enter a keyword of at least two characters |
| Resource name, Resource ID | Enter / followed by the resource name or resource ID. The resource name or resource ID must be at least two characters |

Table. How to enter keywords for each search item

- After entering a keyword, click Ask Copilot to look up the keyword with Copilot.

- For details about Copilot, see Copilot Overview.

- In the search results popup, click the item you want to view. The corresponding page opens.

The unified search covers the following:

- Service and resource names, and resource IDs in Samsung Cloud Platform

- User guide documents: How-to guides, API reference, CLI reference

- Knowledge Center

- Marketplace
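The keyword rules in the table above can be sketched as a small parser that decides whether an input is a resource lookup (prefixed with /) or a general keyword, and enforces the two-character minimum. This Python sketch is hypothetical: `parse_search_input` and the scope labels "resource" and "general" are illustrative names, and ignoring surrounding whitespace is an assumption.

```python
from typing import NamedTuple

MIN_KEYWORD_LENGTH = 2  # from the guide: keywords must be at least two characters


class SearchQuery(NamedTuple):
    scope: str  # hypothetical labels: "resource" or "general"
    term: str


def parse_search_input(text: str) -> SearchQuery:
    """Classify unified-search input and enforce the minimum keyword length."""
    text = text.strip()  # assumption: surrounding whitespace is ignored
    if text.startswith("/"):
        # "/" followed by a resource name or resource ID searches resources
        scope, term = "resource", text[1:]
    else:
        # Services, documents within the Console, and Marketplace
        scope, term = "general", text
    if len(term) < MIN_KEYWORD_LENGTH:
        raise ValueError(
            f"{scope} keyword must be at least {MIN_KEYWORD_LENGTH} characters"
        )
    return SearchQuery(scope, term)
```

Note that the minimum length applies to the text after the slash, so `/a` is rejected even though the whole input is two characters long.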

Notification

You can view notifications received in the Samsung Cloud Platform Console and navigate to the notification settings.

| Category | Description |
|---|---|
| Notification List | The latest 10 notifications received in the Samsung Cloud Platform Console |
| Notification Settings | Click the Notification Settings button to go to Notification Settings in the Notification popup |

Check notification details

- Click the Notification button at the top right of the Console to view the latest 10 notifications received in the Console.

- Select a notification from the list. The notification detail popup opens.

| Category | Description |
|---|---|
| Resource name | Name of the resource that triggered the notification |
| Notification type | Type of notification: Notice (notifications about announcements), Service (notifications per service), Cost Management (notifications about Cost Management), IAM (notifications about IAM), Notification Manager (notifications about Notification Manager), Support (notifications about Support, such as inquiries and service requests) |
| Creator | Creator of the notification |
| Notification creation date and time | Date and time the notification was created |

Table. Notification detail information items

Check notification settings

- Click the Notification button at the top right of the Console to view the latest 10 notifications received in the Console.

- Click the Notification Settings button next to Notification. The Notification > Notification Settings popup opens.

- In the Notification > Notification Settings popup, view the current notification settings.

| Category | Description |
|---|---|
| Notification language | Language in which notifications are received |
| Notification Target > Notification Type | Notification types for each notification target: Announcements (notifications about announcements), Service (notifications per service), Cost Management (notifications about Cost Management), IAM (notifications about IAM), Notification Manager (notifications about Notification Manager), Support (notifications about Support, such as inquiries and service requests) |

Table. Notification Settings items

Modify Notification Settings

- Click the Notification button at the top right of the Console to view the latest 10 notifications received in the Console.

- Click the Notification Settings button next to Notification. The Notification > Notification Settings popup opens.

- In the Notification > Notification Settings popup, view the current notification settings.

- In the Notification > Notification Settings popup, click the Edit button to edit the notification settings.

| Category | Description |
|---|---|
| Notification language | Language in which notifications are received |
| Notification Target > Notification Type | Select the notification type (email, SMS) to receive for each notification target. The default is email. Targets: Announcements (notifications about announcements), Service (notifications per service), Cost Management (notifications about Cost Management), IAM (notifications about IAM), Notification Manager (notifications about Notification Manager), Support (notifications about Support, such as inquiries and service requests) |

Table. Notification Settings edit items
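Per-target channel selection with an email default maps naturally onto a small data structure. The following Python sketch is a hypothetical model of the Edit view described above, for illustration only. The class and method names are assumptions, and because this guide does not say whether a target may have no channel selected, the sketch does not restrict that case.

```python
from dataclasses import dataclass, field

# Notification targets and channels as listed in the Notification Settings table.
NOTIFICATION_TARGETS = (
    "Announcements",
    "Service",
    "Cost Management",
    "IAM",
    "Notification Manager",
    "Support",
)
CHANNELS = frozenset({"email", "SMS"})


def default_channels() -> dict[str, set[str]]:
    """Every target starts with email only, matching the documented default."""
    return {target: {"email"} for target in NOTIFICATION_TARGETS}


@dataclass
class NotificationSettings:
    """Hypothetical model of the settings popup; not a Console API."""

    language: str
    channels: dict[str, set[str]] = field(default_factory=default_channels)

    def set_channels(self, target: str, selected: set[str]) -> None:
        """Choose which channels (email, SMS) deliver one target's notifications."""
        if target not in NOTIFICATION_TARGETS:
            raise KeyError(f"unknown notification target: {target}")
        unknown = set(selected) - CHANNELS
        if unknown:
            raise ValueError(f"unsupported channels: {sorted(unknown)}")
        self.channels[target] = set(selected)
```

For example, `NotificationSettings(language="English").set_channels("Cost Management", {"email", "SMS"})` adds SMS for cost notifications while every other target keeps the email default.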

Support

Support provides access to Support Center, Documentation, and Announcements.

- Support Center provides the technical support, standard architecture, incident response, and service inquiries/answers needed when using Samsung Cloud Platform. For details, see Support Center.

- Documentation is the user guide, which clearly and simply explains the concepts of each service, how to use the Console, and ways to use the services. For details, see the User Guide.

- Announcements are notices provided to users in the Samsung Cloud Platform Console.

Region

You can view the region of the Samsung Cloud Platform and check the list of regions that can be changed.

The services offered by Samsung Cloud Platform are managed on a per‑region basis, so the services provided may vary slightly. A region is a geographically distinct unit for delivering cloud services, composed of one or more data centers to ensure availability. For some services such as the Samsung Cloud Platform Console or IAM, you do not need to select a region because they are global services.

| Region name | Region |
|---|---|
| Global | - |
| Western Korea | Korea West (kr-west1) |
| Eastern Korea | Korea East (kr-east1) |
| South Korea (administrative) | South Korea 1 (kr-south1) |
| South Korea (public) | South Korea 2 (kr-south2) |
| South Korea (Internet) | South Korea South 3 (kr-south3) |

My menu

Click the profile button at the top right of the Console to open My menu. It shows the Account ID and the IAM username or Root username, and lets you go to Account, My Info., and Cost Management.

| Provided feature | Description |
|---|---|
| Account ID | Account ID logged in to the Samsung Cloud Platform Console |
| IAM username or Root username | IAM username or Root username logged in to the Samsung Cloud Platform Console |
| Time zone | Time zone configured by the user |
| Account | Account information |
| My Info. | User basic information and authentication key management |
| Cost Management | View usage and billing details, payment history, and cost analysis, and manage Credit, budget, Account, and payment methods |
| Role switching | Switch to another role |
| Logout | Log out of the Samsung Cloud Platform Console |

1.4 - Copilot

Copilot Overview

Copilot, a generative AI-based assistant service, makes Samsung Cloud Platform more convenient to use. Through a familiar interface and natural language, Copilot helps users work accurately and efficiently.

You can start a conversation with Copilot by clicking the Copilot button in the Samsung Cloud Platform Console.

- Clearly state the reason for requesting the work and the final objective to ensure an accurate response.

- To solve complex problems, start by requesting small and simple requirements step by step.

- Use the terminology of Samsung Cloud Platform.

- Do not enter sensitive work-related information; it cannot be used.

- Answers generated by generative AI may contain inaccurate information, so review them before use.

- For general questions about Samsung Cloud Platform, select General Query; for questions about the resources you are using, select View Resource.

Using Copilot

You can use Copilot via the Copilot button at the top of the Samsung Cloud Platform Console and the Copilot button at the bottom right.

You can also use Copilot via the Copilot widget on the dashboard of the Console Home page, which is the first login screen of the Samsung Cloud Platform Console.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- Review the Copilot popup. Copilot provides the following features.

| Category | Description |
|---|---|
| Ask a question > General query | General questions about using Samsung Cloud Platform. Example questions: "How do I create a Virtual Server?", "How can I check the estimated charges?", "What is the estimated bill for this month?" For details, see General query |
| Ask a question > View Resource | Questions about resources used in Samsung Cloud Platform. Example questions: "Show the list of Virtual Server resources in my Account.", "Find the 192.168.21.1 IP among the Virtual Servers." For details, see Query Resource |
| Start a new conversation | Start a new conversation. For details, see Start a new conversation |
| Open in new window | Display the Copilot popup in a new tab. For details, see View in a new window |
| Conversation List | Check the list of previous conversations. For details, see Check conversation list |
| Recommended Prompt | List of questions recommended by Copilot. For details, see Check recommended prompts |
| Add tag information | Add tag information to a specific resource or project. For details, see Add tag information |

Table. Copilot-provided features

- When you drag to select text in the Samsung Cloud Platform Console, the Copilot button appears. Click it to search for the text with Copilot.

- On the creation page of each service, clicking the info button next to an item shows a detailed guide from Copilot.

- You can enable or disable the Copilot Recommendation feature in My menu > My Info.

General query

Through Copilot, you can ask general questions about the features and usage of the Samsung Cloud Platform.

To make a general query to Copilot, follow the steps below.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- In the bottom prompt of the Copilot popup, select General Query and ask a general question about Samsung Cloud Platform.

- Example questions

- Which services must be created in advance before creating a Virtual Server?

- What network settings are required to create a Virtual Server?

- Clearly state the reason for the request and the final objective to get an accurate response.

- To solve complex problems, start with small, simple requests and proceed step by step.

- Use the terminology of Samsung Cloud Platform.

- Do not enter sensitive work-related information; it cannot be used.

- Answers generated by generative AI may contain inaccurate information, so review them before use.

- For general questions about Samsung Cloud Platform, select General Query; for questions about the resources you are using, select View Resource.

Query Resource

You can ask questions about resources created in Samsung Cloud Platform through Copilot.

To ask Copilot questions about resources, follow these steps.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- In the bottom prompt of the Copilot popup, select View Resource and ask about your Samsung Cloud Platform resources.

- Example questions

- Please show the list of Virtual Servers.

- Show Load Balancers in the Active state.

- Clearly state the reason for the request and the final objective to get an accurate response.

- To solve complex problems, start with small, simple requests and proceed step by step.

- Use the terminology of Samsung Cloud Platform.

- Do not enter sensitive work-related information; it cannot be used.

- Answers generated by generative AI may contain inaccurate information, so review them before use.

- For general questions about Samsung Cloud Platform, select General Query; for questions about the resources you are using, select View Resource.

Start a new conversation

You can start a new conversation with Copilot.

To start a new conversation with Copilot, follow these steps.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- Click the Start new conversation button in the top right corner of the Copilot popup. A new conversation starts.

Check conversation list

You can view the list of conversations you had with Copilot.

To view the list of conversations with Copilot, follow these steps.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- Click the Conversation List button in the top-left corner of the Copilot popup. The Conversation List expands.

- In the Conversation List, click the conversation you want to view.

Check recommended prompts

You can view the recommended prompt questions provided by Copilot.

To view the Copilot recommended prompt questions, follow these steps.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- Click the Recommended Prompt button on the left side of the bottom prompt in the Copilot popup. The Recommended Prompt popup opens.

- In the Recommended Prompt popup, view the questions relevant to your work.

| Category | Recommended questions |
|---|---|
| Product | Are there any prerequisite services that must be set up before creating a Virtual Server? What constraints differ between Virtual Server and Bare Metal Server? Are there any precautions to take when using a Bare Metal Server? |
| Fee | What is the contract rate policy? What time period is used as the basis for charge calculation? How can I check the estimated charges? |
| Member | How do I register for an IAM account? How can I recover my password if I forget it? How do I change user information and password? |
| Etc. | How should the network configuration and firewall be set up to access a Samsung Cloud Platform Virtual Machine from an overseas local internal network? If I find errors or have suggestions for improvement while using the service, how should I contact support? |

Table. Recommended Prompt question items

Add tag information

You can add tag information to a specific resource or project.

To add tag information, follow these steps.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- In the bottom prompt of the Copilot popup, enter a command to add tags, along with the name of the resource or project to tag.

- Example: Add a tag value to Virtual Server Project1

- In the Copilot popup, enter the tag information to save and click the Save button.

| Category | Required | Description |
|---|---|---|
| Service name | Required | Service of the resource to tag |
| Resource name | Required | Name of the resource or project to tag |
| Tag value | Required | Click the Add Tag button, then enter or select the Key and Value. The Key is required. Up to 10 tags can be added |

Table. Tag storage items

- When the popup confirming that the tags were saved opens, click the Confirm button.
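Before asking Copilot to save tags, you can pre-check the Key/Value pairs against the limits in the table above. This Python sketch is a hypothetical helper, not part of Copilot or any Samsung Cloud Platform SDK. It assumes that an empty Value is allowed (the guide only states that the Key is required) and does not check for duplicate keys, since the guide does not mention a rule for them.

```python
MAX_TAGS = 10  # from the guide: up to 10 tags can be added


def validate_tags(tags: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Check Key/Value pairs against the documented limits (hypothetical helper)."""
    if len(tags) > MAX_TAGS:
        raise ValueError(f"at most {MAX_TAGS} tags can be added, got {len(tags)}")
    for key, _value in tags:
        if not key.strip():
            # The Key is required; assumption: an empty Value is allowed.
            raise ValueError("each tag needs a Key")
    return tags
```

Keeping tags as a list of pairs, rather than a dict, preserves the order in which they were entered and avoids silently merging repeated keys.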

View in a new window

You can view and use Copilot in a new browser tab.

To use Copilot in a new tab, follow these steps.

- Click the Copilot button at the top of the Console. The Copilot popup opens.

- Click the View new window button at the upper right of the Copilot popup. Copilot opens in a new browser tab.

- Click the Copilot tab in the browser to go to the Copilot page.

- Use the desired features on the Copilot page.

| Category | Description |
|---|---|
| Ask a question > General query | General questions about using Samsung Cloud Platform |
| Ask a question > View Resource | Questions about resources used in Samsung Cloud Platform |
| Start a new conversation | Start a new conversation |
| Conversation List | Check the list of previous conversations |
| Recommended Prompt List | List of questions recommended by Copilot |

2 - Compute

Backed by Korea's top-level reliability, Compute conveniently and elastically provides computing resources optimized for each use case.

2.1 - Virtual Server

2.1.1 - Overview

Service Overview

Virtual Server is a virtual server optimized for cloud computing. It lets you allocate as many resources as you need, when you need them, without purchasing infrastructure resources such as CPU and memory individually. In a cloud environment, you can use resources with performance optimized for your computing purpose, such as development, testing, and application execution.

Features

Easy and convenient computing environment setup: Through a web-based Console, users can easily perform self-service provisioning of Virtual Servers, as well as resource and cost management. If you need to change the capacity of major resources such as CPU or Memory while using a Virtual Server, you can easily scale up or down without operator intervention.

Various service types: Provides virtualized vCore/Memory resources according to predefined server types (1 to 128 vCores).

- General Virtual Server: Provides commonly used computing specifications (up to 16 vCores and 256 GB of memory).

- High-capacity Virtual Server: Provided when resources larger than the standard Virtual Server specifications are needed.

Strong security: By using the Security Group service, you can control inbound/outbound traffic communicating with the external Internet or other VPCs (Virtual Private Clouds) to protect the server. Additionally, real-time monitoring enables stable operation of computing resources.

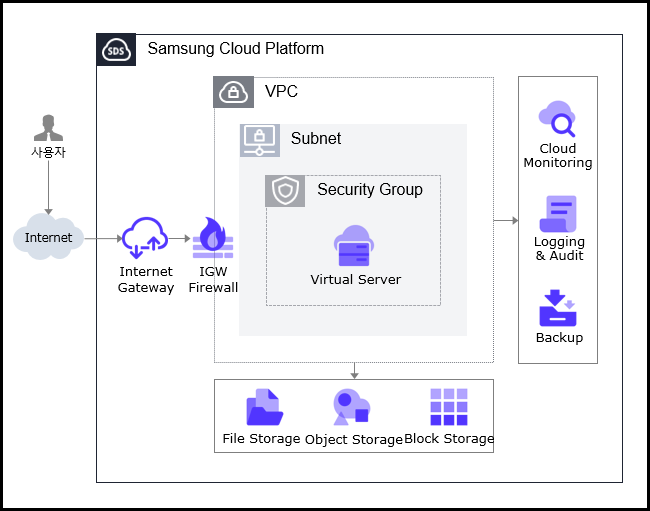

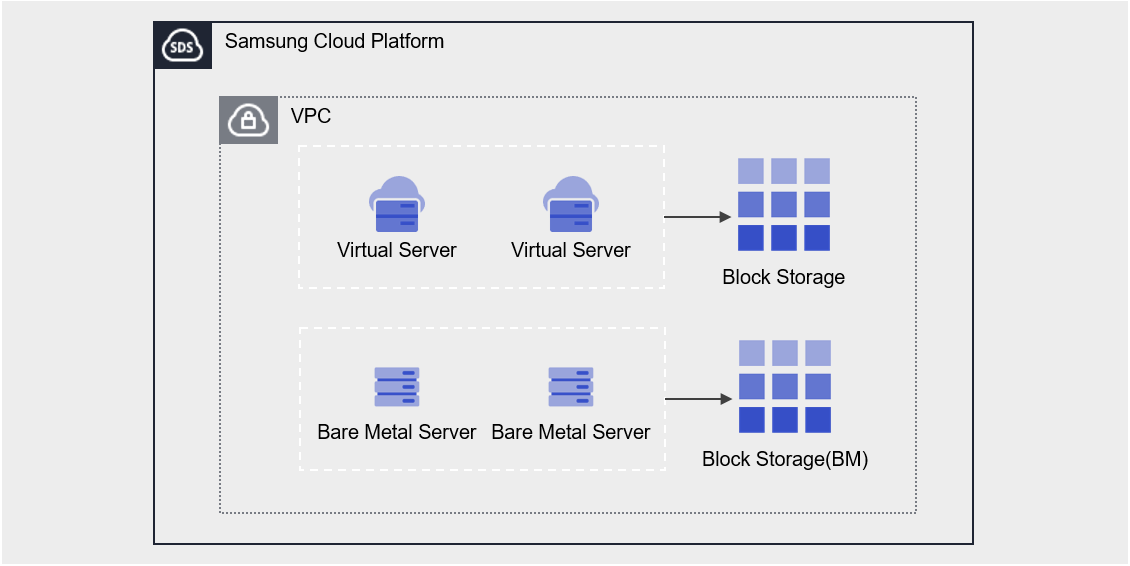

Service Architecture Diagram

Provided features

Virtual Server provides the following features.

- Auto Provisioning and Management: Provides Virtual Server provisioning, resource management, and cost management functions through a web-based Console. If you need to change the capacity of major resources such as CPU or Memory while using Virtual Server, you can modify the server type immediately using the server type modification feature.

- Standard Server Types and Image Provision: Provides virtualized vCore/Memory resources according to standard server types, and offers standard OS images.

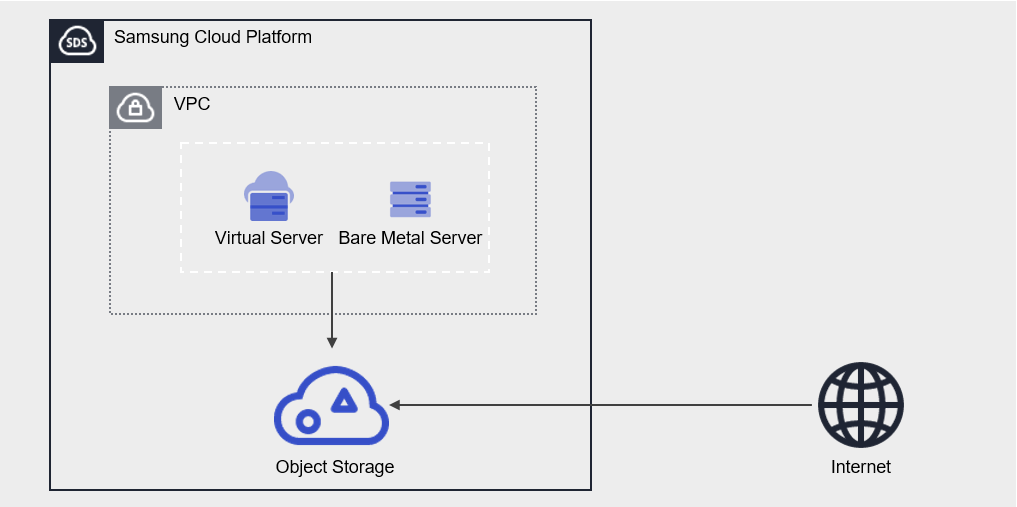

- Storage Connection: Provides additional attached storage beyond the OS disk. Block Storage, File Storage, and Object Storage can be attached and used.

- Network Connection: You can configure the standard subnet/IP settings of the Virtual Server and connect a Public NAT IP. A local subnet connection is also provided for inter-server communication. These settings can be modified on the detail page.

- Security Group Application: Use the Security Group service to control inbound and outbound traffic communicating with external internet or other VPCs, thereby securely protecting the server.

- Monitoring: You can view monitoring information for computing resources such as CPU, Memory, and Disk through the Cloud Monitoring service.

- Backup and Recovery: You can back up and restore the Virtual Server Image using the Backup service.

- Cost Management: You can create, stop, or terminate servers as needed, and since billing is based on actual usage time, you can monitor costs according to consumption.

- ServiceWatch Service Integration: You can monitor data using the ServiceWatch service.
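Because billing is based on actual usage time, a rough cost estimate is the total running time multiplied by an hourly rate. The following is a minimal sketch under stated assumptions: `HOURLY_RATE` is a hypothetical placeholder, and the code assumes usage is prorated to the second. Actual Samsung Cloud Platform prices and billing granularity depend on the server type and are not given here.

```python
from datetime import datetime

# Hypothetical hourly rate for illustration only; real prices differ by server type and region.
HOURLY_RATE = 0.10


def billed_hours(intervals: list[tuple[datetime, datetime]]) -> float:
    """Sum the hours a server was running across (start, stop) intervals."""
    total_seconds = sum((stop - start).total_seconds() for start, stop in intervals)
    return total_seconds / 3600


def estimated_cost(intervals: list[tuple[datetime, datetime]], hourly_rate: float = HOURLY_RATE) -> float:
    """Estimate cost assuming per-second proration of a flat hourly rate (an assumption)."""
    return billed_hours(intervals) * hourly_rate


# A server that ran 3 hours on one day and 1.5 hours on the next; stopped time is not counted.
runs = [
    (datetime(2025, 1, 1, 9, 0), datetime(2025, 1, 1, 12, 0)),
    (datetime(2025, 1, 2, 9, 0), datetime(2025, 1, 2, 10, 30)),
]
print(billed_hours(runs), estimated_cost(runs))
```

Stopping a server when it is not needed shortens the intervals, which is what makes usage-based billing easy to control.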

Component

Virtual Server provides standard server types and standard OS images. Users can select and use them according to the desired service scale.

Image

You can create and manage images. The main features are as follows.

- Image creation: You can create an Image from the configuration of a Virtual Server you are using, and you can also create an Image by uploading your Image file to Object Storage.

- Create Shared Image: You can turn an Image whose Visibility is set to Private into a Shared Image that can be shared.

- Share with another Account: You can share the Image with another Account.

- Refer to the How-to guides > Image document for how to create and use images.

Keypair

For more secure OS login, Virtual Server uses a Keypair instead of ID/Password entry. The main features are as follows.

- Keypair creation: Creates a user credential for connecting to the Virtual Server.

- Retrieve Public Key: You can import an existing public key by loading a file or entering it manually.

- Refer to the How-to guides > Keypair document for creating and using keypairs.
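Before retrieving (importing) an existing public key, it can help to confirm it is the key you expect by comparing its fingerprint. The following sketch computes the OpenSSH-style SHA256 fingerprint of a one-line public key (`<key-type> <base64-blob> [comment]`). The function name `sha256_fingerprint` is hypothetical; the code follows the OpenSSH public key format, not a Samsung Cloud Platform API.

```python
import base64
import hashlib
import struct


def sha256_fingerprint(public_key_line: str) -> str:
    """Return the OpenSSH-style SHA256 fingerprint of a one-line public key."""
    parts = public_key_line.strip().split()
    if len(parts) < 2:
        raise ValueError("expected '<key-type> <base64-blob> [comment]'")
    declared_type, encoded_blob = parts[0], parts[1]
    # validate=True rejects non-base64 characters (binascii.Error is a ValueError subclass).
    blob = base64.b64decode(encoded_blob, validate=True)

    # The blob starts with a 4-byte big-endian length followed by the key type string.
    if len(blob) < 4:
        raise ValueError("key blob is too short")
    (type_length,) = struct.unpack(">I", blob[:4])
    embedded_type = blob[4:4 + type_length].decode("ascii", errors="replace")
    if embedded_type != declared_type:
        raise ValueError(f"key type mismatch: {declared_type} vs {embedded_type}")

    # OpenSSH shows the SHA-256 digest of the raw blob as base64 without '=' padding.
    digest = hashlib.sha256(blob).digest()
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")
```

The result can be compared with the output of `ssh-keygen -lf <public key file>` on the machine that holds the private key.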

Server Group

Through Server Group settings, you can place Virtual Servers, along with the Block Storage added when creating them, close together or distributed across racks and hosts. The main features are as follows.

- Server Group Creation: You can set Virtual Servers belonging to the same Server Group to Anti-Affinity (distributed placement), Affinity (proximate placement), or Partition (distributed placement of Virtual Servers and Block Storage).

- Refer to the How-to guides > Server Group document for how to create and use Server Groups.
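The three placement policies can be pictured with a simplified model. This is a hypothetical sketch, not how the Samsung Cloud Platform scheduler works: `place_servers` and the policy strings are illustrative names, Affinity is simplified to "same host", Anti-Affinity to "one host per server", and Partition to round-robin across partitions such as racks. Block Storage placement is not modeled.

```python
from itertools import cycle


def place_servers(servers: list[str], hosts: list[str], policy: str) -> dict[str, str]:
    """Map each server to a host (or partition) under a simplified placement policy."""
    if policy == "affinity":
        # Proximate placement: keep every server on the same host.
        return {server: hosts[0] for server in servers}
    if policy == "anti-affinity":
        # Distributed placement: no two servers share a host.
        if len(servers) > len(hosts):
            raise ValueError("anti-affinity needs at least one host per server")
        return dict(zip(servers, hosts))
    if policy == "partition":
        # Spread servers round-robin across partitions (for example, racks).
        return dict(zip(servers, cycle(hosts)))
    raise ValueError(f"unknown policy: {policy}")


print(place_servers(["vm1", "vm2", "vm3"], ["rack-a", "rack-b"], "partition"))
```

The trade-off it illustrates: Affinity favors low latency between servers, while Anti-Affinity and Partition limit how many servers a single host or rack failure can affect.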

Provided OS image versions

The OS images provided by Virtual Server are as follows.

| OS Image version | EoS Date |
|---|---|
| Alma Linux 8.10 | 2029-05-31 |
| Alma Linux 9.6 | 2025-11-17 |
| Oracle Linux 8.10 | 2029-07-31 |
| Oracle Linux 9.6 | 2025-11-25 |
| RHEL 8.10 | 2029-05-31 |
| RHEL 9.4 | 2026-04-30 |
| RHEL 9.6 | 2027-05-31 |
| Rocky Linux 8.10 | 2029-05-31 |
| Rocky Linux 9.6 | 2025-11-30 |
| Ubuntu 22.04 | 2027-06-30 |
| Ubuntu 24.04 | 2029-06-30 |
| Windows 2019 | 2029-01-09 |
| Windows 2022 | 2031-10-14 |
| Windows 2016 | 2027-01-12 |

- For Linux operating systems such as Alma Linux and Rocky Linux, only even-numbered minor versions are provided, except for the final release of a major version. This policy ensures the stability and consistency of the SCP system. We recommend checking the EoS (End of Support) and EOL (End of Life) dates of each operating system and, if necessary, applying new or additional individual packages to maintain a stable environment.
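To act on the recommendation to check EoS dates, the OS image table can be turned into a small lookup that lists which images reach EoS by a given date, for example when planning migrations. This is a minimal sketch; the dates are copied from the table above, and the function name `images_reaching_eos_by` is hypothetical.

```python
from datetime import date

# EoS dates copied from the OS image table in this section.
OS_IMAGE_EOS = {
    "Alma Linux 8.10": date(2029, 5, 31),
    "Alma Linux 9.6": date(2025, 11, 17),
    "Oracle Linux 8.10": date(2029, 7, 31),
    "Oracle Linux 9.6": date(2025, 11, 25),
    "RHEL 8.10": date(2029, 5, 31),
    "RHEL 9.4": date(2026, 4, 30),
    "RHEL 9.6": date(2027, 5, 31),
    "Rocky Linux 8.10": date(2029, 5, 31),
    "Rocky Linux 9.6": date(2025, 11, 30),
    "Ubuntu 22.04": date(2027, 6, 30),
    "Ubuntu 24.04": date(2029, 6, 30),
    "Windows 2019": date(2029, 1, 9),
    "Windows 2022": date(2031, 10, 14),
    "Windows 2016": date(2027, 1, 12),
}


def images_reaching_eos_by(as_of: date) -> list[str]:
    """Return image versions whose EoS date is on or before `as_of`, earliest first."""
    reached = [(eos, name) for name, eos in OS_IMAGE_EOS.items() if eos <= as_of]
    return [name for _eos, name in sorted(reached)]


print(images_reaching_eos_by(date(2025, 12, 1)))
```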

Server type

The server types supported by Virtual Server are as follows. For detailed information about server types, see Virtual Server Server Types.

Standard s1v2m4

| Category | Example | Detailed description |
|---|---|---|
| Server type | Standard | Server type classification |
| Server specifications | s1 | Server type classification and generation |
| Server specifications | v2 | Number of vCores (2 vCores) |
| Server specifications | m4 | Memory capacity (4 GB) |
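The naming convention in the table above can be decoded mechanically: in `s1v2m4`, `s1` is the classification and generation, `v2` the number of vCores, and `m4` the memory in GB. The following sketch parses a server type name under the assumption that every name follows this `<letter><generation>v<vCores>m<memory>` pattern; `parse_server_type` is a hypothetical helper, not a Samsung Cloud Platform API.

```python
import re

# Assumed pattern, inferred from the example s1v2m4:
# <classification letter><generation>v<vCores>m<memory in GB>
SERVER_TYPE_PATTERN = re.compile(
    r"(?P<family>[a-z])(?P<generation>\d+)v(?P<vcores>\d+)m(?P<memory_gb>\d+)"
)


def parse_server_type(name: str) -> dict:
    """Split a server type name such as 's1v2m4' into its components."""
    match = SERVER_TYPE_PATTERN.fullmatch(name)
    if match is None:
        raise ValueError(f"unrecognized server type: {name}")
    return {
        "family": match["family"],
        "generation": int(match["generation"]),
        "vcores": int(match["vcores"]),
        "memory_gb": int(match["memory_gb"]),
    }


print(parse_server_type("s1v2m4"))
```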

Constraints

- When creating a Virtual Server with Rocky Linux or Oracle Linux, additional configuration is required for time synchronization (NTP: Network Time Protocol). For other images, time synchronization is set automatically and no separate configuration is needed. For more details, refer to Linux NTP Setup.

- If you created a RHEL or Windows Server Virtual Server before August 2025, you need to modify the RHEL Repository and WKMS (Windows Key Management Service) settings. For more details, see RHEL Repo and WKMS Configuration.

Preliminary Service

The following services must be configured before creating this service. Refer to the guide for each service and prepare them in advance.

| Service Category | Service | Detailed description |
|---|---|---|
| Networking | VPC | A service that provides an isolated virtual network in a cloud environment |
| Networking | Security Group | Virtual firewall that controls server traffic |

2.1.1.1 - Server Type

Virtual Server server type

Virtual Server provides server types suited to different uses. Server types consist of various combinations of CPU, Memory, and Network Bandwidth. The host server used for a Virtual Server is determined by the server type selected when creating the Virtual Server. Choose a server type based on the specifications of the application you plan to run on the Virtual Server.
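Choosing a server type from application requirements amounts to finding the smallest type that meets the minimum vCore and memory needs. The following sketch uses a subset of s1 types copied from the table below; `smallest_fit` is a hypothetical helper, and "smallest" is assumed to mean fewest vCores first, then least memory, since prices are not modeled here.

```python
from typing import Optional

# A subset of s1 server types from the s1 table: (name, vCores, memory in GB).
S1_TYPES = [
    ("s1v1m2", 1, 2),
    ("s1v2m4", 2, 4),
    ("s1v2m8", 2, 8),
    ("s1v4m8", 4, 8),
    ("s1v4m16", 4, 16),
    ("s1v8m32", 8, 32),
    ("s1v8m64", 8, 64),
]


def smallest_fit(min_vcores: int, min_memory_gb: int, catalog=S1_TYPES) -> Optional[str]:
    """Return the smallest server type meeting both minimums, or None if none fits."""
    candidates = [t for t in catalog if t[1] >= min_vcores and t[2] >= min_memory_gb]
    if not candidates:
        return None
    # Assumption: rank by vCores first, then memory, as a stand-in for cost.
    return min(candidates, key=lambda t: (t[1], t[2]))[0]


print(smallest_fit(3, 4))
```

An application needing 3 vCores is rounded up to a 4-vCore type, because vCore counts come in the fixed steps listed in the table.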

The server types supported by Virtual Server are as follows.

Standard s1v2m4

| Category | Example | Detailed description |
|---|---|---|
| Server type | Standard | Server type classification |
| Server specifications | s1 | Server type classification and generation |
| Server specifications | v2 | Number of vCores (2 vCores) |
| Server specifications | m4 | Memory capacity (4 GB) |

s1 server type

The s1 server type of Virtual Server is offered with standard specifications (vCPU, Memory) and is suitable for various applications.

- First generation of Samsung Cloud Platform v2: Intel 3rd generation (Ice Lake) Xeon Gold 6342 Processor with up to 3.3 GHz

- Supports up to 16 vCPUs and 256 GB of memory

- Maximum networking speed of 12.5 Gbps

| Category | Server type | vCPU | Memory | Network Bandwidth |
|---|---|---|---|---|
| Standard | s1v1m2 | 1 vCore | 2 GB | Up to 10 Gbps |
| Standard | s1v2m4 | 2 vCore | 4 GB | Up to 10 Gbps |
| Standard | s1v2m8 | 2 vCore | 8 GB | Up to 10 Gbps |
| Standard | s1v2m16 | 2 vCore | 16 GB | Up to 10 Gbps |
| Standard | s1v2m24 | 2 vCore | 24 GB | Up to 10 Gbps |
| Standard | s1v2m32 | 2 vCore | 32 GB | Up to 10 Gbps |
| Standard | s1v4m8 | 4 vCore | 8 GB | Up to 10 Gbps |
| Standard | s1v4m16 | 4 vCore | 16 GB | Up to 10 Gbps |
| Standard | s1v4m32 | 4 vCore | 32 GB | Up to 10 Gbps |
| Standard | s1v4m48 | 4 vCore | 48 GB | Up to 10 Gbps |
| Standard | s1v4m64 | 4 vCore | 64 GB | Up to 10 Gbps |
| Standard | s1v6m12 | 6 vCore | 12 GB | Up to 10 Gbps |
| Standard | s1v6m24 | 6 vCore | 24 GB | Up to 10 Gbps |
| Standard | s1v6m48 | 6 vCore | 48 GB | Up to 10 Gbps |
| Standard | s1v6m72 | 6 vCore | 72 GB | Up to 10 Gbps |
| Standard | s1v6m96 | 6 vCore | 96 GB | Up to 10 Gbps |
| Standard | s1v8m16 | 8 vCore | 16 GB | Up to 10 Gbps |
| Standard | s1v8m32 | 8 vCore | 32 GB | Up to 10 Gbps |
| Standard | s1v8m64 | 8 vCore | 64 GB | Up to 10 Gbps |
| Standard | s1v8m96 | 8 vCore | 96 GB | Up to 10 Gbps |
| Standard | s1v8m128 | 8 vCore | 128 GB | Up to 10 Gbps |
| Standard | s1v10m20 | 10 vCore | 20 GB | Up to 10 Gbps |
| Standard | s1v10m40 | 10 vCore | 40 GB | Up to 10 Gbps |
| Standard | s1v10m80 | 10 vCore | 80 GB | Up to 10 Gbps |
| Standard | s1v10m120 | 10 vCore | 120 GB | Up to 10 Gbps |
| Standard | s1v10m160 | 10 vCore | 160 GB | Up to 10 Gbps |