Networking Design

Understanding Traffic Requirements

Designing a cloud-based information system for performance efficiency starts with identifying where end users are located and selecting the region in which the system will be built.

This is because the geographic distance between end users and the data center directly affects the latency of their requests.
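To make the distance effect concrete, the sketch below computes a rough lower bound on round-trip time from propagation delay alone. It assumes signals travel through optical fiber at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum) along a straight path. Real routes are longer, and each network hop adds processing and queuing delay, so measured latency is always higher. The function name and example distances are illustrative.

```python
# Assumed signal speed in optical fiber (about 2/3 of c), in km per second.
FIBER_SPEED_KM_PER_S = 200_000

def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time (ms) from propagation delay only.

    Ignores routing detours, per-hop processing, queuing, and server time,
    so real latency will be higher.
    """
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000

for km in (50, 1_000, 9_000):
    print(f"{km:>6} km -> at least {min_rtt_ms(km):.1f} ms RTT")
```

Under these assumptions, every 1,000 km between user and data center adds at least 10 ms of round-trip time before any processing happens.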

When choosing a data center region, consider the following factors.

Legal regulation

You must consider the legal regulations governing the geographic location where end users' personal and sensitive information is stored.

For example, in Korea, public institutions may be required to use a public cloud service that holds CSAP (Cloud Security Assurance Program) certification.

Latency

The time it takes for data to be delivered from the source to the end user must be minimized.

Identify where users most frequently access the services, and build the system in a data center geographically close to those users.

When providing global services, evaluate whether to deploy the system separately in each country or whether a Global CDN can keep latency low enough.
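The two criteria above can be combined into a simple selection procedure: first discard regions that fail the regulatory requirements, then choose the remaining region with the lowest request-weighted latency. This sketch assumes you already have per-region latency measurements for each user location and a compliance flag per region; the region names, user locations, latency figures, and the `compliant` field are hypothetical placeholders, not data from any real provider.

```python
from dataclasses import dataclass

@dataclass
class Region:
    name: str                     # hypothetical region identifier
    compliant: bool               # hypothetical flag: meets data-residency/certification rules
    latency_ms: dict[str, float]  # measured latency from each user location

def pick_region(regions: list[Region], users: dict[str, int]) -> Region:
    """Return the compliant region with the lowest request-weighted mean latency.

    `users` maps a user location to its request volume (used as the weight).
    """
    # Step 1: legal regulation acts as a hard filter.
    candidates = [r for r in regions if r.compliant]
    if not candidates:
        raise ValueError("no region satisfies the regulatory requirements")
    total = sum(users.values())

    # Step 2: among compliant regions, minimize average latency per request.
    def weighted_latency(r: Region) -> float:
        return sum(r.latency_ms[loc] * n for loc, n in users.items()) / total

    return min(candidates, key=weighted_latency)

regions = [
    Region("region-a", True, {"seoul": 5, "tokyo": 35, "singapore": 75}),
    Region("region-b", True, {"seoul": 35, "tokyo": 5, "singapore": 70}),
    Region("region-c", False, {"seoul": 70, "tokyo": 70, "singapore": 3}),
]
users = {"seoul": 8_000, "tokyo": 1_500, "singapore": 500}
print(pick_region(regions, users).name)
```

Treating regulation as a filter rather than a weighted score reflects that a non-compliant region is not an option at any latency.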

Evaluate the network requirements and determine the appropriate services and configurations for the workload.

Identify the data transfer volume and network request frequency that the information system requires.

Understand the bandwidth requirements. Analyze the workload, calculate network traffic from the number of service requests per unit time and the size of each request, and provision bandwidth that can accommodate that traffic. Traffic and bandwidth are generally expressed in bps (bits per second).
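The calculation described above can be sketched as follows: multiply the request rate by the request size, convert bytes to bits, and add headroom for bursts. The 30% headroom factor and the sample workload figures are assumptions chosen for illustration; real values should come from measured peak traffic.

```python
def required_bandwidth_bps(requests_per_s: float, avg_size_bytes: float,
                           headroom: float = 0.3) -> float:
    """Estimate the bandwidth (bits per second) needed for a workload.

    traffic = requests/s * bytes/request * 8 bits/byte,
    then scaled up by `headroom` (an assumed safety margin for peaks).
    """
    traffic_bps = requests_per_s * avg_size_bytes * 8
    return traffic_bps * (1 + headroom)

# Hypothetical workload: 500 requests/s, 200 KB (200,000 bytes) per request.
bw = required_bandwidth_bps(500, 200_000)
print(f"{bw / 1e6:.0f} Mbps")
```

Without headroom this workload generates 800 Mbps of traffic; with the assumed 30% margin it needs about 1,040 Mbps, so a 1 Gbps link would be undersized.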

Establish a strategy to minimize network latency. When web content is delivered over the public internet, a Global CDN can reduce latency. If a private network connection is required, you can configure one through VPN, Direct Connect, Transit Gateway, or similar services, and choose a VPN or Direct Connect bandwidth that meets the application's transfer-speed requirements.
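For a private connection, one way to choose a VPN or Direct Connect bandwidth is to work backward from how much data must move and how quickly. This sketch computes the minimum rate needed to transfer a given volume within a time window and selects the smallest tier that covers it. The tier list is hypothetical (check your provider's actual options), and the `efficiency` factor is an assumed allowance for protocol overhead, since usable throughput is below the nominal rate.

```python
# Hypothetical bandwidth tiers in bits per second (check your provider's offerings).
TIERS_BPS = [50e6, 100e6, 500e6, 1e9, 10e9]

def pick_tier(data_bytes: float, window_s: float, efficiency: float = 0.8) -> float:
    """Return the smallest tier that moves `data_bytes` within `window_s` seconds.

    `efficiency` is an assumed fraction of nominal bandwidth that remains
    usable after protocol overhead.
    """
    needed_bps = data_bytes * 8 / window_s / efficiency
    for tier in TIERS_BPS:
        if tier >= needed_bps:
            return tier
    raise ValueError(f"no tier covers {needed_bps / 1e6:.0f} Mbps")

# Example: replicate 500 GB nightly within a 2-hour window.
tier = pick_tier(500e9, 2 * 3600)
print(f"{tier / 1e6:.0f} Mbps")
```

Here 500 GB in two hours requires roughly 556 Mbps of raw throughput, or about 694 Mbps after the assumed overhead, so the 500 Mbps tier is too small and the 1 Gbps tier is selected.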

Understand the throughput requirements. Based on the throughput the workload requires, decide whether a single VM is sufficient or multiple VMs are needed. A Load Balancer distributes load across multiple VMs, and GSLB (Global Server Load Balancing) can route requests across multiple Regions (IP addresses).
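Whether one VM is enough can be estimated by dividing the required peak throughput by what a single VM sustains. The sketch below does that and then shows round-robin, one common load-balancing policy, spreading requests across the resulting pool. The per-VM capacity, the 20% headroom, and the VM names are assumptions; real load balancers offer other algorithms as well, and per-VM capacity should come from load testing.

```python
import math
from itertools import cycle

def vms_needed(peak_rps: float, rps_per_vm: float, headroom: float = 0.2) -> int:
    """Number of VMs needed to serve `peak_rps` with an assumed safety margin."""
    return max(1, math.ceil(peak_rps * (1 + headroom) / rps_per_vm))

def round_robin(vms: list[str], n_requests: int) -> dict[str, int]:
    """Count how many of `n_requests` each VM receives under round-robin."""
    counts = {vm: 0 for vm in vms}
    targets = cycle(vms)  # hand out VMs in a fixed repeating order
    for _ in range(n_requests):
        counts[next(targets)] += 1
    return counts

# Hypothetical: 2,500 requests/s at peak, each VM sustains about 800 requests/s.
n = vms_needed(2_500, 800)
pool = [f"vm-{i}" for i in range(n)]
print(n, round_robin(pool, 1_000))
```

With these figures, 3,000 requests/s (peak plus headroom) divided by 800 requests/s per VM rounds up to four VMs, each receiving an equal share under round-robin.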

Establishing Measures to Reduce Latency

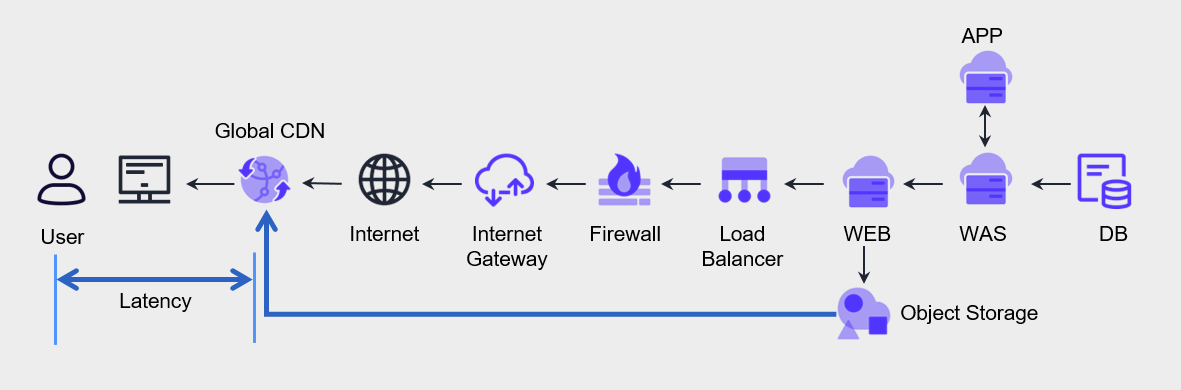

When a user requests a service from a web application and receives a response, the request passes through several components, each of which adds processing time.

Earlier sections examined performance improvements for computing and data storage.

As the figure above shows, the user and the web application are connected over the Internet. Latency on this segment depends on geographic distance or, more precisely, on the number of network hops.

Generally, the farther the geographic distance, the longer the time required for data transmission.

A Global CDN service is used to reduce this latency.

A Global CDN uses numerous edge servers distributed across a global network to deliver static content stored on web servers or in object storage to users faster and more securely.
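CDNs typically steer each user to a nearby edge through DNS or anycast routing. As a simplified illustration, the sketch below picks the edge location with the smallest great-circle (haversine) distance to the user. The edge list and coordinates are hypothetical, and production CDNs also weigh network measurements and edge load, not geography alone.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two (lat, lon) points in kilometers."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

# Hypothetical edge locations: name -> (latitude, longitude).
EDGES = {
    "edge-seoul": (37.57, 126.98),
    "edge-tokyo": (35.68, 139.69),
    "edge-frankfurt": (50.11, 8.68),
}

def nearest_edge(user_lat: float, user_lon: float) -> str:
    """Name of the edge location geographically closest to the user."""
    return min(EDGES, key=lambda e: haversine_km(user_lat, user_lon, *EDGES[e]))

print(nearest_edge(35.18, 129.08))  # user in Busan
```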

When traffic surges, the CDN also absorbs requests that would otherwise reach the origin server, preventing it from being overloaded, and end users download content from the nearest edge server for fast, stable web service.
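The origin-offload effect comes from caching: an edge answers repeat requests for the same object from its local copy and contacts the origin only on a miss or after the cached copy expires. The sketch below models one edge with a time-to-live (TTL) cache and counts origin fetches. The `EdgeCache` class, the 60-second TTL, and the request pattern are hypothetical; real CDNs derive TTLs from Cache-Control headers and their own configuration.

```python
from typing import Callable

class EdgeCache:
    """Minimal TTL cache standing in for one CDN edge server (illustrative)."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes], ttl_s: float):
        self.fetch_from_origin = fetch_from_origin
        self.ttl_s = ttl_s
        self.store: dict[str, tuple[bytes, float]] = {}  # path -> (body, expiry)
        self.origin_fetches = 0

    def get(self, path: str, now: float) -> bytes:
        entry = self.store.get(path)
        if entry is not None and now < entry[1]:
            return entry[0]  # cache hit: served from the edge
        # Miss or expired copy: fetch from the origin and refresh the cache.
        self.origin_fetches += 1
        body = self.fetch_from_origin(path)
        self.store[path] = (body, now + self.ttl_s)
        return body

def origin(path: str) -> bytes:
    return f"content of {path}".encode()

edge = EdgeCache(origin, ttl_s=60)
# 1,000 requests for the same image spread over 100 seconds (one every 0.1 s).
for i in range(1_000):
    edge.get("/img/logo.png", now=i * 0.1)
print(edge.origin_fetches)
```

In this model, 1,000 user requests reach the origin only twice (the first request and the first one after the copy expires), which is the load reduction the paragraph above describes.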