Building MLOps with AI/ML

Overview

Machine Learning (ML) is expanding beyond analysis and model development into operations. Although more teams are building ML models and applying them to services, in practice more time goes into data collection, analysis, and model tuning than into model development itself. A production-level ML system therefore needs to automate and manage complex ML workflows across the MLOps lifecycle, from model development and training through model tuning, building, deployment, and management.

Kubeflow is a Kubernetes-based open-source Machine Learning platform that supports these ML workflows. It was released as an open-source project in 2018 with the participation of Google, Cisco, IBM, and Red Hat, and Version 1.0 was released in March 2020.

Kubeflow covers each stage of the ML workflow by combining multiple open-source solutions (such as Istio, Knative, and Argo) on top of Kubernetes in an extensible way.

Samsung Cloud Platform offers open-source Kubeflow as-is through the Kubeflow Mini service, and offers the AI&MLOps Platform service, which extends Kubeflow with various add-on functions.

The AI&MLOps Platform provides various additional functions that are not available in open-source solutions, such as distributed learning job execution and monitoring, inference service management and analysis, job queue management, job scheduler, GPU fraction, and GPU resource monitoring.

This document explains how to configure and utilize the AI&MLOps Platform, which supports MLOps, on the Samsung Cloud Platform.

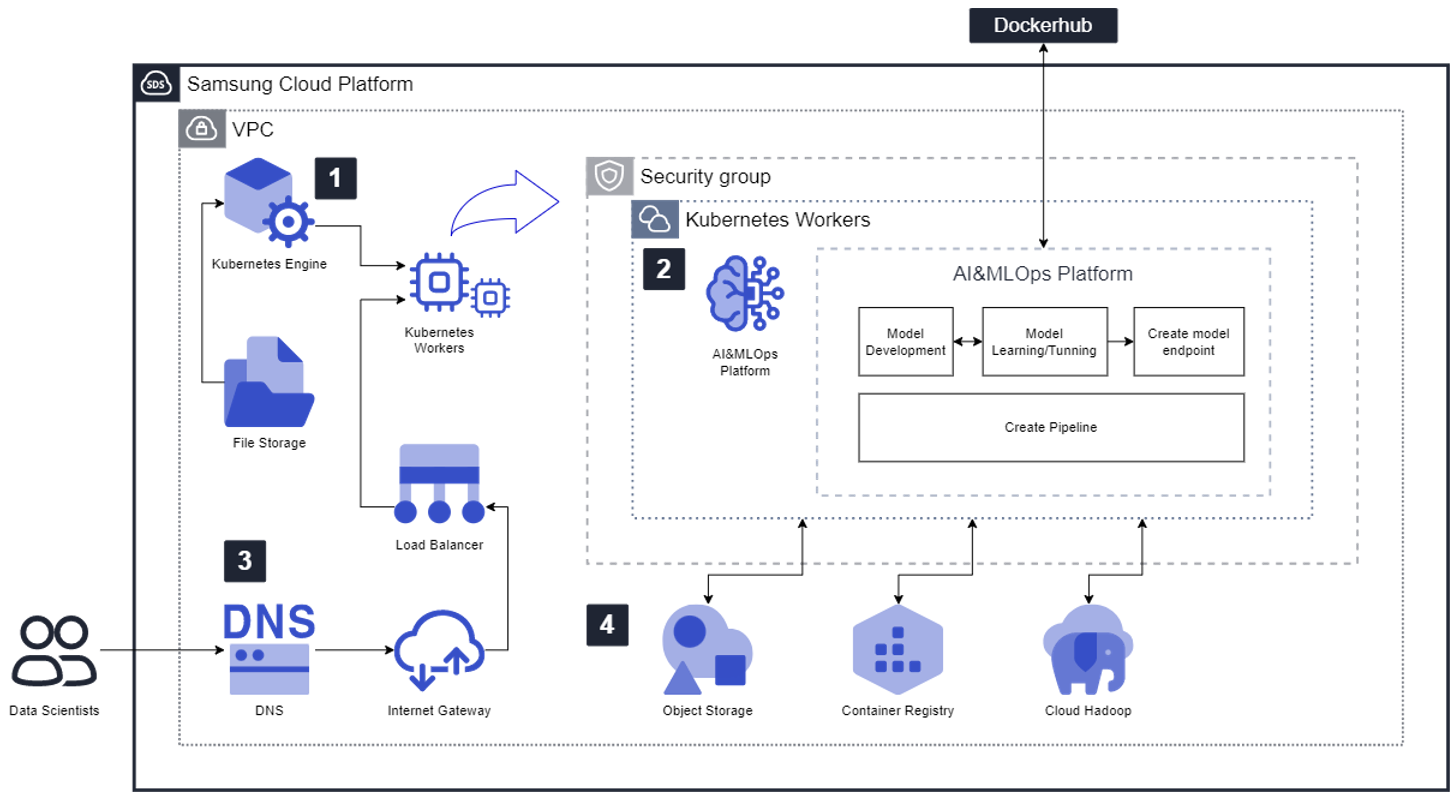

Architecture Diagram

Installing the AI&MLOps Platform service requires a Kubernetes Cluster, which the user creates with the Kubernetes Engine service. The Persistent Volumes (PVs) of the Kubernetes Cluster are created from the File Storage service.

The user selects the Kubernetes Cluster in the VPC and deploys the AI&MLOps Platform onto it. Once installation is complete, the user can open the AI&MLOps Platform Dashboard URL and use the ML workflow functions that the platform provides.
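As an illustration of how a workload might request storage backed by File Storage, the sketch below builds a standard Kubernetes PersistentVolumeClaim manifest as a Python dictionary. The claim name, namespace, size, and the `ReadWriteMany` access mode are assumptions chosen for the example (shared file storage is usually mounted by several pods at once); the storage class that maps to File Storage is cluster-specific and is therefore left as an optional, hypothetical parameter.

```python
import json


def shared_pvc_manifest(name, namespace, size="10Gi", storage_class=None):
    """Build a PersistentVolumeClaim manifest for shared file storage.

    All argument values are illustrative. `storage_class` is hypothetical:
    pass the class name that your cluster maps to File Storage, or leave it
    as None to use the cluster's default storage class.
    """
    spec = {
        # Assumption: the volume is shared by several pods (notebooks, jobs).
        "accessModes": ["ReadWriteMany"],
        "resources": {"requests": {"storage": size}},
    }
    if storage_class is not None:
        spec["storageClassName"] = storage_class
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": spec,
    }


# kubectl accepts JSON as well as YAML, so the output can be saved to a file
# and applied with `kubectl apply -f <file>`.
manifest = shared_pvc_manifest("notebook-data", "example-namespace")
print(json.dumps(manifest, indent=2))
```

Building the manifest in code rather than by hand makes it easy to generate one claim per project or user with consistent settings.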

- Object Storage is used to store analysis data sets and model files, and can be linked using the S3 SDK in Jupyter Notebook.

- A Container Registry can be configured and used, or an external DockerHub can be used.

- Cloud Hadoop can be used for data preprocessing and large-scale data distributed processing.
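The Object Storage link mentioned above can be sketched from a Jupyter Notebook as follows. This is a minimal example that assumes the boto3 package is installed in the notebook image and that Object Storage exposes an S3-compatible endpoint; the endpoint URL, bucket, object key, credentials, and default region shown here are placeholders, not values from this guide.

```python
def s3_client_kwargs(endpoint_url, access_key, secret_key, region="us-east-1"):
    """Collect the keyword arguments for boto3.client("s3", ...).

    The region default is an assumption; many S3-compatible services ignore
    it, but the SDK expects some value to be set.
    """
    return {
        "endpoint_url": endpoint_url,
        "aws_access_key_id": access_key,
        "aws_secret_access_key": secret_key,
        "region_name": region,
    }


def download_dataset(bucket, key, local_path, **client_kwargs):
    """Download one object (data set or model file) to the notebook's disk."""
    import boto3  # imported lazily so the helper above works without boto3

    s3 = boto3.client("s3", **client_kwargs)
    s3.download_file(bucket, key, local_path)
    return local_path


# Example call from a notebook cell (all values are placeholders):
#
#   kwargs = s3_client_kwargs(
#       "https://object-storage.example.invalid", "ACCESS_KEY", "SECRET_KEY"
#   )
#   download_dataset("my-bucket", "datasets/train.csv", "train.csv", **kwargs)
```

Reading the access key and secret key from environment variables or a Kubernetes Secret, rather than typing them into a notebook cell, keeps credentials out of saved notebooks.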

※ When using services outside the user’s VPC, such as DockerHub or GitHub, the corresponding rules must be added to the Security Group and Firewall.

To access the AI&MLOps Platform Dashboard by domain name, create a domain in the DNS service and connect that domain to the Kubernetes worker nodes through the Load Balancer.

Linking Object Storage, Container Registry, or Cloud Hadoop with the AI&MLOps Platform service requires creating each service separately. Refer to each service’s usage guide for how to use it together with the AI&MLOps Platform service.

Use Cases

Building a Production Defect Judgment System based on AI&MLOps Platform

Because it runs on Kubernetes, the AI&MLOps Platform offers reusability, extensibility, and stability.

The AI&MLOps Platform provides a Jupyter Notebook-based model development environment together with hyperparameter tuning and result verification functions, so the performance of multiple models can be compared.

It also supports GPU-based distributed learning for ML frameworks such as TensorFlow and PyTorch, which can shorten model training time. When a model is deployed, the platform provides an Endpoint API that applications can call, and the inference service can be scaled out as needed.
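To show how an application might call a deployed model's Endpoint API, the sketch below builds an HTTP prediction request using only the Python standard library. It assumes the endpoint follows the KServe V1 inference protocol (`POST /v1/models/<name>:predict` with an `instances` payload), which is common for Kubeflow-based serving but is not confirmed by this guide; the base URL, model name, and bearer token are hypothetical.

```python
import json
import urllib.request


def build_predict_request(base_url, model_name, instances, token=None):
    """Build a POST request for a V1-style predict endpoint (assumed format)."""
    url = f"{base_url.rstrip('/')}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if token:
        # Assumption: the endpoint accepts a bearer token for authentication.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def predict(request, timeout=10):
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example (placeholder host and model name; not executed here):
#
#   req = build_predict_request(
#       "https://mlops.example.invalid", "defect-classifier", [[0.1, 0.2, 0.3]]
#   )
#   result = predict(req)
```

Separating request construction from sending makes the payload easy to inspect and unit-test before the endpoint is reachable from the application's network.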

With GPU-based high-performance infrastructure (GPU Direct RDMA), distributed learning performance can improve by 1.5 times on average.

Developing an MLOps-based Model Development and Deployment System

Through the AI&MLOps Platform pipeline, reusable workflows can be created to configure the MLOps environment from model development to deployment, and models can be retrained with additional data using the same pipeline.
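The idea of retraining with the same pipeline can be illustrated with a small plain-Python sketch. It deliberately does not use the Kubeflow Pipelines SDK: each function stands in for a step that would run as a container in a real pipeline, and the toy threshold "model" and the sample data are invented for the example.

```python
def preprocess(rows):
    """Drop rows whose feature value is missing."""
    return [(x, y) for x, y in rows if x is not None]


def train(samples):
    """Fit a toy threshold classifier: the midpoint between the class means."""
    pos = [x for x, y in samples if y == 1]
    neg = [x for x, y in samples if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2


def evaluate(threshold, samples):
    """Return the fraction of samples classified correctly."""
    correct = sum((x > threshold) == bool(y) for x, y in samples)
    return correct / len(samples)


def run_pipeline(rows):
    """Run the same preprocess -> train -> evaluate steps on any data set."""
    samples = preprocess(rows)
    threshold = train(samples)
    return {
        "threshold": threshold,
        "accuracy": evaluate(threshold, samples),
        "n_samples": len(samples),
    }


# Initial training run (feature, label); one row has a missing feature.
initial = [(1.0, 0), (2.0, 0), (8.0, 1), (9.0, 1), (None, 1)]
first_run = run_pipeline(initial)

# Retraining: the pipeline definition is unchanged, only the input grows.
additional = [(3.0, 0), (7.0, 1)]
second_run = run_pipeline(initial + additional)

print(first_run)
print(second_run)
```

Because the steps are defined once and parameterized only by their input, retraining becomes a new run of an existing definition rather than a new piece of code.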

Prerequisites

Installing the AI&MLOps Platform requires a Kubernetes Cluster that meets at least the minimum specifications. When creating the Kubernetes Cluster, the Load Balancer option must be selected.

Restrictions

Models can currently be deployed only to the Kubernetes Cluster on which the AI&MLOps Platform is configured. A single cluster serves both model development and inference services; the two environments are not separated.

Considerations

Object Storage can be used to store data and models. Container images can be stored in Container Registry or in an external DockerHub. In addition, open-source pipeline configuration tools such as Elyra can be deployed to extend the functionality of the AI&MLOps Platform.

Related Services

This is a list of Samsung Cloud Platform services related to the functions or configurations described in this guide. Please refer to these services when selecting and designing services.

| Service Group | Service | Detailed Description |
|---|---|---|
| Container | Kubernetes Engine | Kubernetes container orchestration service |
| Storage | File Storage | Storage that allows multiple client servers to share files through network connections |
| Storage | Object Storage | Object storage that is convenient for data storage and search |
| Networking | Load Balancer | Service that automatically distributes server traffic loads |
| Networking | DNS | Service that allows easy domain setup and management |
| Container | Container Registry | Service that allows easy storage, management, and sharing of container images |
| Cloud Hadoop | Cloud Hadoop | Service that provides Hadoop clusters for easy and fast big data processing and analysis |